As enterprises migrate large applications and large on-premises backend databases to AWS, you likely want to place the databases closer to your customers for low latency and better application performance. This isn’t always easy because your customers may be located all over and on-premises data centers are usually at a handful of locations. This means that data in the on-premises databases need to be split across various AWS Regions while maintaining data integrity, which is critical for smooth running of the application and the end-user experience.

The way we can split data is based on the amount of data that we need to process, the downtime, and the cutover window duration. In this series, we discuss two approaches that you can use to split data from one Oracle source database to two Aurora PostgreSQL instances in different Regions even if some tables don’t have a particular column on which the data can be split. The approaches use AWS Database Migration Service (AWS DMS) to successfully split the data as it gets migrated to AWS without compromising data integrity.

Part 1: Use AWS DMS to split the data into different Regions

We can use AWS DMS for the parent tables to split the data in such a way that data is migrated closer to the customer, for example, a West coast-based customer in the US has their data migrated to a us-west Region. The corresponding child tables and other tables are copied entirely into the particular Region. When the migration is complete and application is up and running, a cleanup exercise is performed during a low-activity window to clean up unwanted data from the child table and other tables. This approach is described in this post.

Part 2: Use Oracle materialized views and AWS DMS to split the data into different Regions

With our second approach, we use AWS DMS to split the data from the parent tables as we did in the first approach. For the child tables, we create materialized views on the source database, which we use as source tables for AWS DMS, which are mapped to the child tables on the Aurora target database. This way, the corresponding child table’s data is migrated to the corresponding Region without compromising data integrity and without duplicating the data. This approach is described in part 2.

As in any real-life scenario, each approach has advantages and disadvantages. We recommend working closely with your application team and cloud architect (for large enterprises, this can be cloud Center of Excellence team) to decide on either of the approaches.

Comparing approaches

The following table summarizes the advantages and disadvantages of each of the approach, which can help you make a quicker decision when choosing an approach that suits your data migration needs.

Part 1: Use AWS DMS

Part 2: Use AWS DMS and materialized views

No additional setup needed on the Oracle source

Materialized views need to be built and tested on the source database

No performance impact on the Oracle source because all the work of splitting and cleaning data happens on the Aurora target database

There can be a potential performance impact on the existing Oracle source database because the materialized view gets refreshed every time data gets committed

Data is split and cleaned on the target Aurora database, so a larger amount of data needs to be processed and transferred in various Regions, which increases the AWS cost

Data is pre-split on the Oracle source database, so no additional AWS cost is incurred for transferring and storing excess data

No additional storage is necessary to accommodate for Oracle materialized views

Additional storage is necessary on the Oracle source to create and maintain materialized views

Additional cleanup steps need to be developed and tested on the Aurora target

No additional cleanup is necessary on the Aurora target

Post-migration cleanup on the Aurora target database needs to be performed during a low-activity window

No cleanup necessary

Higher cost for Aurora is incurred for additional storage of data until cleanup is completed

No additional data is stored, so no cleanup is necessary and no additional costs are incurred

For more information about best practices, see Best practices for AWS Database Migration Service.

Solution overview

This solution assumes you have an on-premises Oracle OLTP database, which we migrate over to Amazon Aurora for PostgreSQL.

The Oracle database for this post consists of a large number of tables with parent and child relationships. You can decide the customer location using certain logic and criteria on the parent tables, but you can’t apply similar logic on all the child tables because the necessary columns pertaining to a customer’s Regional tenancy aren’t present in the child tables. This creates a significant challenge to migrate all the data for a given customer to a particular Region.

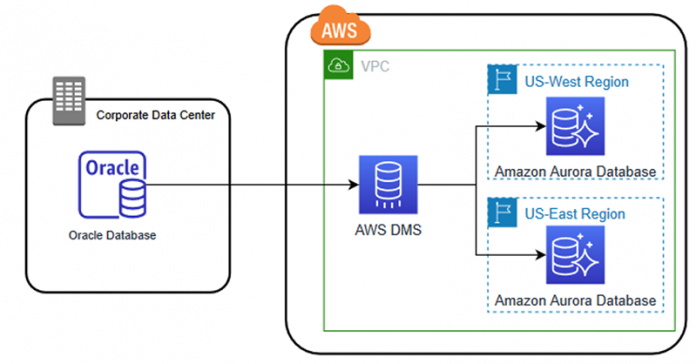

For our use case, we consider a large enterprise, with its data centers located on the East coast of the United States. The enterprise embarks on the journey of migrating its applications and databases to AWS, taking advantages of various Regions. The application team decides to separate out the data in us-east and us-west Regions based on customer location. We use AWS DMS to split the data across the Regions based on the logic provided by the application team. As the data gets split, maintaining the data integrity is crucial for the smooth operation of the application and also for the customer.

The following diagram represents the data flow from AWS DMS to the Aurora database in different Regions.

Example table design

In this post, we use the following table design to migrate data.

The column TENANT_ID in the table PARENTTABLE has the customer-related information that the application can map to individual customers and their locations and thereby decide if the data corresponding to a particular TENANT_ID goes into a particular Region.

The table CHILDTABLE doesn’t have the TENANT_ID column, so data migration to a particular Region needs to rely on the foreign key relation to the PARENTTABLE.

For this post, we assume that the tenant IDs that are under 12,000 are migrating to an Aurora instance in the us-east Region, and those greater than 12,000 are migrating to the us-west Region. We create the tables with the following code:

The following code is the DDL for the table for an Oracle database.

The following code is the DDL for the table for Amazon Aurora database.

Prerequisites

For this walkthrough, you should have the following prerequisites:

An AWS account.

An on-premises Oracle instance. If you want to follow along but an Oracle on-premises instance isn’t available, you can use an Amazon Relational Database Service (Amazon RDS) instance.

Aurora instances in different Regions (for this post, us-east-1 and us-east-2).

Knowledge of AWS DMS.

Knowledge of the database design and the relationships between tables that exist in the database.

An existing table structure in Oracle and an Aurora database. If the table structure doesn’t exist in Aurora, you can use the AWS Schema Conversion Tool (AWS SCT) to convert the schema and create the tables in an Aurora database.

A source Oracle database configured to run AWS DMS.

A configured AWS DMS replication instance.

Endpoints created in AWS DMS for both the source Oracle on-premises database and target Aurora instances.

The services involved in this solution incur costs. You’re responsible for the costs related to your use of any AWS services while running this reference deployment. Prices are subject to change. For more information, refer to the pricing calculator.

Approach: Use AWS DMS to split the data into different Regions

This approach uses AWS DMS to migrate the data from the Oracle database to multiple Aurora instances based on the tenant_id of a customer.

We split the data from the parent table into appropriate Regions based on the tenant_id column by using a condition in the AWS DMS task settings. However, the data in CHILDTABLE can’t be split using the condition because it doesn’t have a tenant_id column. To migrate CHILDTABLE, we create an AWS DMS task to migrate the whole table, ignoring the foreign key constraints. After the data is migrated, we clean up CHILDTABLE for all those entries that don’t have any referential values in the parent table.

Step 1: Create an AWS DMS task to migrate the parent and child tables

To create our AWS DMS task, complete the following steps:

Create a task with the migration type Migrate existing data and replicate ongoing changes.

For Stop task after full load completes, select Stop after applying cached changes.

This is needed so that the AWS DMS endpoint attributes and the enabling of foreign keys can be done after the initial full load to maintain consistency of data.

Based on the tenant_id value, you can send the data to the database you need. In this example, the tenant_id with values less than 12,000 are sent to the Aurora database in us-east and those with greater than 12,000 are sent to the Aurora database in us-west. Therefore, you need to create the endpoints, replication instance, and database migration tasks in both the Regions and change the condition based on the Region.

Configure the data split for the table PARENTTABLE for us-east for tenant_id values less than or equal to 12,000.

Add another table selection rule for the CHILDTABLE for transferring all the data to the Aurora databases.

For Migration task startup configuration, select Manually later.

You need to create a similar task in each Region that you want data to be split into.

Create a condition for the us-west Region for values greater than or equal to 12,001.

The condition doesn’t need to be just a number; it can also be a character or any value based on how tenancy is defined in the database.

Apply filters only to immutable columns, which aren’t updated after creation. If source filters are applied to mutable columns, which can be updated after creation, adverse behavior can result. For example, if the tenant_id column is updated in the source table, the values continue to be excluded or included even when the data is no longer the same. For more information about the limitations of AWS DMS tasks, see Using source filters.

Step 2: Change the parameter in the Aurora RDS database

Before you start the full load AWS DMS task, change the session_replication_role parameter in the Aurora RDS parameter groups to the replica. This parameter makes sure that the foreign keys are disabled and the data can be migrated without errors because only partial data is being migrated in the parent table.

When the full load is complete and is stopped after applying cached changes, this parameter is reverted back to the origin (shown in Step 6) so that Aurora database can have the foreign key constraints enabled for any data that is written to the database.

Step 3: Start the AWS DMS full load task

Start the AWS DMS task created in Step 1 and wait for it to complete the load for all the tables. You need to run this task in all the Regions (us-east and us-west in our example).

You can confirm the data was migrated via the task details on the AWS DMS console.

The task details include the table statistics, which show the amount of data migrated to the databases and their validation. For more information, see Task status.

After the initial data is migrated, the task stops after applying cached changes. This gives us the chance to modify the parameter on the database and resume the task so as to migrate the change data capture (CDC) changes.

Step 4: Data verification

Verify that the data is split into the Aurora databases across Regions based on tenancy for PARENTTABLE. Also, verify that CHILDTABLE has complete data without it being split.

The following screenshots show that PARENTTABLE_ID 1, 2, and 3 moved over to us-east and PARENTTABLE_ID 4, 5, and 6 moved to us-west. The complete data for CHILDTABLE is moved to both us-east and us-west Regions.

Step 5: Add extra connection attributes to endpoints

Set the extra connection attributes in the Aurora RDS endpoint as afterConnectScript=SET session_replication_role=’replica’. For more information, see Extra connection attributes when using PostgreSQL as a target for AWS DMS.

This ensures that the CDC data ignores the foreign key constraints.

Step 6: Change the parameter setting in the parameter groups

In Step 2, we set session_replication_role to replica to ignore the foreign constraints during the full migration task. However, when the full migration task is complete and stopped, CDC resumes to capture new data changes. We can change the parameter session_replication_role to origin in the parameter group for the Aurora RDS instance, which ensures that any new data inserted into the database that doesn’t come through the AWS DMS task enforces the foreign key constraints to maintain data integrity.

Step 7: Resume the AWS DMS task for migrating the changed data

After you make the parameter changes, you need to resume the task from the last point when it was stopped to capture any data changes to the tables.

Step 8: Verify data changes

Insert new data into the source tables to verify that the data is migrated to the Aurora databases after being split for PARENTTABLE and CHILDTABLE without any errors.

For this post, we enter the following sample data:

You can check the task status on the task details page.

You can also verity data in the target Aurora databases in both Regions to check if the data split happened successfully, as shown in the following screenshots.

Step 10: Delete data from CHILDTABLE

After the data is migrated and verified, you can check the data in CHILDTABLE for orphaned records and delete them. Because CHILDTABLE is migrated without being split during the AWS DMS task, this step is necessary to clean up the data from the table when the migration is complete.

For this post, we clean up using SQL:

Conclusion

When you’re migrating on-premises data to AWS and need to place this data close to your customers for low latency and better application performance, you may need to split data across various Regions. This post showed you how AWS DMS can be used to successfully split the data without compromising the data integrity by first migrating the data and later performing data cleanup.

In part 2, we describe the method of using Oracle Materialized Views and AWS DMS to split the data across various Regions without compromising the data integrity.

As always, AWS welcomes feedback. If you have any comments or questions on this post, please share them in the comments.

About the authors

Saumya Mula is a Database Consultant with the Professional Services team at Amazon Web Services. She works as a database migration consultant to provide Amazon customers with technical guidance to migrate their on-premises databases to AWS.

Mandar Paranjpe is Practice Manger, Worldwide Public Sector with the Professional Services team at Amazon Web Services. He helps customers on their digital and cloud transformation journeys, with a focus on big data and database migrations.

Read MoreAWS Database Blog