This is a guest post by Russell Waterson, Knowledge Graph Engineer at Data Lens Ltd.

Customers use knowledge graphs to consolidate and integrate information assets and make them more readily available.

Building knowledge graphs by getting data from disparate existing data sources can be expensive, time-consuming, and complex. Project planning, project management, engineering, maintenance and release cycles all contribute to the complexity and time to build a platform to populate your knowledge graph database.

The Data Lens team have been implementing Knowledge Graph solutions as a consultancy for 10 years, and have now released tooling to greatly reduce the time and effort required to build a Knowledge Graph.

Use Data Lens to make building a knowledge graph in Amazon Neptune faster and simpler, with no engineering, just configuration.

In this post, we set up all the services required to run the solution discussed in Build a knowledge graph in Amazon Neptune using Data Lens.

Solution overview

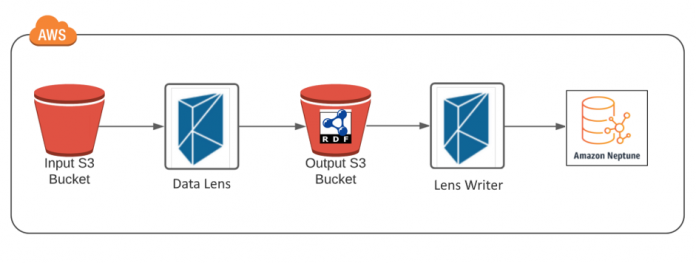

Here we use Data Lens on AWS Marketplace to export JSON content from an Amazon Simple Storage Service (Amazon S3) bucket, transform the JSON into RDF, and load the RDF data into Amazon Neptune.

The following diagram illustrates our architecture.

In this guide, we walk you through the following steps to set up your AWS services:

Configure your file management in Amazon S3, including creating an S3 bucket and configuring the management of the input, mapping, and output files.

Configure and call your Structured File Lens for the JSON data transformation.

Configure your Neptune instance.

Configure your Lens Writer for loading RDF data into Neptune.

Configure and query Neptune using Neptune Workbench with a Jupyter notebook.

Build the functional solution related to this AWS configuration

For a step-by-step guide to implementing the functional solution, visit this related post:

Build a knowledge graph in Amazon Neptune using Data Lens

Configure your file management in Amazon S3

Although you can store files locally on a mounted storage volume, full integration with Amazon S3 has been incorporated into the Lenses and Writer. This allows you to store all input, mapping, and output files in an S3 bucket of your choice, both with and without authentication.

If you don’t already have an available S3 bucket, you must create a new one on the Amazon S3 console. Your bucket and every other service in your stack must reside in the same AWS Region. After you create your bucket, we recommend adopting a folder structure similar to the following, because the Lenses and Writer are URL-based systems and correct file management helps you organize your different file types:

An input folder to store all your input source data. For this post, we store the JSON sample file explored previously in this folder, and name it inputfile.json.

A mapping folder to store your Turtle (.ttl) mapping file. For this post, the mapping file named mapping.ttl is stored in this directory. Make sure that this folder only contains the mapping file you wish to use for the RDF conversion.

An output folder where your transformed RDF output files are saved to.

A provenance output folder where your generated provenance files are saved to.
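As a minimal sketch of the layout above, the following Python builds the S3 URLs for the four folders. The bucket name example-bucket and the folder names input, mapping, output, and prov-output are assumptions chosen for illustration; only the file names inputfile.json and mapping.ttl come from this post.

```python
def s3_layout(bucket: str) -> dict:
    """Return one S3 directory URL per folder in the suggested layout."""
    # Folder names are illustrative assumptions, not names required by Data Lens.
    folders = ("input", "mapping", "output", "prov-output")
    return {name: f"s3://{bucket}/{name}/" for name in folders}


layout = s3_layout("example-bucket")  # hypothetical bucket name

# Full URLs of the two files used in this post.
input_file_url = layout["input"] + "inputfile.json"
mapping_file_url = layout["mapping"] + "mapping.ttl"

print(input_file_url)
print(layout["mapping"])
```

Keeping every directory URL in one place makes it easier to reuse the same values later, when the Lens and the Writer ask for them.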

Configure and call your Structured File Lens

When we process a JSON file, we use the Structured File Lens. It can also process XML and CSV files. To use the Lens, you must first subscribe via the product page on AWS Marketplace, then complete the following steps.

On the Launch page, you’re presented with information regarding the container image. To assist with easy deployment of the Lens, you’re provided with a preconfigured AWS CloudFormation template, allowing for a one-click deploy solution. This starts up an Amazon Elastic Container Service (Amazon ECS) cluster with all the required permissions and networking, with the Lens running within as a task.

To use this template, choose Quick Stack Creation – Structured File Lens Only.

On the Quick create stack page, enter your previously configured S3 paths for your mapping file and RDF output:

MappingsDirUrl – The directory where your mapping file is located. This is a directory and not a file name.

OutputDirURL – The S3 directory where your RDF output files are saved to.

ProvOutputDirURL – The S3 directory where your provenance files are saved to.

When entering your S3 directories, they must be in the format of s3://<bucket-name>/<directory>/. You can obtain this path on the Amazon S3 console by choosing Copy path.
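Before pasting the three values into the form, it can help to check that each one is a directory URL and not a file name. The sketch below is a simplified check written for this post, not part of Data Lens; the regular expression only approximates the S3 bucket naming rules, and the bucket and folder names are hypothetical.

```python
import re

# Simplified pattern: s3://<bucket-name>/<directory>/ with a required trailing slash.
# This approximates, but does not fully implement, the S3 bucket naming rules.
S3_DIR_PATTERN = re.compile(r"s3://[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]/(?:[^/]+/)+")


def is_s3_dir_url(url: str) -> bool:
    """Return True when url looks like s3://<bucket-name>/<directory>/."""
    return S3_DIR_PATTERN.fullmatch(url) is not None


# Hypothetical values for the three stack parameters named above.
params = {
    "MappingsDirUrl": "s3://example-bucket/mapping/",
    "OutputDirURL": "s3://example-bucket/output/",
    "ProvOutputDirURL": "s3://example-bucket/prov-output/",
}

# Names of any parameters whose value is not a directory URL.
invalid = [name for name, url in params.items() if not is_s3_dir_url(url)]
print(invalid)
```

A value such as s3://example-bucket/mapping/mapping.ttl is rejected by this check, because MappingsDirUrl expects a directory and not a file name.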

Choose Create stack.

The CloudFormation template starts to spin up the cluster and you’re redirected to the CloudFormation stack’s Events page, where you can observe its status.

As discussed earlier, you can trigger the Lens via the built-in REST API endpoint.

To retrieve the endpoint address of the Lens within AWS, from the CloudFormation stacks page, choose the Outputs tab for the Structured File Lens stack.

The Lens is called with a GET request of the form http://<lens-address>/process?inputFileURL=<input-file-url>, which we can send using a tool such as Postman.
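The same request can be assembled in code. The following Python is a sketch under assumptions: the Lens address lens.example.internal and the bucket name are hypothetical placeholders, and percent-encoding the inputFileURL value is standard practice for query strings, not something this post confirms the Lens requires.

```python
from urllib.parse import urlencode


def lens_process_url(lens_address: str, input_file_url: str) -> str:
    """Build the GET URL that asks the Lens to transform one input file."""
    # urlencode percent-encodes the S3 URL so it is safe inside a query string.
    query = urlencode({"inputFileURL": input_file_url})
    return f"http://{lens_address}/process?{query}"


url = lens_process_url(
    "lens.example.internal",                     # hypothetical Lens address
    "s3://example-bucket/input/inputfile.json",  # hypothetical input file URL
)
print(url)

# To send the request from a machine that can reach the Lens:
# from urllib.request import urlopen
# with urlopen(url) as response:
#     print(response.read().decode("utf-8"))
```

Replace the placeholder address with the endpoint shown on the Outputs tab of the Structured File Lens stack.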

After a successful call to the RESTful endpoint, within the report we’re sent the location of the successfully transformed RDF file. Take note of this location because we use it later to trigger its ingestion by the Lens Writer.

If you want to track the status of the Lens, logging is available on the Resource tab on the CloudFormation stack page. This can be found in the CloudWatchLogsGroup resource.

Configure your Neptune instance

As with most AWS services, you can initialize and set up Neptune on the AWS Management Console. If you already have an instance running, you can skip this step and move on to configuring your Lens Writer. Otherwise, on the Neptune console, choose Create database.

The Neptune console allows you to create a database with a single click using its pre-built configuration; alternatively, you can choose your own. At this step, the Lens Writer doesn’t require any specific configuration, so complete the form with your required credentials. Make sure that it has the correct VPC configuration and can communicate with the outside world by enabling access from external endpoints.

As your database is being initialized, we can set up the Lens Writer.

Configure and call your Lens Writer

Similarly to the Lenses, the Writer is a scalable container server available on AWS Marketplace. Subscribe to the product and then complete the following steps.

On the Launch page, you’re presented with information regarding the container image as well as a similarly preconfigured CloudFormation template, allowing for a one-click deploy solution. This also starts up an ECS cluster with the Writer running as a task.

Choose Quick Stack Creation – Lens Writer Only.

On the Quick create stack page, specify the configuration options for the Writer’s ingestion mode, along with the details of your configured Neptune instance (or any other knowledge graph).

When specifying the details of your Neptune instance, you must choose the type of triple store you wish to connect to, which in this case is neptune.

Provide the graph’s endpoint and its current VPC and subnets.

If your graph has completed its initialization, these details are available on the Neptune console.

Choose the database you wish to use and choose the instance that has Writer as its role.

Choose the Connectivity & security tab.

This tab contains the instance’s connection information, including its endpoint and port.

Copy these into your Lens Writer AWS CloudFormation form in the format of https://<db-endpoint>:<db-port>.

Also displayed are the VPC and subnets.

Find and choose all the options displayed in the following screenshot from the drop-down menus.

As previously mentioned, when ingesting data using the Lens Writer, we have two modes to choose from: insert mode and update mode. For this post, we use update mode because we’re not concerned with retaining provenance or time series data.

After you specify your Writer parameters, choose Create stack and wait for your cluster to initialize.

Similarly to the Lenses, when the stack is complete, you can trigger the Lens Writer using the built-in REST API endpoint.

As before, choose the Outputs tab on the Lens Writer’s CloudFormation stack page to retrieve the Writer’s endpoint, and call it using a structure very similar to that of the Lens, specifying the URL of the RDF file to ingest: http://<writer-address>/process?inputRdfURL=<input-rdf-file-url>.

You can send this request from Postman in the same way as the call to the Lens.
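As a rough sketch of the Writer call in code, the following Python prepares the request without sending it. The Writer address, the bucket, and the RDF file name example-output.nq are hypothetical; in practice, use the output location reported by the Lens. Using GET here is an assumption based on the Writer endpoint mirroring the Lens endpoint.

```python
from urllib.parse import urlencode
from urllib.request import Request


def writer_process_request(writer_address: str, input_rdf_url: str) -> Request:
    """Prepare the request that asks the Lens Writer to ingest one RDF file."""
    query = urlencode({"inputRdfURL": input_rdf_url})
    # GET is assumed, mirroring the Lens endpoint described earlier.
    return Request(f"http://{writer_address}/process?{query}", method="GET")


request = writer_process_request(
    "writer.example.internal",                      # hypothetical Writer address
    "s3://example-bucket/output/example-output.nq", # hypothetical RDF file URL
)
print(request.get_method(), request.full_url)

# To send it from a machine that can reach the Writer:
# from urllib.request import urlopen
# with urlopen(request) as response:
#     print(response.read().decode("utf-8"))
```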

Similarly to the Lenses, a successful call results in a JSON response.

Configure and query Neptune using Neptune Workbench with Jupyter Notebooks

To query Neptune, we must first create a notebook.

On the Neptune console, in the navigation pane, choose Notebooks.

Choose Create notebook.

After you create your notebook, choose Open Notebook and navigate to 01-Getting-Started in the folder structure.

To find out more about the Neptune notebook Workbench and its features, open the 01-About-the-Neptune-Notebook file.

Open the file 03-Using-RDF-and-SPARQL-to-Access-the-Graph to run our first SPARQL query.

Navigate to the second query and run it by choosing the run icon.

This query shows us the RDF data that we previously inserted. It’s a simple SPARQL query returning the triples (subject-predicate-object) in the graph by using ?s ?p ?o with a limit of 10. Because we only have six triples in our graph, it displays the entire contents of the graph. As seen from the results of the SPARQL query, the RDF data has been successfully loaded into the Neptune knowledge graph.

If you want to query something else, you can replace the SPARQL query with one of your own. For example, we can replace it with a query that returns the named graph of each of our N-Quads.
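One way to write such a query is with the SPARQL GRAPH keyword, which binds the named graph of each matching triple. The query below is a sketch written for this post and is not necessarily the exact query the author ran. The Python around it prepares a POST request to the Neptune SPARQL endpoint; the host name is a hypothetical placeholder and 8182 is the default Neptune port.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Illustrative query: return each triple together with its named graph.
NAMED_GRAPH_QUERY = """
SELECT ?g ?s ?p ?o
WHERE { GRAPH ?g { ?s ?p ?o } }
LIMIT 10
"""


def sparql_request(neptune_endpoint: str, port: int, query: str) -> Request:
    """Prepare a form-encoded SPARQL query request for a Neptune endpoint."""
    body = urlencode({"query": query.strip()}).encode("utf-8")
    return Request(
        f"https://{neptune_endpoint}:{port}/sparql",
        data=body,
        headers={
            "Content-Type": "application/x-www-form-urlencoded",
            "Accept": "application/sparql-results+json",
        },
        method="POST",
    )


# Hypothetical endpoint; use the value from the Connectivity & security tab.
request = sparql_request("neptune.example.internal", 8182, NAMED_GRAPH_QUERY)
print(request.get_method(), request.full_url)
```

In the Neptune notebook itself, the query text alone can be run in a cell that begins with the %%sparql magic, so the request code is only needed when querying from outside the notebook.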

Summary

When converting data into RDF, you often need to develop an expensive and time-consuming parser. In this post, we explored a deployable container-based solution that you can deploy into a new or existing AWS Cloud architecture stack. In addition, we examined how to insert and update RDF data into Neptune, a task that otherwise requires complicated SPARQL queries and data management.

The Lenses and the Writer of Data Lens allow you to mitigate all these problems and more. To try out an RDF conversion and Neptune upload for yourself, launch a Neptune instance, and then go to AWS Marketplace to try out your choice of Lens and Lens Writer.

For more information, see the Data Lens documentation and the Data Lens website, where you can share any questions, comments, or other feedback.

About the author

Russell Waterson is a Knowledge Graph Engineer at Data Lens Ltd. He is a key member of the team who successfully built the integration between Data Lens and Amazon Neptune on AWS Marketplace.
