Deploying high-quality, trained machine learning (ML) models to perform either batch or real-time inference is a critical piece of bringing value to customers. However, the ML experimentation process can be tedious: there are many approaches to try, and each takes a significant amount of time to implement. That’s why pre-trained ML models like the ones provided in the PyTorch Model Zoo are so helpful. Amazon SageMaker provides a unified interface to experiment with different ML models, and the PyTorch Model Zoo allows us to easily swap our models in a standardized manner.

This blog post demonstrates how to perform ML inference using an object detection model from the PyTorch Model Zoo within SageMaker. Pre-trained ML models from the PyTorch Model Zoo are ready-made and can easily be used as part of ML applications. Setting up these ML models as a SageMaker endpoint or SageMaker Batch Transform job for online or offline inference is easy with the steps outlined in this blog post. We will use a Faster R-CNN object detection model to predict bounding boxes for pre-defined object classes.

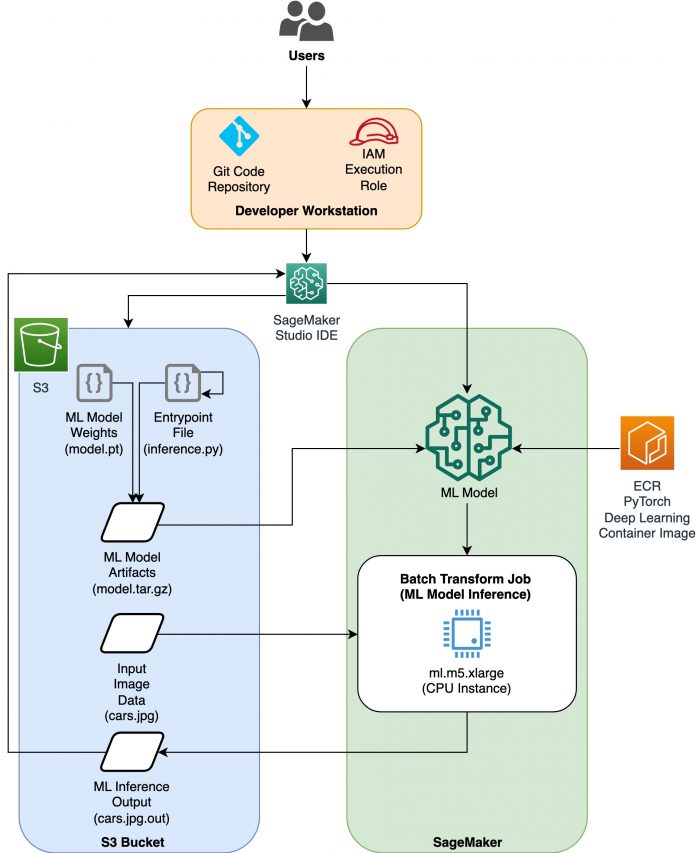

We walk through an end-to-end example, from loading the Faster R-CNN object detection model weights, to saving them to an Amazon Simple Storage Service (Amazon S3) bucket, and to writing an entrypoint file and understanding the key parameters in the PyTorchModel API. Finally, we will deploy the ML model, perform inference on it using SageMaker Batch Transform, and inspect the ML model output and learn how to interpret the results. This solution can be applied to any other pre-trained model on the PyTorch Model Zoo. For a list of available models, see the PyTorch Model Zoo documentation.

Solution overview

This blog post will walk through the following steps. For a full working version of all steps, see create_pytorch_model_sagemaker.ipynb.

Step 1: Setup

Step 2: Loading an ML model from the PyTorch Model Zoo

Step 3: Save and upload ML model artifacts to Amazon S3

Step 4: Building ML model inference scripts

Step 5: Launching a SageMaker batch transform job

Step 6: Visualizing results

Architecture diagram

Directory structure

The code for this blog can be found in this GitHub repository. The codebase contains everything we need to build ML model artifacts, launch the transform job, and visualize results.

This is the workflow we use. All of the following steps will refer to modules in this structure.

The sagemaker_torch_model_zoo folder should contain inference.py as an entrypoint file, and create_pytorch_model_sagemaker.ipynb to load and save the model weights, create a SageMaker model object, and finally pass that into a SageMaker batch transform job. To bring your own ML models, change the paths in the Step 1: Setup section of the notebook and load a new model in the Step 2: Loading an ML model from the PyTorch Model Zoo section. The remaining steps stay the same.

Step 1: Setup

IAM roles

SageMaker performs operations on infrastructure that is managed by SageMaker. SageMaker can only perform the actions permitted by the notebook’s accompanying IAM execution role. For more detailed documentation on creating IAM roles and managing IAM permissions, refer to the AWS SageMaker roles documentation. We can create a new role, or we can get the SageMaker (Studio) notebook’s default execution role by running the following lines of code:
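A minimal sketch of looking up the execution role, assuming the SageMaker Python SDK is installed. `sagemaker.get_execution_role()` is the SDK call; the wrapper `resolve_execution_role` and its `explicit_role_arn` parameter are hypothetical names, added so the same code can also run outside a notebook where no default role exists.

```python
from typing import Optional


def resolve_execution_role(explicit_role_arn: Optional[str] = None) -> str:
    """Return an IAM role ARN for SageMaker to assume.

    If explicit_role_arn is given, it is returned unchanged. Otherwise the
    notebook's default execution role is looked up through the SageMaker SDK.
    """
    if explicit_role_arn:
        return explicit_role_arn
    # Imported lazily so the helper can be defined without the SDK installed.
    import sagemaker

    return sagemaker.get_execution_role()
```

Inside a SageMaker or SageMaker Studio notebook, calling `resolve_execution_role()` with no arguments should return the notebook's own role.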

The above code gets the SageMaker execution role for the notebook instance. This is the IAM role that we created for our SageMaker or SageMaker Studio notebook instance.

User configurable parameters

Here are all the configurable parameters needed for building and launching our SageMaker batch transform job:
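One way to keep these parameters in a single place is a small configuration object. This is only a sketch: the class name, field names, and every default value below (bucket name, prefix, instance type) are hypothetical placeholders, not the values used in the notebook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BatchTransformConfig:
    """Hypothetical container for the values used in the later steps."""

    bucket: str                          # S3 bucket that holds all artifacts
    prefix: str = "torch-model-zoo"      # key prefix inside the bucket (placeholder)
    model_file: str = "model.pt"         # local file with the saved model
    model_archive: str = "model.tar.gz"  # tarball uploaded to S3
    entry_point: str = "inference.py"    # entrypoint script
    instance_type: str = "ml.m5.xlarge"  # placeholder instance type
    instance_count: int = 1

    def s3_uri(self, *parts: str) -> str:
        """Join the bucket, prefix, and extra key parts into an s3:// URI."""
        key = "/".join(p.strip("/") for p in (self.prefix, *parts) if p)
        return f"s3://{self.bucket}/{key}"


config = BatchTransformConfig(bucket="my-example-bucket")
print(config.s3_uri("model", config.model_archive))
```

Keeping the S3 path logic in one method means the model, input, and output locations are all derived from the same bucket and prefix.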

Step 2: Loading an ML model from the PyTorch Model Zoo

Next, we specify an object detection model from the PyTorch Model Zoo and save its ML model weights. Typically, we save a PyTorch model using the .pt or .pth file extensions. The code snippet below downloads a pre-trained Faster R-CNN ResNet50 ML model from the PyTorch Model Zoo:

import torchvision
model = torchvision.models.detection.fasterrcnn_resnet50_fpn(pretrained=True)

SageMaker batch transform requires as an input some model weights, so we will save the pre-trained ML model as model.pt. If we want to load a custom model, we could save the model weights from another PyTorch model as model.pt instead.
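Saving the model can be sketched as follows, assuming PyTorch is installed. The helper name `save_model` is hypothetical, and the choice to serialize the whole model object (rather than only its `state_dict`) is an assumption; whichever form is saved here, the entrypoint's loading code has to match it.

```python
def save_model(model, path: str = "model.pt") -> str:
    """Serialize the model object to path and return the path."""
    import torch  # imported lazily; assumes PyTorch is installed

    # Saving the full object lets the entrypoint restore it with torch.load.
    # Saving model.state_dict() instead is the alternative, in which case the
    # entrypoint must rebuild the architecture before loading the weights.
    torch.save(model, path)
    return path
```

For a custom model, the same helper can be called on any other `torch.nn.Module`.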

Step 3: Save and upload ML model artifacts to Amazon S3

Since we will be using SageMaker for ML inference, we need to upload the model weights to an S3 bucket. We can do this using the following commands or by downloading the file and dragging and dropping it directly into S3. The following commands first compress model.pt into a tarball and then copy the tarball from our local machine to the S3 bucket.

Note: To run the following commands, you need to have the AWS Command Line Interface (AWS CLI) installed.
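As an alternative to the CLI, the packaging and upload described above can be sketched in Python. This swaps the `tar` and `aws s3 cp` commands for the standard-library `tarfile` module and boto3's `upload_file`; the function names are hypothetical, and the upload assumes boto3 is installed and AWS credentials are configured.

```python
import tarfile
from pathlib import Path


def package_model(model_path: str, archive_path: str = "model.tar.gz") -> str:
    """Compress the saved model file into a gzip tarball and return its path."""
    with tarfile.open(archive_path, "w:gz") as tar:
        # arcname keeps the file at the archive root, so it ends up directly
        # in the model directory when SageMaker extracts the tarball.
        tar.add(model_path, arcname=Path(model_path).name)
    return archive_path


def upload_to_s3(local_path: str, bucket: str, key: str) -> str:
    """Upload a local file with boto3 and return its s3:// URI."""
    import boto3  # imported lazily; assumes boto3 and AWS credentials

    boto3.client("s3").upload_file(local_path, bucket, key)
    return f"s3://{bucket}/{key}"
```

The same `upload_to_s3` helper works for any other file that needs to be copied to the bucket.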

Next, we copy our input image over to S3. Below is the full S3 path for the image.

We can copy over this image to S3 with another aws s3 cp command.

Step 4: Building ML model inference scripts

Now we will go over our entrypoint file, the inference.py module. We can deploy a PyTorch model trained outside of SageMaker using the PyTorchModel class. First, we instantiate the PyTorchModel object. Then we construct an inference.py entrypoint file to perform ML inference using SageMaker batch transform on sample data hosted in Amazon S3.

Understanding the PyTorchModel object

The PyTorchModel class within the SageMaker Python SDK allows us to perform ML inference using our downloaded model artifact.

To instantiate the PyTorchModel class, we need to understand the following input parameters:

name: Model name; we recommend using either the model name + date time, or a random string + date time for uniqueness.

model_data: The S3 URI of the packaged ML model artifact.

entry_point: A user-defined Python file to be used by the inference Docker image to define handlers for incoming requests. The code defines model loading, input preprocessing, prediction logic, and output post-processing.

framework_version: The PyTorch version to use. It needs to be set to version 1.2 or higher to enable automatic PyTorch model repackaging.

source_dir: The directory of the entry_point file.

role: An IAM role to make AWS service requests.

image_uri: The URI of an Amazon ECR Docker container image to use as the base for the ML model compute environment.

sagemaker_session: The SageMaker session.

py_version: The Python version to be used.

The following code snippet instantiates the PyTorchModel class to perform inference using the pre-trained PyTorch model:
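The instantiation can be sketched as below, assuming the SageMaker Python SDK is installed. `PyTorchModel` and its keyword arguments come from the SDK; the helper names, the `fasterrcnn` name prefix, and the default `framework_version` and `py_version` values are hypothetical placeholders that should be replaced with the versions you actually use.

```python
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def unique_model_name(prefix: str) -> str:
    """Append a UTC timestamp so repeated runs do not reuse a model name."""
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%d-%H-%M-%S")
    return f"{prefix}-{stamp}"


def build_model_kwargs(
    model_data: str,
    role: str,
    source_dir: str = ".",
    framework_version: str = "1.12",  # placeholder; match your PyTorch version
    py_version: str = "py38",         # placeholder; must pair with the above
    image_uri: Optional[str] = None,
) -> Dict[str, Any]:
    """Collect the PyTorchModel arguments described in the list above."""
    kwargs: Dict[str, Any] = {
        "name": unique_model_name("fasterrcnn"),
        "model_data": model_data,       # S3 URI of model.tar.gz
        "entry_point": "inference.py",
        "source_dir": source_dir,
        "role": role,
        "framework_version": framework_version,
        "py_version": py_version,
    }
    if image_uri is not None:
        # Only needed when overriding the prebuilt container image.
        kwargs["image_uri"] = image_uri
    return kwargs


def create_pytorch_model(sagemaker_session=None, **kwargs):
    """Instantiate PyTorchModel; requires the SageMaker Python SDK."""
    from sagemaker.pytorch import PyTorchModel  # lazy import

    return PyTorchModel(sagemaker_session=sagemaker_session, **kwargs)
```

Splitting argument collection from instantiation keeps the parameter choices easy to inspect before any AWS call is made, for example with `create_pytorch_model(**build_model_kwargs(model_data, role))`.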

Understanding the entrypoint file (inference.py)

The entry_point parameter points to a Python file named inference.py. This entrypoint defines model loading, input preprocessing, prediction logic, and output post-processing. It supplements the ML model serving code in the prebuilt PyTorch SageMaker Deep Learning Container image.

The inference.py file contains the following functions. In our example, we implement the model_fn, input_fn, predict_fn, and output_fn functions to override the default PyTorch inference handler.

model_fn: Takes in a directory containing static model checkpoints in the inference image. Opens and loads the model from a specified path and returns a PyTorch model.

input_fn: Takes in the payload of the incoming request (request_body) and the content type of an incoming request (request_content_type) as input. Handles data decoding. This function needs to be adjusted for what input the model is expecting.

predict_fn: Calls a model on data deserialized in input_fn. Performs prediction on the deserialized object with the loaded ML model.

output_fn: Serializes the prediction result into the desired response content type. Converts predictions obtained from the predict_fn function to JSON, CSV, or NPY formats.
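The four handlers above can be sketched as follows. This is not the exact entrypoint from the repository: it assumes the model was saved as a full object in model.pt, that requests carry raw image bytes with content type `application/x-image`, that Pillow and torchvision are available in the container, and that JSON is the only output format needed.

```python
import io
import json
import os

SUPPORTED_CONTENT_TYPE = "application/x-image"  # assumed request content type


def model_fn(model_dir):
    """Load the saved model from model_dir and switch it to eval mode."""
    import torch

    # Assumes model.pt holds the full pickled model. PyTorch 2.6 and later
    # default torch.load to weights_only=True, in which case a fully pickled
    # model needs weights_only=False (only for files you trust).
    model = torch.load(os.path.join(model_dir, "model.pt"), map_location="cpu")
    model.eval()
    return model


def input_fn(request_body, request_content_type):
    """Decode raw image bytes into a float tensor of shape [C, H, W]."""
    if request_content_type != SUPPORTED_CONTENT_TYPE:
        raise ValueError(f"Unsupported content type: {request_content_type}")
    from PIL import Image
    from torchvision.transforms.functional import to_tensor

    image = Image.open(io.BytesIO(request_body)).convert("RGB")
    return to_tensor(image)


def predict_fn(input_object, model):
    """Run the detector; it expects a list of image tensors."""
    import torch

    with torch.no_grad():
        return model([input_object])


def output_fn(predictions, content_type):
    """Serialize the list of {boxes, labels, scores} dicts to JSON.

    This sketch always returns JSON, whatever content_type is requested.
    """
    serializable = [
        {key: value.tolist() for key, value in prediction.items()}
        for prediction in predictions
    ]
    return json.dumps(serializable)
```

Rejecting unknown content types in `input_fn` makes a misconfigured job fail early with a clear message instead of producing a decoding error deeper in the stack.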

Step 5: Launching a SageMaker batch transform job

For this example, we will obtain ML inference results through a SageMaker batch transform job. Batch transform jobs are most useful when we want to obtain inferences from datasets once, without the need for a persistent endpoint. We instantiate a sagemaker.transformer.Transformer object for creating and interacting with SageMaker batch transform jobs.
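Launching the job can be sketched as below. Instead of constructing `sagemaker.transformer.Transformer` directly, this sketch calls the model's `transformer()` method, which returns a Transformer bound to that model. The function name, the default instance type, and the `application/x-image` content type are assumptions for illustration.

```python
def run_batch_transform(
    model,
    input_s3_uri,
    output_s3_uri,
    instance_type="ml.m5.xlarge",  # placeholder instance type
    instance_count=1,
    content_type="application/x-image",  # assumed to match the entrypoint
):
    """Create a Transformer from a SageMaker model and run one job."""
    transformer = model.transformer(
        instance_count=instance_count,
        instance_type=instance_type,
        output_path=output_s3_uri,
    )
    transformer.transform(
        data=input_s3_uri,
        data_type="S3Prefix",  # treat input_s3_uri as a prefix of objects
        content_type=content_type,
        wait=True,             # block until the job finishes
    )
    return transformer
```

Here `model` is expected to be the PyTorchModel object from Step 4, and the two URIs point to the uploaded input data and the desired output location.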

See the documentation for creating a batch transform job at CreateTransformJob.

Step 6: Visualizing results

Once the SageMaker batch transform job finishes, we can load the ML inference outputs from Amazon S3. For this, navigate to the AWS Management Console and search for Amazon SageMaker. On the left panel, under Inference, see Batch transform jobs.

After selecting Batch transform, see the webpage listing all SageMaker batch transform jobs. We can view the progress of our most recent job execution.

At first, the job has the status InProgress. Once it is done, the status changes to Completed.

Once the status is marked as completed, we can click on the job to view the results. This webpage contains the job summary, including configurations of the job we just executed.

Under Output data configuration, we will see an S3 output path. This is where we will find our ML inference output.
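The same status and output path can also be read programmatically. The sketch below assumes a boto3 SageMaker client is passed in; `describe_transform_job`, `TransformJobStatus`, and `TransformOutput.S3OutputPath` belong to the SageMaker API, while the function name and polling interval are hypothetical.

```python
import time


def wait_for_transform_job(sm_client, job_name, poll_seconds=30.0):
    """Poll a batch transform job until it ends; return its S3 output path."""
    while True:
        job = sm_client.describe_transform_job(TransformJobName=job_name)
        status = job["TransformJobStatus"]
        if status == "Completed":
            return job["TransformOutput"]["S3OutputPath"]
        if status in ("Failed", "Stopped"):
            reason = job.get("FailureReason", "no reason given")
            raise RuntimeError(
                f"Transform job {job_name} ended with status {status}: {reason}"
            )
        # InProgress or Stopping: wait and check again.
        time.sleep(poll_seconds)
```

A typical call would be `wait_for_transform_job(boto3.client("sagemaker"), job_name)`, where `job_name` is the name of the job launched in Step 5.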

Select the S3 output path and see an [image_name].[file_type].out file with our output data. Our output file will contain a list of mappings. Example output:

Next, we process this output file and visualize our predictions. Below we specify our confidence threshold. We get the list of classes from the COCO dataset object mapping. During inference, the model requires only the input tensors and returns the post-processed predictions as a List[Dict[Tensor]], one for each input image. The fields of the Dict are as follows, where N is the number of detections:

boxes (FloatTensor[N, 4]): the predicted boxes in [x1, y1, x2, y2] format, with 0 <= x1 < x2 <= W and 0 <= y1 < y2 <= H, where W is the width of the image and H is the height of the image

labels (Int64Tensor[N]): the predicted labels for each detection

scores (Tensor[N]): the prediction scores for each detection

For more details on the output, refer to the PyTorch Faster R-CNN FPN Documentation.

The model output contains bounding boxes with their respective confidence scores. We can reduce the number of false positives displayed by removing bounding boxes for which the model is not confident. The following code snippets process the predictions in the output file and draw bounding boxes on the predictions where the score is above our confidence threshold. We set the probability threshold, CONF_THRESH, to .75 for this example.
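The filtering and drawing steps can be sketched as below. This assumes each prediction has already been parsed from the output file into a dict of plain lists with the `boxes`, `labels`, and `scores` fields described earlier. The function names and the `class_names` parameter (a list mapping label index to class name) are hypothetical, and drawing assumes Pillow is installed.

```python
CONF_THRESH = 0.75


def filter_detections(prediction, threshold=CONF_THRESH):
    """Keep only the detections whose score is at or above threshold."""
    kept = [
        (box, label, score)
        for box, label, score in zip(
            prediction["boxes"], prediction["labels"], prediction["scores"]
        )
        if score >= threshold
    ]
    return {
        "boxes": [box for box, _, _ in kept],
        "labels": [label for _, label, _ in kept],
        "scores": [score for _, _, score in kept],
    }


def draw_detections(image_path, prediction, class_names, out_path):
    """Draw each [x1, y1, x2, y2] box and its label on a copy of the image."""
    from PIL import Image, ImageDraw  # imported lazily; assumes Pillow

    image = Image.open(image_path).convert("RGB")
    draw = ImageDraw.Draw(image)
    for box, label, score in zip(
        prediction["boxes"], prediction["labels"], prediction["scores"]
    ):
        draw.rectangle(box, outline="red", width=2)
        draw.text((box[0], box[1]), f"{class_names[label]} {score:.2f}", fill="red")
    image.save(out_path)
    return out_path
```

Filtering before drawing keeps the two concerns separate, so the threshold can be tuned without touching the plotting code.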

Finally, we visualize these mappings to understand our output.

Note: if the image doesn’t display in your notebook, please locate it in the directory tree on the left-hand side of JupyterLab and open it from there.

Running the example code

For a full working example, clone the code in the amazon-sagemaker-examples GitHub repository and run the cells in the create_pytorch_model_sagemaker.ipynb notebook.

Conclusion

In this blog post, we showcased an end-to-end example of performing ML inference with SageMaker batch transform, using an object detection model from the PyTorch Model Zoo. We covered loading the Faster R-CNN object detection model weights, saving them to an S3 bucket, writing an entrypoint file, and understanding the key parameters in the PyTorchModel API. Finally, we deployed the model and performed ML model inference, visualized the model output, and learned how to interpret the results.

About the Authors

Dipika Khullar is an ML Engineer in the Amazon ML Solutions Lab. She helps customers integrate ML solutions to solve their business problems. Most recently, she has built training and inference pipelines for media customers and predictive models for marketing.

Marcelo Aberle is an ML Engineer in the AWS AI organization. He is leading MLOps efforts at the Amazon ML Solutions Lab, helping customers design and implement scalable ML systems. His mission is to guide customers on their enterprise ML journey and accelerate their ML path to production.

Ninad Kulkarni is an Applied Scientist in the Amazon ML Solutions Lab. He helps customers adopt ML and AI by building solutions to address their business problems. Most recently, he has built predictive models for sport, automotive, and media customers.

Yash Shah is a Science Manager in the Amazon ML Solutions Lab. He and his team of applied scientists and ML engineers work on a range of ML use cases from healthcare, sports, automotive, and manufacturing.
