When you are building data pipelines, you need to manage and monitor the workflows in the pipeline and often automate them to run periodically. Cloud Composer is a fully managed workflow orchestration service built on Apache Airflow that helps you author, schedule, and monitor pipelines spanning hybrid and multi-cloud environments.

By using Cloud Composer instead of managing a local instance of Apache Airflow, you get the best of Airflow without the overhead of installation, management, patching, and backups, because Google Cloud takes care of that technical complexity. Cloud Composer is also enterprise-ready and offers many security features, so you don’t have to build them yourself. Last but not least, the latest version of Cloud Composer supports autoscaling, which provides cost efficiency and additional reliability for workflows that have bursty execution patterns.

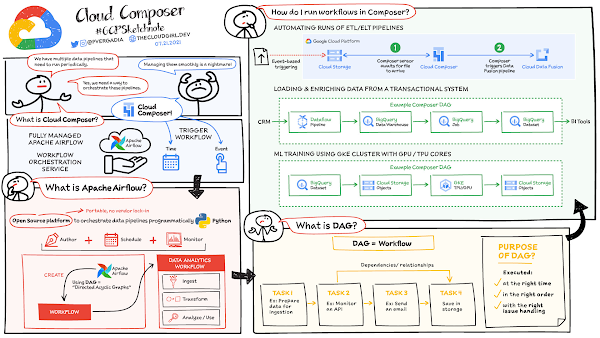

How does Cloud Composer work?

In data analytics, a workflow represents a series of tasks for ingesting, transforming, analyzing, or utilizing data. In Airflow, workflows are created using directed acyclic graphs (DAGs).

A DAG is a collection of tasks that you want to schedule and run, organized in a way that reflects their relationships and dependencies. DAGs are defined in Python scripts, which describe the DAG structure (tasks and their dependencies) as code. The purpose of a DAG is to ensure that each task is executed at the right time, in the right order, and with the right error handling.

Each task in a DAG can represent almost anything. For example, one task might perform data ingestion, another might send an email, and yet another might run a pipeline.
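To make the "right order" idea concrete, here is a minimal sketch in plain Python. It uses the standard library's `graphlib` rather than Airflow itself, so it only illustrates how dependencies determine execution order. The task names and the `execution_order` helper are hypothetical and chosen to mirror the examples above. In a real Airflow DAG you would declare the same chain between operators with the `>>` operator.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical tasks: each key maps to the set of tasks it depends on.
dependencies = {
    "ingest_data": set(),
    "run_pipeline": {"ingest_data"},
    "send_email": {"run_pipeline"},
}


def execution_order(deps):
    """Return one valid run order, or raise CycleError if deps is not acyclic."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)

# A graph with a cycle is not a DAG, so no valid order exists.
try:
    execution_order({"a": {"b"}, "b": {"a"}})
except CycleError:
    print("cycle detected: not a valid DAG")
```

The "acyclic" part matters: if two tasks depended on each other, there would be no order in which both could run, which is why the second call is rejected.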

How do you run workflows in Cloud Composer?

After you create a Cloud Composer environment, you can run any workflows your business case requires. The Composer service is based on a distributed architecture running on GKE and other Google Cloud services. You can schedule a workflow to run at a specific time, or you can start a workflow when a specific condition is met, for example when an object is saved to a storage bucket. Cloud Composer comes with built-in integrations to almost all Google Cloud products, including BigQuery and Dataproc; it also supports integrations (enabled by provider packages from vendors) with applications running on-prem or on another cloud. The documentation lists the built-in integrations and provider packages.

Cloud Composer security features

Private IP: Using private IP means that the compute nodes in Cloud Composer are not publicly accessible and are therefore protected from the public internet. Developers can still access the internet, but the nodes themselves cannot be accessed from outside.

Private IP + Web Server ACLs: The user interface for Airflow is protected by authentication, so only authenticated users can access a given Airflow user interface. For additional network-level security, you can use web server access controls along with Private IP, which limits access from the outside world by allowlisting a set of IP addresses.

VPC Native Mode: In conjunction with other features, VPC native mode helps limit access to Composer components to the same VPC network, keeping them protected.

VPC Service Controls: Provides increased security by letting you configure a network service perimeter that blocks access in both directions: from the outside world into the perimeter, and from inside the perimeter to the outside world.

Customer Managed Encryption Keys (CMEK): Enabling CMEK lets you provide your own encryption keys to encrypt and decrypt environment data.

Restricting Identities By Domain: This feature enables you to restrict the set of identities that can access Cloud Composer environments to specific domain names, e.g. @yourcompany.com.

Integration with Secret Manager: You can use a built-in integration with Secret Manager to protect keys and passwords used by your DAGs for authentication to external systems.
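Conceptually, the web server access control described above comes down to checking a client address against a set of allowed ranges. The sketch below shows that check in plain Python with the standard `ipaddress` module. It is an illustration of the idea only, not how Cloud Composer implements it, and the ranges and the `is_allowed` helper are hypothetical (the addresses come from blocks reserved for documentation).

```python
from ipaddress import ip_address, ip_network

# Hypothetical allowlist: one office range and one single host.
ALLOWED_RANGES = [
    ip_network("203.0.113.0/24"),
    ip_network("198.51.100.7/32"),
]


def is_allowed(client_ip: str) -> bool:
    """Return True if client_ip falls inside any allowed range."""
    addr = ip_address(client_ip)
    return any(addr in net for net in ALLOWED_RANGES)


print(is_allowed("203.0.113.25"))  # inside the /24 range
print(is_allowed("192.0.2.1"))     # not in any allowed range
```

In Cloud Composer itself you configure the allowed ranges on the environment rather than writing this check yourself.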

If you are building data pipelines, then you need to check out Cloud Composer for easy and fully managed workflow orchestration. For a more in-depth look into Cloud Composer check out the documentation.

For more #GCPSketchnote, follow the GitHub repo. For similar cloud content, follow me on Twitter @pvergadia and keep an eye on thecloudgirl.dev.
