Amazon RDS Proxy is a fully managed, highly available database proxy that can front your Amazon RDS for MySQL or Amazon RDS for PostgreSQL databases and Amazon Relational Database Service (Amazon RDS) for MySQL or Amazon Relational Database Service (Amazon RDS) for PostgreSQL databases. It allows you to manage an application’s access to the database and provides multiplexing, connection pooling, and graceful failover. By default, RDS Proxy provides an endpoint for your applications to connect to the Aurora or RDS primary instance.

RDS Proxy lets you create additional endpoints with either a read-only or read/write role. An endpoint with a read-only role routes your queries to the Aurora Replicas, while an endpoint with a read/write role routes your queries to the writer instance. Each endpoint can have its own VPC settings, enabling access to your Aurora or RDS databases from applications in a different VPC. For example, when you are running a reporting application in a separate VPC from your database, you can selectively enable connectivity to the database by creating a RDS Proxy endpoint in the application’s VPC. In this post, we explain how RDS Proxy read-only endpoints help you achieve read scalability of your read-heavy workloads via connection pooling and improve availability for your applications.

Benefits of RDS Proxy read-only endpoints within an AWS Region

Aurora provides built-in read-only endpoints (Using the reader endpoint) to support simple load balancing of connections to available Aurora replicas in an Aurora cluster. Although this serves the basic needs of read traffic routing, with RDS Proxy read-only endpoints, you can achieve the following benefits:

Multiplexing – Connection multiplexing helps reduce the overall connection count, thereby optimizing the use of database resources such as CPU. This reduces the need to scale up your reader instance to support higher connection count.

Connection pooling – RDS Proxy read-only endpoints balance the number of connections open to different readers by evenly distributing them based on user configuration and the Aurora PostgreSQL max_connections value. When replicas are added, new client connections are associated with the new reader based on the current database workload.

Graceful failover – RDS Proxy read-only endpoints help reduce the impact of Aurora failover events by actively monitoring database instances, detecting the failures quickly, and routing the traffic to available replicas without any DNS caching delay. Because Aurora failover events often result in a reboot of every instance in the cluster, RDS Proxy keeps database connections open (and new workload queries paused) until the Aurora cluster is back online.

For more information about this feature, see Using reader endpoints with Aurora clusters.

In the following sections, we use a PostgreSQL test workload to demonstrate these benefits.

Prerequisites

This post assumes you are familiar with Aurora PostgreSQL, Amazon Elastic Compute Cloud (Amazon EC2), and RDS Proxy. I used the following testing environment. If attempting to reproduce the results seen in this post, make sure to set up your environment in a similar way:

Set up an EC2 instance with the psql client and pgbench utility to interact with PostgreSQL instances. Configure your database security groups to allow traffic from this EC2 instance. For more information, visit Connecting to a DB instance running the PostgreSQL database engine.

Launch an Aurora PostgreSQL cluster using PostgreSQL v11.9 (Aurora Engine v3.4). This cluster contains three database instances, one primary instance and two reader instances, all using the db.r5.large instance class. A third reader node is programmatically created and deleted as needed during testing.

This post assumes that you already set up RDS Proxy and can connect to the underlying Aurora instance. To learn more about creating and connecting to the database through RDS Proxy, visit Using Amazon RDS Proxy.

Creating a RDS Proxy endpoint is simple and can be accomplished in few clicks through the Amazon RDS console, or using the AWS Command Line Interface (AWS CLI) or SDK.

The following AWS CLI command creates your proxy:

The following AWS CLI command creates an RDS Proxy read-only endpoint:

Test RDS Proxy

To demonstrate the benefits for RDS Proxy outlined in this post, we use the open-source pgbench tool on Amazon EC2 to run different test scenarios with different levels of concurrency. You can use the sample script rds-proxy-benchmarking-script-v3.sh to invoke multiple connections with the RDS Proxy read-only endpoint.

Additionally, we can use the AWS CLI to add and remove Aurora replicas from the cluster while our sample workload is running, to simulate the impact of these activities on real-world workloads.

In the test cases outlined below, we repeat the same steps to create a sample workload: Open 100 connections per pgbench instantiation, have each instantiation run for 10 minutes, and continue creating new instantiations every 10 seconds (with a 50-second pause after 10 instantiations are created). Each instantiation connects to the specified PostgreSQL read-only endpoint, running with the following pgbench flags:

Test 1: Scaling read-only connections

When scaling read traffic across multiple Aurora replicas in Aurora PostgreSQL, the best practice is to limit the overall number of connections where possible. In PostgreSQL-based engines, each connection made against a database requires the usage of system resources such as CPU and memory. Although the resource requirements vary based on the query running, each database connection uses a small amount of compute resources and memory (approximately 10 MB). For more information, see Performance impact of idle PostgreSQL connections.

This test determines the CPU and memory impact of creating new connections with Aurora replicas when we connect via an Aurora read-only endpoint. Then we repeat the same test by connecting to an RDS Proxy read-only endpoint.

Let’s start by running our sample workload against our cluster’s Aurora read-only endpoint (we use the test script rds-proxy-benchmarking-script-v3.sh). We monitor three metrics in Amazon CloudWatch: the connections count (DatabaseConnections), CPU utilization (CPUUtilization), and freeable memory (FreeableMemory) on an Aurora PostgreSQL instance.

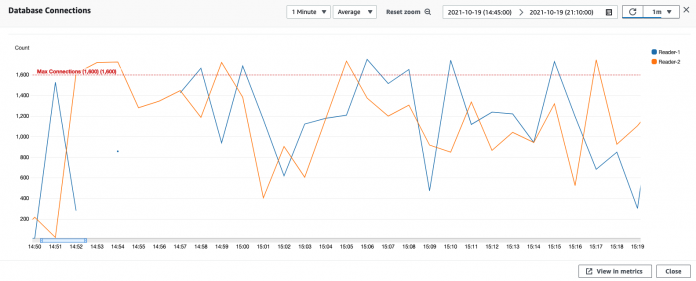

The following graph shows the DatabaseConnections metric.

The following graph shows the CPUUtilization metric.

The following graph shows the FreeableMemory metric.

As shown in the preceding graphs, the overall database connections peak at the 1,600 connection per instance limit and occasionally fall into the 400 connections per instance range. CPU utilization peaks at near 100% at one point during testing for one of our database instances, but usually stays within the 40–80% range across both Aurora Replicas in this cluster. In addition, the freeable memory metric corresponds to the CPU utilization and connection count metrics (less freeable memory is available when database connections and CPU utilization are high).

Let’s repeat this test, but now connecting through an RDS Proxy read-only endpoint. The following graph shows the client connections metric.

The following graph shows the DatabaseConnections metric.

The following graph shows the CPUUtilization metric.

The following graph shows the FreeableMemory metric.

Rerunning the workload using our RDS Proxy read-only endpoint to access our Aurora PostgreSQL cluster has resulted in much lower CPU utilization (averaging 10.5% during the peak testing period vs. 60% average when connecting through the Aurora read-only endpoint). We also observe a lower connection count (averaging 45 connections per instance and an average of 1,700 client connections established to RDS Proxy during the testing period) across our Aurora Replicas for this sample workload. We can service more client connections than was possible using the Aurora read-only endpoint directly with the current instance class and size. We also observed the closed connection metric, which shows that while RDS Proxy is handling slightly more than 1,700 active client connections during most of the test, it’s also closing more client database connections than it leaves open. Additionally, the cluster’s freeable memory metrics shows both lower (averaging 3.4 GB free per instance during this test) and more consistent memory usage while using RDS Proxy.

The following table summarizes the data we collected in this test.

Metric

Direct to Database (Average)

Through Proxy (Average)

Proxy Advantage

Database connection count

1,066

120

11 times fewer connections used

CPU utilization

65

18

3.6 times lower CPU usage

Freeable memory

1.713 GB

3.5 GB

2 times more freeable memory

Test 2: Improved connection balancing among replicas

Aurora uses a distributed and shared storage architecture that enables creation of replicas with minimal replica lag. Additionally, Aurora provides capabilities such as Auto Scaling, which dynamically adjusts the number of Aurora replicas to meet your workload demand. When replicas are provisioned, it’s important to distribute your read queries effectively to optimize the system resources and achieve better read throughput.

Using the Aurora PostgreSQL read-only endpoint is a good way to get started with distributing read-only workloads across all readers in a cluster, but this is a simple round-robin routing that doesn’t consider system resources across a cluster’s replicas.

In this test, we run a workload against our cluster using the Aurora PostgreSQL cluster read endpoint. We also add a new replica instance to the cluster to redistribute connections with concurrency. Then we repeat this test using our RDS Proxy read-only endpoint, and observe the connection distribution across replicas.

The following graph shows that the workload distribution across our readers is somewhat uneven for what is a consistent workload being run against it.

The maximum number of database connections rises to the current connection limit per instance (1,600 connections for a db.R5.large), and the load usually reduces on average to 500 connections at it’s lowest point.

Let’s add another reader instance to this cluster and observe the distribution of connections that are initiated via the cluster RDS Proxy read-only endpoint. The following graph shows our updated metrics.

Although the newly added reader instance was able to take traffic quickly, its addition to the cluster didn’t improve the distribution pattern of the workload across all reader instances. Ideally, the addition of a new reader would see an overall decrease in the number of connections across all instances in the cluster.

Let’s rerun this test using our RDS Proxy read-only endpoint. Although connections are reduced to a lower number per instance at minimum, our maximum connections still spike up to the current instance class (db.R5.large) connection limit of 1,600 connections (though RDS Proxy is able to multiplex, reducing the number of database connections).

The following graph shows our client connections.

The following graph shows our database connections.

When we rerun this workload via our RDS Proxy read-only endpoint, our workload is well distributed across all readers in the cluster, and the client connections metric shows that RDS Proxy is exceeding the level of database client traffic that the Aurora read-only endpoint hosted previously.

Now, let’s add a new reader instance and observe that effect on the workload.

When we add a new reader instance, the instance comes online for traffic within 2 minutes, and traffic is evenly distributed to it based on the workload. When Reader-3 is brought online, it immediately starts accepting traffic—29 connections, compared to Reader-1 with 41 and Reader-2 with 49. When Reader-3 is added while testing against RDS Proxy, there is no measurable impact on the pattern of client connections open against RDS Proxy, and the addition of another Aurora PostgreSQL reader instance doesn’t immediately reduce the number of connections that were already opened against Reader-1 and Reader-2. Throughout the test of RDS Proxy with an identical workload used on our Aurora PostgreSQL read-only endpoint, RDS Proxy averaged 1,700 client connections, and on average was closing 1,900 connections throughout the test period.

The following table summarizes the data collected during this test.

Metric

Direct to Database (Average)

Through Proxy (Average)

Proxy Advantage

Database connection count (per instance)

931

35

26.2 times fewer connections per instance

Client connections (across cluster)

931

1,742

1.87 times more client connections served

Aurora PostgreSQL failover time reduction with RDS Proxy

Another benefit of RDS Proxy is its ability to improve application recovery time after database failovers. Failovers occur when a primary (read/write) database instance becomes inaccessible, which allows another instance to take over as the new primary instance (and disrupts client connections in the process). Failovers can be planned, manually triggered by administrative actions (for testing or upgrade purposes), or unplanned (which occur due to failures). In either case, we want to reduce downtime to minimize disruption to client workloads.

For more information on how RDS Proxy can help improve application availability by reducing primary instance failover time, visit Improving application availability with Amazon RDS Proxy.

Conclusion

The RDS Proxy read-only endpoint functionality enables you to effectively manage read queries through a managed service. In this post, we introduced typical challenges you may experience when scaling your read workloads on Aurora PostgreSQL, introduced RDS Proxy read-only endpoint support, and demonstrated several benefits of using this feature for your database workloads. Through our test workloads, we saw the following improvements:

11 times fewer database connections (cluster-wide) used while serving 1.87 times more client connections

26 times fewer connections used per reader instance

3.6 times lower CPU usage across all reader instances

2 times less memory usage across all reader instances

RDS Proxy more intelligently distributes workloads across available reader instances

RDS Proxy allows for more client workloads to be served with lower resource overhead on each individual reader instance

For further guidance on this feature, check out Working with Amazon RDS Proxy endpoints.

We welcome your comments and feedback, and encourage you to use the sample script used in this post to generate a workload and test this feature.

About the Author

Peter Celentano is a Specialist Solutions Architect with Amazon Web Services, focusing on managed PostgreSQL. He works with AWS customers to design scalable, secure, performant, and robust database architectures on the cloud.

Read MoreAWS Database Blog