Migration is a pivotal part of AWS Cloud adoption, and it demands a thorough understanding of the available tools, techniques, and best practices. That understanding is especially important when you migrate large databases, where the goal is to minimize both downtime and failures. Migrating a large database is an intricate and challenging task, so customers often ask for strategies and best practices that reduce complexity and downtime.

In this post, we explore different migration methods, important factors to consider during the migration process, and the recommended tools and best practices for migrating large Oracle databases to Amazon Relational Database Service (Amazon RDS) for Oracle. Generally speaking, a very large database is any database of 1 TB or more.

Migration methods

There are three high-level categories of migration methods to explore here:

One-time migration: Full load – Involves a bulk pickup and move of the data, with the option of an application outage. The decision to have an outage or not depends on whether you can tolerate an incomplete dataset on the target. This approach is typically suitable for small databases where the migration process is quick enough for the application to withstand downtime throughout the process, ensuring no changes are missed. It’s also suitable for proof-of-concept pilots where the focus is solely on having a dataset on the target, without the requirement for transactional completeness. Additionally, the one-time migration can serve as a starting point for continuous replication, enabling the capture and migration of changes occurring after the initial migration.

Continuous replication: Log shipping and transactional replication – Continuous replication, also called change data capture (CDC), is specifically designed to cater to databases that undergo frequent changes and require a complete dataset on the target. This mechanism is ideal for large databases that can’t afford to experience downtime during the transfer process, as well as for mission-critical databases with strict uptime requirements, necessitating near-zero downtime for migration. Additionally, this type of migration is valuable for data protection and disaster recovery purposes because it enables the creation of a full physical copy with minimal lag or delta between the source and target systems. With proper configuration, continuous replication can sustain this state indefinitely, either until the planned cutover time or in the event of a disaster occurring on the primary system.

Periodic refresh: Copy changes to Amazon RDS for Oracle – The periodic refresh approach involves incremental updates but not on a continuous basis. Let’s say you have already migrated the majority of the data to a new environment. However, you need to update that environment with the latest sales transactions that have occurred in the days since the migration. In this scenario, you can perform a smaller migration of a specific dataset, potentially based on dates or specific partitions from the source, to synchronize the target with the changes that have occurred since the initial migration. This concept resembles an ETL (extract, transform, and load) process, where you identify the changes and apply them to the target.
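Data Pump's QUERY parameter is one way to drive a date-based periodic refresh. The following Python sketch renders an export parameter file that extracts only the rows whose date column is on or after a cutoff. It is a minimal sketch. The schema, table, and column names (SALES, ORDERS, ORDER_DATE) and the DATA_PUMP_DIR directory object are hypothetical, and the sketch assumes the table carries a reliable transaction or last-modified date.

```python
from datetime import date


def incremental_export_parfile(schema: str, table: str, date_column: str,
                               since: date, dumpfile: str) -> str:
    """Render a Data Pump export parameter file that extracts only the rows
    whose `date_column` is on or after `since` (hypothetical names)."""
    predicate = (f"WHERE {date_column} >= "
                 f"TO_DATE('{since.isoformat()}', 'YYYY-MM-DD')")
    lines = [
        f"TABLES={schema}.{table}",
        # In a parameter file, the QUERY clause is wrapped in double quotes.
        f'QUERY={schema}.{table}:"{predicate}"',
        "DIRECTORY=DATA_PUMP_DIR",  # assumed directory object on the source
        f"DUMPFILE={dumpfile}",
        f"LOGFILE={dumpfile.rsplit('.', 1)[0]}.log",
    ]
    return "\n".join(lines) + "\n"
```

A predicate like this only captures rows it can see. Deleted rows, and updates that don't touch the date column, need another mechanism.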

The following table summarizes the use cases for these three approaches.

| Migration method | Use cases |
|---|---|
| One-time migration | Small databases; proof-of-concept pilots; initial step for continuous replication |
| Continuous replication | Big databases; near-zero downtime migrations; data protection and disaster recovery |
| Periodic refresh | Incremental refresh for a production engine; daily refresh for a reporting instance |

Migration tools

With these three fundamental migration types available, it's crucial to determine the most suitable approach for each use case. In this section, we discuss your options for migration tools.

Logical or physical migration

Logical migration means extracting the database objects and data from the source database and re-creating them on the target database, for example by using Oracle Data Pump. From the end user's point of view the data is the same, and SELECT statements return the same results. However, the data is likely organized differently in data blocks and data files on disk than on the source database. Logical migration usually requires data validation.

With a physical migration method, the data blocks and data files on disk are copied as is to the target system, for example by using RMAN backup and restore or Oracle Data Guard. Because physical migration moves the data files or their backups, in some cases it requires access to the operating system of the target database host. It might also require access to the root container in a multitenant architecture.

Amazon RDS for Oracle supports migration via RMAN Transportable Tablespaces.

Recommended tools for small datasets

If the source database is under 10 GB and you have a reliable high-speed internet connection, you can use one of the following tools for your data migration. Oracle Data Pump is a great tool for transferring data between databases and across platforms, for both small and large datasets. For small datasets, you could also consider traditional Export and Import, materialized views (potentially over database links) to pull the data over, or SQL*Loader. The following table summarizes your options.

| Size | Data migration method | Database size | Additional license required | Recommended for |
|---|---|---|---|---|
| Small datasets | Oracle SQL Developer | Up to 200 MB | No | Small databases with any number of objects |
| | Oracle materialized views | Up to 500 MB | No | Small databases with a limited number of objects (requires connectivity and a database link) |
| | Oracle SQL*Loader | Up to 10 GB | No | Small to medium databases with a limited number of objects |
| | Oracle Export and Import | Up to 10 GB | No | Small to medium databases with a large number of objects |
| | Oracle Data Pump | Up to 5 TB | Only if using compression | Preferred method for any database from 10 GB to 5 TB |

The 10 GB size is a guideline based on our experience helping customers migrate thousands of Oracle databases to AWS, and customers can use the same methods for larger databases as well. Migration time varies with the data size and the network throughput. However, if your database size exceeds 50 GB, you should use one of the tools listed in the next section.
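Migration time depends mostly on data size and network throughput, so a back-of-the-envelope estimate helps you choose between a network transfer and an offline device. The following Python sketch makes several simplifying assumptions. It uses decimal units (1 GB = 10^9 bytes, 1 Mbps = 10^6 bits per second). It applies an assumed efficiency factor for protocol overhead and link contention. It ignores export and import time.

```python
def transfer_hours(size_gb: float, link_mbps: float,
                   efficiency: float = 0.8) -> float:
    """Rough wall-clock hours to move `size_gb` (decimal GB) over a link of
    `link_mbps` (megabits per second). `efficiency` is an assumed fraction
    of nominal bandwidth that is actually achieved."""
    if size_gb <= 0 or link_mbps <= 0:
        raise ValueError("size and bandwidth must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    bits = size_gb * 8 * 10**9                      # bytes -> bits
    seconds = bits / (link_mbps * 10**6 * efficiency)
    return seconds / 3600
```

For example, `transfer_hours(10_000, 100, 0.8)` comes to about 278 hours (roughly 11.6 days) for 10 TB over a 100 Mbps link at 80% efficiency. A result like that points toward AWS Snowball.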

Recommended tools for large datasets

For larger datasets, Oracle Data Pump can export the dataset, which can then be transferred over the network, or the resulting dump files can be copied to an AWS Snowball, which is an edge computing, data migration, and edge storage device that you can connect via local network to your database server to receive the exported data. Then you ship the device to AWS and we upload that data to your Amazon Simple Storage Service (Amazon S3) bucket, where you can access it and import it into Amazon RDS.

You can also use AWS Database Migration Service (AWS DMS) to perform a full load of your source database to the RDS instance directly. We talk about this in detail later in this post.

AWS DMS can also perform CDC on an Oracle source database to propagate changes to the target RDS for Oracle instance, either on its own or in conjunction with a full load. Other logical migration and replication tools can also be used, including Oracle GoldenGate, Quest SharePlex, Qlik Data Integration, and others.

The following table summarizes your migration methods for large datasets.

| Size | Data migration method | Database size | Oracle license required | Recommended for |
|---|---|---|---|---|
| Large datasets | Oracle Data Pump (without NETWORK_LINK) | Up to 5 TB | Only if using compression | Preferred method for any database from 10 GB to 5 TB |
| | Oracle Data Pump with Snowball | Any size | Only if using compression | Large database migration |
| | AWS DMS | Any size | No | Minimal downtime migration |
| | Oracle GoldenGate | Any size | Yes | Minimal downtime migration |
| | External tables | Up to 1 TB | No | Scenarios where this is the standard method in use |
| | Oracle transportable tablespaces | Any size | No | Large database migration, Oracle Solaris to Linux migration, or lower-downtime migration |

Migration strategies

In the previous section, we discussed the fundamental types of migration methods and the associated tools. Now, let’s delve into the practical aspect of using these tools or combinations of tools to devise an optimal strategy based on your application’s service-level agreement (SLA), Recovery Time Objective (RTO), Recovery Point Objective (RPO), tool availability, and license availability.

Before we proceed, it’s important to understand the key factors to consider during migration. The migration strategy you choose depends on several factors:

The size of the database

The number of databases

Data types and character sets

Network bandwidth between the source server and AWS

The version and edition of your Oracle Database software

The database options, tools, and utilities that are available

Acceptable migration downtime

The rate of change (transaction volume) on the source

The team’s proficiency with tools

The following table lists the most commonly used strategies for large database migrations into Amazon RDS for Oracle.

| Strategy | Full load | Change data capture (CDC) |
|---|---|---|
| Oracle Data Pump (without NETWORK_LINK) with Amazon S3 or Amazon EFS integration for full load and AWS DMS for CDC | Oracle Data Pump | AWS DMS |
| AWS DMS for full load and CDC | AWS DMS | AWS DMS |
| Oracle Data Pump with Snowball | Oracle Data Pump with Snowball | AWS DMS |
| Oracle transportable tablespaces | Oracle transportable tablespaces, with periodic refresh using incremental backup and restore | AWS DMS (optional) |

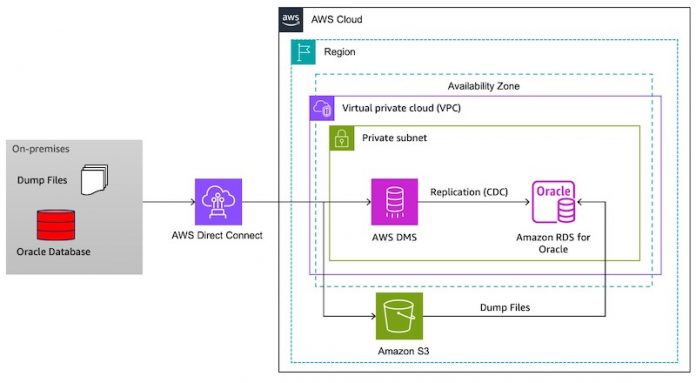

Oracle Data Pump with Amazon S3 integration for full load and AWS DMS for CDC (Recommended for database size up to 5TB)

The following diagram illustrates the architecture for using Oracle Data Pump with Amazon S3 integration for full load and AWS DMS for CDC.

The high-level steps are as follows:

Identify the schemas to be migrated.

Export the schemas from the source database with Data Pump, using the FLASHBACK_SCN or FLASHBACK_TIME parameter for a consistent export.

Copy the dump file to an S3 bucket.

Integrate Amazon S3 with the target RDS for Oracle instance.

Download the dump file from the S3 bucket to the target RDS for Oracle instance.

Perform a Data Pump import into the target RDS for Oracle instance.

Start an AWS DMS replication instance.

Configure source and target endpoints.

Configure an AWS DMS CDC task using SCN or timestamp as the starting point to replicate ongoing changes on the source database.

Switch applications over to the target at your convenience.
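The hand-off between the Data Pump full load and AWS DMS works by starting CDC at the same SCN that was used for FLASHBACK_SCN. You can capture that SCN on the source with `SELECT CURRENT_SCN FROM V$DATABASE` before the export. The following Python sketch only builds the keyword arguments for the boto3 DMS `create_replication_task` call and does not contact AWS. The task name, ARNs, and schema names are placeholders. The sketch assumes an Oracle source, where AWS DMS accepts an SCN as the native CDC start position.

```python
import json


def cdc_task_request(task_id: str, source_arn: str, target_arn: str,
                     instance_arn: str, schemas: list[str],
                     start_scn: int) -> dict:
    """Keyword arguments for a CDC-only AWS DMS task that resumes where the
    consistent Data Pump export (FLASHBACK_SCN=start_scn) left off."""
    rules = [
        {
            "rule-type": "selection",
            "rule-id": str(i),
            "rule-name": f"include-{schema.lower()}",
            "object-locator": {"schema-name": schema, "table-name": "%"},
            "rule-action": "include",
        }
        for i, schema in enumerate(schemas, start=1)
    ]
    return {
        "ReplicationTaskIdentifier": task_id,
        "SourceEndpointArn": source_arn,
        "TargetEndpointArn": target_arn,
        "ReplicationInstanceArn": instance_arn,
        "MigrationType": "cdc",                  # changes only; full load done by Data Pump
        "TableMappings": json.dumps({"rules": rules}),
        "CdcStartPosition": str(start_scn),      # Oracle native start point: an SCN
    }
```

You would pass the result to `boto3.client("dms").create_replication_task(**request)` once the endpoints and replication instance exist.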

Follow the Data Pump and AWS DMS best practices described later in this post. For detailed information on how to complete these steps, refer to Migrating Oracle databases with near-zero downtime using AWS DMS. To learn how to reclaim the storage occupied by dump files by creating a replica in advance, refer to Load and unload data without permanently increasing storage space using Amazon RDS for Oracle read replicas.

This approach has the following benefits:

With Amazon S3 integration, you can transfer your Oracle Data Pump files directly to your RDS for Oracle instance. After you export your data from your source DB instance, you can upload your Data Pump files to your S3 bucket, download the files from your S3 bucket to the RDS for Oracle instance, and perform the import. You can also use this integration feature to transfer your Data Pump files from your RDS for Oracle DB instance to your on-premises database server.

If the dump exceeds 5 TB, run the Oracle Data Pump export with the PARALLEL parameter and a DUMPFILE template that contains the %U substitution variable. Optionally, add FILESIZE to cap the size of each file. This spreads the data across multiple dump files so that no single file exceeds the 5 TB maximum size of an Amazon S3 object.
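The following Python sketch renders a Data Pump export parameter file for this case and estimates the minimum number of dump files. The schema names, SCN, file prefix, DATA_PUMP_DIR directory, and the 1,000 GB per-file cap are illustrative assumptions. The cap only needs to stay well below the 5 TB S3 object limit.

```python
import math

MAX_U_FILES = 99  # the %U substitution variable expands to 01 through 99


def export_parfile(schemas: list[str], flashback_scn: int, est_dump_gb: float,
                   max_file_gb: int = 1000, parallel: int = 8,
                   prefix: str = "exp") -> tuple[int, str]:
    """Return (minimum number of dump files, parameter-file text)."""
    if not 0 < max_file_gb < 5000:
        raise ValueError("keep each dump file well below the 5 TB S3 object limit")
    # Minimum count from FILESIZE alone; parallel workers may add more files.
    files_needed = math.ceil(est_dump_gb / max_file_gb)
    if files_needed > MAX_U_FILES:
        raise ValueError("raise max_file_gb or split the export by schema")
    lines = [
        f"SCHEMAS={','.join(schemas)}",
        "DIRECTORY=DATA_PUMP_DIR",
        # %U lets Data Pump open a new file whenever FILESIZE is reached
        # and gives each parallel worker its own file.
        f"DUMPFILE={prefix}_%U.dmp",
        f"FILESIZE={max_file_gb}GB",
        f"PARALLEL={parallel}",
        # Consistent export as of this SCN; start AWS DMS CDC from the same SCN.
        f"FLASHBACK_SCN={flashback_scn}",
        f"LOGFILE={prefix}.log",
    ]
    return files_needed, "\n".join(lines) + "\n"
```

Running `expdp` with this parameter file produces files such as `mig_01.dmp` and `mig_02.dmp`, each of which is uploaded to S3 as a separate object.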

Oracle Data Pump with Amazon EFS integration for full load and AWS DMS for CDC

The following diagram illustrates the architecture for using Oracle Data Pump with Amazon Elastic File System (Amazon EFS) integration for full load and AWS DMS for CDC.

The high-level steps are as follows:

Identify the schemas to be migrated.

Export the schemas from the source database with Data Pump, using the FLASHBACK_SCN or FLASHBACK_TIME parameter for a consistent export.

Integrate Amazon EFS with the target RDS for Oracle instance.

Perform a Data Pump import into the target RDS for Oracle instance.

Start an AWS DMS replication instance.

Configure source and target endpoints.

Configure an AWS DMS CDC task using the SCN or timestamp as a starting point to replicate ongoing changes on the source database.

Switch applications over to the target at your convenience.

Follow the Data Pump and AWS DMS best practices described later in this post. For detailed information on how to complete these steps, refer to Integrate Amazon RDS for Oracle with Amazon EFS.

After Amazon RDS for Oracle has been integrated with Amazon EFS, you can transfer files between your RDS for Oracle DB instance and an EFS file system. This integration provides the following benefits:

You can export Oracle Data Pump files from your RDS for Oracle DB instance to Amazon EFS and import them from Amazon EFS. You don't need to copy the dump files onto Amazon RDS for Oracle storage, because these operations are performed directly on the EFS file system.

This option migrates data faster than migration over a database link. You can use the EFS file system mounted on RDS for Oracle DB instances as a landing zone for the various Oracle files required for migration or data transfer.

Using it as a landing zone helps save the allocation of extra storage space on the RDS instance to hold the files.

The EFS file systems can automatically scale from gigabytes to petabytes of data without needing to provision storage.

There are no minimum fees or setup costs, and you pay only for what you use.
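Before Data Pump can read from or write to Amazon EFS, the EFS path needs an Oracle directory object on the RDS for Oracle instance. The following Python sketch renders the PL/SQL call to `rdsadmin.rdsadmin_util.create_directory_efs`. The directory name, file system ID, and subdirectory are placeholders. The sketch assumes the `/rdsefs-<file system ID>/...` path format described in the Amazon EFS integration documentation, so confirm it for your engine version.

```python
import re


def efs_directory_plsql(directory_name: str, fs_id: str, subdir: str) -> str:
    """Render PL/SQL that creates an Oracle directory on the mounted EFS path."""
    # EFS file system IDs look like fs-12345678 or fs-1234567890abcdef0.
    if not re.fullmatch(r"fs-[0-9a-f]{8}(?:[0-9a-f]{9})?", fs_id):
        raise ValueError(f"unexpected EFS file system ID: {fs_id}")
    # Keep the directory name a plain, unquoted Oracle identifier.
    if not re.fullmatch(r"[A-Z][A-Z0-9_]{0,127}", directory_name):
        raise ValueError(f"unexpected directory name: {directory_name}")
    path = f"/rdsefs-{fs_id}/{subdir.strip('/')}"
    return (
        "BEGIN\n"
        "  rdsadmin.rdsadmin_util.create_directory_efs(\n"
        f"    p_directory_name => '{directory_name}',\n"
        f"    p_path_on_efs    => '{path}');\n"
        "END;\n"
        "/\n"
    )
```

Data Pump jobs can then reference `DIRECTORY=DATA_PUMP_DIR_EFS` (or whatever name you chose), so dump files stay on EFS instead of the instance's storage.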

AWS DMS for full load and CDC

The following diagram shows the architecture for using AWS DMS for full load and CDC.

The high-level steps are as follows:

Prepare the data on the source and target.

Identify the schemas and tables to be migrated in source database.

Enable sufficient capacity for archived logs and set archived log retention appropriately.

Enable supplemental logging.

Complete all the prerequisites as mentioned in Using an Oracle database as a source for AWS DMS.

Complete all the prerequisites as mentioned in Using an Oracle database as a target for AWS Database Migration Service.

Additionally, review the limitations before starting the migration for the source and target Oracle databases.
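Supplemental logging is one of the source prerequisites that is easy to script. The following Python sketch generates the statements commonly used for an Oracle AWS DMS source. It adds minimal supplemental logging at the database level, primary-key logging for tables that have a primary key, and all-column logging for tables without one. The table names are hypothetical, and a DBA should review the output before running it.

```python
def supplemental_logging_sql(tables_with_pk: list[str],
                             tables_without_pk: list[str]) -> list[str]:
    """Return the supplemental logging statements to run on the source."""
    statements = [
        # Quick check: LOG_MODE should be ARCHIVELOG, and
        # SUPPLEMENTAL_LOG_DATA_MIN should be YES after the next statement.
        "SELECT log_mode, supplemental_log_data_min FROM v$database",
        "ALTER DATABASE ADD SUPPLEMENTAL LOG DATA",
    ]
    for table in tables_with_pk:
        statements.append(
            f"ALTER TABLE {table} ADD SUPPLEMENTAL LOG DATA (PRIMARY KEY) COLUMNS")
    for table in tables_without_pk:
        # Without a primary key, log every column so changed rows can be matched.
        statements.append(
            f"ALTER TABLE {table} ADD SUPPLEMENTAL LOG DATA (ALL) COLUMNS")
    return statements
```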

Now you can set up AWS DMS replication.

Create a replication instance.

Create a source endpoint and target endpoint.

Configure an AWS DMS full load and CDC task to migrate your desired tables and schemas.
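A task's table mapping controls which tables it migrates. The following Python sketch builds a mapping that includes whole schemas and excludes individual tables. It serializes the mapping the way the TableMappings task parameter expects, together with the full-load-and-cdc migration type. The schema and table names are hypothetical.

```python
import json


def full_load_and_cdc_mapping(schemas: list[str],
                              excluded: list[tuple[str, str]]) -> dict:
    """Task parameters that include whole schemas and exclude some tables."""
    rules = []
    for schema in schemas:
        rules.append({
            "rule-type": "selection",
            "rule-id": str(len(rules) + 1),
            "rule-name": f"include-{schema.lower()}",
            "object-locator": {"schema-name": schema, "table-name": "%"},
            "rule-action": "include",
        })
    for schema, table in excluded:
        rules.append({
            "rule-type": "selection",
            "rule-id": str(len(rules) + 1),
            "rule-name": f"exclude-{schema.lower()}-{table.lower()}",
            "object-locator": {"schema-name": schema, "table-name": table},
            "rule-action": "exclude",
        })
    return {"MigrationType": "full-load-and-cdc",
            "TableMappings": json.dumps({"rules": rules})}
```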

Follow the best practices for AWS DMS configuration and setup mentioned later in this post. For more information about migration, refer to Achieve a high-performance migration to Amazon RDS for Oracle from on-premises Oracle with AWS DMS.

With this approach, you can migrate and replicate your critical databases seamlessly to Amazon RDS by using AWS DMS and its CDC feature with minimal to no downtime.

Oracle Data Pump with Snowball for full load and AWS DMS for CDC

The following diagram illustrates the architecture for using Oracle Data Pump with Snowball for full load and AWS DMS for CDC.

The high-level steps are as follows:

On the source, use Data Pump to export the dump file.

Load the dump file to Snowball.

Ship the Snowball device to AWS, where the file is imported into an S3 bucket.

Download the dump file from the S3 bucket to the RDS for Oracle instance.

In the target, perform a Data Pump import.

Use AWS DMS for CDC.

For more information, refer to New AWS DMS and AWS Snowball Integration Enables Mass Database Migrations and Migrations of Large Databases.

With Snowball, you can migrate petabytes of data faster, especially when network bandwidth is limited. To learn more, refer to Migrating large data stores using AWS Database Migration Service and AWS Snowball Edge.

Migrate using Oracle transportable tablespaces

You can use the Oracle transportable tablespaces feature to copy a set of tablespaces from an on-premises Oracle database to an RDS for Oracle DB instance. The high-level steps are as follows:

Set up source

Take a full backup of the tablespaces

Transfer the transportable tablespace (TTS) set and restore it in RDS

Take incremental backups and restore them in RDS

Final incremental backup and metadata export

Final incremental restore and metadata import

Validate and clean up

For more details, refer to the documentation and the blog post Migrating using Oracle transportable tablespaces.

This approach offers the following benefits:

Downtime is lower than most other Oracle migration solutions.

Because the transportable tablespace feature copies only physical files, it avoids the data integrity errors and logical corruption that can occur in logical migration.

No additional license is required.

You can migrate a set of tablespaces across different platforms and endianness types, for example, from an Oracle Solaris platform to Linux. However, transporting tablespaces to and from Windows servers isn’t supported.

Best practices for using Oracle Data Pump for full load

When exporting data from your source database, consider the following best practices:

Check the database size to see if you can migrate it schema by schema, instead of migrating the full database. Migrating schemas individually is less error prone and more manageable than migrating them all at once.

Export data in parallel by using the Oracle Data Pump PARALLEL parameter, and consider compression, for better performance.

Check if the tables have large objects (LOBs). If you have large tables with LOBs, we recommend that you export those tables separately.

During the export process, avoid running long database transactions on your source database to reduce the risk of read-consistency errors such as ORA-01555 (snapshot too old).

If you are using replication tools such as AWS DMS, Oracle GoldenGate, or Quest SharePlex, make sure that you have enough space on your on-premises server to hold archive logs for 24–72 hours, depending on how long the migration takes.

Consider the following when importing data to your target database:

If you're migrating a very large database, we recommend provisioning a larger RDS instance class for the duration of the migration (for example, r5b instead of r5) for faster data loads. After the migration is complete, you can change the DB instance to the right-sized instance class.

Don't import in full mode, because it might conflict with the Amazon RDS for Oracle data dictionary.

Increase the size of redo log files, undo tablespaces, and temporary tablespaces to improve performance during migration, if needed.

Disable the Multi-AZ option during the import process and enable it after migration is complete.

Disable the generation of archive logs by setting the backup retention to zero to achieve faster data load.

Prepare the target database by creating tablespaces, users, roles, profiles, and schemas in advance.

If you have large tables with LOBs, import each LOB table separately.

Do not import dump files that were created using the Oracle Data Pump export parameters TRANSPORT_TABLESPACES, TRANSPORTABLE, or TRANSPORT_FULL_CHECK (not supported).
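Several of these import-time settings, and their reversal after migration, correspond to parameters of the Amazon RDS ModifyDBInstance API. The following Python sketch builds keyword arguments for boto3's `modify_db_instance` for the two phases and does not contact AWS. The instance identifier, instance classes, and seven-day retention are hypothetical values.

```python
def import_phase(db_id: str, migration_class: str) -> dict:
    """Settings for the bulk import: larger class, no backups, Single-AZ."""
    return {
        "DBInstanceIdentifier": db_id,
        "DBInstanceClass": migration_class,  # for example, a larger r5b class
        "BackupRetentionPeriod": 0,          # 0 turns off automated backups
        "MultiAZ": False,
        "ApplyImmediately": True,
    }


def post_migration_phase(db_id: str, steady_class: str,
                         retention_days: int = 7) -> dict:
    """Settings after migration: right-sized class, backups, Multi-AZ."""
    if not 1 <= retention_days <= 35:
        raise ValueError("automated backup retention must be 1-35 days")
    return {
        "DBInstanceIdentifier": db_id,
        "DBInstanceClass": steady_class,
        "BackupRetentionPeriod": retention_days,
        "MultiAZ": True,
        "ApplyImmediately": True,
    }
```

Each dictionary would be passed to `boto3.client("rds").modify_db_instance(**kwargs)`. Changing the backup retention period between 0 and a nonzero value can cause a brief outage, so plan these changes accordingly.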

Best practices for using AWS DMS

In this section, we discuss best practices for using AWS DMS during the different stages of migration. Use the relevant strategy discussed along with best practices depending on your RTO and RPO requirements for a smooth and seamless migration of your Oracle database into your RDS for Oracle instance.

Pre-migration

Keep in mind the following best practices for your source database:

Run a pre-migration assessment:

Evaluate all tables that do not have primary key constraints.

Evaluate support for data type migrations.

Evaluate tables with LOB columns defined as NOT NULL on target.

Ensure all prerequisites are met:

The source database runs in ARCHIVELOG mode.

Archived log retention is adequately set.

Supplemental logging is enabled at both the database and table level.

Create the dmsuser on the source and target with appropriate privileges:

Grant DMSUSER an unlimited quota on every tablespace you are migrating data into, and grant the same to the schemas that own objects in those tablespaces.

Consider using an Oracle standby as a source for AWS DMS.

Understand the impact of long-running open transactions on the source database during the start of the AWS DMS task.

Consider using AWS DMS Binary Reader instead of Oracle LogMiner. If the hourly archived log generation rate on the source database exceeds 30 GB per hour, LogMiner might have some I/O or CPU impact on the Oracle source database. If multiple tasks replicate from the same source, using Oracle LogMiner is less efficient.

Evaluate the source database and develop an appropriate migration strategy.

If the source Oracle database uses ASM, use useLogMinerReader=N;useBfile=Y;asm_user=asm_username;asm_server=RAC_server_ip_address:port_number/+ASM;parallelASMReadThreads=6;readAheadBlocks=150000;. The parallelASMReadThreads and readAheadBlocks settings let AWS DMS read more redo data from ASM storage in parallel.

Understand the limitations for using Oracle as a source and develop workarounds for the specific limitations.
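Extra connection attributes form a single semicolon-separated string, which is easy to mistype. The following Python sketch assembles the ASM Binary Reader attributes listed above from a dictionary and rejects values that would break the format. The asm_user and asm_server values are the same placeholders used in the text and must be replaced with your own.

```python
def build_eca(attributes: dict[str, object]) -> str:
    """Join attributes into 'key=value;' pairs, rejecting embedded separators."""
    parts = []
    for key, value in attributes.items():
        text = str(value)
        if ";" in key or "=" in key or ";" in text:
            raise ValueError(f"attribute {key!r} contains a separator")
        parts.append(f"{key}={text};")
    return "".join(parts)


# Binary Reader against ASM, using the placeholder values from the text.
ASM_BINARY_READER = {
    "useLogMinerReader": "N",
    "useBfile": "Y",
    "asm_user": "asm_username",
    "asm_server": "RAC_server_ip_address:port_number/+ASM",
    "parallelASMReadThreads": 6,
    "readAheadBlocks": 150000,
}
```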

Note the following best practices for the target:

Create the replication instance in the same Availability Zone as the target database instance.

Provision your RDS instance with sufficient IOPS.

Reduce contention on your target database:

Disable backups.

Run in a single Availability Zone.

The target database character set should be the same as, or a superset of, the source character set. If the source has a single-byte character set, avoid picking a multi-byte character set on the target.

Understand the migration time and limitations for using an Oracle target with AWS DMS.

Migration

Keep in mind the following best practices for your migration:

Pre-create the schema and objects using Oracle Data Pump in the target database.

Provision a replication instance with adequate storage, memory, and CPU.

Use a full load and CDC task.

Configure parallelism with the following methods:

Load multiple tables in parallel. By default, AWS DMS loads eight tables in parallel (MaxFullLoadSubTasks). Depending on resource availability on the source, target, and replication instance, you can increase this value up to a maximum of 49.

Use parallel load for large tables:

If the tables are partitioned or sub-partitioned on source, you could use partitions-auto or subpartitions-auto to load these tables onto the target using multiple threads (the number of parallel threads is still capped with the MaxFullLoadSubTasks setting).

If the tables are not partitioned on the source, you can specify column value ranges so that AWS DMS splits each table logically and migrates the ranges in parallel.

When you use parallel load for a single table, set the extra connection attribute or endpoint setting DirectPathParallelLoad to true on your target RDS endpoint, and disable indexes and constraints on the target first.

You can also set DirectPathNoLog to true, which helps increase the commit rate on the Oracle target database by writing directly to tables and without writing to redo logs.

Use either the primary key column or an indexed column to split the table into a range to avoid multiple full table scans on the source database and optimize performance. You can also consider splitting a table into multiple tasks using column filters.
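For an unpartitioned table, the ranges are defined in a table-settings rule. The following Python sketch computes evenly spaced boundaries on an integer primary key and builds the corresponding table mapping. The schema, table, and key column (SALES, ORDERS, ORDER_ID) and the key range are hypothetical. Evenly spaced boundaries only balance the load if key values are roughly uniform.

```python
import json


def range_boundaries(min_key: int, max_key: int,
                     segments: int) -> list[list[str]]:
    """Return segments - 1 boundaries; rows above the last boundary form
    one more segment, giving `segments` ranges in total."""
    if segments < 2 or max_key <= min_key:
        raise ValueError("need at least two segments and a non-empty key range")
    span = max_key - min_key + 1
    # Integer arithmetic keeps the boundaries deterministic.
    return [[str(min_key + span * i // segments)] for i in range(1, segments)]


def parallel_load_mapping(schema: str, table: str, column: str,
                          boundaries: list[list[str]]) -> str:
    """Table mapping JSON that selects one table and loads it in key ranges."""
    locator = {"schema-name": schema, "table-name": table}
    rules = [
        {"rule-type": "selection", "rule-id": "1", "rule-name": "select-table",
         "object-locator": locator, "rule-action": "include"},
        {"rule-type": "table-settings", "rule-id": "2",
         "rule-name": "parallel-ranges", "object-locator": locator,
         "parallel-load": {"type": "ranges", "columns": [column],
                           "boundaries": boundaries}},
    ]
    return json.dumps({"rules": rules}, indent=2)
```

For example, `parallel_load_mapping("SALES", "ORDERS", "ORDER_ID", range_boundaries(1, 1000, 4))` yields the boundaries 251, 501, and 751. For partitioned tables, the parallel-load object would instead be `{"type": "partitions-auto"}` or `{"type": "subpartitions-auto"}`.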

When working with secondary objects, consider the following during a full load:

AWS DMS loads tables in alphabetical order, so referential integrity constraints can cause table loads to fail. Therefore, disable these constraints during the full load.

Indexes add maintenance overhead during a large data load and can make the migration run longer. Therefore, we recommend rebuilding indexes after the full load but before the cached changes are applied (stop the task by setting the task setting StopTaskCachedChangesNotApplied to true). Cached changes are DML operations, so indexes need to be in place to avoid full table scans and keep these operations fast.

Alternatively, you can defer the creation of indexes and referential constraints to after the full load.

Referential constraints can cause the task to run into an error state if enabled during the cached changes application phase. Therefore, you should defer their creation and enablement to after the application of cached changes (you can stop the task after the application of cached changes by setting StopTaskCachedChangesApplied to true).

Insert, update, and delete triggers can cause errors if the tables are already being replicated or can cause locking issues when enabled. Therefore, the recommendation is to enable triggers only during the cutover.

Adjust the CommitRate (10,000–50,000) for full load.
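The full-load settings discussed in this section belong to the FullLoadSettings section of the task settings JSON. The following Python sketch builds that section with basic range checks. TargetTablePrepMode DO_NOTHING is an assumption that fits pre-creating the schema with Data Pump. The defaults are illustrative, not tuned values.

```python
import json


def full_load_settings(max_subtasks: int = 8, commit_rate: int = 10_000,
                       stop_before_cached_changes: bool = True,
                       stop_after_cached_changes: bool = True) -> str:
    """Return task settings JSON containing only a FullLoadSettings section."""
    if not 1 <= max_subtasks <= 49:
        raise ValueError("MaxFullLoadSubTasks must be between 1 and 49")
    if not 10_000 <= commit_rate <= 50_000:
        raise ValueError("CommitRate outside the 10,000-50,000 range suggested here")
    return json.dumps({"FullLoadSettings": {
        # Tables were pre-created on the target, so DMS must not drop or truncate them.
        "TargetTablePrepMode": "DO_NOTHING",
        "MaxFullLoadSubTasks": max_subtasks,
        "CommitRate": commit_rate,
        # Pause to build indexes before cached changes are applied...
        "StopTaskCachedChangesNotApplied": stop_before_cached_changes,
        # ...and again to enable foreign keys after they have been applied.
        "StopTaskCachedChangesApplied": stop_after_cached_changes,
    }})
```

The resulting string is what a task's ReplicationTaskSettings parameter expects.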

When working with LOBs, consider the following depending on the mode:

Limited LOB mode – If the LOB data in your database isn't very large (a few KB), consider limited LOB mode. First identify the largest LOB size in the tables being migrated, and then use that value as the Max LOB size parameter (default 32 KB). Limited LOB mode is the fastest way to migrate LOB data to the target using AWS DMS, because AWS DMS migrates the LOB in a single step along with the rest of the data. However, if you don't specify the right maximum LOB size, LOB columns larger than that size can be truncated.

Full LOB mode – Full LOB mode migrates all of the LOB data, regardless of the size. However, it can have a significant impact on performance of the migration. When choosing full LOB mode, you need to ensure that the LOB columns are all NULLABLE on the target Oracle database. This is because AWS DMS migrates LOB data in two phases: initially, all columns are migrated with the LOB data as NULL, then AWS DMS updates the LOB columns by performing a lookup from the source table using the primary key. This is also the reason AWS DMS requires tables to have a primary key to migrate LOB data. The advantage of this method is that it migrates all the LOB data, but you may experience an impact on migration performance.

Inline LOB mode – Inline LOB mode combines the advantages of full LOB mode and limited LOB mode. With inline LOB mode, AWS DMS migrates LOB data less than the inline LOB maximum size value inline, as with limited LOB mode, and treats LOB data larger than the inline LOB maximum size, as with full LOB mode. To best benefit from using the inline LOB mode, ensure most of the LOB data is smaller than the inline LOB maximum size value.
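The three LOB modes correspond to a handful of settings in the TargetMetadata section of the task settings. The following Python sketch maps a mode name to those settings. For limited mode, it converts the largest LOB size you measured on the source into the kilobyte value that LobMaxSize expects. You might measure it with `MAX(DBMS_LOB.GETLENGTH(column))`, which returns characters for CLOBs and bytes for BLOBs, so allow headroom for multi-byte data. The inline threshold is an assumed input.

```python
import math


def lob_target_metadata(mode: str, largest_lob_bytes: int = 0,
                        inline_max_kb: int = 0) -> dict:
    """Return TargetMetadata LOB settings for 'limited', 'full', or 'inline'."""
    if mode == "limited":
        if largest_lob_bytes <= 0:
            raise ValueError("measure the largest LOB on the source first")
        return {
            "SupportLobs": True,
            "FullLobMode": False,
            "LimitedSizeLobMode": True,
            # Round up so the largest LOB is not truncated.
            "LobMaxSize": math.ceil(largest_lob_bytes / 1024),
        }
    if mode == "full":
        return {"SupportLobs": True, "FullLobMode": True,
                "LimitedSizeLobMode": False}
    if mode == "inline":
        if inline_max_kb <= 0:
            raise ValueError("inline mode needs an inline size threshold")
        # Inline LOB mode is full LOB mode plus an inline size threshold.
        return {"SupportLobs": True, "FullLobMode": True,
                "LimitedSizeLobMode": False, "InlineLobMaxSize": inline_max_kb}
    raise ValueError(f"unknown LOB mode: {mode}")
```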

Consider using batch apply for improving CDC performance, especially if you have the following:

A high number of transactions captured from the source, which is causing target latency.

No requirement to keep strict referential integrity on the target (disabled foreign keys).

Primary keys on the table.

Consider the following when monitoring your AWS DMS tasks:

AWS DMS uses Amazon Simple Notification Service (Amazon SNS) to provide notifications when an AWS DMS event occurs, for example the creation or deletion of a replication instance or replication task.

You can monitor the progress of your task by checking the task status and by monitoring the task’s control table. The task status indicates the condition of an AWS DMS task and its associated resources.

You can set up alarms for Amazon CloudWatch metrics for CPU utilization, freeable memory, swap usage, and source and target latency.

You can also set up alerts on custom AWS DMS errors from the CloudWatch logs using AWS Lambda functions.

You should only increase your logging level when you are actively troubleshooting an issue. Otherwise, the logs can fill up the storage on the replication instance.

Monitor network usage using CloudWatch metrics NetworkReceiveThroughput and NetworkTransmitThroughput.

Avoid using your RDS database for any other load during migration.

Align your resources and create regular checkpoints to track progress.
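To make the latency monitoring concrete, the following Python sketch builds keyword arguments for CloudWatch's `put_metric_alarm` on the AWS/DMS CDCLatencyTarget or CDCLatencySource metric and does not contact AWS. The identifiers, SNS topic ARN, 10-minute threshold, and 5-minute period are assumptions. In particular, check in the CloudWatch console which value your task's ReplicationTaskIdentifier dimension uses.

```python
from typing import Optional


def latency_alarm(instance_id: str, task_resource_id: str,
                  metric: str = "CDCLatencyTarget",
                  threshold_seconds: int = 600,
                  sns_topic_arn: Optional[str] = None) -> dict:
    """Keyword arguments for an alarm on AWS DMS CDC latency (seconds)."""
    if metric not in ("CDCLatencySource", "CDCLatencyTarget"):
        raise ValueError(f"unsupported metric: {metric}")
    request = {
        "AlarmName": f"dms-{task_resource_id}-{metric}",
        "Namespace": "AWS/DMS",
        "MetricName": metric,
        "Dimensions": [
            {"Name": "ReplicationInstanceIdentifier", "Value": instance_id},
            # Assumed: the task's resource ID as shown in CloudWatch.
            {"Name": "ReplicationTaskIdentifier", "Value": task_resource_id},
        ],
        "Statistic": "Maximum",
        "Period": 300,                 # 5-minute evaluation window
        "EvaluationPeriods": 3,        # alarm after 15 minutes above threshold
        "Threshold": float(threshold_seconds),
        "ComparisonOperator": "GreaterThanThreshold",
    }
    if sns_topic_arn:
        request["AlarmActions"] = [sns_topic_arn]
    return request
```

The result would be passed to `boto3.client("cloudwatch").put_metric_alarm(**request)`.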

Data validation

Create AWS DMS validation tasks to validate data. AWS DMS validates data by comparing it row by row, which can be resource intensive, so keep your validation tasks separate from your migration tasks. You can create validation-only tasks that are either full load only (recommended for static data, and much faster) or CDC only. CDC-only validation is much slower than full-load-only validation, but it detects which rows have changed and need to be revalidated based on the change log on the source.
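A validation-only task is an ordinary task with validation turned on and data movement turned off. The following Python sketch returns the MigrationType and ReplicationTaskSettings for either kind. Setting TargetTablePrepMode to DO_NOTHING is our assumption for keeping the task from touching target tables, so review it against the AWS DMS data validation documentation.

```python
import json


def validation_only_task(kind: str) -> dict:
    """kind: 'full-load' for static data, 'cdc' for ongoing revalidation."""
    if kind not in ("full-load", "cdc"):
        raise ValueError("kind must be 'full-load' or 'cdc'")
    settings = {
        # Validate only; do not migrate any data.
        "ValidationSettings": {"EnableValidation": True, "ValidationOnly": True},
        # Assumed: leave existing target tables untouched.
        "FullLoadSettings": {"TargetTablePrepMode": "DO_NOTHING"},
    }
    return {"MigrationType": kind,
            "ReplicationTaskSettings": json.dumps(settings)}
```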

Post-migration

Complete the following post-migration steps:

Turn on backups.

Enable high availability:

Turn on Multi-AZ.

Create a read replica for your reader farm and a mounted replica for disaster recovery only.

Configure disaster recovery with a cross-Region replica and cross-Region automated backups.

Check network and user access.

Considerations for data center migrations

For data center migrations, you might have hundreds of databases to migrate, so the considerations for each strategy are different.

The first consideration is a workload qualification framework. Analyze your Oracle workloads against the Amazon RDS landscape to determine which database engines can support them. Depending on your goals, you might migrate some of the databases to open-source database engines and save on licensing costs. After you sort the databases by target, consider consolidating databases and schemas. Consolidation reduces the number of databases to migrate and the volume of data to be moved.

You may have a lot of old data in your source databases that isn’t used by the application. Such data can be archived and deleted from the source database, which reduces the data volume for the migration.

When a large number of databases and applications are involved, many of them usually work together and must be migrated to AWS together. Design the migration phases around these groups and create the migration plan accordingly.

Depending on the volume of data to move from on premises to AWS, you can use Snowball devices to physically copy the data and then ship them to AWS, where the data is loaded into the AWS infrastructure.

With these strategies, you can quickly migrate a large number of Oracle databases during a data center migration. For more details, also refer to Migrate to Amazon RDS for Oracle with cost optimization.

Conclusion

In this post, we examined the advantages of migrating Oracle workloads to Amazon RDS and explored a range of migration methods, tools, and strategies. Furthermore, we delved into the best practices associated with different migration approaches. By employing these strategies in conjunction with recommended practices, you can ensure a seamless and effortless migration experience.

If you have questions or suggestions, leave a comment.

About the authors

Vishal Srivastava is a Senior Partner Solutions Architect specializing in databases at AWS. In his role, Vishal works with AWS Partners to provide guidance and technical assistance on database projects, helping them improve the value of their solutions when using AWS.

Javeed Mohammed is a Database Specialist Solutions Architect with Amazon Web Services. He works with the Amazon RDS team, focusing on commercial database engines like Oracle. He enjoys working with customers to help design, deploy, and optimize relational database workloads on the AWS Cloud.

Alex Anto is a Data Migration Specialist Solutions Architect with the Amazon Database Migration Accelerator team at Amazon Web Services. He works as an Amazon DMA Advisor to help AWS customers migrate their on-premises data to AWS Cloud database solutions.
