NextGen Healthcare, Inc., a leading provider of innovative, cloud-based healthcare technology solutions, is on a mission to improve the lives of those who practice medicine and their patients. Our NextGen Population Health solution provides actionable insights directly to care teams by aggregating and transforming multi-source data.

Built as a cloud-native product, NextGen Population Health has coevolved with AWS over its lifetime. As AWS releases new security-related services and enhancements to existing services, we strive to incorporate them into our platform. This practice has led to continuous, incremental enhancements to our security posture, benefiting our clients, their care teams, and ultimately the patients they serve.

Security is a key pillar of the AWS Well-Architected Framework and part of the shared responsibility between AWS and the customer. AWS provides a multitude of options for securing databases, but the customer is responsible for determining and implementing the ideal configuration for their specific workload.

In this post, we outline steps to secure Amazon Aurora PostgreSQL-Compatible Edition clusters holding sensitive, HIPAA-compliant workloads where a high level of security is required. You can also use this pattern to secure Amazon Aurora MySQL-Compatible Edition clusters. The HIPAA Security Rule requires covered entities to maintain reasonable and appropriate administrative, technical, and physical safeguards for protecting electronic Protected Health Information (ePHI). One of the ways that we ensure the confidentiality of ePHI is through an internal security control requiring all data to be encrypted both at rest and in transit. A caveat: the full list of security steps you need depends on your workload and business requirements.

We will also provide methods on how to monitor and maintain best practices in security through the incorporation of preventative, detective, and responsive controls.

Prerequisites

This post assumes a working knowledge of the following:

AWS CloudFormation

Python programming along with the Boto3 library to interact with AWS

Aurora PostgreSQL and Aurora MySQL

CI/CD concepts

Support for TLS 1.3 with zero-downtime patching (ZDP) was released in Aurora PostgreSQL versions 15.3, 14.8, 13.11, 12.15, and 11.20. Also, if OpenJDK is used with TLS 1.3, refer to this fix.

Preventative controls

Encryption at rest

Encryption at rest is designed to protect data on physical and/or virtual storage devices through the use of encryption. This helps mitigate unauthorized access or theft in order to safeguard ePHI. Aurora supports encryption at rest and uses industry standard AES-256 encryption. To enable encryption of a database cluster, declare the StorageEncrypted parameter as true in the CloudFormation template. The following shows a sample CloudFormation template for configuring this parameter:
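As a minimal sketch of such a template (not the authors' original), the following Python builds a CloudFormation template as a dictionary and serializes it to JSON. The logical ID `DatabaseCluster`, the `dbadmin` user name, and the managed master password setting are illustrative assumptions; only the `StorageEncrypted` setting comes from the text above.

```python
import json


def encrypted_cluster_template(logical_id="DatabaseCluster"):
    """Return a CloudFormation template (as a dict) for an encrypted Aurora cluster."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Resources": {
            logical_id: {
                "Type": "AWS::RDS::DBCluster",
                "Properties": {
                    "Engine": "aurora-postgresql",
                    # Hypothetical admin user; the password is delegated to
                    # AWS Secrets Manager instead of being placed in the template.
                    "MasterUsername": "dbadmin",
                    "ManageMasterUserPassword": True,
                    # The setting discussed above: encrypt storage at rest.
                    "StorageEncrypted": True,
                },
            }
        },
    }


if __name__ == "__main__":
    print(json.dumps(encrypted_cluster_template(), indent=2))
```

Because no KMS key is named in this sketch, the cluster would fall back to the default key for Amazon RDS; a production template would likely reference a customer managed key.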

Ensure the security group is open only to the applications and clients that need access. Do not open it to the world or to IP addresses and networks beyond your circle of trust.

When a DB cluster is encrypted, all DB instances, logs, backups, and snapshots are encrypted as well. To ensure databases can only be created with encryption, you can add an IAM policy that denies creation without encryption:
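One possible shape for such a policy is sketched below as a Python dictionary that can be serialized to JSON. It assumes the `rds:StorageEncrypted` condition key applies to the actions you list; the statement ID and the choice of `rds:CreateDBCluster` as the only action are illustrative, so check the service authorization reference before relying on it.

```python
import json


def deny_unencrypted_cluster_policy():
    """Return an IAM policy document that denies creating unencrypted DB clusters."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyUnencryptedClusters",  # hypothetical statement ID
                "Effect": "Deny",
                "Action": ["rds:CreateDBCluster"],
                "Resource": "*",
                # Deny the request when storage encryption is not requested.
                "Condition": {"Bool": {"rds:StorageEncrypted": "false"}},
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(deny_unencrypted_cluster_policy(), indent=2))
```

An explicit deny takes precedence over any allow, so attaching a policy like this to the roles that provision databases blocks unencrypted clusters regardless of other permissions.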

Encryption in transit

Encryption in transit is designed to protect data moving between devices and networks through the use of encryption. Aurora PostgreSQL and Aurora MySQL allow granular configuration of security settings via parameter groups. In the following sections, we take a closer look at the controls you can use to keep your data secure while it moves between devices and networks.

Force TLS

Using TLS (Transport Layer Security) ensures that data in transit between systems is encrypted. To force client connections to only connect through TLS, set the rds.force_ssl parameter to a value of 1 in the CloudFormation template for the DBClusterParameterGroup resource type. SSL (secure socket layer) is the precursor to TLS, and the two terms are often used interchangeably, which is why the rds.force_ssl parameter name is used.

This can be configured with the following CloudFormation code:
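A minimal sketch of that resource, expressed as a Python dictionary that mirrors the CloudFormation structure, might look like the following. The parameter group family `aurora-postgresql15` and the description are assumptions; substitute the family that matches your engine version.

```python
import json


def force_tls_parameter_group(family="aurora-postgresql15"):
    """Return a DBClusterParameterGroup resource that requires TLS connections."""
    return {
        "Type": "AWS::RDS::DBClusterParameterGroup",
        "Properties": {
            "Description": "Require TLS for all client connections",
            "Family": family,  # assumed family; match your engine version
            # A value of 1 rejects connections that do not use TLS.
            "Parameters": {"rds.force_ssl": "1"},
        },
    }


if __name__ == "__main__":
    print(json.dumps(force_tls_parameter_group(), indent=2))
```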

Before enabling this setting, you should examine the Aurora cluster logs using CloudWatch Logs Insights and determine if any plaintext connections are being made. If there are, you need to update your application to only make encrypted connections and incorporate tests to validate this. We discuss an example test later in this post.

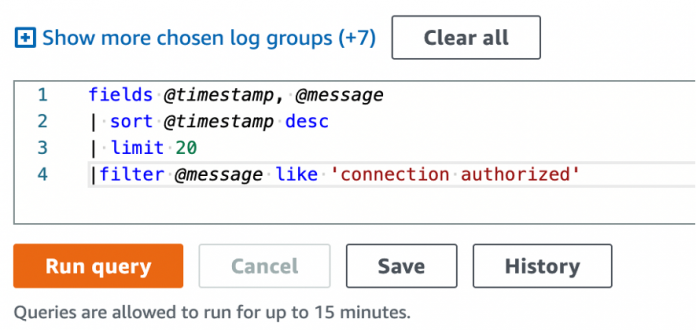

The following screenshot is an example CloudWatch Logs Insights query against the /aws/rds/cluster/database-1/postgresql log group used to determine the types of connections that our database is accepting.

The following screenshot displays the output of the query where unencrypted connections are made:

The following screenshot displays the output of the query where encrypted connections are made:
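The same classification can be sketched offline in Python against exported log lines. This assumes connection logging is enabled and that the log uses the usual PostgreSQL wording, where an encrypted session's "connection authorized" message includes an "SSL enabled (protocol=..., cipher=...)" suffix; the sample lines are invented for illustration.

```python
import re
from collections import Counter

AUTHORIZED = re.compile(r"connection authorized:")
SSL_DETAILS = re.compile(r"SSL enabled \(protocol=[^,]+, cipher=[^,)]+")


def classify(line):
    """Return 'encrypted', 'unencrypted', or None for unrelated log lines."""
    if not AUTHORIZED.search(line):
        return None
    return "encrypted" if SSL_DETAILS.search(line) else "unencrypted"


def summarize(lines):
    """Count encrypted and unencrypted connection authorizations."""
    return Counter(kind for kind in map(classify, lines) if kind)


# Invented sample lines in the assumed PostgreSQL log format.
SAMPLE_LINES = [
    "LOG:  connection received: host=10.0.0.5 port=51234",
    "LOG:  connection authorized: user=app database=appdb "
    "SSL enabled (protocol=TLSv1.3, cipher=TLS_AES_256_GCM_SHA384, bits=256)",
    "LOG:  connection authorized: user=legacy database=appdb",
]

if __name__ == "__main__":
    print(summarize(SAMPLE_LINES))
```

Any nonzero "unencrypted" count indicates a client that would break once rds.force_ssl is set to 1.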

TLS version 1.3

After encrypted connections have been forced, we can harden various TLS settings. Amazon Relational Database Service (Amazon RDS) for PostgreSQL and Aurora PostgreSQL support TLS versions 1.1, 1.2, and 1.3. However, TLS 1.1 is deprecated due to vulnerabilities and other technical reasons. Because we want the most robust protection, we use the highest version supported by Amazon RDS, which is TLS 1.3 for both Aurora PostgreSQL and Aurora MySQL. For Aurora PostgreSQL, set another cluster parameter named ssl_min_protocol_version to a value of TLSv1.3 in the CloudFormation template for the DBClusterParameterGroup resource type.
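As a sketch, the Parameters mapping of the cluster parameter group could be generated as shown below. The helper name and the validation list are assumptions; the list holds the values PostgreSQL itself accepts for ssl_min_protocol_version, and the values your engine version allows may be narrower.

```python
# Values PostgreSQL accepts for ssl_min_protocol_version; the set permitted
# by a given Aurora version may be narrower (assumption to verify).
VALID_MIN_VERSIONS = ("TLSv1", "TLSv1.1", "TLSv1.2", "TLSv1.3")


def tls_cluster_parameters(min_version="TLSv1.3"):
    """Return the Parameters mapping for a DBClusterParameterGroup."""
    if min_version not in VALID_MIN_VERSIONS:
        raise ValueError(f"unsupported TLS version: {min_version}")
    return {
        "rds.force_ssl": "1",  # reject plaintext connections
        "ssl_min_protocol_version": min_version,  # reject older TLS versions
    }


if __name__ == "__main__":
    print(tls_cluster_parameters())
```

Raising the minimum version disconnects clients that cannot negotiate it, so it is worth confirming from the logs which protocol versions are in use first.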

Limiting cipher suites

We can allow connections using only desired cipher suites by utilizing the ssl_ciphers parameter within the cluster parameter group. We again use CloudWatch Logs Insights to determine what cipher suites have been used by our clients in the past. Because we removed TLS connections lower than version 1.3, we can also remove the associated legacy cipher suites bound to older TLS versions. A complete list of cipher suites supported for various database versions can be found in the Amazon Aurora user guides for PostgreSQL and MySQL.

The following screenshot is an example CloudWatch Logs Insights query used to determine cipher suite information.

The following displays the output of the query.

We can then use this information to determine which cipher suites our applications are using, offering guidance on the cipher suites that can be safely removed. The logged ciphers can be compiled into a list of allowed ciphers, further contributing to the security of the system.
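Compiling the logged ciphers into an allow list can be sketched as follows. The log format is assumed to be the standard PostgreSQL "SSL enabled (protocol=..., cipher=...)" message, and the sample lines are invented; how the resulting names are written into ssl_ciphers depends on the format your engine version expects.

```python
import re
from collections import Counter

SSL_DETAILS = re.compile(
    r"SSL enabled \(protocol=(?P<protocol>[^,]+), cipher=(?P<cipher>[^,)]+)"
)


def observed_cipher_suites(log_lines):
    """Count (protocol, cipher) pairs seen in connection log lines."""
    counts = Counter()
    for line in log_lines:
        match = SSL_DETAILS.search(line)
        if match:
            counts[(match["protocol"], match["cipher"])] += 1
    return counts


def allow_list(counts, protocol="TLSv1.3"):
    """Return the sorted cipher names observed with the given protocol."""
    return sorted({cipher for (proto, cipher) in counts if proto == protocol})


# Invented sample lines in the assumed log format.
SAMPLE_LINES = [
    "connection authorized: user=app database=appdb "
    "SSL enabled (protocol=TLSv1.3, cipher=TLS_AES_256_GCM_SHA384, bits=256)",
    "connection authorized: user=etl database=appdb "
    "SSL enabled (protocol=TLSv1.3, cipher=TLS_AES_128_GCM_SHA256, bits=128)",
    "connection authorized: user=app database=appdb "
    "SSL enabled (protocol=TLSv1.3, cipher=TLS_AES_256_GCM_SHA384, bits=256)",
    "connection authorized: user=old database=appdb "
    "SSL enabled (protocol=TLSv1.2, cipher=ECDHE-RSA-AES256-GCM-SHA384, bits=256)",
]

if __name__ == "__main__":
    print(allow_list(observed_cipher_suites(SAMPLE_LINES)))
```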

A useful resource to find information about cipher suite security is ciphersuite.info. It contains ratings of cipher suites and recommendations for the most secure suites and the technical details of each.

Certificate Authorities

A certificate authority (CA) establishes a chain of trust between communicating entities over the internet and plays a vital role in issuing digital certificates. Amazon RDS has recently added support for new certificate authorities, which can be configured when provisioning or modifying database instances. This allows us to further harden our security posture by selecting the most secure private key and signing algorithms. Within the AWS::RDS::DBInstance CloudFormation resource, set the CACertificateIdentifier property to a value of rds-ca-2019, rds-ca-rsa2048-g1, rds-ca-rsa4096-g1, or rds-ca-ecc384-g1. Descriptions of each CA can be found in the Amazon Aurora user guide. ECC certificates are a newer alternative to RSA certificates; they provide an equivalent level of encryption strength with a shorter key length, which improves speed without weakening security.
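A sketch of the instance resource with an explicit CA is shown below as a Python dictionary mirroring the CloudFormation structure. The logical ID `DatabaseCluster`, the instance class, and the choice of rds-ca-ecc384-g1 as the default are illustrative assumptions.

```python
# The identifiers listed in the text above.
SUPPORTED_CAS = (
    "rds-ca-2019",
    "rds-ca-rsa2048-g1",
    "rds-ca-rsa4096-g1",
    "rds-ca-ecc384-g1",
)


def db_instance_with_ca(ca_identifier="rds-ca-ecc384-g1",
                        cluster_logical_id="DatabaseCluster"):
    """Return a DBInstance resource pinned to a specific certificate authority."""
    if ca_identifier not in SUPPORTED_CAS:
        raise ValueError(f"unknown CA identifier: {ca_identifier}")
    return {
        "Type": "AWS::RDS::DBInstance",
        "Properties": {
            "Engine": "aurora-postgresql",
            "DBInstanceClass": "db.r6g.large",  # hypothetical instance class
            "DBClusterIdentifier": {"Ref": cluster_logical_id},
            "CACertificateIdentifier": ca_identifier,
        },
    }


if __name__ == "__main__":
    print(db_instance_with_ca())
```

Clients that verify the server certificate need a trust store containing the chosen CA, so changing this value should be coordinated with application deployments.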

Detective controls

Detective controls provide insight into workloads and alert us to issues that require human involvement. Amazon CloudWatch metrics, logs, and alarms provide the tools needed to configure these alerts, and Amazon Simple Notification Service (Amazon SNS) provides notifications when an alarm is triggered. The example detective controls in this section comprise the following AWS resources:

An SNS topic to send alarm notifications

A CloudWatch log group to capture database connection telemetry

A CloudWatch metric filter to define the pattern to search for against the log group

A CloudWatch alarm, based upon the metric filter, which alerts to the SNS topic

With this pattern, we can create alarms for any or all the preventative controls that were previously covered. To create an alarm targeting unencrypted connections, we first build an SNS topic to receive alarm notifications:
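A minimal sketch of the topic resource follows, again as a Python dictionary in CloudFormation shape. The logical ID `AlertTopic` matches the name used later in this post; the email subscription and its address are placeholders.

```python
def alert_topic(email="security-team@example.com"):
    """Return a Resources mapping containing the SNS topic for alarm notifications."""
    return {
        "AlertTopic": {
            "Type": "AWS::SNS::Topic",
            "Properties": {
                "DisplayName": "Database security alerts",
                # Placeholder subscriber; any supported protocol could be used.
                "Subscription": [{"Protocol": "email", "Endpoint": email}],
            },
        }
    }


if __name__ == "__main__":
    print(alert_topic())
```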

Next, we require a log group to capture logs, and a metric filter to capture relevant log messages from the log group. In this case, we don’t need to create a log group. We can utilize the log group that is already created for us when the database cluster is created, and reference it within the metric filter. To create a metric filter, use the following CloudFormation code:
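One way the metric filter might be written is sketched below. The metric name, namespace, and filter pattern are assumptions: the pattern relies on term exclusion to match "connection authorized" messages that lack "SSL enabled", and it should be validated against real log events before use.

```python
def unencrypted_connection_metric_filter(
    log_group_name="/aws/rds/cluster/database-1/postgresql",
):
    """Return a MetricFilter resource that counts unencrypted connections."""
    return {
        "Type": "AWS::Logs::MetricFilter",
        "Properties": {
            # The log group that Amazon RDS created with the cluster.
            "LogGroupName": log_group_name,
            # Assumed pattern: authorized connections without TLS details.
            "FilterPattern": '"connection authorized" -"SSL enabled"',
            "MetricTransformations": [
                {
                    "MetricName": "UnencryptedConnections",  # hypothetical
                    "MetricNamespace": "Custom/Aurora",  # hypothetical
                    "MetricValue": "1",  # emit 1 per matching log event
                }
            ],
        },
    }


if __name__ == "__main__":
    print(unencrypted_connection_metric_filter())
```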

Notice that the LogGroupName value references the log group that Amazon RDS created when the cluster was created.

Next, we create the alarm resource that references the metric we created as well as the SNS topic:
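The alarm can be sketched as follows. The property values follow the configuration this section describes; the metric name and namespace are the same hypothetical ones a matching metric filter would need to emit. A small helper also mimics how a single evaluation period resolves to a state, purely for illustration.

```python
def unencrypted_connection_alarm(topic_logical_id="AlertTopic"):
    """Return a CloudWatch Alarm resource for unencrypted connections."""
    return {
        "Type": "AWS::CloudWatch::Alarm",
        "Properties": {
            "AlarmDescription": "Unencrypted database connection detected",
            "AlarmActions": [{"Ref": topic_logical_id}],
            "MetricName": "UnencryptedConnections",  # hypothetical
            "Namespace": "Custom/Aurora",  # hypothetical
            "Statistic": "Sum",
            "Period": 60,
            "EvaluationPeriods": 1,
            "Threshold": 1,
            "ComparisonOperator": "GreaterThanOrEqualToThreshold",
            "TreatMissingData": "notBreaching",
        },
    }


def evaluate_period(period_sum, threshold=1):
    """Illustrate the alarm state for one period (None means no data points)."""
    if period_sum is None:
        return "OK"  # missing data is treated as not breaching
    return "ALARM" if period_sum >= threshold else "OK"


if __name__ == "__main__":
    print(unencrypted_connection_alarm())
    print(evaluate_period(None), evaluate_period(3))
```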

At a high level, this alarm is designed to alert the subscribers of the AlertTopic whenever one or more unencrypted connections are detected.

There are several properties to configure in order to tune the alarm properly. The AlarmActions property references the SNS topic we created previously, which runs when the alarm transitions into the ALARM state. The Period is set to 60 (seconds) and the EvaluationPeriods is set to 1. Over 1 minute, we generate one data point, and to determine the alarm state, we evaluate the one most recent period. The Statistic property is set to Sum, which gives us a total of the alarm’s metric. Because our metric only generates data points when an unencrypted connection occurs, we set the TreatMissingData parameter to notBreaching.

Finally, the Threshold is set to 1 and the ComparisonOperator to GreaterThanOrEqualToThreshold. The Sum statistic for the period is compared to the threshold, and if it is greater than or equal to 1, the alarm transitions to the ALARM state.

This configuration offers detection, alarming, and notification in the event an unencrypted connection is made. You can repeat this pattern in other alarms by adding additional resources and modifying the metric filter pattern to match new patterns.

Amazon GuardDuty RDS Protection is another detective control that can extend threat detection to Amazon Aurora. As of this writing, the GuardDuty feature is in preview release. NextGen Healthcare has collaborated with the GuardDuty product team to tune and refine the service. Amazon GuardDuty RDS Protection uses machine learning to analyze and profile login activity for potential access threats, which allows for the identification of potentially suspicious login activity. This service can be enabled in the console with a few steps, which can be found in the Amazon GuardDuty user guide.

Responsive controls

The preventative controls put in place to limit weak cipher suites can also benefit from controls that determine if new, more secure cipher suites are released. An evaluation of the new cipher suites can be made to adjust our supported cipher suite list. One way to get timely feedback is to add in checks at build time that compare the list of cipher suites to cipher suites in the default ssl_ciphers parameter.

The following bash script is run as part of the CI/CD pipeline. The script calls the DescribeEngineDefaultClusterParameters API and compares the results to output from a previous call. If the results have changed, then the pipeline fails, which gives you the opportunity to update the supported cipher configuration.
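The same comparison can be sketched in Python with Boto3 rather than bash. This is not the authors' script: the baseline file name, the parameter group family, and the helper names are assumptions. The RDS client is passed in so that the comparison logic can be exercised without AWS access.

```python
import json
import sys


def fetch_default_parameters(rds_client, family):
    """Collect all engine default cluster parameters, following pagination markers."""
    params, marker = [], None
    while True:
        kwargs = {"DBParameterGroupFamily": family}
        if marker:
            kwargs["Marker"] = marker
        defaults = rds_client.describe_engine_default_cluster_parameters(**kwargs)[
            "EngineDefaults"
        ]
        params.extend(defaults.get("Parameters", []))
        marker = defaults.get("Marker")
        if not marker:
            return params


def ssl_ciphers_snapshot(params):
    """Return the default and allowed values of ssl_ciphers, or None if absent."""
    for param in params:
        if param["ParameterName"] == "ssl_ciphers":
            return {
                "value": param.get("ParameterValue"),
                "allowed": param.get("AllowedValues"),
            }
    return None


if __name__ == "__main__":
    import boto3  # imported here so the helpers above work without it

    current = ssl_ciphers_snapshot(
        fetch_default_parameters(boto3.client("rds"), "aurora-postgresql15")
    )
    with open("ssl_ciphers_baseline.json") as handle:  # hypothetical baseline file
        baseline = json.load(handle)
    if current != baseline:
        print("Default ssl_ciphers changed; review the supported cipher list.")
        sys.exit(1)  # a nonzero exit code fails the pipeline
```

When the check fails, reviewing the change and then committing the new snapshot as the baseline lets the pipeline pass again.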

Another control to put in place is a test to verify that plaintext connections can’t be established. This confirms that the preventative controls to force encryption in transit are functioning correctly. A connection attempt with encryption disabled is made, which is expected to fail. If the connection succeeds, the test fails, and in turn fails the CI/CD pipeline and prevents changes from affecting downstream (production) environments.

The following Python script is used in conjunction with the PyTest testing framework:
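A minimal sketch of such a test is shown below, assuming the psycopg2 driver and connection details supplied through hypothetical environment variables (`DB_HOST`, `DB_NAME`, `DB_USER`, `DB_PASSWORD`). The connection function is injected into a helper so the logic can be checked without a database.

```python
import os


def plaintext_connection_is_rejected(connect, error_type, **conn_kwargs):
    """Return True if a connection attempt with TLS disabled fails."""
    try:
        conn = connect(sslmode="disable", **conn_kwargs)
    except error_type:
        return True  # the server refused the plaintext connection
    conn.close()  # the connection succeeded, so the control is not working
    return False


def test_plaintext_connection_is_rejected():
    import pytest

    psycopg2 = pytest.importorskip("psycopg2")
    host = os.environ.get("DB_HOST")  # hypothetical variable names
    if not host:
        pytest.skip("DB_HOST is not set")
    assert plaintext_connection_is_rejected(
        psycopg2.connect,
        psycopg2.OperationalError,
        host=host,
        dbname=os.environ.get("DB_NAME", "postgres"),
        user=os.environ["DB_USER"],
        password=os.environ["DB_PASSWORD"],
        connect_timeout=5,
    )
```

Because any connection failure raises the same exception type, a network outage would also make this test pass; pairing it with a test that connects successfully using sslmode set to require helps rule that out.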

Clean up

To clean up the resources created in your account, you should delete any CloudFormation stacks created.

You can use the AWS CloudFormation console or the AWS APIs to perform the cleanup.

Conclusion

The preventative, detective, and responsive controls outlined in this post provide a well-rounded security posture that organizations can benefit from to ensure workloads are meeting their business policy and regulatory requirements. Security is an ongoing endeavor; organizations must remain vigilant to emerging threats and have processes in place to proactively protect against them.

For more information and further reading, refer to Amazon Aurora security documentation.

About the authors

Brandon White is a Senior Engineer, DevOps, with NextGen Healthcare. He has over 17 years of experience in the healthcare information technology industry, with an interest in serverless event-driven architecture and automation. In his free time, he enjoys cycling and making wood-fired pizza.

Morgan Killik is a Staff Engineer with NextGen Healthcare, with a focus on improving operational efficiency for both developers and systems. When not automating all the things, he enjoys running.

Stephen McDonald is a DevOps Staff Engineer at NextGen Healthcare. He is based in Rochester, NY. Stephen is excited about improving application security and building event-driven applications. He has 15 years of infrastructure engineering experience across healthcare, manufacturing, and business services industries. In his spare time, he enjoys outdoor cooking, travel, and live music.

Anand has been a Principal Solutions Architect at AWS since 2016. He has helped global healthcare, financial services, and telecommunications clients architect and implement enterprise software solutions using AWS and hybrid cloud technologies. He has an MS in Computer Science from Louisiana State University Baton Rouge, and an MBA from USC Marshall School of Business, Los Angeles. He is AWS certified in the areas of Security, Solutions Architecture, and DevOps Engineering.

Bryan has been a Principal Solutions Architect at AWS since 2022 and is responsible for the success of NextGen. He has an extensive background leading datacenter, migrations, IT operations, cybersecurity and innovation for a top 10 US Bank. He has an M.S. in Management Information Systems from ASU and is CISSP certified as well as AWS certified in Solutions Architecture.
