In this post, we dive deep into establishing your infrastructure and deploying Polygon blockchain nodes on AWS. We provide recommendations for selecting optimal compute and storage options tailored to various use cases. We discuss how to speed up the horizontal scaling of Polygon full nodes on AWS with Amazon Simple Storage Service (Amazon S3) and the s5cmd tool by reducing the time needed to get a snapshot ready from approximately 16–17 hours to about 2 hours. Finally, we conclude by reviewing AWS-based configurations for Polygon full nodes for different usage patterns.

The solution and recommended infrastructure configurations are implemented in the accompanying application built with the AWS Cloud Development Kit (AWS CDK) to deploy and scale Polygon nodes on AWS.

If you’re looking for a serverless managed environment, we recommend exploring Amazon Managed Blockchain (AMB) Access Polygon.

How Polygon blockchain works

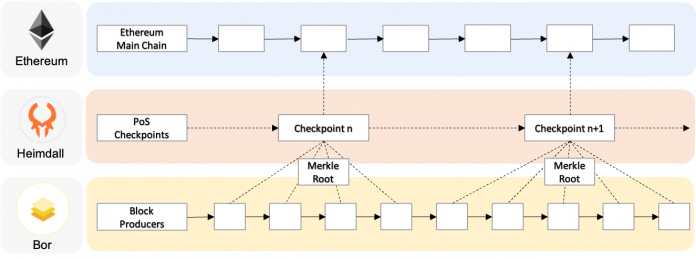

The Polygon Proof of Stake (PoS) blockchain is built to run in parallel with the Ethereum blockchain, but relies on Ethereum to store checkpoint transactions (checkpoints). This allows users to speed up operations on the Polygon PoS blockchain and reduce transaction costs. On Polygon, developers can perform computational tasks and data storage outside of Ethereum, and Polygon validators rely on Ethereum’s tamper resistance characteristics to keep validating new blocks. The Polygon PoS network is at the forefront of Polygon’s offerings, and it operates as a Delegated Proof-of-Stake (DPoS) sidechain compatible with the Ethereum Virtual Machine (EVM), as illustrated in the diagram above.

The Polygon PoS sidechain consists of three layers:

Ethereum layer – A set of smart contracts on Ethereum to manage stakes and store checkpoints.

Heimdall layer – A Heimdall client that runs in parallel with the Ethereum nodes, validates blocks produced by the Bor layer, and commits checkpoints to the Ethereum mainnet.

Bor layer – A set of EVM-compatible Bor layer clients that accept user transactions and assemble them into blocks. The code of the homonymous Bor client is based on the Go Ethereum client; you can also use the Erigon client as a more storage-optimized archive node.

To run a Polygon full node, we start both Heimdall and Bor clients on the same machine for better cost-efficiency. You can do the same with a combination of Heimdall and Erigon clients.

Challenges with running Polygon nodes

Polygon offers high processing speed and low transaction costs, making it a favored choice for decentralized applications (dApps). As the Polygon network grows, the disk usage of Bor and Heimdall has increased, and the current data size amounts to multiple terabytes. This means synchronizing the data to provision a new full node can take 3–4 days.

To address this problem, the Polygon documentation recommends downloading node snapshot data with the aria2c tool. The total size of Polygon’s data is about 2.4 terabytes, 90% of which is the Bor client’s state data.

The following table shows the results of measuring the data download and extraction time on three types of Amazon Elastic Compute Cloud (Amazon EC2) instances with different CPU, memory, and network configurations. The experiments show that the full process takes 16–17 hours, of which download time is about 12 hours and decompression is over 4 hours. We tried one more instance size for each instance type listed in the table, and found that this process consistently takes a similar amount of time regardless of the actual instance resources.

| Instance Type (vCPUs, Memory GiB) | EBS GP3 (Size/IOPS/Throughput) | Download (hh:mm:ss) | Extract (hh:mm:ss) | Total (hh:mm:ss) |
|---|---|---|---|---|
| c6g.8xlarge (32, 64) | 8T / 10000 / 1000 | 11:50:39 | 04:13:03 | 16:03:42 |
| r6g.4xlarge (16, 128) | 8T / 10000 / 1000 | 12:24:00 | 04:19:08 | 16:43:08 |
| m6g.8xlarge (32, 128) | 8T / 10000 / 1000 | 12:45:51 | 04:14:24 | 17:00:15 |
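To make the arithmetic behind these measurements explicit, the following minimal Python sketch adds the download and extract times from the table and reports how much of each total is spent downloading. The helper names (`parse_hms`, `format_hms`, `summarize`) are ours and are not part of the accompanying application.

```python
from datetime import timedelta


def parse_hms(text: str) -> timedelta:
    """Convert an hh:mm:ss string into a timedelta."""
    hours, minutes, seconds = (int(part) for part in text.split(":"))
    return timedelta(hours=hours, minutes=minutes, seconds=seconds)


def format_hms(delta: timedelta) -> str:
    """Render a timedelta as hh:mm:ss (hours may exceed 24)."""
    total = int(delta.total_seconds())
    return f"{total // 3600:02d}:{total % 3600 // 60:02d}:{total % 60:02d}"


# (download, extract) times copied from the table in this post.
MEASUREMENTS = {
    "c6g.8xlarge": ("11:50:39", "04:13:03"),
    "r6g.4xlarge": ("12:24:00", "04:19:08"),
    "m6g.8xlarge": ("12:45:51", "04:14:24"),
}


def summarize(measurements: dict) -> dict:
    """Return {instance: (total as hh:mm:ss, download share of the total)}."""
    rows = {}
    for instance, (download, extract) in measurements.items():
        d, e = parse_hms(download), parse_hms(extract)
        total = d + e
        rows[instance] = (format_hms(total), round(d / total, 2))
    return rows


if __name__ == "__main__":
    for instance, (total, share) in summarize(MEASUREMENTS).items():
        print(f"{instance}: total {total}, download share {share:.0%}")
```

In every row the download accounts for roughly three quarters of the total, which is why the rest of this post focuses on the transfer step.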

This means that when you need to provision a new node to serve your dApps, you’ll need to wait 16–17 hours to get the snapshot ready to run your node. That’s faster than the original 3–4 days, but still long enough to make the on-demand scalability of nodes problematic. To address this problem, we conducted a series of benchmark tests to reduce the provisioning time for new Polygon nodes even further and determine the appropriate instance and Amazon Elastic Block Store (Amazon EBS) storage type for running them on AWS.

Solution overview

Our goal was to implement the following deployment architecture to run scalable and highly available Polygon full nodes on AWS.

The workflow includes the following steps:

1. A dedicated EC2 instance (we call it the sync node) downloads and unarchives the snapshot data from the Polygon Snapshot service to its local storage.

2. The sync node uploads the extracted Polygon snapshot data to an Amazon Simple Storage Service (Amazon S3) bucket.

3. A set of EC2 instances (we call them RPC nodes) are provisioned by an Auto Scaling group to serve JSON RPC API requests from dApps. Each new RPC node downloads the snapshot data from the S3 bucket to initialize its node data storage.

4. The new RPC nodes catch up with the chain head of the Polygon network, syncing the data added after the snapshot was created.

5. The dApps and developers use highly available RPC nodes through Application Load Balancer.

To deploy the solution, follow the guidelines in the README file within the accompanying GitHub repository.

Analyzing different methods for faster copying of node data snapshots

We want the RPC nodes to scale automatically as usage changes. We tested two approaches to achieve this.

The first approach uses sync nodes to store the entire dataset and create EBS volume snapshots for that data. As you can see in the following diagram, when a new RPC node is needed, an EBS volume from a snapshot of the sync node will be loaded to the new RPC node in just a few minutes.

The second approach, shown in the following diagram, looks similar, but uses the s5cmd tool to copy data from the sync node to the S3 bucket and then from the S3 bucket to the RPC nodes. This method takes advantage of the parallel file transfer feature of Amazon S3, which can speed up file transfers depending on the number of CPU cores.
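As a rough illustration of the second approach, the following Python sketch builds the two s5cmd command lines involved: one for the sync node to upload extracted data, and one for an RPC node to download it. It only constructs and prints the commands. The bucket name, prefix, and local path are hypothetical placeholders, and the worker count of 256 is an assumption you would tune for your instance size; check the s5cmd documentation and the accompanying repository for the options the sample application actually uses.

```python
import shlex


def s5cmd_upload(local_dir: str, bucket: str, prefix: str, workers: int = 256) -> list[str]:
    """Command for the sync node: copy extracted snapshot files to S3."""
    source = f"{local_dir.rstrip('/')}/*"
    target = f"s3://{bucket}/{prefix.strip('/')}/"
    return ["s5cmd", "--numworkers", str(workers), "cp", source, target]


def s5cmd_download(bucket: str, prefix: str, local_dir: str, workers: int = 256) -> list[str]:
    """Command for an RPC node: copy snapshot files from S3 to the data volume."""
    source = f"s3://{bucket}/{prefix.strip('/')}/*"
    target = f"{local_dir.rstrip('/')}/"
    return ["s5cmd", "--numworkers", str(workers), "cp", source, target]


if __name__ == "__main__":
    # Hypothetical names used for illustration only.
    bucket, prefix, data_dir = "example-polygon-snapshots", "bor/latest", "/data/bor"
    print(shlex.join(s5cmd_upload(data_dir, bucket, prefix)))
    print(shlex.join(s5cmd_download(bucket, prefix, data_dir)))
```

Building the commands as argument lists rather than strings keeps wildcards such as `/*` from being expanded by a shell, so s5cmd can expand and parallelize them itself.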

The following table shows how much time it takes for the current Polygon approach with aria2c, the approach with EBS volume snapshots, and using the s5cmd tool.

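| Instance Type (vCPUs, Memory GiB) | aria2c (hh:mm:ss) | EBS Volume Snapshot: take (hh:mm:ss) | EBS Volume Snapshot: restore (hh:mm:ss) | EBS Volume Snapshot: total (hh:mm:ss) | s5cmd: upload (hh:mm:ss) | s5cmd: download (hh:mm:ss) | s5cmd: total (hh:mm:ss) |
|---|---|---|---|---|---|---|---|
| r6g.8xlarge (32, 256) | 17:36:50 | 04:39:12 | 00:03:28 | 04:42:40 | 01:00:38 | 00:52:37 | 01:53:25 |
| m6g.8xlarge (32, 128) | 17:00:15 | 04:40:52 | 00:03:43 | 04:44:35 | 01:04:00 | 00:54:35 | 01:58:35 |
| c6g.8xlarge (32, 64) | 16:03:42 | 05:30:00 | 00:03:35 | 05:34:35 | 01:15:14 | 00:53:29 | 02:08:43 |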

The results show that, with the EBS volume snapshot approach, it took us about 4.5–5.5 hours to create a volume snapshot. The volume snapshots created by sync nodes allow new RPC nodes to be added in minutes, but you can experience higher latencies for multiple hours while the EBS snapshot is lazy loaded from Amazon S3. Nevertheless, provisioning RPC nodes with this approach is still roughly three to four times faster than downloading the snapshot from the Polygon Snapshot service.

Alternatively, with the s5cmd tool, we directly copy files from Amazon S3 to the local data volume of each RPC node. This method avoids the EBS lazy loading and, although the new RPC node initially spends time copying the data, in the end it doesn’t suffer from the high latency of a lazily loaded data volume. In our tests, the complete process took approximately 2 hours on 8xlarge instances, regardless of the EC2 instance type. However, there are significant differences in upload and download speeds depending on the size of the instance. It takes approximately 4 hours to upload and download a snapshot using s5cmd for 4xlarge instances and around 2 hours for 8xlarge. With larger instance sizes, this approach is about two times faster than using EBS volume snapshots and about eight times faster than downloading snapshots from the Polygon Snapshot service. Because the overall time shown by this solution is the shortest, we chose to use it in the sample application that accompanies this post.
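The speedup figures quoted here can be checked directly against the totals in the comparison table. The following short Python sketch does that; the dictionary keys and function names are ours, and the values are copied from the table as published.

```python
def to_seconds(hms: str) -> int:
    """Convert an hh:mm:ss string into seconds."""
    hours, minutes, seconds = (int(part) for part in hms.split(":"))
    return hours * 3600 + minutes * 60 + seconds


# Total times per method, copied from the comparison table in this post.
TOTALS = {
    "r6g.8xlarge": {"aria2c": "17:36:50", "ebs_snapshot": "04:42:40", "s5cmd": "01:53:25"},
    "m6g.8xlarge": {"aria2c": "17:00:15", "ebs_snapshot": "04:44:35", "s5cmd": "01:58:35"},
    "c6g.8xlarge": {"aria2c": "16:03:42", "ebs_snapshot": "05:34:35", "s5cmd": "02:08:43"},
}


def speedup(row: dict, baseline: str, candidate: str) -> float:
    """How many times faster `candidate` is than `baseline` for one instance."""
    return to_seconds(row[baseline]) / to_seconds(row[candidate])


if __name__ == "__main__":
    for instance, row in TOTALS.items():
        print(
            f"{instance}: "
            f"EBS vs aria2c {speedup(row, 'aria2c', 'ebs_snapshot'):.1f}x, "
            f"s5cmd vs aria2c {speedup(row, 'aria2c', 's5cmd'):.1f}x, "
            f"s5cmd vs EBS {speedup(row, 'ebs_snapshot', 's5cmd'):.1f}x"
        )
```

Note that these ratios compare provisioning time only; they don't capture the period of elevated latency while an EBS volume restored from a snapshot is still being lazy loaded.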

After you download the snapshot with any method mentioned so far, the new RPC node still needs to synchronize the data with the rest of the Polygon PoS network. Depending on when the snapshot was created, it might take from 30 minutes to about 1 hour on top of the snapshot processing times.
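One way to tell when a new RPC node has finished catching up is to poll the standard Ethereum JSON-RPC method `eth_syncing`, which returns `false` when the client considers itself synced and an object with hex-encoded `currentBlock` and `highestBlock` fields otherwise. Because Bor is based on Go Ethereum, we assume it answers this call in the same way. The sketch below only builds the request payload and interprets a response; the function names and the `max_lag` threshold are hypothetical and not taken from the sample application.

```python
import json
from typing import Union


def syncing_request(request_id: int = 1) -> str:
    """JSON-RPC payload to POST to the node's HTTP RPC endpoint."""
    return json.dumps(
        {"jsonrpc": "2.0", "method": "eth_syncing", "params": [], "id": request_id}
    )


def blocks_behind(result: Union[bool, dict]) -> int:
    """Interpret the `result` field of an eth_syncing response."""
    if result is False:
        return 0  # the client reports that it is not syncing
    return int(result["highestBlock"], 16) - int(result["currentBlock"], 16)


def is_ready(result: Union[bool, dict], max_lag: int = 0) -> bool:
    """True when the node is within `max_lag` blocks of the known chain head."""
    return blocks_behind(result) <= max_lag


if __name__ == "__main__":
    print(syncing_request())
    # Example response body for a node that is still catching up.
    sample = {"startingBlock": "0x0", "currentBlock": "0x2faf000", "highestBlock": "0x2faf080"}
    print(blocks_behind(sample), is_ready(sample), is_ready(False))
```

A check like this could back a load balancer health check so that a node only receives traffic once it has caught up. A client may also report `false` before it has connected to any peers, so a production check would likely compare block height against a trusted reference as well.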

Choosing the right configuration for RPC nodes based on usage pattern

In addition to improving the initial synchronization time, we also ran a set of benchmarking tests to find suitable configurations for Polygon full nodes on AWS. We spun up multiple compute and storage combinations with Bor and Heimdall clients and load tested the JSON RPC API with random API calls to measure which one will provide a better user experience for dApps. We also measured how long each configuration will keep up with the chain head under load. The configurations in the following table performed best based on three common usage patterns selected for these benchmark tests.

| Usage Pattern | Compute | Storage |
|---|---|---|
| Node that can easily follow the chain head and can serve single- to double-digit requests per second for disk-intensive RPC methods like trace_block on Erigon. | m7g.4xlarge (16 vCPU, 64 GB RAM) | 7000 GB EBS gp3 with 16000 IOPS and 1000 MBps throughput |
| Node that serves hundreds of JSON RPC requests per second and still follows the chain head. Operationally, you need to tolerate ephemeral instance storage, which persists data during reboots but needs to be reinitialized if the instance is stopped, hibernated, or terminated. | im4gn.4xlarge (16 vCPU, 64 GB RAM) | 7500 GB instance storage (comes with the im4gn instance type) and an extra 50 GB EBS gp3 root volume |
| Node that serves up to a thousand RPC requests per second (depending on RPC method) and follows the chain head with the full operational convenience of EBS volumes. | m7g.4xlarge (16 vCPU, 64 GB RAM) | 7000 GB EBS io2 with 16000 IOPS |

The tests showed that general-purpose m7g.4xlarge EC2 instances powered by AWS Graviton3 processors offer a good cost-to-performance ratio. For storage, however, the more cost-effective EBS gp3 volumes struggle when the node has to sync and serve a higher number of concurrent RPC requests at the same time. From a cost perspective, using an AWS Graviton2-powered storage-optimized im4gn.4xlarge instance with ephemeral instance storage volumes can be more cost-effective than using EBS io2 or io1 volume types. The ephemeral nature of instance storage volumes makes operating such nodes less convenient than with persistent EBS volumes, but it might be acceptable considering the improvements in the speed of provisioning new RPC nodes with the approach using the s5cmd tool described earlier in this post.
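To show how these recommendations might translate into provisioning parameters, the following Python sketch encodes the three configurations as data and derives an EBS block device mapping in the shape used by the Amazon EC2 `RunInstances` API. The usage-pattern keys, the `NodeConfig` class, and the `/dev/sdf` device name are hypothetical; the accompanying AWS CDK application may model this differently.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class NodeConfig:
    instance_type: str
    volume_type: Optional[str]  # None means the node uses instance storage
    size_gb: int
    iops: Optional[int] = None
    throughput_mbps: Optional[int] = None  # only applies to gp3 volumes


# Values taken from the usage-pattern table; the keys are our own labels.
CONFIGS = {
    "low_rps_disk_intensive": NodeConfig("m7g.4xlarge", "gp3", 7000, 16000, 1000),
    "hundreds_rps_ephemeral": NodeConfig("im4gn.4xlarge", None, 7500),
    "high_rps_ebs": NodeConfig("m7g.4xlarge", "io2", 7000, 16000),
}


def ebs_block_device(config: NodeConfig, device_name: str = "/dev/sdf") -> Optional[dict]:
    """Build a data-volume mapping, or None when instance storage is used."""
    if config.volume_type is None:
        return None
    ebs = {"VolumeType": config.volume_type, "VolumeSize": config.size_gb}
    if config.iops is not None:
        ebs["Iops"] = config.iops
    if config.throughput_mbps is not None:
        ebs["Throughput"] = config.throughput_mbps
    return {"DeviceName": device_name, "Ebs": ebs}


if __name__ == "__main__":
    for name, config in CONFIGS.items():
        print(name, config.instance_type, ebs_block_device(config))
```

Keeping the configurations as plain data makes it straightforward to switch a node fleet between usage patterns without touching the provisioning logic.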

Conclusion

In this post, we discussed how to make data snapshots available faster for newly created Polygon nodes. We discussed the ways sync nodes download, unarchive, and share snapshot data, and showed how it can be done in about 17 hours instead of days. Then we discussed two ways to use the snapshots to provision new RPC nodes. You can create an EBS volume snapshot and map it to a new RPC node during startup, but experience higher latencies because of EBS lazy loading, or use the s5cmd tool for high-speed data copy between EC2 instances and the S3 bucket to decrease the duration of initialization and provisioning to about 2 hours.

We concluded by presenting different configurations for RPC nodes for different usage patterns. EC2 instances powered by AWS Graviton3 are well suited for this purpose, and when using the s5cmd tool for high-speed data copy, it’s possible to use lower-latency instance storage to save costs despite its ephemeral nature.

These methods allow efficient infrastructure management and auto scaling of Polygon nodes. You can apply the same approaches to improve the scalability of nodes for other high-throughput blockchain networks, resulting in more performant and cost-effective workloads.

To try out the solution, check out the sample application that we created for this post.

About the Authors

Nikolay Vlasov is a Senior Solutions Architect with the AWS Worldwide Specialist Solutions Architect organization, focused on blockchain-related workloads. He helps clients run workloads supporting decentralized web and ledger technologies on AWS.

Hye Young Park is a Principal Solutions Architect at AWS. She helps align Amazon Managed Blockchain product initiatives with customer needs in the rapidly evolving and maturing blockchain industry for both private and public blockchains. She is passionate about transforming traditional enterprise IT thinking and pushing the boundaries of conventional technology usage. She has worked in the IT industry for over 20 years, and has held various technical management positions, covering search engine, messaging, big data, and app modernization. Before joining AWS, she gained experience in search engine, messaging, and big data at Yahoo, Samsung Electronics, and SK Telecom as a project manager.

Seungwon Choi is an Associate Solutions Architect at AWS based in Korea. She helps in developing strategic technical solutions to meet customers’ business requirements. She is also passionate about web3 and blockchain technologies, and building the web3 community.

Jaz Loh is a Technical Account Manager at AWS. He provides guidance to AWS customers on their workloads across a variety of AWS technologies. He is an individual fueled by a fervent enthusiasm for the realm of blockchain, boasting over a decade’s worth of expertise across web development, big data, data analytics, and the innovative landscape of blockchain technology.
