We’ve all been there: you ask a voice assistant to play a song, launch an app, or answer a question, and it doesn’t comply. Maybe it’s a network outage, or maybe you’re in the middle of nowhere, far from coverage. Either way, the result is the same: the voice assistant can’t connect to the server and thus cannot help.

With our Speech-to-Text (STT) API now processing over 1 billion minutes of speech each month, it’s clear that voice assistants, and Automatic Speech Recognition (ASR) in general, are essential to how millions of people make decisions and navigate their lives. Typically, however, to provide high-quality speech results to consumers, the AI systems responsible for ASR have needed a stable cloud connection to specialized hardware.

With Speech On-Device, which became generally available (GA) at Google Cloud Next ’22, we’re excited to bring the powerful speech recognition available in the cloud to a variety of new use cases in environments with inconsistent, little, or no internet connectivity. These on-device Speech-to-Text and Text-to-Speech technologies have already been used in Google Assistant, but with Speech On-Device, a new generation of apps and services can harness this technology.

Build speech experiences with or without network connectivity

From cars that drive through tunnels, to apps running on integrated devices like kiosks, to IoT devices, Speech On-Device delivers server-quality voice capabilities with a fraction of the processing power—all while helping to maintain privacy by keeping data on the local device.

Running locally is made possible by new modeling techniques, on both the Speech-to-Text (STT) and Text-to-Speech (TTS) fronts.

For Speech-to-Text (or ASR), years of work on our end-to-end speech models, such as our latest Conformer models, have decreased the size and compute necessary to run fully featured speech models. These advancements have resulted in quality comparable to that of a server, with models lightweight enough to run on local device CPUs.

For Text-to-Speech, we leverage new technology developed at Google to bring high-quality voice into vehicles. Speech On-Device TTS not only provides acoustic quality comparable to WaveNet, DeepMind’s breakthrough model for generating more natural-sounding speech, but is also significantly less computationally demanding and can easily run on embedded CPUs without the need for accelerators.

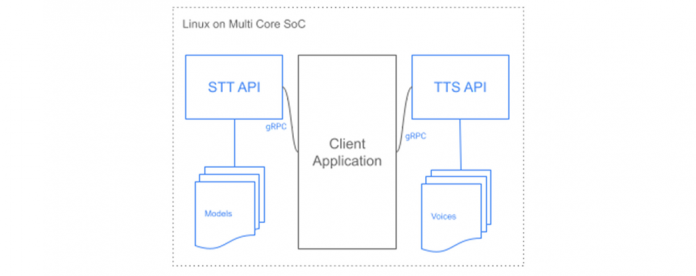

Speech On-Device is easy for developers to get started with. Each system (STT and TTS) provides customers with a binary, built for their specific hardware, operating system, and software environment. This binary exposes a local gRPC interface that other services on the device can talk to, making it easy for multiple services to access speech recognition or speech synthesis as they need to, without additional libraries or integration.
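Because every client on the device talks to the same local endpoint, a service might first confirm that the speech binary is up before opening its gRPC channel. The following is only a minimal sketch of that readiness check using the Python standard library. The host, the port, and the function name are hypothetical; this post does not say which address the binary listens on, and the actual gRPC service definitions are not shown here.

```python
import socket

# Hypothetical address: the real host and port are not given in this post.
LOCAL_SPEECH_HOST = "127.0.0.1"
LOCAL_SPEECH_PORT = 50051  # assumed value for illustration only


def local_service_is_listening(host: str = LOCAL_SPEECH_HOST,
                               port: int = LOCAL_SPEECH_PORT,
                               timeout_s: float = 0.5) -> bool:
    """Return True if something accepts TCP connections at host:port.

    This only checks reachability of the local endpoint; it does not
    speak gRPC or verify which service is behind the port.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout_s):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    if local_service_is_listening():
        print("Local speech endpoint is reachable; open the gRPC channel.")
    else:
        print("Local speech endpoint is not reachable yet.")
```

A check like this lets several independent services on the device share one speech process without coordinating startup order: each one simply waits until the endpoint answers.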

Each model is only a couple of hundred megabytes in size. The entire system can run on a single core of a modern ARM-based System on Chip (SoC) while still achieving latencies usable for real-time interactions. This means it can be added to existing systems without worrying about acceleration or optimization. And, as with all Cloud Speech-to-Text API models, Speech On-Device is built to work out of the box, with no training or customization necessary.
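One common way to reason about whether a recognizer keeps up with live audio on a given core is the real-time factor (RTF): processing time divided by audio duration. The sketch below is illustrative only. The function names and the sample timings are assumptions, not measurements of Speech On-Device, and this post does not publish latency figures.

```python
def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """Return processing time divided by audio duration.

    An RTF below 1.0 means audio is processed faster than it is spoken.
    """
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


def keeps_up_with_live_audio(rtf: float, budget: float = 1.0) -> bool:
    """Return True if the RTF is under the chosen budget.

    A budget lower than 1.0 leaves headroom for other work on the core.
    """
    return rtf < budget


if __name__ == "__main__":
    # Illustrative numbers only: 10 s of audio processed in 4 s.
    rtf = real_time_factor(processing_seconds=4.0, audio_seconds=10.0)
    print(f"RTF = {rtf:.2f}, keeps up: {keeps_up_with_live_audio(rtf)}")
```

Measuring this on the target hardware, with the other workloads running, gives a more realistic picture than a benchmark on an idle development machine.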

Join the Google Cloud customers already using Speech On-Device

We’re excited to see the new speech-driven experiences that organizations will build with this service, especially after seeing Speech On-Device’s early adopters in action. For example, Toyota is leveraging Speech On-Device, as Ryan Wheeler (Vice President, Machine Learning at Toyota Connected North America) discussed in a Google Cloud Next ’22 session.

If you are interested in Speech On-Device, there is a review process to help assess whether your use case is aligned with our best practices for using Speech On-Device. To get started, contact your seller today.
