Today we’re excited to announce the general availability of Rocky Linux 8-based and CentOS 7-based HPC Virtual Machine (VM) images for high-performance computing (HPC) workloads, with a focus on tightly-coupled workloads, such as weather forecasting, fluid dynamics, and molecular modeling.

With the HPC VM image, we have made it easy to build an HPC-ready VM instance that incorporates our best practices for running HPC on Google Cloud, including:

VMs ready for HPC out-of-the-box – No need to manually tune performance, manage VM reboots, or stay up to date with the latest Google Cloud updates for tightly-coupled HPC workloads, especially with our regular HPC VM image releases. When a tuning requires a reboot, the HPC VM image triggers it automatically and manages the process for you.

Networking optimizations for tightly-coupled workloads – Optimizations that reduce latency for small messages are included, which benefits applications that are heavily dependent on point-to-point and collective communications.

Compute optimizations – Optimizations that reduce system jitter are included. These make single-node performance more consistent, which is important for scaling to many nodes.

Improved application compatibility – Alignment with the node-level requirements of the Intel HPC platform specification enables a high degree of interoperability between systems.

Performance measurement using HPC benchmarks

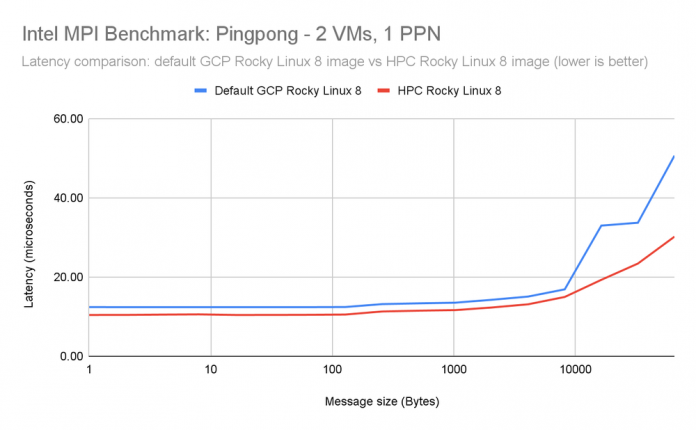

We have compared the performance of the HPC VM images against the default CentOS 7 and GCP-optimized Rocky Linux 8 images across Intel MPI Benchmarks (IMB).

The benchmarks were run against the following images.

HPC Rocky Linux 8

Image name: hpc-rocky-linux-8-v20240126

Image project: cloud-hpc-image-public

Default GCP Rocky Linux 8

Image name: rocky-linux-8-optimized-gcp-v20240111

Image project: rocky-linux-cloud

Each cluster of machines was deployed with a compact placement policy with max_distance=1, meaning all VMs were placed on hardware that was physically in the same rack to minimize network latency.
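To make the deployment concrete, here is a minimal sketch of how such a cluster could be requested with the gcloud CLI. It only assembles and prints the commands. The policy name, VM names, region, and zone are hypothetical placeholders, and your project may need additional flags (for example, networking or maintenance settings) that are not shown here. The image name, image project, and machine type come from this post.

```python
import shlex

# Image details taken from the benchmark description in this post.
HPC_IMAGE = "hpc-rocky-linux-8-v20240126"
HPC_IMAGE_PROJECT = "cloud-hpc-image-public"


def placement_policy_cmd(policy, region, max_distance=1):
    """Build the gcloud argv for a compact placement policy."""
    return [
        "gcloud", "compute", "resource-policies", "create", "group-placement",
        policy,
        "--collocation=collocated",
        f"--max-distance={max_distance}",
        f"--region={region}",
    ]


def instance_cmd(name, zone, policy, machine_type="h3-standard-88"):
    """Build the gcloud argv for one VM that uses the HPC image and the policy."""
    return [
        "gcloud", "compute", "instances", "create", name,
        f"--zone={zone}",
        f"--machine-type={machine_type}",
        f"--image={HPC_IMAGE}",
        f"--image-project={HPC_IMAGE_PROJECT}",
        f"--resource-policies={policy}",
    ]


if __name__ == "__main__":
    # Hypothetical names and locations, chosen only for illustration.
    policy, region, zone = "hpc-compact-policy", "us-central1", "us-central1-a"
    print(shlex.join(placement_policy_cmd(policy, region)))
    for i in range(2):
        print(shlex.join(instance_cmd(f"hpc-node-{i}", zone, policy)))
```

Printing the commands rather than running them keeps the sketch safe to try; review the output before executing it in your own project.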

Intel MPI Benchmark (IMB) Ping-Pong

IMB Ping-Pong measures the latency when transferring a fixed-sized message between two ranks on different VMs. We saw up to a 15% improvement when using the HPC Rocky Linux 8 image compared to the default GCP Rocky Linux 8 image.

Benchmark setup

2 x h3-standard-88

MPI library: Intel oneAPI MPI library 2021.11.0

MPI benchmarks application: Intel MPI Benchmarks 2019 Update 6

MPI environment variables:

I_MPI_PIN_PROCESSOR_LIST=0

I_MPI_FABRICS=shm:ofi

FI_PROVIDER=tcp

Command line: mpirun -n 2 -ppn 1 -bind-to core -hostfile <hostfile> IMB-MPI1 Pingpong -msglog 0:16 -iter 50000
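The setup above can be captured in a small launcher script. The following is a sketch, not the harness we used: the hostfile name `hosts.txt` is a hypothetical placeholder, and the actual launch is left commented out because it needs Intel MPI and IMB installed on both VMs. The `message_sizes` helper reflects our reading of the IMB `-msglog min:max` option, which selects message lengths of 0 bytes plus each power of two from 2^min to 2^max.

```python
import os
import shlex

# Environment variables listed in the benchmark setup.
PINGPONG_ENV = {
    "I_MPI_PIN_PROCESSOR_LIST": "0",
    "I_MPI_FABRICS": "shm:ofi",
    "FI_PROVIDER": "tcp",
}


def pingpong_cmd(hostfile):
    """Build the mpirun argv for the 2-rank, 1-rank-per-node PingPong run."""
    return [
        "mpirun", "-n", "2", "-ppn", "1", "-bind-to", "core",
        "-hostfile", hostfile,
        "IMB-MPI1", "Pingpong", "-msglog", "0:16", "-iter", "50000",
    ]


def launch_env(extra, base=None):
    """Return a copy of the base environment with the MPI settings applied."""
    env = dict(os.environ if base is None else base)
    env.update(extra)
    return env


def message_sizes(min_log, max_log):
    """Message lengths in bytes implied by -msglog min_log:max_log."""
    return [0] + [2 ** k for k in range(min_log, max_log + 1)]


if __name__ == "__main__":
    cmd = pingpong_cmd("hosts.txt")  # hypothetical hostfile name
    print(shlex.join(cmd))
    print("largest message (bytes):", message_sizes(0, 16)[-1])
    # To actually launch (requires Intel MPI and IMB on the VMs):
    # import subprocess
    # subprocess.run(cmd, env=launch_env(PINGPONG_ENV), check=True)
```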

Results

Pingpong 1 PPN – Rocky Linux 8 (lower is better)
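For readers who want to reproduce the comparison, an "up to N%" figure for a lower-is-better metric can be computed as the largest relative latency reduction across message sizes. The sketch below assumes that definition. The latency values in it are made-up placeholders for illustration only and are not measurements from our runs.

```python
def improvement_pct(baseline_us, hpc_us):
    """Relative latency reduction in percent (positive means the HPC image is faster)."""
    return 100.0 * (baseline_us - hpc_us) / baseline_us


def max_improvement(baseline, hpc):
    """Largest improvement over the message sizes present in both result sets."""
    common = baseline.keys() & hpc.keys()
    return max(improvement_pct(baseline[size], hpc[size]) for size in common)


if __name__ == "__main__":
    # Placeholder latencies in microseconds, keyed by message size in bytes.
    # These are NOT benchmark results; substitute your own IMB output.
    baseline = {8: 10.0, 4096: 40.0}
    hpc = {8: 9.0, 4096: 38.0}
    print(f"up to {max_improvement(baseline, hpc):.0f}% lower latency")
```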

Intel MPI Benchmark (IMB) AllReduce – 1 process per node

The IMB AllReduce benchmark measures the collective latency among multiple ranks across VMs. It reduces a vector of a fixed length with the MPI_SUM operation.

To isolate networking performance, we first show 1 PPN (process-per-node) results, with 1 MPI rank on each of 8 VMs.

We saw an improvement of up to 35% when comparing the HPC Rocky Linux 8 image to the default GCP Rocky Linux 8 image.

Benchmark setup

8 x h3-standard-88

1 process per node

MPI library: Intel oneAPI MPI library 2021.11.0

MPI benchmarks application: Intel MPI Benchmarks 2019 Update 6

MPI environment variables:

I_MPI_FABRICS=shm:ofi

FI_PROVIDER=tcp

I_MPI_ADJUST_ALLREDUCE=11

Command line: mpirun -n 8 -ppn 1 -bind-to core -hostfile <hostfile> IMB-MPI1 Allreduce -msglog 0:16 -iter 50000 -npmin 8

Results

Allreduce 1 PPN – Rocky Linux 8 (lower is better)

Intel MPI Benchmark (IMB) AllReduce – 1 process per core (88 processes per node)

We also show 88 PPN results, with 88 MPI ranks per node and 1 thread per rank (704 ranks in total across 8 VMs).

For this test, we saw an improvement of up to 25% when comparing the HPC Rocky Linux 8 image to the default GCP Rocky Linux 8 image.

Benchmark setup

8 x h3-standard-88

1 process per core (88 processes per node)

MPI library: Intel oneAPI MPI library 2021.11.0

MPI benchmarks application: Intel MPI Benchmarks 2019 Update 6

MPI environment variables:

I_MPI_FABRICS=shm:ofi

FI_PROVIDER=tcp

I_MPI_ADJUST_ALLREDUCE=11

Command line: mpirun -n 704 -ppn 88 -bind-to core -hostfile <hostfile> IMB-MPI1 Allreduce -msglog 0:16 -iter 50000 -npmin 704
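The two AllReduce runs differ only in how many ranks are placed on each node, so their command lines can be derived from the node count and the PPN value. The helper below is a sketch under that assumption, with a hypothetical hostfile name. It sets `-npmin` to the total rank count, which, as we understand the IMB option, restricts the run to the full set of ranks instead of also sweeping smaller process counts.

```python
import shlex

# Environment variables listed in the AllReduce benchmark setup.
ALLREDUCE_ENV = {
    "I_MPI_FABRICS": "shm:ofi",
    "FI_PROVIDER": "tcp",
    "I_MPI_ADJUST_ALLREDUCE": "11",
}


def total_ranks(nodes, ppn):
    """Total MPI ranks for a run with ppn processes on each node."""
    return nodes * ppn


def allreduce_cmd(nodes, ppn, hostfile):
    """Build the mpirun argv for an IMB Allreduce run on all ranks."""
    ranks = str(total_ranks(nodes, ppn))
    return [
        "mpirun", "-n", ranks, "-ppn", str(ppn), "-bind-to", "core",
        "-hostfile", hostfile,
        "IMB-MPI1", "Allreduce", "-msglog", "0:16", "-iter", "50000",
        "-npmin", ranks,
    ]


if __name__ == "__main__":
    # 8 nodes, first with 1 rank per node, then 1 rank per core (88 per node).
    for ppn in (1, 88):
        print(shlex.join(allreduce_cmd(8, ppn, "hosts.txt")))
```

With 8 nodes, this yields 8 ranks for the 1 PPN run and 704 ranks for the 88 PPN run, matching the command lines in the setup sections.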

Results

Allreduce 88 PPN – Rocky Linux 8 (lower is better)

The latency, bandwidth, and jitter improvements in the HPC VM image have historically resulted in higher MPI workload performance. We plan to update this blog as more performance results become available.

Cloud HPC Toolkit and the HPC VM image

You can use the HPC VM image through the Cloud HPC Toolkit, an open-source tool that simplifies the process of deploying environments for a variety of workloads, including HPC, AI, and machine learning. In fact, the Toolkit blueprints and Slurm images based on Rocky Linux 8 and CentOS 7 use the HPC VM image by default. Using the Cloud HPC Toolkit, you can add customization on top of the HPC VM image, including installing new software and changing configurations, making it even more useful.

By using the Cloud HPC Toolkit to customize images based on the HPC VM image, you can create and share blueprints that produce optimized and specialized images, improving reproducibility while reducing setup time and effort.

How to get started

You can create an HPC-ready VM by using the following options:

Google Cloud console – The image is available through Cloud Marketplace in the console.

SchedMD’s Slurm workload manager, which uses the HPC VM image by default. For more information, see Creating Intel Select Solution verified clusters.

Omnibond CloudyCluster, which uses the HPC VM image by default.
