As more businesses increase their online presence to serve their customers better, new fraud patterns are constantly emerging. In today’s ever-evolving digital landscape, where fraudsters are becoming more sophisticated in their tactics, detecting and preventing such fraudulent activities has become paramount for companies and financial institutions.

Traditional rule-based fraud detection systems are capped in their ability to quickly iterate as they rely on predefined rules and thresholds to flag potentially fraudulent activity. These systems can generate a large number of false positives, significantly increasing the volume of manual investigations performed by the fraud team. Furthermore, humans are also error-prone and have limited capacity to process large amounts of data, making manual efforts to detect fraud time-consuming, which can result in missed fraudulent transactions, increased losses, and reputational damage.

Machine learning (ML) plays a crucial role in detecting fraud because it can quickly and accurately analyze large volumes of data to identify anomalous patterns and possible fraud trends. ML fraud model performance relies heavily on the quality of data it is trained on, and, specifically for the supervised models, accurate labeled data is crucial. In ML, a lack of significant historical data to train a model is called the cold start problem.

In the world of fraud detection, the following are some traditional cold start scenarios:

Building an accurate fraud model while lacking a history of transactions or fraud cases

Being able to accurately distinguish legitimate activity from fraud for new customers and accounts

Risk-decisioning payments to an address or beneficiary never seen before by the fraud system

There are multiple ways to address these scenarios. For example, you can use generic models, known as one-size-fits-all models, which are typically trained on data from fraud data sharing platforms such as fraud consortiums. The challenge with this approach is that no two businesses are alike, and fraud attack vectors change constantly.

Another option is to use an unsupervised anomaly detection model to monitor and surface unusual behavior among customer events. The challenge with this approach is that not all fraud events are anomalies, and not all anomalies are indeed fraud. Therefore, you can expect higher false positive rates.

In this post, we show how you can quickly bootstrap a real-time fraud prevention ML model with as few as 100 events using the new Amazon Fraud Detector feature, Cold Start, thereby dramatically lowering the barrier to entry to custom ML models for many organizations that simply don’t have the time or ability to collect and accurately label large datasets. Moreover, we discuss how, by using Amazon Fraud Detector stored events, you can review results and correctly label the events to retrain your models, thereby improving the effectiveness of fraud prevention measures over time.

Solution overview

Amazon Fraud Detector is a fully managed fraud detection service that automates detecting potentially fraudulent activities online. You can use Amazon Fraud Detector to build customized fraud detection models using your own historical dataset, add decision logic using the built-in rules engine, and orchestrate risk decision workflows with a click of a button.

Previously, you had to provide over 10,000 labeled events with at least 400 examples of fraud to train a model. With the release of the Cold Start feature, you can quickly train a model with a minimum of 100 events, at least 50 of which are classified as fraud. Compared with the previous data requirements, this is a 99% reduction in historical data and an 87% reduction in label requirements.
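
The reduction figures follow directly from the old and new minimums quoted above. The short Python check below reproduces them; the variable and function names are ours, chosen only for illustration. The 87% figure in the text corresponds to 87.5% before rounding down.

```python
# Minimum data requirements quoted in this post.
previous_events, previous_fraud = 10_000, 400
cold_start_events, cold_start_fraud = 100, 50


def reduction_pct(before: int, after: int) -> float:
    """Percentage reduction when going from `before` to `after`."""
    return (before - after) * 100 / before


events_reduction = reduction_pct(previous_events, cold_start_events)
labels_reduction = reduction_pct(previous_fraud, cold_start_fraud)

print(f"historical events: {events_reduction:.1f}% fewer")
print(f"fraud labels:      {labels_reduction:.1f}% fewer")
```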

The new Cold Start feature provides intelligent methods for enriching, extending, and risk modeling small sets of data. Moreover, Amazon Fraud Detector performs label assignments and sampling for unlabeled events.

Experiments performed with public datasets show that, even with the limits lowered to 50 fraud examples and only 100 events, you can build fraud ML models that consistently outperform unsupervised and semi-supervised models.

Cold Start model performance

The ability of an ML model to generalize and make accurate predictions on unseen data is impacted by the quality and diversity of the training dataset. For Cold Start models, this is no different. You should have processes in place as more data is collected to correctly label these events and retrain the models, ultimately leading to an optimal model performance.

With a lower data requirement, the instability of reported performance increases due to the increased variance of the model and the limited test data size. To help you build the right expectation of model performance, besides model AUC, Amazon Fraud Detector also reports uncertainty range metrics. The following table defines these metrics.

| AUC uncertainty interval | AUC < 0.6 | AUC 0.6 – 0.8 | AUC >= 0.8 |
| --- | --- | --- | --- |
| > 0.3 | The model performance is very low and might vary greatly. Expect low fraud detection performance. | The model performance is low and might vary greatly. Expect limited fraud detection performance. | The model performance might vary greatly. |
| 0.1 – 0.3 | The model performance is very low and might vary significantly. Expect low fraud detection performance. | The model performance is low and might vary significantly. Expect limited fraud detection performance. | The model performance might vary significantly. |
| < 0.1 | The model performance is very low. Expect low fraud detection performance. | The model performance is low. Expect limited fraud detection performance. | No Warning |
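
To make the table easier to apply, for example in a training report, the lookup can be sketched as a small Python function. This is our own illustration, not part of the Amazon Fraud Detector SDK, and the function name is hypothetical. The table does not say which band the exact boundary values 0.1 and 0.3 belong to, so the sketch assumes they fall in the middle band.

```python
def cold_start_warning(auc: float, uncertainty_interval: float) -> str:
    """Return the warning text from the table for a given AUC and AUC uncertainty interval."""
    # Column of the table: performance level derived from AUC.
    if auc < 0.6:
        level, expectation = "very low", "Expect low fraud detection performance."
    elif auc < 0.8:
        level, expectation = "low", "Expect limited fraud detection performance."
    else:
        level, expectation = None, None

    # Row of the table: variability wording derived from the uncertainty interval.
    # Assumption: exactly 0.1 and exactly 0.3 belong to the middle band.
    if uncertainty_interval > 0.3:
        variability = "greatly"
    elif uncertainty_interval >= 0.1:
        variability = "significantly"
    else:
        variability = None

    if level is None and variability is None:
        return "No Warning"

    parts = []
    if level is not None:
        parts.append(f"is {level}")
    if variability is not None:
        parts.append(f"might vary {variability}")
    message = "The model performance " + " and ".join(parts) + "."
    if expectation is not None:
        message += " " + expectation
    return message


print(cold_start_warning(auc=0.72, uncertainty_interval=0.25))
```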

Train a Cold Start model

Training a Cold Start fraud model is identical to training any other Amazon Fraud Detector model; what differs is the dataset size. You can find sample datasets for Cold Start training in our GitHub repo. To train an Amazon Fraud Detector custom model, you can follow our hands-on tutorial. You can either use the Amazon Fraud Detector console tutorial or the SDK tutorial to build, train, and deploy a fraud detection model.

After your model is trained, you can review performance metrics and then deploy it by changing its status to Active. To learn more about model scores and performance metrics, see Model scores and Model performance metrics. At this point, you can add your model to your detector, add business rules to interpret the risk scores that the model outputs, and make real-time predictions using the GetEventPrediction API.

Fraud ML model continuous improvement and feedback loop

With the Amazon Fraud Detector Cold Start feature, you can quickly bootstrap a fraud detector endpoint and start protecting your business immediately. However, new fraud patterns are constantly emerging, so it’s critical to retrain Cold Start models with newer data to improve the accuracy and effectiveness of the predictions over time.

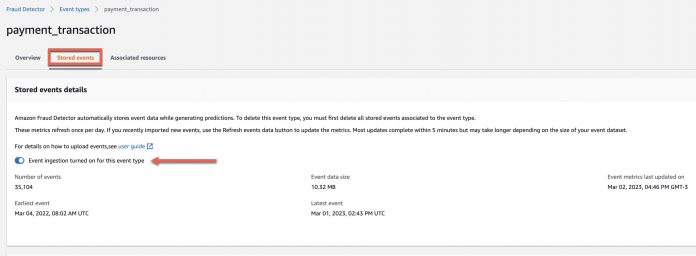

To help you iterate on your models, Amazon Fraud Detector automatically stores all events sent to the service for inference. You can verify that the event ingestion flag is on, or change it, at the event type level, as shown in the following screenshot.

With the stored events feature, you can use the Amazon Fraud Detector SDK to programmatically access an event, review the event metadata and the prediction explanation, and make an informed risk decision. Moreover, you can label the event for future model retraining and continuous model improvement. The following diagram shows an example of this workflow.

In the following code snippets, we demonstrate the process to label a stored event:

To do a real-time fraud prediction on an event, call the GetEventPrediction API:
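
A minimal sketch of such a call with the AWS SDK for Python (boto3) might look like the following. It assumes `client` was created with `boto3.client("frauddetector")`. The helper name `score_event` and all detector, event type, entity, and variable names in the usage comment are hypothetical placeholders rather than values from this post.

```python
from datetime import datetime, timezone


def score_event(client, detector_id, event_type_name, event_id,
                entity_type, entity_id, event_variables, event_timestamp=None):
    """Call GetEventPrediction and return (matched rule outcomes, model scores)."""
    if event_timestamp is None:
        # ISO 8601 timestamp in UTC.
        event_timestamp = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")

    response = client.get_event_prediction(
        detectorId=detector_id,
        eventId=event_id,
        eventTypeName=event_type_name,
        entities=[{"entityType": entity_type, "entityId": entity_id}],
        eventTimestamp=event_timestamp,
        # Event variable values are passed as strings.
        eventVariables={name: str(value) for name, value in event_variables.items()},
    )

    # Flatten the outcomes of every matched rule and the scores of every model.
    outcomes = [outcome
                for rule in response.get("ruleResults", [])
                for outcome in rule.get("outcomes", [])]
    scores = {name: score
              for model in response.get("modelScores", [])
              for name, score in model.get("scores", {}).items()}
    return outcomes, scores


# Usage (requires boto3 and AWS credentials; all names are placeholders):
#   import boto3
#   client = boto3.client("frauddetector")
#   outcomes, scores = score_event(
#       client, "my_detector", "my_event_type", "event-0001",
#       "customer", "customer-42", {"ip_address": "192.0.2.10", "order_price": 150},
#   )
```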

As seen in the response, based on the decision engine rule matched, the event should be sent for manual review by the fraud team. By gathering the prediction explanation metadata, you can gain insights into how each event variable impacted the model’s fraud prediction score.

To collect these insights, we use the get_event_prediction_metadata API:
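
As an illustration, the helper below calls the API through boto3 and ranks event variables by the magnitude of their impact on the score. The helper name `top_variable_impacts` is ours. The response fields it reads (`evaluatedModelVersions`, `evaluations`, `predictionExplanations`, `variableImpactExplanations`) reflect our understanding of the boto3 response shape and should be checked against the current SDK documentation.

```python
def top_variable_impacts(client, event_id, event_type_name, detector_id,
                         detector_version_id, prediction_timestamp, top_n=5):
    """Return up to `top_n` (variable name, log-odds impact) pairs, largest magnitude first."""
    response = client.get_event_prediction_metadata(
        eventId=event_id,
        eventTypeName=event_type_name,
        detectorId=detector_id,
        detectorVersionId=detector_version_id,
        predictionTimestamp=prediction_timestamp,
    )

    impacts = []
    for model in response.get("evaluatedModelVersions", []):
        for evaluation in model.get("evaluations", []):
            explanations = evaluation.get("predictionExplanations", {})
            for item in explanations.get("variableImpactExplanations", []):
                impacts.append((item["eventVariableName"], item["logOddsImpact"]))

    # A positive impact pushes the score toward fraud, a negative one away from it;
    # sorting by absolute value surfaces the most influential variables either way.
    impacts.sort(key=lambda pair: abs(pair[1]), reverse=True)
    return impacts[:top_n]
```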

API Response:

With these insights, the fraud analyst can make an informed risk decision about the event in question and update the event label.

To update the event label, call the update_event_label API:
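
A hedged sketch of the labeling step with boto3 follows. The wrapper name `label_event` is hypothetical, and the label you assign (for example `fraud` or `legit`) must be one of the labels defined on your event type.

```python
from datetime import datetime, timezone


def label_event(client, event_id, event_type_name, label):
    """Assign `label` to a stored event and return the label timestamp that was sent."""
    # The time at which the analyst made the decision, as an ISO 8601 UTC timestamp.
    label_timestamp = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    client.update_event_label(
        eventId=event_id,
        eventTypeName=event_type_name,
        assignedLabel=label,
        labelTimestamp=label_timestamp,
    )
    return label_timestamp
```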

API Response:

As a final step, you can verify if the event label was correctly updated.

To verify the event label, call the get_event API:
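
The verification can be sketched as a small check on the stored event. The function name `event_has_label` is ours, and the `currentLabel` field reflects our reading of the boto3 `get_event` response.

```python
def event_has_label(client, event_id, event_type_name, expected_label):
    """Return True if the stored event currently carries `expected_label`."""
    response = client.get_event(eventId=event_id, eventTypeName=event_type_name)
    event = response.get("event", {})
    return event.get("currentLabel") == expected_label
```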

API Response:

Clean up

To avoid incurring future charges, delete the resources created for the solution.

Conclusion

This post demonstrated how you can quickly bootstrap a real-time fraud prevention system with as few as 100 events using the new Amazon Fraud Detector Cold Start feature. We discussed how you can use stored events to review results, correctly label the events, and retrain your models, improving the effectiveness of fraud prevention measures over time.

Fully managed AWS services such as Amazon Fraud Detector help businesses reduce the time they spend analyzing user behavior to identify fraud on their platforms, so they can focus more on driving business value. To learn more about how Amazon Fraud Detector can help your business, visit Amazon Fraud Detector.

About the Authors

Marcel Pividal is a Global Sr. AI Services Solutions Architect in the World-Wide Specialist Organization. Marcel has more than 20 years of experience solving business problems through technology for FinTechs, payment providers, pharma, and government agencies. His current areas of focus are risk management, fraud prevention, and identity verification.

Julia Xu is a Research Scientist with Amazon Fraud Detector. She is passionate about solving customer challenges using machine learning techniques. In her free time, she enjoys hiking, painting, and exploring new coffee shops.

Guilherme Ricci is a Senior Solutions Architect at AWS, helping startups modernize and optimize the costs of their applications. With over 10 years of experience with companies in the financial sector, he is currently working together with the team of AI/ML specialists.
