Amazon SageMaker is a fully managed service that gives developers and data scientists the ability to quickly build, train, and deploy machine learning (ML) models. With SageMaker, you can deploy your ML models on hosted endpoints and get inference results in real time. You can view the performance metrics for your endpoints in Amazon CloudWatch, automatically scale endpoints based on traffic, and update your models in production without losing availability. SageMaker offers several options for deploying ML models for inference, depending on your use case:

For synchronous predictions that need to be served on the order of milliseconds, use SageMaker real-time inference

For workloads that have idle periods between traffic spurts and can tolerate cold starts, use Serverless Inference

For requests with large payload sizes up to 1 GB, long processing times (up to 15 minutes) and near-real-time latency requirements (seconds to minutes), use SageMaker Asynchronous Inference

To get predictions for an entire dataset, use SageMaker batch transform

Real-time inference is ideal for inference workloads with real-time, interactive, low-latency requirements. You deploy your model to SageMaker hosting services and get an endpoint that can be used for inference. These endpoints are backed by fully managed infrastructure and support auto scaling. You can improve efficiency and reduce cost by combining multiple models into a single endpoint using multi-model endpoints or multi-container endpoints.

There are certain use cases where you want to deploy multiple variants of the same model into production to gauge their performance, measure improvements, or run A/B tests. In such cases, SageMaker multi-variant endpoints are useful because they allow you to deploy multiple production variants of a model to the same SageMaker endpoint.

In this post, we discuss SageMaker multi-variant endpoints and best practices for optimization.

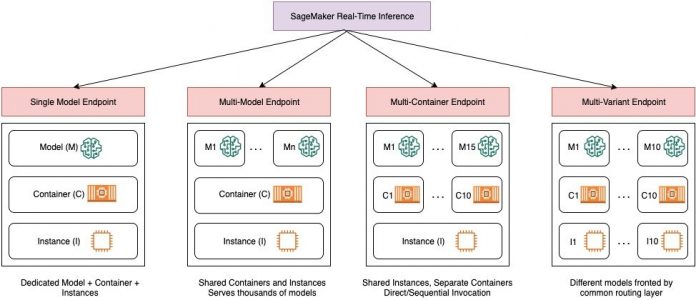

Comparing SageMaker real-time inference options

The following diagram gives a quick overview of the real-time inference options with SageMaker.

A single-model endpoint allows you to deploy one model on a container hosted on dedicated instances or serverless for low latency and high throughput. You can create a model and retrieve a SageMaker supported image for popular frameworks such as TensorFlow, PyTorch, Scikit-learn, and more. If you’re working with a custom framework for your model, you can also bring your own container that installs your dependencies.

SageMaker also supports more advanced options such as multi-model endpoints (MMEs) and multi-container endpoints (MCEs). MMEs are useful when you’re dealing with hundreds to tens of thousands of models and where you don’t need to deploy each model as an individual endpoint. MMEs allow you to host multiple models in a cost-effective, scalable manner within the same endpoint by using a shared serving container hosted on an instance. The underlying infrastructure (container and instance) remains the same, but the models are loaded and unloaded dynamically from a common S3 location, according to usage and the amount of memory available on the endpoint. Your application simply needs to include an API call with the target model to this endpoint to achieve low-latency, high-throughput inference. Instead of paying for a separate endpoint for every single model, you can host many models for the price of a single endpoint.

MCEs enable you to run up to 15 different ML containers on a single endpoint and invoke them independently. You can build these ML containers on different serving stacks (such as ML framework, model server, and algorithm) and run them on the same endpoint for cost savings. You can stitch the containers together in a serial inference pipeline or invoke each container independently. This can be ideal when you have several different ML models that have different traffic patterns and similar resource needs. Examples of when to use MCEs include, but are not limited to, the following:

Hosting models across different frameworks (such as TensorFlow, PyTorch, and Scikit-learn) that don’t have sufficient traffic to saturate the full capacity of an instance

Hosting models from the same framework with different ML algorithms (such as recommendations, forecasting, or classification) and handler functions

Comparisons of similar architectures running on different framework versions (such as TensorFlow 1.x vs. TensorFlow 2.x) for scenarios like A/B testing

SageMaker multi-variant endpoints (MVEs) allow you to test multiple models or model versions behind the same endpoint using production variants. Each production variant identifies an ML model and the resources deployed for hosting the model, such as the serving container and instance.

Overview of SageMaker multi-variant endpoints

In production ML workflows, data scientists and ML engineers refine models through a variety of methods, such as retraining in response to data, model, or concept drift; hyperparameter tuning; feature selection; and framework selection. A/B testing a new model against an old model with production traffic can be an effective final step in validating the new model. In A/B testing, you test different variants of your models and compare how each variant performs relative to the others. You then replace the previous model with the best-performing variant. By using production variants, you can test these ML models and different model versions behind the same endpoint. You can train these ML models using different datasets, algorithms, and ML frameworks; deploy them to different instance types; or use any combination of these options. The load balancer connected to the SageMaker endpoint distributes invocation requests across the production variants. For example, you can distribute traffic between production variants by specifying the traffic distribution for each variant, or you can invoke a specific variant directly for each request.

You can also configure the auto scaling policy to automatically scale your variants in or out based on metrics such as requests per second.

The following diagram illustrates how MVE works in more detail.

Deploying an MVE is straightforward. You define model objects with the image and model data using the create_model construct from the SageMaker Python SDK, and then define the endpoint configuration using production_variant constructs to create production variants, each with its own model and resource requirements (instance type and count). This also lets you test models on different instance types. To deploy, use the endpoint_from_production_variants construct to create the endpoint.

During endpoint creation, SageMaker provisions the hosting instances specified in the endpoint configuration and downloads the model and inference container specified by each production variant to those instances. After the container starts and passes a health check (a ping), SageMaker reports that endpoint creation is complete. See the following code:
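A minimal sketch of this deployment is shown here. It uses the low-level CreateEndpointConfig and CreateEndpoint calls through a boto3-style client rather than the SageMaker Python SDK helpers, and it assumes both models have already been registered with SageMaker. The helper names build_variant and deploy_two_variants, the endpoint and model names, and the ml.m5.xlarge instance type are placeholders chosen for illustration. InitialVariantWeight is the API field that the SDK's initial_weight argument sets.

```python
def build_variant(model_name, variant_name, instance_type="ml.m5.xlarge",
                  instance_count=1, weight=1.0):
    """Build one entry of the ProductionVariants list (hypothetical helper)."""
    return {
        "VariantName": variant_name,
        "ModelName": model_name,
        "InstanceType": instance_type,          # placeholder instance type
        "InitialInstanceCount": instance_count,
        "InitialVariantWeight": weight,         # relative share of traffic
    }


def deploy_two_variants(sm_client, endpoint_name, model_a, model_b):
    """Create an endpoint config with two equally weighted variants, then the endpoint."""
    variants = [
        build_variant(model_a, "Variant1"),
        build_variant(model_b, "Variant2"),
    ]
    config_name = f"{endpoint_name}-config"
    sm_client.create_endpoint_config(
        EndpointConfigName=config_name,
        ProductionVariants=variants,
    )
    sm_client.create_endpoint(
        EndpointName=endpoint_name,
        EndpointConfigName=config_name,
    )
    return variants


# Usage (needs AWS credentials and two existing SageMaker models):
#   import boto3
#   deploy_two_variants(boto3.client("sagemaker"), "my-mve", "model-a", "model-b")
```

Passing the client in as an argument keeps the sketch easy to exercise with a stub before pointing it at a real account.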

In the preceding example, we created two variants, each with its own model (these could also have different instance types and counts). We set an initial_weight of 1 for both variants. Each variant’s share of traffic is its weight divided by the sum of all weights; here the sum is 2, so Variant1 and Variant2 each receive 50% of the requests.
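The weight arithmetic can be sketched in a few lines. The function name traffic_shares is hypothetical; it only illustrates that a variant's share is its weight divided by the sum of all weights, so only the ratios between weights matter.

```python
def traffic_shares(weights):
    """Convert variant weights into the fraction of traffic each variant receives."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one variant needs a positive weight")
    return {name: weight / total for name, weight in weights.items()}


# Equal weights split traffic evenly.
even_split = traffic_shares({"Variant1": 1, "Variant2": 1})

# Weights of 25 and 75 give the same split as weights of 1 and 3.
shifted_split = traffic_shares({"Variant1": 25, "Variant2": 75})
```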

You invoke the endpoint with the standard SageMaker invoke_endpoint call, passing the data as the payload:
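As a rough sketch, the call might look like the following when made through a boto3-style SageMaker Runtime client. The helper name invoke, the text/csv content type, and the endpoint name are assumptions for illustration; the InvokedProductionVariant field in the response reports which variant served the request.

```python
def invoke(runtime_client, endpoint_name, csv_row):
    """Send one CSV row to the endpoint and let SageMaker pick the variant by weight."""
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",   # assumed payload format
        Body=csv_row,
    )
    prediction = response["Body"].read().decode("utf-8")
    # The response names the variant that handled this request.
    return prediction, response.get("InvokedProductionVariant")


# Usage (needs AWS credentials and a running endpoint):
#   import boto3
#   runtime = boto3.client("sagemaker-runtime")
#   prediction, variant = invoke(runtime, "my-mve", "5.1,3.5,1.4,0.2")
```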

SageMaker emits metrics such as Latency and Invocations for each variant in CloudWatch. For a complete list of metrics that SageMaker emits, see Monitor Amazon SageMaker with Amazon CloudWatch. You can query CloudWatch to get the number of invocations per variant, to see how invocations are split across variants by default.
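One way to query these per-variant counts is sketched here, assuming a boto3-style CloudWatch client. The helper name invocations_per_variant and the 60-minute lookback are illustrative choices; the metric is read from the AWS/SageMaker namespace with the EndpointName and VariantName dimensions.

```python
from datetime import datetime, timedelta, timezone


def invocations_per_variant(cw_client, endpoint_name, variant_names, minutes=60):
    """Sum the Invocations metric for each variant over the last `minutes` minutes."""
    end = datetime.now(timezone.utc)
    start = end - timedelta(minutes=minutes)
    totals = {}
    for variant in variant_names:
        stats = cw_client.get_metric_statistics(
            Namespace="AWS/SageMaker",
            MetricName="Invocations",
            Dimensions=[
                {"Name": "EndpointName", "Value": endpoint_name},
                {"Name": "VariantName", "Value": variant},
            ],
            StartTime=start,
            EndTime=end,
            Period=60,              # one datapoint per minute
            Statistics=["Sum"],
        )
        totals[variant] = sum(point["Sum"] for point in stats["Datapoints"])
    return totals


# Usage (needs AWS credentials):
#   import boto3
#   invocations_per_variant(boto3.client("cloudwatch"), "my-mve", ["Variant1", "Variant2"])
```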

To invoke a specific version of the model, specify a variant as the TargetVariant in the call to invoke_endpoint:
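A sketch of a targeted call, again assuming a boto3-style SageMaker Runtime client, follows. The helper name invoke_variant, the content type, and the endpoint and variant names are placeholders. When TargetVariant is set, the request goes to that variant regardless of the configured weights.

```python
def invoke_variant(runtime_client, endpoint_name, variant_name, csv_row):
    """Send one CSV row to a specific production variant, bypassing the weights."""
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        TargetVariant=variant_name,   # route this request to one variant
        ContentType="text/csv",       # assumed payload format
        Body=csv_row,
    )
    return response["Body"].read().decode("utf-8")


# Usage (needs AWS credentials and a running endpoint):
#   import boto3
#   runtime = boto3.client("sagemaker-runtime")
#   invoke_variant(runtime, "my-mve", "Variant1", "5.1,3.5,1.4,0.2")
```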

You can evaluate each production variant’s performance by reviewing metrics such as accuracy, precision, recall, F1 score, and receiver operating characteristic/area under the curve for each variant using Amazon SageMaker Model Monitor. You can then decide to increase traffic to the best model by updating the weights assigned to each variant by calling UpdateEndpointWeightsAndCapacities. This changes the traffic distribution to your production variants without requiring updates to your endpoint. So instead of 50% of the traffic from the initial setup, we shift 75% of the traffic to Variant2 by assigning new weights to each variant using UpdateEndpointWeightsAndCapacities. See the following code:
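The 25%/75% shift described above could be issued roughly as follows, assuming a boto3-style SageMaker client. The helper name shift_traffic and the endpoint name are placeholders. Only the ratio between the weights matters, so 25 and 75 behave the same as 1 and 3.

```python
def shift_traffic(sm_client, endpoint_name, desired_weights):
    """Apply new variant weights without creating a new endpoint configuration."""
    payload = [
        {"VariantName": name, "DesiredWeight": weight}
        for name, weight in desired_weights.items()
    ]
    sm_client.update_endpoint_weights_and_capacities(
        EndpointName=endpoint_name,
        DesiredWeightsAndCapacities=payload,
    )
    return payload


# Usage (needs AWS credentials and a running endpoint):
#   import boto3
#   shift_traffic(boto3.client("sagemaker"), "my-mve", {"Variant1": 25, "Variant2": 75})
```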

When you’re satisfied with a variant’s performance, you can route 100% of the traffic to that variant. For example, you can set the weight for Variant1 to 0 and the weight for Variant2 to 1. SageMaker then sends 100% of all inference requests to Variant2. You can then safely update your endpoint and delete Variant1 from your endpoint. You can also continue testing new models in production by adding new variants to your endpoint. You can also configure these endpoints to scale automatically based on the traffic the endpoints receive.

Advantages of multi-variant endpoints

SageMaker MVEs allow you to do the following:

Deploy and test multiple variants of a model using the same SageMaker endpoint. This is useful for testing variations of a model in production. For example, suppose that you’ve deployed a model into production. You can test a variation of the model by directing a small amount of traffic, say 5%, to the new model.

Evaluate model performance in production without interrupting traffic by monitoring operational metrics for each variant in CloudWatch.

Update models in production without losing any availability. You can modify an endpoint without taking models that are already deployed into production out of service. For example, you can add new model variants, update the ML compute instance configurations of existing model variants, or change the distribution of traffic among model variants. For more information, see UpdateEndpoint and UpdateEndpointWeightsAndCapacities.

Challenges when using multi-variant endpoints

SageMaker MVEs come with the following challenges:

Load testing effort – You need to put in a fair amount of effort and resources for testing and model matrix comparisons for each variant. For an A/B test to be considered successful, you need to perform a statistical analysis of the metrics gathered from the test to determine whether the result is statistically significant. It can be challenging to limit the traffic spent exploring poorly performing variants. You could potentially use the multi-armed bandit optimization technique to avoid sending traffic to experiments that aren’t working and optimize performance as you test. For load testing, you could also explore Amazon SageMaker Inference Recommender to conduct extensive benchmarks based on production requirements for latency and throughput, custom traffic patterns, and instances (up to 10) that you select.

Tight coupling between model variant and endpoint – This can become tricky when models are deployed frequently, because the endpoint enters the Updating status each time a production variant is updated. SageMaker also supports deployment guardrails, which you can use to switch from the current model in production to a new one in a controlled way. This option introduces canary and linear traffic shifting modes so that you have granular control over the shifting of traffic from your current model to the new one during the course of the update. With built-in safeguards such as auto-rollbacks, you can catch issues early and automatically take corrective action before they cause significant production impact.
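For the statistical analysis mentioned under load testing effort, one common choice is a two-proportion z-test. The sketch below is only an illustration, not a method prescribed by SageMaker: it assumes each variant's outcome can be counted as successes out of a number of requests (for example, correct predictions or conversions), and the function name is hypothetical.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Return (z, two-sided p-value) for the difference in success rates, B minus A."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("success rates of exactly 0 or 1 give no variance to test")
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Example: Variant1 succeeds on 100 of 1,000 requests, Variant2 on 150 of 1,000.
z_score, p_value = two_proportion_z_test(100, 1000, 150, 1000)
```

A small p-value (below a threshold chosen before the test, such as 0.05) suggests the difference between variants is unlikely to be noise.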

Best practices for multi-variant endpoints

When hosting models using SageMaker MVEs, consider the following:

SageMaker is great for testing new models because you can easily deploy them into an A/B testing environment and you pay for only what you use. You’re charged per instance-hour consumed for each instance while the endpoint is running. When you’re done with your tests and not using the endpoint or the variants extensively anymore, you should delete it to save cost. You can always recreate it when you need it again because the model is stored in Amazon Simple Storage Service (Amazon S3).

You should choose the instance type and size that best fit each model. SageMaker currently offers ML compute instances in various instance families. An endpoint instance runs continuously for as long as it is in service, so selecting the right instance type can have a significant impact on the total cost and performance of ML models. Load testing is the best practice for determining the appropriate instance type and fleet size, with or without auto scaling, for your live endpoint, so that you avoid over-provisioning and paying for capacity you don’t need.

You can monitor model performance and resource utilization in CloudWatch. You can configure a ProductionVariant to use Application Auto Scaling. To specify the metrics and target values for a scaling policy, you configure a target-tracking scaling policy. You can use either a predefined metric or a custom metric. For more information about policy configuration syntax, see TargetTrackingScalingPolicyConfiguration. For information about configuring automatic scaling, see Automatically Scale Amazon SageMaker Models. To quickly define a target-tracking scaling policy for a variant, you can choose a specific CloudWatch metric and set threshold values. For example, use metric SageMakerVariantInvocationsPerInstance to monitor the average number of times per minute that each instance for a variant is invoked, or use metric CPUUtilization to monitor the sum of work handled by a CPU. The following example uses the SageMakerVariantInvocationsPerInstance predefined metric to adjust the number of variant instances so that each instance has an InvocationsPerInstance metric of 70:
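A sketch of such a policy, assuming a boto3-style Application Auto Scaling client, is shown here. The helper name scale_on_invocations, the instance limits, the policy name, and the cooldown values are illustrative assumptions; only the predefined metric and the target value of 70 come from the description above.

```python
def scale_on_invocations(aas_client, endpoint_name, variant_name,
                         target=70.0, min_instances=1, max_instances=4):
    """Register a variant for auto scaling and attach a target-tracking policy."""
    resource_id = f"endpoint/{endpoint_name}/variant/{variant_name}"
    dimension = "sagemaker:variant:DesiredInstanceCount"

    # The variant must be a scalable target before a policy can be attached.
    aas_client.register_scalable_target(
        ServiceNamespace="sagemaker",
        ResourceId=resource_id,
        ScalableDimension=dimension,
        MinCapacity=min_instances,    # assumed limits
        MaxCapacity=max_instances,
    )

    policy = {
        "TargetValue": target,
        "PredefinedMetricSpecification": {
            "PredefinedMetricType": "SageMakerVariantInvocationsPerInstance"
        },
        "ScaleInCooldown": 600,       # seconds; assumed value
        "ScaleOutCooldown": 300,      # seconds; assumed value
    }
    aas_client.put_scaling_policy(
        PolicyName=f"{variant_name}-invocations-target",
        ServiceNamespace="sagemaker",
        ResourceId=resource_id,
        ScalableDimension=dimension,
        PolicyType="TargetTrackingScaling",
        TargetTrackingScalingPolicyConfiguration=policy,
    )
    return resource_id, policy


# Usage (needs AWS credentials and a running endpoint):
#   import boto3
#   scale_on_invocations(boto3.client("application-autoscaling"), "my-mve", "Variant1")
```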

Changing or deleting model artifacts or changing inference code after deploying a model produces unpredictable results. Before deploying models to production, it’s good practice to debug the inference code (functions such as model_fn, input_fn, predict_fn, and output_fn) in a local development environment, such as a SageMaker notebook instance or a local server, and confirm that the model can be hosted successfully in local mode. If you need to change or delete model artifacts or change inference code, modify the endpoint by providing a new endpoint configuration. After you provide the new endpoint configuration, you can change or delete the model artifacts corresponding to the old endpoint configuration.

You can use SageMaker batch transform to test production variants. Batch transform is ideal to get inferences from large datasets. You can create a separate transform job for each new model variant and use a validation dataset to test. For each transform job, specify a unique model name and location in Amazon S3 for the output file. To analyze the results, use inference pipeline logs and metrics.

Conclusion

SageMaker enables you to easily A/B test ML models in production by running multiple production variants on an endpoint. You can use SageMaker’s capabilities to test models that have been trained using different training datasets, hyperparameters, algorithms, or ML frameworks; how they perform on different instance types; or a combination of all of the above. You can provide the traffic distribution between the variants on an endpoint, and SageMaker splits the inference traffic to the variants based on the specified distribution. Alternatively, if you want to test models for specific customer segments, you can specify the variant that should process an inference request by providing the TargetVariant header, and SageMaker routes the request to the variant that you specified. For more information about A/B testing, see Safely update models in production.

About the authors

Deepali Rajale is an AI/ML Specialist Technical Account Manager at Amazon Web Services. She works with enterprise customers, providing technical guidance on implementing machine learning solutions with best practices. In her spare time, she enjoys hiking, movies, and hanging out with family and friends.

Dhawal Patel is a Principal Machine Learning Architect at AWS. He has worked with organizations ranging from large enterprises to mid-sized startups on problems related to distributed computing and artificial intelligence. He focuses on deep learning, including the NLP and computer vision domains. He helps customers achieve high-performance model inference on SageMaker.

Saurabh Trikande is a Senior Product Manager for Amazon SageMaker Inference. He is passionate about working with customers and is motivated by the goal of democratizing machine learning. He focuses on core challenges related to deploying complex ML applications, multi-tenant ML models, cost optimizations, and making deployment of deep learning models more accessible. In his spare time, Saurabh enjoys hiking, learning about innovative technologies, following TechCrunch and spending time with his family.
