Amazon Aurora PostgreSQL-Compatible Edition and Amazon Relational Database Service (Amazon RDS) for PostgreSQL are managed PostgreSQL solutions that make it easy to set up, operate, and scale a relational database in the cloud. They provide cost-efficient and resizable capacity while automating time-consuming administration tasks such as hardware provisioning, database setup, patching, and backups.

As you migrate your workloads into Aurora and Amazon RDS for PostgreSQL, you may find that a handful of tables within your workloads tend to grow faster than others. This can cause a variety of issues, including increased query run time, query locking issues, longer bulk loads, and longer time to create or recreate indexes.

Limiting table size is the best way to ease this burden. In some cases, you can do this by offloading the data or having a designated data lifecycle where older data can be deleted or archived. Table partitioning, a process in which we split a large table into a series of smaller related tables, is the preferred solution to improve performance when data can’t be archived, offloaded, or deleted.

In this post, we provide a repeatable solution demonstrating how to do the following:

Split large tables into small parent/child tables for performance with minimal downtime

Achieve easier data lifecycle management after the table is partitioned

This post is the third in a series on partitioning for performance using managed Amazon RDS services. The previous entries in this series include:

Designing high-performance time series data tables on Amazon RDS for PostgreSQL

Speed up time series data ingestion by partitioning tables on Amazon RDS for PostgreSQL

Partitioning

In PostgreSQL-based engines, table partitioning requires that a parent table exists such that related child tables can inherit. In previous major versions of PostgreSQL (9.6 and earlier), pg_partman was written to handle the creation and management of these partition related resources (triggers, partitions, and constraints). As of PostgreSQL 10 and higher, native declarative partitioning was added, which is easier to use and manage.

Partitioning allows relational database schemas to scale with customer usage and application growth, without negatively affecting database performance. With partitioning, we accomplish this scaling by inserting data into many small tables (with associated indexes) and limited scopes of data per table. This allows for the querying of smaller sets of data by using WHERE constraints to limit the number of tables or indexes scanned, resulting in much faster query response time despite large amounts of data being held in the partitioned table. Improvements can also be seen in:

Query performance – Sometimes dramatically due to partition pruning

Accomplishing bulk loads and deletes of data faster – Via adding and dropping partitions as need

Improved data lifecycle workflows – By detaching child tables from their parents and reattaching it to a different parent

For more information on PostgreSQL partitioning, see Managing PostgreSQL partitions with the pg_partman extension.

As of this writing, native PostgreSQL partitioning handles table inheritance (table structure, indexes, primary keys, foreign keys, constraints, and so on) efficiently from major version 11 and higher. However, without the use of extensions, the process of creating and managing partitions is still a manual process.

pg_partman is a PostgreSQL extension that automates much of the creation and management of partitioned table structures. It’s a layer on top of PostrgreSQL native partitioning, which allows for more efficient creation and management of table partitions. In earlier versions of PostgreSQL (9.6 and earlier), usage of pg_partman was commonplace to manage the creation of all necessary child tables and trigger functions needed for parent/child table inheritance. With declarative partitioning now a feature in PostgreSQL versions 10 and above, these features are less frequently used. Although, with modern major versions of PostgreSQL, pg_partman still provides other benefits, such as automatic creation and removal of child tables or the handoff of child tables from one parent table to another. This move of child tables to different parent tables can be especially useful for creating data lifecycles that move older data from a production table to an analytics table. The availability of these extensions and capabilities can make a huge impact on database performance across growing datasets, though the question remains: how do we get there?

Migrate from monolithic to partitioned

Switching architectures from a monolithic table structure (one extremely large table) to a partitioned architecture (as discussed in the previous section) can be a simple and straightforward process. At a high level, we complete the following steps:

Create the new partitioned structure (using pg_partman for creation and management).

Migrate existing data into the partitioned table.

Cut over production usage from the source monolithic table to the target partitioned table.

Although this procedure is simple in theory, it’s complicated by situations that database workloads may encounter. A short downtime always exists when performing the cutover from the source table to the target table, but especially busy tables may need to have traffic temporarily blocked in order to obtain the needed table locks to perform the initial data copy (in Step 2). Depending on the size of the table and the locks required, this outage may last several minutes or hours.

Migrate to a partitioned table structure using AWS DMS

This solution applies to Amazon RDS for PostgreSQL, Aurora PostgreSQL, and self-managed PostgreSQL.

In this post, we discuss using AWS Database Migration Service (AWS DMS) to copy data from a non-partitioned table to a partitioned table and enable migration to a partitioned table with minimal downtime. In this proposed solution, we use PostgreSQL 12 on Aurora and AWS DMS.

A key feature of AWS DMS that we use is transformation rules and actions. You use the transformation (rename, prefix, postfix) actions to specify any transformations you want to apply to the selected schema, table, or view. For the purpose of migrating the data, we create a new partitioned table in a new schema and use a transformation rule to transform the source schema to the new destination schema. Although we use AWS DMS transformation rules to accomplish this, in practice we’re simply rerouting data from the source schema to the target schema (such that when the data is written into the target, it becomes partitioned).

If you wish to test the entire demo and don’t have any AWS resources provisioned, you can use the content of the following GitHub repo.

Migrate data from non-partitioned to partitioned with minimal downtime

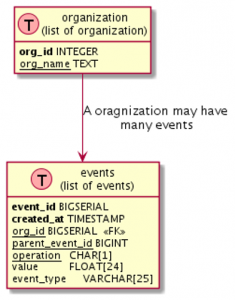

In the scenario outlined in this section, we have a sample database with a schema named data_mart, and two tables. The smaller table, organization, doesn’t need to be partitioned due to its smaller size. The larger table, events, is the table we partition to help with query performance and future scalability. See the following code:

Suppose you’re searching events on created_at and need to delete older events. created_at can be the perfect column to partition the table on. When choosing a column to partition on for your own workloads, you can use common business logic groupings such as organizations or geos for partitioning (and subpartitioning in many cases).

To create partitioned tables and schema for our large table events, complete the following steps:

Take pg_dump of the data_mart.events table with pre-data and post-data sections:

The pre-data section includes data definition items, and the post-data items include definitions of indexes, triggers, rules, and constraints other than validated check constraints.

Edit the preceding script, data_mart.events-pre-schema.sql, to change the schema name from data_mart to data_mart_new.

Update the table definition to add the PARTITION statement:

Create a new schema for the partitioned table and load the modified data_mart.events-pre-schema.sql:

As discussed earlier, we use pg_partman to create a partitioned table, in this case monthly. For more information about pg_partman, see PostgreSQL Partition Manager Extension (pg_partman).

At this point, we assume that pg_partman has been enabled for the given database cluster and that the appropriate CREATE EXTENSION statement has been run.

Use the following statement to make a monthly partition table, partitioned by created_at:

To enable change data capture (CDC) to capture ongoing database deltas (until we cut over to our newly partitioned table), we need to enable logical replication.

Enable logical replication by setting rds.logical_replication=1 for your parameter group (which requires a restart for changes to take effect):

After we create the source and destination schemas, we can create the AWS DMS resources.

Create a right-sized AWS DMS instance.

For best practices, see Best practices for AWS Database Migration Service.

Create source and target database endpoints (in this case, both are the same).

Modify the table mapping rule in the JSON editor; these rules map the source schema to the destination schema:

Create an AWS DMS task that reads from data_mart.events and writes to data_mart_new.events:

Each task has settings that you can configure according to the needs of your database migration. For more information, see Specifying task settings for AWS Database Migration Service tasks. For this post, use the following task setting as a reference.

This task is set up as full load as well as CDC. After the task is finished with the full load, we can see that data is replicated from the source table to the destination table:

Post-migration tasks

Perform the following post-migration tasks:

Create an index and foreign keys on the partitioned table using the file we created in the previous section using pg_dump:

We can now cut over to use the partitioned table.

Stop the application writing to the database.

Confirm all the CDC changes have finished by comparing the count from the source and destination table.

List all the replication slots, then delete them (they’re no longer needed). Be sure to replace <replication-slot-name> with the replication slots you wish to remove.

Disable logical replication (this requires a database restart for changes to take effect):

Assuming the replication slots have been removed prior to this step, the reboot can occur during a regularly scheduled maintenance window without risk of WAL logs accumulating on your primary instance.

Move partitioned tables from data_mart_new to the data_mart schema. Use the following code on GitHub.

Start the application.

Best practices

Prior to beginning the table migration process, make sure all tables to migrate have been vacuumed, so that as much table bloat is removed as possible. This makes sure that the tables can be efficiently read from during the AWS DMS migration between the source and target tables.

For more information on PostgreSQL partitioning general best practices, see Best Practices for Declarative Partitioning.

Conclusion

Migrating from a monolithic table structure to a partitioned structure has clear performance, management, and lifecycle benefits. Larger monolithic tables can cause certain processes (like VACUUM) to not complete successfully if that process is competing with other customer workload processes for the necessary table locks. In other cases, some customer workload queries may compete with others because of locks taken on large, unpartitioned tables.

You can alleviate or solve both of these common issues by moving large monolithic tables into a partitioned architecture. Partitioning can also assist in making data lifecycle processes easier to implement, and reduces their overall impact on other vital workload components.

In this post, we’ve walked through the migration of an existing monolithic table in Aurora PostgreSQL to a partitioned structure, and how to failover to it with minimal downtime.

Finally, we encourage you to download and utilize our Github repository referenced in this post, as it will enable you to quickly test the workflows discussed here, and clean them up when those POC resources are no longer required. If you have questions or suggestions, please leave them in the comments section below.

About the Authors

Peter Celentano is a Specialist Solutions Architect with Amazon Web Services, focusing on managed PostgreSQL. He works with AWS customers to design scalable, secure, performant, and robust database architectures on the cloud.

Chirag Dave is a Senior Database Specialist Solutions Architect with Amazon Web Services. he maintains technical relationship with customers, making recommendations on security, cost, performance, reliability, operational efficiency and best-practice architectures.

Read MoreAWS Database Blog