Customers that need to build resilient applications with the lowest possible recovery time objective (RTO) and recovery point objective (RPO) want to make the best use of AWS global infrastructure to support their resilience goals. Building an application using multiple Availability Zones in a single AWS Region can provide high levels of availability, but you might have business or regulatory requirements that lead you to explore running an application in multiple Regions. Switching to a multi-Region design is a significant shift in architecture and operations, and handling the persistence layer (database) is a challenging part of this shift.

In this four-part series, you’ll learn how to build resilient applications using Amazon DynamoDB global tables as the persistence layer. Part 1 (this post) presents a sample application and discusses the changes in availability as you move to a multi-Region footprint. Part 2 covers the characteristics of DynamoDB in the context of single-Region and multi-Region operation. Part 3 presents a multi-Region pattern for the sample application presented in Part 1. Part 4 concludes with an analysis of the multi-Region pattern with a focus on operations.

Along the way, you’ll see references to several articles from the Amazon Builder’s Library and to sample code on GitHub.

Sample application

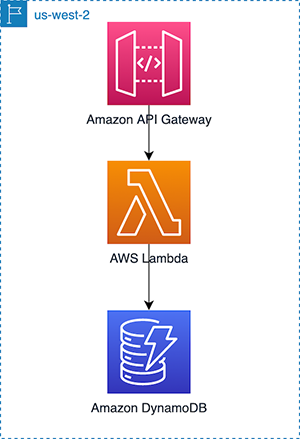

To begin, let’s consider a sample application that uses DynamoDB for persistence, AWS Lambda functions for business logic, and Amazon API Gateway to present the interface to external users, as shown in Figure 1. In this example, you deploy the application in the us-west-2 Region.

The example is a simple order processing application that exposes two methods: a POST method to create an order and a GET method to retrieve an order. To create an order, the application must verify that the customer placing the order exists and that the product exists and is in stock.

The basic schema, shown in Figure 2, is based on the DynamoDB transactions example. It includes three tables: one for customers, one for orders, and one for the product catalog. The partition keys are customer ID, order ID, and product ID, respectively. When creating an order, you confirm that the customer ID is valid and that the item is in stock, mark the item as sold, and finally create the order. When reading an order, you read the order from the order table and the related product details from the product catalog table.
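The create and read flows can be sketched with the low-level DynamoDB client in the AWS SDK for Python (Boto3). This is a minimal sketch under stated assumptions, not the post’s implementation: the table names (`Customers`, `ProductCatalog`, `Orders`), the key attribute names, and a `ProductStatus` attribute with the values `IN_STOCK` and `SOLD` are hypothetical, modeled loosely on the DynamoDB transactions example. The create path only builds the `TransactItems` list, so the customer check, the stock update, and the order insert either all succeed or all fail; the read path issues two `get_item` calls.

```python
from typing import Any, Dict, List, Optional

# Hypothetical table names; the post only says there is one table each for
# customers, orders, and the product catalog.
CUSTOMERS_TABLE = "Customers"
PRODUCTS_TABLE = "ProductCatalog"
ORDERS_TABLE = "Orders"


def create_order_items(order_id: str, customer_id: str, product_id: str) -> List[Dict[str, Any]]:
    """Build the TransactItems list for DynamoDB TransactWriteItems.

    All three actions commit together or not at all.
    """
    return [
        # 1. The customer must exist; this action checks but writes nothing.
        {"ConditionCheck": {
            "TableName": CUSTOMERS_TABLE,
            "Key": {"CustomerId": {"S": customer_id}},
            "ConditionExpression": "attribute_exists(CustomerId)",
        }},
        # 2. The product must be in stock (a missing item also fails this
        #    check); mark it as sold. ProductStatus and its values are assumed.
        {"Update": {
            "TableName": PRODUCTS_TABLE,
            "Key": {"ProductId": {"S": product_id}},
            "ConditionExpression": "ProductStatus = :in_stock",
            "UpdateExpression": "SET ProductStatus = :sold",
            "ExpressionAttributeValues": {
                ":in_stock": {"S": "IN_STOCK"},
                ":sold": {"S": "SOLD"},
            },
        }},
        # 3. Create the order without overwriting an existing order ID.
        {"Put": {
            "TableName": ORDERS_TABLE,
            "Item": {
                "OrderId": {"S": order_id},
                "CustomerId": {"S": customer_id},
                "ProductId": {"S": product_id},
            },
            "ConditionExpression": "attribute_not_exists(OrderId)",
        }},
    ]


def get_order(client: Any, order_id: str) -> Optional[Dict[str, Any]]:
    """Read an order, then its product details, with two GetItem calls.

    `client` is expected to behave like boto3.client("dynamodb").
    """
    order = client.get_item(
        TableName=ORDERS_TABLE, Key={"OrderId": {"S": order_id}}
    ).get("Item")
    if order is None:
        return None
    product = client.get_item(
        TableName=PRODUCTS_TABLE, Key={"ProductId": order["ProductId"]}
    ).get("Item")
    return {"order": order, "product": product}
```

Against real tables, you would pass the list to `boto3.client("dynamodb", region_name="us-west-2").transact_write_items(TransactItems=...)`. If any condition fails, DynamoDB cancels the whole transaction and the call fails with a `TransactionCanceledException` error.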

Zonal, Regional, and global services

Before discussing the theoretical availability of the sample application, let’s review the differences between zonal, Regional, and global services.

Some AWS services are zonal, meaning that the resources they provide are scoped to a single Availability Zone. Amazon Elastic Compute Cloud (Amazon EC2) is an example: an EC2 instance runs in a specific zone. If you use an EC2 instance in your application and there’s an availability event in that zone, your application might be impacted unless you have set up resources in other zones. AWS services such as Elastic Load Balancing, and features such as Auto Scaling groups, make it easier for an application to span multiple zones and tolerate an availability event in a single zone. Note also that an availability event rarely means that an entire zone has failed; it can include more routine issues, such as elevated error rates in a service.

Other AWS services are Regional, meaning that the resources provided by that service are distributed within a Region in such a way that they can continue to operate in the event of a single Availability Zone failure. Services that offer persistence replicate data across multiple zones.

DynamoDB, for example, replicates data across three Availability Zones in a Region. If you use Regional services exclusively in your application, you don’t have to design your own architecture around single-zone failures; the services handle them for you.

A few AWS services are global. For example, if you create a DNS record in Amazon Route 53, the service automatically makes that record available globally. Route 53 resources aren’t scoped to a single Region.

Calculating availability

It’s important to understand that theoretical availability calculations make assumptions about the independence of failure events, and software or operational errors often violate those assumptions. It’s also important to practice static stability, which requires that the application keep working the same way during a failure event as during normal operations. For example, if the application runs in three Availability Zones, you might split the underlying capacity evenly across all three. If one of the zones has a failure event, you could plan to increase capacity in the other two to compensate. But that plan assumes that the underlying control plane APIs are working normally and can provision the additional capacity. A more resilient design provisions 50 percent of the required capacity in each zone (150 percent in total), so that any two zones can carry the full load without provisioning anything during the event, although the cost increases.
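The 50 percent figure generalizes: if you spread load evenly across `n` zones and want to survive the loss of one zone without provisioning anything, each zone needs `1 / (n - 1)` of peak capacity. The sketch below assumes capacity is measured as a fraction of peak load and that surviving zones share the load evenly; the function names are illustrative only.

```python
def capacity_per_zone(zones: int, failed_zones: int = 1) -> float:
    """Fraction of peak capacity to pre-provision in each zone so that the
    zones that survive `failed_zones` losses still carry 100 percent of peak
    load without provisioning anything during the event."""
    surviving = zones - failed_zones
    if surviving < 1:
        raise ValueError("at least one zone must survive")
    return 1.0 / surviving


def total_capacity(zones: int, failed_zones: int = 1) -> float:
    """Total capacity provisioned across all zones, as a fraction of peak."""
    return zones * capacity_per_zone(zones, failed_zones)


per_zone = capacity_per_zone(3)  # 0.5: 50 percent of peak in each zone
total = total_capacity(3)        # 1.5: 150 percent of peak overall
```

Adding zones lowers the overhead (four zones need one third of peak each), which is one reason static stability is easier to afford when an application already spans several zones.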

In the rest of this post, I calculate a theoretical availability level that considers only the AWS services used by the sample application. Many AWS services offer availability service level agreements (SLAs). An SLA isn’t a guarantee of availability; it defines the conditions under which you become eligible for service credits if a service doesn’t meet its SLA.

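To begin with the single-Region case: DynamoDB offers a 99.99 percent SLA for standard tables, and both API Gateway and Lambda offer a 99.95 percent SLA. The probability that the AWS services your application uses meet their SLA in a single Region is as follows:

$$P_{\text{single}} = P_{\text{DynamoDB}} \times P_{\text{API Gateway}} \times P_{\text{Lambda}} = 0.9999 \times 0.9995 \times 0.9995 \approx 0.9989 \; (99.89\%)$$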

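For the sake of comparison, assume you use Amazon EC2 for compute instead of Lambda, and you run it in a single Availability Zone. In this case, the Amazon EC2 SLA is 99.5 percent, and the application availability is as follows:

$$P_{\text{single-AZ}} = P_{\text{DynamoDB}} \times P_{\text{API Gateway}} \times P_{\text{EC2}} = 0.9999 \times 0.9995 \times 0.995 \approx 0.9944 \; (99.44\%)$$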
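Both single-Region figures come from multiplying the SLAs of every service on the request path, which is only valid under the independence assumption discussed earlier. A minimal sketch of that serial composition, using the SLA values quoted in this post (the constant and function names are illustrative):

```python
from math import prod


def serial_availability(*slas: float) -> float:
    """Probability that every service in a request path meets its SLA,
    assuming failures are independent (the post's simplifying assumption)."""
    return prod(slas)


DYNAMODB = 0.9999        # standard (single-Region) tables
API_GATEWAY = 0.9995
LAMBDA = 0.9995
EC2_SINGLE_AZ = 0.995

regional = serial_availability(DYNAMODB, API_GATEWAY, LAMBDA)
single_az = serial_availability(DYNAMODB, API_GATEWAY, EC2_SINGLE_AZ)
print(f"Regional services: {regional:.2%}")   # 99.89%
print(f"Single-AZ EC2:     {single_az:.2%}")  # 99.44%
```

Because the factors multiply, the weakest link dominates: swapping one 99.95 percent service for a 99.5 percent one costs far more than DynamoDB’s extra 9 gains.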

By using Regional services instead of resources in a single Availability Zone, the application’s composite availability increases from about 99.44 percent to about 99.89 percent, or from roughly two 9s to three 9s. What happens if you switch to DynamoDB global tables and run the application in more than one Region? The DynamoDB SLA for global tables is five 9s (99.999 percent). Assume you use Route 53 to route user traffic to the closest Region; Route 53 has a 100 percent SLA.
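One way to send users to a nearby Region is Route 53 latency-based routing, which answers each DNS query with the record for the Region that offers the lowest measured latency; geolocation and geoproximity routing are alternatives. The sketch below only builds a `ChangeBatch` for `change_resource_record_sets`, with one CNAME record per Region. The domain `api.example.com`, the regional target hostnames, and the 60-second TTL are hypothetical; a production setup would more likely use alias records and health checks.

```python
from typing import Any, Dict, List


def latency_record_changes(name: str, regional_targets: Dict[str, str],
                           ttl: int = 60) -> Dict[str, Any]:
    """Build a Route 53 ChangeBatch with one latency-based CNAME per Region."""
    changes: List[Dict[str, Any]] = []
    for region, target in sorted(regional_targets.items()):
        changes.append({
            "Action": "UPSERT",
            "ResourceRecordSet": {
                "Name": name,
                "Type": "CNAME",
                # Must be unique among records that share this name and type.
                "SetIdentifier": region,
                # Setting Region is what makes this a latency-based record.
                "Region": region,
                "TTL": ttl,
                "ResourceRecords": [{"Value": target}],
            },
        })
    return {"Comment": "Latency-based routing across Regions", "Changes": changes}


# Hypothetical hostnames for the regional API endpoints.
batch = latency_record_changes(
    "api.example.com",
    {"us-west-2": "api-usw2.example.com", "us-east-1": "api-use1.example.com"},
)
```

To apply it, you would call `boto3.client("route53").change_resource_record_sets(HostedZoneId=..., ChangeBatch=batch)` with your own hosted zone ID.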

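Now, when you calculate a composite availability level for your application, the probability that the AWS services it uses are meeting their SLA is as follows, where $P_{\ge 1}$ is the probability that API Gateway and Lambda are both meeting their SLA in at least one Region:

$$P_{\text{multi}} = P_{\text{Route 53}} \times P_{\text{global tables}} \times P_{\ge 1}$$

Note that these joint probability calculations assume that these three probabilities are independent. Because each Region is self-contained, that’s a reasonable assumption from the AWS perspective. Again, be aware that software or operational faults are likely to violate these assumptions.

What is the probability that the AWS services you use in at least one Region are meeting their SLA? Let’s start by defining the probability that both API Gateway and Lambda are meeting their SLA in a single Region:

$$P_R = P_{\text{API Gateway}} \times P_{\text{Lambda}} = 0.9995 \times 0.9995 = 0.99900025$$

If you have two Regions, at least one Region is meeting its SLA unless both Regions miss it at the same time:

$$P_{\ge 1} = 1 - (1 - P_R)^2 = 1 - (0.00099975)^2 \approx 0.999999$$

Therefore, the probability that your application can use the AWS services it needs with two Regions is as follows:

$$P_{\text{multi}} = 1 \times 0.99999 \times 0.999999 \approx 0.999989 \; (99.9989\%)$$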

Following the same logic, the probability with three Regions is approximately 99.999 percent. At that point, the result is capped by the 99.999 percent SLA for global tables, so each additional Region adds very little. It’s reasonable to conclude that you can get very close to five 9s with two Regions, and even closer with three. In practice, the choice of which Regions to use depends on resilience, cost, and performance for a geographically distributed user base. The multi-Region pattern covered in Part 3 of this series can be extended to more than two Regions.
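The two-Region and three-Region numbers follow from one formula: the compute tier in each Region is a serial chain of API Gateway and Lambda, and the Regions are redundant copies of that chain. The sketch below generalizes to `n` Regions under the same independence assumption, using the SLA values from this post as defaults; the function names are illustrative.

```python
def at_least_one(p_region: float, regions: int) -> float:
    """Probability that at least one of `regions` independent Regions is up:
    one minus the probability that every Region is down at the same time."""
    return 1.0 - (1.0 - p_region) ** regions


def multi_region_availability(regions: int,
                              route53: float = 1.0,
                              global_tables: float = 0.99999,
                              api_gateway: float = 0.9995,
                              lambda_sla: float = 0.9995) -> float:
    """Composite probability for the multi-Region design, assuming independence."""
    p_region = api_gateway * lambda_sla  # API Gateway and Lambda both up in one Region
    return route53 * global_tables * at_least_one(p_region, regions)


for n in (1, 2, 3):
    print(f"{n} Region(s): {multi_region_availability(n):.4%}")
```

Because `route53 * global_tables` multiplies every result, 0.99999 is an upper bound no matter how many Regions you add; beyond two or three Regions, the extra compute redundancy no longer moves the number.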

The Amazon Builder’s Library article Minimizing correlated failures in distributed systems provides an in-depth analysis of how to reduce the types of correlated failures that impact even multi-Region designs. Using Regional services instead of a single Availability Zone helps protect against infrastructure issues in a single zone, but the article also discusses how to reduce correlated failures that don’t originate in infrastructure. You’ll see this topic again when we look at deployment pipelines in Part 4.

Conclusion

In this post, you learned about the differences between zonal, Regional, and global services, and how they impact availability for a simple example application. In Part 2 of this series, you’ll learn about some important characteristics of DynamoDB and how they relate to multi-Region use.

Special thanks to Todd Moore, Parker Bradshaw and Kurt Tometich who contributed to this post.

About the Author

Randy DeFauw is a Senior Principal Solutions Architect at AWS. He holds an MSEE from the University of Michigan, where he worked on computer vision for autonomous vehicles. He also holds an MBA from Colorado State University. Randy has held a variety of positions in the technology space, ranging from software engineering to product management. He entered the Big Data space in 2013 and continues to explore that area. He is actively working on projects in the ML space and has presented at numerous conferences, including Strata and GlueCon.

AWS Database Blog