Today’s enterprise applications are often assembled across distributed environments. This includes the integration of services across multi-cloud, multi-SaaS and on-premises environments. While the approach has the advantage of enabling enterprises to choose the best service available to support their applications, it adds the complexity of delivering services across heterogeneous environments. To solve this, Cloud Load Balancing supports an open cloud strategy, which includes:

Supporting universal traffic management policies across heterogeneous environments by leveraging open source and open standards

Enabling a global front-end so applications can leverage a common set of policies and security postures

Providing tools that give your users the highest possible performance and reliability

Universal traffic management with open source and open standards

Kubernetes is a great solution for managing containers across environments. We believe that traffic management policies should also be supported across environments. Cloud Load Balancing creates homogeneous traffic policies across highly distributed, heterogeneous environments in two ways. It supports standards-based traffic management in a fully managed solution, and it allows open source Envoy Proxy sidecars to be used on-premises or in a multi-cloud environment with the same traffic management as our fully managed Cloud Load Balancers.

As enterprises start modernizing services and refactoring monolithic applications, they require solutions that can provide consistent traffic management across distributed systems at scale. But organizations want to invest their time and resources in innovating and building new applications, not in the infrastructure and networking required to deploy and manage these services. Envoy is an open source, high-performance proxy that runs alongside the application to deliver common platform-agnostic networking capabilities, including:

New load balancing algorithms (e.g., round robin, ring hash, least connections)

Additional header transformation options

Additional backend session affinity options

Cross-origin resource sharing (CORS)
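To make the load balancing algorithms named in the list above more concrete, here is a minimal Python sketch of round robin, least connections, and ring hash. It is an illustration under stated assumptions, not Envoy's implementation: the class names, the `pick` and `release` methods, and the `points_per_backend` parameter are all hypothetical.

```python
import bisect
import hashlib
import itertools


def _hash(key: str) -> int:
    """Stable 64-bit hash (Python's built-in hash() is randomized per process)."""
    return int.from_bytes(hashlib.sha256(key.encode()).digest()[:8], "big")


class RoundRobin:
    """Hand out backends in a fixed rotation."""

    def __init__(self, backends):
        self._cycle = itertools.cycle(list(backends))

    def pick(self):
        return next(self._cycle)


class LeastConnections:
    """Pick the backend with the fewest in-flight connections."""

    def __init__(self, backends):
        self.active = {b: 0 for b in backends}

    def pick(self):
        # Ties go to the backend listed first.
        backend = min(self.active, key=self.active.get)
        self.active[backend] += 1
        return backend

    def release(self, backend):
        # Call when a connection to this backend closes.
        self.active[backend] -= 1


class RingHash:
    """Consistent hashing: each backend owns several points on a hash ring."""

    def __init__(self, backends, points_per_backend=100):
        self._ring = sorted(
            (_hash(f"{b}#{i}"), b)
            for b in backends
            for i in range(points_per_backend)
        )
        self._keys = [h for h, _ in self._ring]

    def pick(self, request_key: str):
        # Walk clockwise to the first ring point after the key's hash.
        idx = bisect.bisect_right(self._keys, _hash(request_key)) % len(self._ring)
        return self._ring[idx][1]
```

Round robin and least connections spread load without looking at the request, while ring hash sends the same key (for example a user ID or a header value) to the same backend, and removing one backend only remaps the keys that backend owned.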

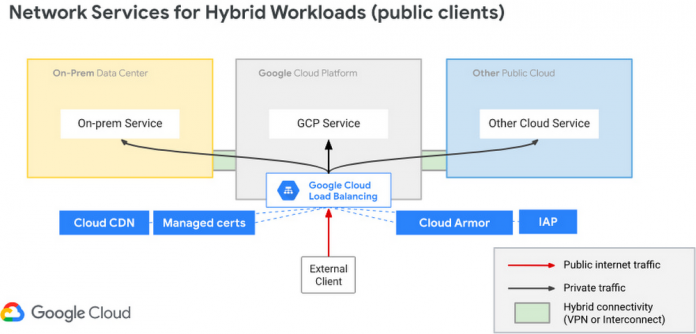

Hybrid Load Balancing across multi-cloud and private clouds

Over the years, Google has deployed load balancers across 173+ edge PoP locations, delivering customer applications at massive scale on Google infrastructure. Now Google Cloud has introduced Hybrid Load Balancing, extending our load balancing capabilities beyond Google’s network to on-premises private clouds and multi-cloud solutions. This allows our customers to migrate applications to the cloud iteratively, or to build hybrid applications assembled from services running across heterogeneous environments.

Supporting modern application delivery with HTTP/3 and QUIC

Cloud Load Balancing is a fully distributed load balancing solution that balances user traffic (HTTP(S), HTTP/2, HTTP/3, gRPC, TCP/SSL, UDP, and QUIC) across multiple backends to avoid congestion, reduce latency, increase security, and reduce costs. It is built on the same frontend-serving infrastructure that powers Google services, supporting millions of queries per second with consistently high performance and low latency.

To serve massive amounts of traffic, Google built the first scaled-out software-defined load balancer, Maglev, which has been serving global traffic since 2008. It has sustained the rapid global growth of Google services, and it also provides network load balancing functions for Google Cloud Platform customers. To accommodate ever-increasing traffic, Maglev is specifically optimized for packet processing performance with Linux Kernel Offload. Maglev is also equipped with consistent hashing and connection tracking features to minimize the negative impact of unforeseen faults and failures on connection-oriented protocols.
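To show how consistent hashing and connection tracking fit together, here is a minimal Python sketch modeled on the lookup-table scheme described in the published Maglev paper. It is a simplified illustration, not Google's production code: the function and class names, the SHA-256 based hash, and the small default table size are assumptions made for this example.

```python
import hashlib


def _h(name: str, seed: str) -> int:
    """Stable 64-bit hash; the seed gives independent hash functions."""
    return int.from_bytes(hashlib.sha256((seed + name).encode()).digest()[:8], "big")


def build_lookup_table(backends, table_size=251):
    """Fill a table of backends; table_size must be prime and >= len(backends)."""
    if not backends:
        raise ValueError("at least one backend is required")
    m = table_size
    # Each backend gets its own permutation of the table slots,
    # defined by an offset and a skip value.
    offsets = [_h(b, "offset") % m for b in backends]
    skips = [_h(b, "skip") % (m - 1) + 1 for b in backends]
    next_idx = [0] * len(backends)
    table = [None] * m
    filled = 0
    while True:
        # Backends take turns claiming their next preferred free slot,
        # so every backend ends up with a nearly equal share.
        for i, backend in enumerate(backends):
            slot = (offsets[i] + next_idx[i] * skips[i]) % m
            while table[slot] is not None:
                next_idx[i] += 1
                slot = (offsets[i] + next_idx[i] * skips[i]) % m
            table[slot] = backend
            next_idx[i] += 1
            filled += 1
            if filled == m:
                return table


class Balancer:
    """Connection tracking first, consistent hashing as the fallback."""

    def __init__(self, backends, table_size=251):
        self.table = build_lookup_table(backends, table_size)
        self.connections = {}  # 5-tuple -> backend chosen for that flow

    def pick(self, five_tuple):
        if five_tuple in self.connections:
            return self.connections[five_tuple]
        key = "|".join(map(str, five_tuple))
        backend = self.table[_h(key, "flow") % len(self.table)]
        self.connections[five_tuple] = backend
        return backend
```

The connection table keeps an existing flow pinned to its backend even if the lookup table is rebuilt, and the hash-based table means a different load balancer instance that has never seen the flow will usually still pick the same backend.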

Another key enabler of this global scale is that our Cloud Load Balancers are built on top of QUIC (RFC 9000), a protocol developed from the original Google QUIC (gQUIC). HTTP/3 is supported between the External HTTP(S) Load Balancer, Cloud CDN, and end clients. Once it is enabled, customers typically see dramatic improvements in performance and throughput.

Google Cloud already supports HTTP/3 in Cloud Load Balancing. To use HTTP/3 for your applications, you can enable it on your external HTTPS load balancers with a single click in the Google Cloud Console, or by using the gcloud command-line tool in the Google Cloud SDK.

If your service is sensitive to latency, QUIC will make it faster because it establishes connections with reduced handshakes. When a web client uses TCP and TLS, it requires two to three round trips with a server to establish a secure connection before the browser can send a request. With QUIC, if a client has connected with a given server before, it can start sending data without any round trips, so your web pages will load faster.
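A quick back-of-the-envelope calculation makes the handshake savings concrete. The sketch below assumes a 50 ms round-trip time, which is an illustrative value and not a measurement, and it counts only the connection setup delay before the first request can be sent.

```python
def time_to_first_request_ms(rtt_ms: float, handshake_round_trips: int) -> float:
    """Setup delay before the client can send its first request."""
    return handshake_round_trips * rtt_ms


RTT_MS = 50.0  # assumed client-to-server round-trip time

scenarios = {
    "TCP + TLS (2 round trips)": 2,
    "TCP + TLS (3 round trips)": 3,
    "QUIC, returning client (0 round trips)": 0,
}

for label, trips in scenarios.items():
    delay = time_to_first_request_ms(RTT_MS, trips)
    print(f"{label}: {delay:.0f} ms before the first request")
```

Under these assumptions, a returning QUIC client saves 100 to 150 ms on every new connection, and the saving grows in proportion to the round-trip time, so users far from the server benefit the most.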

QUIC has advantages over legacy TCP as follows.

Summary

Since 2008, Google has been an innovator in software-defined networking, supporting applications running at massive scale. Google Cloud Load Balancers support HTTP/3 and QUIC as a next-generation web transport protocol, which significantly improves customer traffic latency. Google Load Balancers have also incorporated the Envoy proxy as a foundational technology, providing our customers with advanced traffic management that’s compatible with the open source Envoy ecosystem. This gives our users the choice to combine Google’s fully managed Cloud Load Balancers with open source Envoy proxies to enable consistent traffic management capabilities across a multi-cloud distributed environment. And with Hybrid Load Balancing, customers can leverage our 173+ worldwide PoPs to seamlessly manage traffic across Google Cloud, on-premises environments, and other cloud providers.

Google Cloud Load Balancers include all these capabilities natively. And when used together, they support globally-scaled applications that run seamlessly across the heterogeneous environments many enterprises deploy today.
