Sorting is one of the most fundamental operations in databases, and optimizing this process is vital to the performance of applications. Sorting involves arranging records in a database table into some meaningful order to make it easier to understand, analyze, and visualize the data. For example, you might want to sort orders by their delivery date and find the fastest delivered item, or you might want to find the top 10 most expensive orders, with the most expensive at the top of the list. Sorting could be done in ascending or descending order.

Most databases involve heavy sorting operations before the data is analyzed, visualized, and presented to end-users. Often, depending on the complexity of the query and the volume of data to be sorted, it not only turns out to be highly expensive operation, but capable enough to bring chaos in a production environment. It’s critical not just to tune resources required for sorting, but also do so sensibly, so you can achieve the desired result by sorting fewer rows from a table instead of having to scan the whole table.

Tuning resources required to process a sort is as important as tuning a query in general. You must carefully review, test, and tune queries in a production-like environment, and accurately estimate resources required for executing the query for its successful journey in production.

Performance of a query degrades when it’s forced to sort the data on physical storage (disk instead of memory).

In this post, I discuss how and where to identify queries that are suboptimal, such as ones that require sorting on disk, and how to use various optimization techniques to improve performance.

Sorting in PostgreSQL

In PostgreSQL, like with any relational database platform, after a query has produced a result set by processing the SELECT, it can optionally be sorted. If sorting isn’t chosen, the rows are returned in an unspecified order. The actual order in that case depends on the scan and JOIN plan types and the order the rows are physically stored, but it shouldn’t be relied on. A particular output ordering can only be guaranteed if the sort step is explicitly chosen. The ORDER BY clause specifies this explicit sort order:

The sort expressions can be any expression that are valid in the query’s column list. An example is as follows:

Apart from ORDER BY, sorting operations are also used for DISTINCT, GROUP BY, MERGE JOINs, and many other scenarios internally by PostgreSQL’s query planner.

Where sorting happens in PostgreSQL

In most relational database platforms, sorting requires a temporary work area to produce the actual result. In Amazon Relational Database Service (Amazon RDs) for PostgreSQL and Amazon Aurora PostgreSQL-Compatible Edition, the parameter that dictates how much of memory can be allocated for a sort is work_mem. The default value is 4 MB, which is good enough for most queries. If sorting requires more than the allocated work_mem, it leads to physical I/O operation, namely, having to write to temporary files on disk, which is expensive and degrades the performance of the query.

Apart from helping sorts, work_mem is also used for various other operations. For instance, it helps in building a hash table, which is produced internally by an internal join method called a hash join, and JOINs are one of the most common reasons for using work_mem. For the sake of this post, let’s limit the discussion only to sorting.

The maximum configurable value for work_mem is approximately 2 TB, and the minimum is 64 kB. The setting itself doesn’t validate currently available memory on the host, so even for a db.t3.micro instance, you can set a value that is more than what is supported by the RDS instance type. If PostgreSQL needs to allocate more memory than what’s available on the host based on the setting, it can encounter an out of memory error or hang indefinitely in some scenarios.

Let’s dive in.

Identify queries that are lacking memory for sorting

Before I proceed, it’s also important to know the facts of my testing environment, like the instance type and volume of data this demo is being conducted against. We don’t want resource limitations of the underlying host to influence the performance of our demos because our sole focus is to tune the sort performance.

For demonstration purposes, I’m using a simple query SELECT * FROM t2 ORDER BY name; to showcase improvement in performance before and after. In a real world use case, queries get extremely complicated.

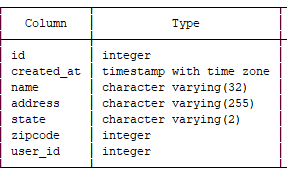

The following table t2 has approximately 13 million records with the following structure.

I used the instance type db.r5.large. I ran the ANALYZE command on the table to ensure table’s statistics for the query planner are up to date.

Log queries

When a query spills to disk, it produces temporary files. You can log this action on Amazon RDS for PostgreSQL and Aurora PostgreSQL by enabling the log_temp_files parameter, configured in KB. When enabled, a log entry is recorded for each temporary file created from a sort that spills to disk. The details are as follows:

A value of 0 logs all temporary files and size of each file.

When a value greater than 0 is set, it logs files greater than or equal to that specified setting. For example, if you set the value to 65536, PostgreSQL logs temporary files that are equal to or greater than 64 MB.

The default setting is -1, which disables logging.

Amazon RDS requires a custom parameter group to modify parameters. For Aurora PostgreSQL, we have to create a new cluster parameter group with the desired value for log_temp_files and associate it with the cluster. For Amazon RDS for PostgreSQL, we have to create a new instance parameter group with the desired value for log_temp_files and associate it with the RDS instance. Associating a new parameter group always requires a reboot of the RDS instance. In a multi-instance Aurora setup, the writer instance needs to be rebooted for the new parameter group or static parameters to take effect. If there is already an active custom parameter group, modifying this parameter takes immediate effect because it’s a dynamic parameter.

For more information, see Working with DB parameter groups for Amazon RDS for PostgreSQL, and Working with DB parameter groups and DB cluster parameter groups for Aurora PostgreSQL

Where to find logged queries

The parameter has been configured, now what?

Let’s assume that we ran the following query that sorted all the rows in the table, which resulted in the creation of temporary files that satisfy the log_temp_files setting:

Let’s go to the PostgreSQL log file and check entries during the query’s execution time. For both the Amazon RDS for PostgreSQL and Aurora PostgreSQL consoles, the log file is located on the Logs & events tab under the Logs section of the instance’s details.

From the contents of the following log file, we find information about temp files. The query created three temp files with total size around 1.9 GB. You might be wondering why there are multiple temporary files; this is due to the parallel query feature of PostgreSQL. In Amazon RDS for PostgreSQL and Aurora PostgreSQL, when the query planner determines that a parallel query is the fastest execution path for a particular query, it creates a query plan with multiple workers and parallel coordination. The number of background workers that the planner considers using is limited to at most max_parallel_workers_per_gather; the default value is two workers.

We found a query with a tuning opportunity to optimize sort performance, now what? We can now estimate the work_mem required for sorting.

Estimate work_mem required for sorting

So far, we have learned two things:

Queries violating our log_temp_files setting

Number of temporary files created by the query and size of each temp file

The size gives us a close estimate of how much of work_mem we should allocate to a specific query, but it’s not exactly right.

How the query planner works

When the query planner finds it has all the work_mem it needs to sort the data in the memory, it uses a quicksort algorithm, which is far more efficient and fast. However, when it can’t find the work_mem needed, it uses an external merge Disk algorithm, wherein sorting can be split between disk and memory with a merge in the end. When you limit the number of rows in the output using the LIMIT keyword, the query planner uses the heapsort algorithm. Because the algorithm used between writing to disk and memory is different, there is always extra overhead by the allocation of buffers, so the total work_mem required to perform the sort in memory is always greater than the size of a temporary file created by the query’s worker process.

To dive deep and find how PostgreSQL allocates memory for sorting, and learn about different algorithms used while sorting, visit the source code tuplestore.c and logtape.c on GitHub.

Now, let’s go further and discuss how we find the work_mem required for the specific query.

Prerequisites

We can review the execution plan and the combination of parameters at the session level in order to estimate the work_mem required by the query for the sorting in memory:

log_temp_files – Even though we have this setting configured at the instance level, we can still control it at the session level. The setting at the session level overrides the instance setting.

client_min_messages – Controls the logging information sent to the client. The default setting is notice.

trace_sort – Writes information about resource consumption during sort operations. The default setting is off.

Let’s turn trace_sort on, set client_min_messages to log, and set log_temp_files to 64 MB:

Analyze the logged query

Let’s run EXPLAIN on the query and find what it costs to execute the query:

However, a simple EXPLAIN only helps us with the information of the cost to execute based on the state of planner statistics. If statistics are stale, this could lead to incorrect analysis of the execution plan. To check the accuracy of the planner’s estimates and detailed information of sorting, I/O, and various nodes in the plan, options such as ANALYZE and BUFFERS are helpful.

The ANALYZE option provides more realistic information of cost, time taken to plan the query’s execution, actual execution time of the query, and other statistics that drive execution of the query. The BUFFERS option includes the information about the following:

The number of shared blocks hit, read, dirtied, and written

The number of local blocks hit, read, dirtied, and written

The number of temp blocks read and written

A hit means that physical I/O (read) is avoided because the block is already found in the cache. Shared blocks contain data from regular tables and indexes, local blocks contain data from temporary tables and indexes, and temp blocks contain short-term working data used in sorts, hashes, materialize plan nodes, and similar cases.

Let’s add the (analyze,buffers) option to the EXPLAIN to our query. It then logs a lot of information. However, what matters to us is the following piece of information highlighted in red, logged at the end of the EXPLAIN output:

Let’s pay attention to the information highlighted in green. Due to the lack of memory for sorting and default parallel query setting, it has resulted in three temporary files and required disk area worth around 1.9 GB for sorting. The total cost is 5132787, whereas the cost alone for sorting is 3813010.87, which is about 75% of the total cost. The total estimated time to execute the query is around 53 seconds (53417.000 milliseconds).

The cost is measured in arbitrary units determined by the planner’s cost parameters; the traditional practice is to measure the costs in units of disk page fetches.

Improve the query with the estimated work_mem

Now we know we have an estimated storage of 1.9 GB spilling to disk for sorting. Remember, if this needs to be translated to in-memory sorting, we require more work_mem than 1.9 GB due to additional overhead involved in buffering. One way to estimate the requirement of work_mem is by multiplying the width and number of plan rows that are sorted, found in the execution plan. Width is the estimated size in bytes of the returned rows.

From our execution plan, width is 354 and the number of plan rows are 11,425,418. If we multiply 11,425,418 and 354, we get 4,044,597,972 bytes, which is around 3.8 GB. With the use of parallel processing, this 3.8 GB is to be split between three processes: one parent process and two child (worker) processes. If we divide 3.8 GB by 3, we get approximately 1.26 GB per process. With the additional overhead we discussed earlier, let’s set it roughly to 1.4 GB and test our query:

We have a much improved execution plan, with execution time down from approximately 53 seconds to 19 seconds. The cost to execute the query is also down from 5132787.62 to 2321055.62, and the cost to process the sort is down from 3813010.87 to 1001278.87. Sorting in memory has not only helped reduce the time to execute the query, but more importantly reduced the cost to execute it, which means at the expense of fewer resources.

With Amazon RDS Enhanced Monitoring, we can monitor the memory usage. In the following graphs, we can see active memory used by the query and at the same time, drop in free memory was also observed. I reran the same query multiple times to show the consistency and accuracy of Enhanced Monitoring metrics.

With the extra overhead by the allocation of buffers, it’s always tricky to estimate the exact amount of memory. It might require more memory per process than estimated. In such cases, it’s always recommended to rerun EXPLAIN (analyze,buffers) with the initially estimated value and see if external merge Disk disappears from the plan. If not, we should keep increasing and testing a value of work_mem until external merge Disk disappears from the plan.

What if you choose to disable parallel processing to limit CPU usage or due to already having CPU-intensive workloads? In this scenario, you most likely need to set the work_mem to [(plan rows) * (width)] + [additional overhead] and keep testing a value that makes external merge Disk disappear from the execution plan.

Query with INDEX

Now, let’s INDEX the sort key, ANALYZE the table, and see what happens:

Now we have the best possible execution plan for the given query, with execution time down from approximately 19 seconds of the previous execution to 6 seconds, and the cost to execute is down from 2321055.62 to 1593480.90, and with no cost to sort at all.

You must be wondering what happened to those memory sorts or disk spills and how they disappeared. Well, the magic has been applied by INDEX. This is because the B-tree index entries are sorted and indexed in ascending order by default.

Moreover, PostgreSQL is smart enough to also apply this logic for sorts and use the INDEX with a backward scan when using DESC order on the indexed sort key column. Therefore, the query no longer requires sorting at all. When a suitable INDEX is available to satisfy sorting, work_mem is no longer required.

Considerations

Keep in mind the following:

Don’t set work_mem identified of a single query at the instance or cluster level in the parameter group. The work_mem allocated is not only session specific, it’s based on how many sorts are required to complete the request submitted by that session. For example, a complex query could comprise multiple sorts in a single request and at times, based on the query planner’s visibility of statistics, parallel processing by using max_parallel_workers_per_gather could occur with each worker process sorting within its own work_mem space. Based on the volume of data and complexity of the query, it could require lots of memory to reduce the disk overhead and often, there can only be a few such queries with a requirement for large memory space. Setting a value at the system level that is only required for fewer queries can create memory bottleneck and cause out of memory situations.

The recommended value to be set at the instance level is subjective to workload pattern, available total memory on the RDS or Aurora instance (instance type), and acceptable performance of a query. The log_temp_files setting at the instance level is the good start for projecting an average value for work_mem at the instance level.

To estimate the value for work_mem at the instance level, as discussed in this post, consider enabling log_temp_files with an initial value, say, 64 MB at the instance level. Review the PostgreSQL’s log file to find how many temp files are generated in a day or during peak traffic, and pick a work_mem setting based on the log analysis to minimize disk spills for the rest of the queries or requests.

For the targeted queries, set the optimal value at the session level, then make sure to reset it (RESET work_mem;) back to the instance level setting at the end of the request so that the memory is released back to system and used for other activities.

Like we discussed in this post, apart from settings at the session and instance level, based on the use case and requirement, you can also adjust the work_mem parameter at an individual database level, user level, or single transaction level. See the following code examples:

The following is an example at database level:

The following is an example at user or role level:

The following is an example at transaction level:

Aurora PostgreSQL uses local storage, and Amazon RDS for PostgreSQL uses database storage for temporary files. For more local storage in Aurora, you need to scale up the instance type. For more storage in Amazon RDS, you need to increase the storage of the RDS instance. For more information, see How can I troubleshoot local storage issues in Aurora PostgreSQL instances and Working with storage for Amazon RDS DB instances.

Don’t use EXPLAIN (analyze) in a busy and critical production system as it executes the statement behind the scenes to provide more accurate planner information. Its impact is significant. Let’s take an example of a query sorting resulting in an out of storage error. If we run EXPLAIN (analyze), we introduce a system crash. It’s always the best practice to do the analysis on a non-production system with production-quality data and volume for an accurate estimate of work_mem.

The one advantage of using EXPLAIN (analyze) is that it doesn’t result in network I/O traffic because no output rows are delivered to the client or application.

If you have to run EXPLAIN (analyze) on a DML statement such as INSERT, UPDATE, or DELETE, make sure to apply a transaction block so that you can roll back without actually impacting the underlying data while fetching the execution plan. And remember, the DML statement still causes locks and can potentially block other interested transactions, so be aware of this fact before checking. For example, see the following code:

Aurora supports fast cloning using copy-on-write protocol. It only takes a few minutes for you to have a copy of a production database cluster ready for testing performance with changes you see fit and migrate it back to production after satisfactory results. See Cloning a volume for an Aurora DB cluster for more information.

Make sure statistics are up to date for the query planner. If required, run ANALYZE on tables that are part of the query.

Summary

Sorting can easily become a bottleneck, whether it’s due to lack of memory or inappropriately handled. Setting appropriate work_mem is crucial to the performance of your queries that heavily rely on sorting, whether a value is targeted for fewer queries in a session or for choosing a common value at the instance level. It’s also operationally essential to revisit queries periodically that have gone through optimization and adjust the work_mem setting if needed to meet performance needs.

You can Use Amazon RDS Enhanced Monitoring to help you monitor memory usage accurately during a sort in your tests so that you can try to stay as much as possible within the capacity of your RDS instance or scale up the instance type for more memory. For Aurora PostgreSQL, you can use fast cloning to address business-critical queries on an almost on-demand basis and migrate changes to your production system at light-speed.

In this post, I demonstrated a use case in which I identified and optimized performance of a query that relies heavily on sorting, and showed significant improvement in query performance by reducing the time and cost it takes to sort.

If you have any questions, please let us know in the comments section.

About the Author

Naga is a Cloud Support Engineer with Amazon Web Services and Subject Matter Expert for RDS PostgreSQL, he has over a decade of experience in working with relational databases. At AWS, He works with Service Teams, Technical Account Managers, Solutions Architects, and assists customers secure and optimize their Aurora and RDS PostgreSQL deployments.

Read MoreAWS Database Blog