AWS Lambda is a serverless compute offering that helps you design event-driven architectures. It provides direct integration with multiple AWS services, including Amazon Aurora MySQL-Compatible Edition.

In this post, we show you how to invoke Lambda functions from Amazon Relational Databases Service (Amazon RDS) for MySQL and Amazon RDS for MariaDB using Amazon CloudWatch and messages published in audit logs. The same architecture can also be used with Amazon Aurora MySQL-Compatible Edition.

Solution overview

This solution consists of publishing RDS for MySQL or MariaDB audit logs to a CloudWatch log group, and creating a CloudWatch subscription filter for Lambda to trigger a Lambda function.

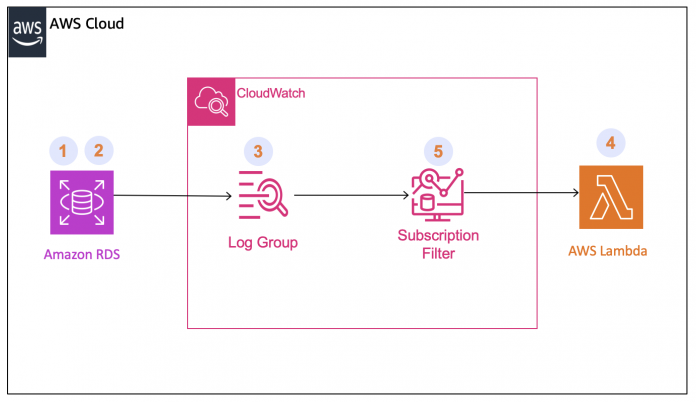

The following diagram illustrates the solution architecture and flow.

In this solution, audit logs generated by Amazon RDS for MySQL or Amazon RDS for MariaDB are published to a CloudWatch log group. The CloudWatch subscription filter filters the logs based on a user-defined pattern—when there is a pattern match, the subscription filter triggers the specified Lambda function and sends the log event to the Lambda function.

The logs received are base64 encoded and compressed with gzip format. We show you how to decode the log event to only get the desired payload for the Lambda function.

To deploy the solution, we complete the following steps (corresponding to the numbered components in the architecture diagram):

Create a table in Amazon RDS for MySQL or MariaDB to trigger the Lambda function.

Enable audit logs on the RDS for MySQL or MariaDB instance.

Publish the audit logs to a CloudWatch log group.

Create the Lambda function.

Create a CloudWatch subscription filter.

Prerequisites

To follow along with this post, you should have an RDS for MySQL or MariaDB database instance for which you wish to trigger the Lambda function. For this post, our DB instance is named Lambda-trigger-mariadb. For instructions to create an RDS instance, refer to Create a RDS Instance.

Create a table in Amazon RDS for MySQL or MariaDB to trigger the Lambda function

In this step, we create a table with the same name as the Lambda function we need to trigger, with a single column to insert the JSON payload for the Lambda function. Complete the following steps to create your table:

Connect to your RDS for MySQL or MariaDB Instance and select the database in which you wish to create the table.

Create a table named MyLambda:

Enable audit logs on the RDS for MySQL or MariaDB instance

You can capture DML queries running in the RDS instance by enabling audit logs. Unlike error logs, general logs, and slow query logs, there is no direct parameter in the parameter group to enable audit logs. You must use the MariaDB Audit Plugin using an option group to enable auditing in your RDS for MySQL or MariaDB instance. Complete the following steps:

On the Amazon RDS console, create an option group.

Add the MariaDB Audit Plugin.

Modify your RDS instance to use the option group you just created.

You can enable audits logs in Amazon Aurora MySQL from the DB cluster parameter group by setting the parameter server_audit_logging to 1. Refer to Configuring an audit log to capture database activities for Amazon RDS for MySQL and Amazon Aurora with MySQL compatibility for detailed steps.

After you have enabled audit logs on the RDS instance, the logs are listed on the Amazon RDS console in the Logs section on the instance details page, as shown in the following screenshot.

Publish the audit logs to the CloudWatch log group

Now we need to publish the logs to CloudWatch. Complete the following steps:

On the Amazon RDS console, select your RDS instance and choose Modify.

In the Log exports section, select Audit log.

Choose Continue.

On the summary page, verify that Enable publish to CloudWatch logs has Audit log listed under New value.

Choose Apply immediately.

Choose Modify DB instance.

After you publish the audit logs to CloudWatch, the log group is listed on the CloudWatch console with the naming convention aws/rds/instance/<database-name>/audit.

Create the Lambda function

To create your Lambda function, complete the following steps:

On the Lambda console, choose Functions in the navigation pane.

Choose Create function.

Choose Author from scratch.

For Function name, enter a name (for this post, we use MyLambda).

For Runtime, choose Python 3.12.

For Architecture, choose x86_64.

Choose Create function.

In the Code section, enter the following code to get the JSON data inserted in the table as your Lambda function’s payload. You can modify the code as needed to perform other custom actions.

Note that the invocation payload size for an asynchronous Lambda function is 256 KB. For more details, refer to Lambda quotas.

Create a CloudWatch subscription filter

A CloudWatch subscription filter lets you filter log data coming from a CloudWatch log group based on the terms or pattern you design and send it to Amazon Kinesis Data Streams, Amazon Kinesis Data Firehose, or Lambda. For this post, we create a filter on the audit logs for any INSERT operation that happens in the table named MyLambda. You can choose any tables or filter pattern published in the audit logs as needed for your use case.

Complete the following steps to create a CloudWatch subscription filter:

On the CloudWatch console, under Logs in the navigation pane, choose Log groups.

Choose the audit logs log group for your instance (aws/rds/instance/<database-name>/audit).

On the Subscription filters tab, on the Create menu, choose Create Lambda subscription filter.

For Lambda function, choose the Lambda function you created.

For Log format, choose Other.

For Subscription filter pattern, enter insert into MyLambda.

The Subscription filter pattern filters the log entries for the exact string that you provide to trigger the Lambda function.

Note Subscription filter is case-sensitive. It is not possible to add more than one pattern for a given filter; however, you can add more than one subscription filter in a log group.

For Subscription filter name, enter a name of your choice – for this post, we use MyInsertFilter

For Select log data to test, choose the name of your DB instance (for this post, we use Lambda-trigger-mariadb).

Choose Test pattern to confirm that the filter pattern matches with entries in the log group.

Note that the test pattern only shows the 50 most recent entries in the logs. Therefore, it’s possible that the complete logs have a matching pattern but the test returns zero matches.

Choose Start Streaming.

The filter is now listed on the Subscription filters tab in the log group page on the CloudWatch console.

Verify that the solution is working

To verify the solution is working, connect to the RDS instance and run the query for which you created the metric filter.

In the following example, we connect to our MariaDB instance using the MySQL CLI client and run an insert query on the MyLambda table:

We can see the Lambda function is triggered and a message is printed in the logs in the CloudWatch log group. The log group is listed on the CloudWatch console with the naming convention aws/lambda/<lambda-function-name>.

Tips and troubleshooting

In place of audit logs, you can use any of the other MySQL or MariaDB logs (such as general logs, slow query logs, or error logs) to trigger the Lambda function using the same architecture illustrated in this post.

Note than when using general or slow query logs, you have to set the log_output parameter as FILE from the RDS parameter group for both Amazon RDS for MySQL and Amazon RDS for MariaDB to push the logs to CloudWatch.

The example in this post shows how to trigger a Lambda function and get the payload. You can also use the Lambda function as a router function and trigger other Lambda functions from it-simply pass the name of the function to be triggered as the payload in JSON.

If the Lambda function does not trigger, check the following:

The logs are being generated and pushed to the CloudWatch log group

The subscription filter is created as expected with the correct Lambda function

Clean up

When you’re done with the solution, delete the resources you created to avoid ongoing charges.

Disable the audits logs.

Modify the RDS instance to stop publishing the logs to CloudWatch.

Delete the CloudWatch log group.

Delete the CloudWatch subscription filter.

Delete the Lambda function.

Conclusion

In this post, we showed how to deploy a solution to trigger a Lambda function from your RDS for MySQL or MariaDB instance.

Try it out today and leave a comment if you have any questions or suggestions.

About the Author

Asad Aazam is a Solutions Architect at AWS with expertise in AWS Security services and AWS Database technologies such as Amazon Aurora, Amazon RDS, and Amazon DynamoDB. He helps homogeneous and heterogeneous database migrations and optimizations in the AWS Cloud. He currently holds 11 of 12 AWS Certificates. When not working, he likes to go on bike rides, travel, and enjoy the beauty of nature.

Read MoreAWS Database Blog