A common pattern for solving application networking challenges is to use a service mesh. Teams that adopt such architectures still face challenges related to network flow, security, and observability. Traffic Director is a managed Google service that helps solve these problems.

In this post, we share a sample architecture that uses Traffic Director to configure gateway proxies running on a GKE cluster, with TLS routing rules that route traffic from clients outside the cluster to workloads deployed on it.

We also demonstrate how north-south traffic can enter the service mesh through an Envoy proxy acting as an ingress gateway, how to use the service routing API to route that traffic, and some useful troubleshooting techniques.

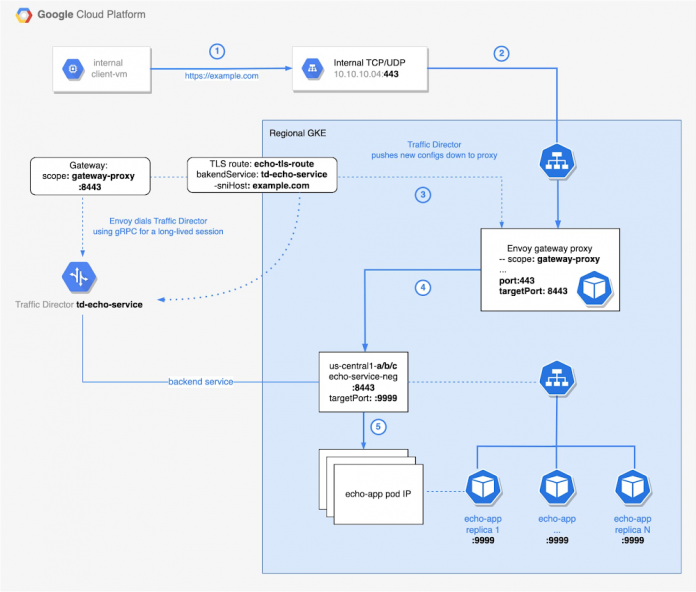

The diagram above illustrates the key components and traffic flows described in this post.

1. An internal client will make a request to a sample application (e.g. https://www.example.com) and the request will be forwarded to an internal load balancer.

2. All requests to the internal load balancer are forwarded to pods containing Envoy proxies, running as a deployment of gateway proxies on a GKE cluster.

3. These Envoy proxies receive their configuration from Traffic Director, which acts as their control plane.

4. Based on the routing configuration received from Traffic Director, the proxies route the incoming requests to the appropriate backend service for the target application.

5. The request will be handled by any of the pods associated with the backend service.

Backend service

In this section, we show how to deploy our target application and create the NEG, echo-service-neg, that is mapped to that application as depicted in the diagram above. First, let's deploy the echo service. Its Service manifest carries a NEG annotation.

This annotation instructs Google Cloud to create a network endpoint group (NEG) in each zone of the region. Later, these NEGs will be configured as backends for the Traffic Director backend service.
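The manifest is not reproduced here. As a minimal sketch, a Service carrying a NEG annotation might look like the following. The Service name `echo-service`, the `app: echo` selector, and the 443 to 8443 port mapping are assumptions made for illustration; only the NEG name `echo-service-neg` comes from the text above.

```shell
# Write a hypothetical Service manifest for the echo application.
# The cloud.google.com/neg annotation asks GKE to create standalone NEGs
# named echo-service-neg for the exposed Service port.
cat > echo-service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: echo-service            # assumed name
  annotations:
    cloud.google.com/neg: '{"exposed_ports": {"443": {"name": "echo-service-neg"}}}'
spec:
  selector:
    app: echo                   # assumed pod label
  ports:
  - name: https
    protocol: TCP
    port: 443                   # assumed Service port; must match the annotation key
    targetPort: 8443            # assumed HTTPS port of the echo container
EOF

# Apply it once the echo Deployment exists:
#   kubectl apply -f echo-service.yaml
```

The key under `exposed_ports` has to be a port that the Service actually exposes, otherwise no NEG is created for it.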

Using routing information, i.e. scope_name and port 8443, the Envoy gateway proxy routes requests to the correct NEG (step 5 on the architecture diagram). We use the mendhak/http-https-echo:23 image as our backend. You can find a license for it here.

Ingress Gateway

Traffic enters the service mesh through the ingress gateway (step 2). The Envoy proxy acts as this gateway and uses the SNI information in the request to perform routing. We will apply the Traffic Director configuration before deploying the ingress gateway pods. Although this order is not a hard requirement, it provides a better user experience, i.e. you will see fewer related errors in the Envoy logs.

Below are sample gcloud commands to deploy the health check and backend for Traffic Director. Take note of the name: td-tls-echo-service.
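The original commands are not reproduced here, so the following is a sketch under stated assumptions rather than the exact commands used. The health check name `td-https-health-check`, the `--use-serving-port` flag, and the TCP protocol choice are assumptions; the backend service name `td-tls-echo-service` comes from the text. The commands are only printed by default so you can review them first.

```shell
# Dry-run helper: prints each command; set APPLY=1 to actually execute it.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi; }

# HTTPS health check for the echo endpoints (name is an assumption).
run gcloud compute health-checks create https td-https-health-check \
  --use-serving-port

# Global backend service that Traffic Director manages.
# INTERNAL_SELF_MANAGED is the load-balancing scheme used by Traffic Director.
run gcloud compute backend-services create td-tls-echo-service \
  --global \
  --load-balancing-scheme=INTERNAL_SELF_MANAGED \
  --protocol=TCP \
  --health-checks=td-https-health-check
```

Run the block with `APPLY=1` once the printed commands match your project.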

Up to this point, we have created NEGs for the target echo application and the Traffic Director backend above. Let’s now add those NEGs as backends for the Backend Service.
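As an illustration, attaching one NEG per zone could look like the sketch below. The zone list, the balancing mode, and the connection limit are assumptions and need to be adapted to your region and to the protocol of your backend service.

```shell
# Dry-run helper: prints each command; set APPLY=1 to actually execute it.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi; }

# Hypothetical zones; use the zones where GKE created echo-service-neg.
ZONES="${ZONES:-us-central1-a us-central1-b us-central1-c}"

# Add the NEG from every zone as a backend of the Traffic Director backend service.
for zone in ${ZONES}; do
  run gcloud compute backend-services add-backend td-tls-echo-service \
    --global \
    --network-endpoint-group=echo-service-neg \
    --network-endpoint-group-zone="${zone}" \
    --balancing-mode=CONNECTION \
    --max-connections-per-endpoint=100
done
```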

Note that the deployment for the ingress gateway below uses an initContainer running a bootstrap image. Passing certain arguments allows this image to generate the Envoy bootstrap configuration. An alternative is to write the Envoy bootstrap configuration manually, store it in a ConfigMap, and mount it in the Envoy container. Take note of --scope_name=gateway-proxy. As we will see later, scope_name associates our gateway with the service routing Gateway resource.

The following is the configuration for the service routing Gateway resource; notice the scope: gateway-proxy. Its value matches the one we specified in the YAML file above. Additionally, we set our target port to 8443. Essentially, we are handling the north-south traffic as shown here. We will talk more about routing in the next section.
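A minimal sketch of such a Gateway definition, written straight to gateway8443.yaml, is shown here. The resource name is taken from the file name used in this post, and `type: OPEN_MESH` is our assumption for a Traffic Director managed gateway; verify both against your setup.

```shell
# Write the Gateway definition for the service routing API.
# scope must equal the --scope_name passed to the Envoy bootstrap generator.
cat > gateway8443.yaml <<'EOF'
name: gateway8443
scope: gateway-proxy
ports:
- 8443
type: OPEN_MESH
EOF
```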

Traffic Director, as the control plane, generates the corresponding configuration and pushes it to the Envoy proxies (step 3), which form our data plane.

Save the definition above in gateway8443.yaml and import it using the following command.
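The import step could look like this, assuming gateway8443.yaml is in the current directory and the resource is named gateway8443. The command is printed by default rather than executed.

```shell
# Dry-run helper: prints each command; set APPLY=1 to actually execute it.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi; }

# Import the Gateway resource into the service routing API.
run gcloud network-services gateways import gateway8443 \
  --source=gateway8443.yaml \
  --location=global
```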

In Cloud Operations Logging, you can run the query below to confirm the successful connection.

For debugging, it is useful to access Envoy's local admin interface by forwarding its port to your local machine. Run the following from the command line: kubectl port-forward [YOUR POD] 15000. You should see output similar to the following:

Open a browser window and go to http://localhost:15000. You should see the admin interface page, as shown below.

Click the listeners link to see the names of the listeners received from Traffic Director and the ports they listen on. The config_dump link is useful for inspecting the configuration that Traffic Director pushed to this proxy. The stats link outputs endpoint statistics that can be useful for debugging. Explore this public documentation to learn more about the Envoy administration interface.
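If you prefer the command line to a browser, the same pages can be fetched with curl while the port-forward is running. This is a small sketch; the `ADMIN` variable is a hypothetical convenience, and the commands are printed unless `APPLY=1` is set.

```shell
# Dry-run helper: prints each command; set APPLY=1 to actually execute it.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi; }

# Address of the forwarded Envoy admin interface.
ADMIN="${ADMIN:-http://localhost:15000}"

run curl -s "${ADMIN}/listeners"     # listener names and the ports they bind to
run curl -s "${ADMIN}/config_dump"   # full configuration received from the control plane
run curl -s "${ADMIN}/stats"         # counters and gauges, one per line
```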

Routing

Let’s configure the routing. If you are not familiar with the Traffic Director service routing API, we recommend you read the Traffic Director service routing APIs overview to familiarize yourself with this API before you continue reading.

To use the service routing API in this tutorial, we need two resources: the Gateway resource and the TLSRoute resource. We defined the Gateway resource in the previous section. Below is the definition for the TLSRoute resource.
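A sketch of a TLSRoute definition that ties these pieces together follows. The route name `echo-tls-route` is inferred from the file name used in this post, and `PROJECT_NUMBER` defaults to a hypothetical placeholder that you must replace with your own project number.

```shell
# Hypothetical placeholder; replace with your own project number.
PROJECT_NUMBER="${PROJECT_NUMBER:-123456789012}"

# Write the TLSRoute: match on SNI and ALPN, then forward to the backend service.
# The heredoc delimiter is unquoted so ${PROJECT_NUMBER} is expanded.
cat > echo-tls-route.yaml <<EOF
name: echo-tls-route
gateways:
- projects/${PROJECT_NUMBER}/locations/global/gateways/gateway8443
rules:
- matches:
  - sniHost:
    - example.com
    alpn:
    - h2
  action:
    destinations:
    - serviceName: projects/${PROJECT_NUMBER}/locations/global/backendServices/td-tls-echo-service
EOF
```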

Notice the gateway8443 in the gateways section. It matches the name of the gateway resource we defined in the Ingress Gateway section. Also notice the value of serviceName. This is the name of our Traffic Director backend service that was also created earlier.

Note that in the TLSRoute, host matching is based on the SNI value that the client sends in the TLS handshake, not on an HTTP header. The sniHost value matches the domain, i.e. example.com. Additionally, the value h2 inside the alpn section restricts matching to HTTP/2 requests. Lastly, the route sends all such requests to the backend specified by the service name, which is the Traffic Director backend service we created (step 4 on the architecture diagram).

Save the definition above in the echo-tls-route.yaml file and import it using the following command.
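The import could be sketched as follows, assuming the route is named echo-tls-route and the file is in the current directory. The command is printed by default rather than executed.

```shell
# Dry-run helper: prints each command; set APPLY=1 to actually execute it.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi; }

# Import the TLSRoute resource into the service routing API.
run gcloud network-services tls-routes import echo-tls-route \
  --source=echo-tls-route.yaml \
  --location=global
```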

Internal Load Balancer

The client connects to the internal load balancer (step 1 on the diagram), which is a regional Internal TCP/UDP Load Balancer. The load balancer sends traffic to the Envoy proxy that acts as an ingress gateway (step 2). Because it is a passthrough load balancer, TLS can be terminated in your backends.

The load balancer listens for incoming traffic on port 443 and routes requests to the GKE service. Notice the service definition below and pay attention to the ports config block. The service then directs requests from port 443 to port 8443, a port exposed by our Envoy gateway deployment.
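For illustration, a Service of this kind might be written as below. The Service name `td-gateway-ilb` and the `app: td-envoy-gateway` selector are hypothetical and need to match the labels of your gateway deployment; the port mapping follows the text.

```shell
# Write a hypothetical internal LoadBalancer Service in front of the Envoy gateway pods.
# The networking.gke.io/load-balancer-type annotation makes GKE provision an
# internal passthrough load balancer instead of an external one.
cat > gateway-ilb-service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: td-gateway-ilb          # assumed name
  annotations:
    networking.gke.io/load-balancer-type: "Internal"
spec:
  type: LoadBalancer
  selector:
    app: td-envoy-gateway       # assumed label of the gateway pods
  ports:
  - name: https
    protocol: TCP
    port: 443                   # port the load balancer listens on
    targetPort: 8443            # port exposed by the Envoy gateway deployment
EOF

# Apply it with:
#   kubectl apply -f gateway-ilb-service.yaml
```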

We have created the load balancer on Google Kubernetes Engine (GKE). See the tutorial for more details.

Firewall

To allow the network flow shown in the architecture diagram, you will need to configure the following firewall rules. It is important to allow ingress from GKE nodes that terminate SSL workloads to the client-vm. Google's Network Connectivity Center helped us troubleshoot the connection and configure the corresponding firewall rules.

In addition to the two firewall rules below that we created explicitly, note that an automatically created GKE firewall rule is what allows the Envoy pods to communicate with the application pods. If you would like to reproduce this deployment with pods in different clusters, you will need to ensure that a firewall rule exists to allow pod-to-pod traffic across those clusters.

Validate the deployment

In this section, we verify that we can communicate with our backend service via Traffic Director. We create another VM (client-vm, as depicted in the diagram above) in a network that has connectivity to the internal load balancer created in the Internal Load Balancer section. Next, we run a simple curl command against our URL to verify connectivity:
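The exact command is not reproduced here. A sketch of such a check is shown below, where the `ILB_IP` default is a hypothetical placeholder and the host name must match a sniHost entry of the TLSRoute (example.com in the text above).

```shell
# Dry-run helper: prints each command; set APPLY=1 to actually execute it.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi; }

# Hypothetical placeholder. The address could be looked up with, for example:
#   gcloud compute forwarding-rules list --format="value(IPAddress)"
ILB_IP="${ILB_IP:-10.128.0.50}"
HOST="${HOST:-example.com}"

# --resolve pins HOST:443 to the load balancer address, so the TLS handshake
# still carries HOST as SNI. -k skips certificate verification and is only
# acceptable for a test against a self-signed certificate.
run curl -sk --resolve "${HOST}:443:${ILB_IP}" "https://${HOST}/"
```

Using `--resolve` instead of calling the IP address directly matters here, because the gateway routes on the SNI value and a request without it would not match the TLSRoute.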

Note that if you have more than one load balancer, you can apply a filter to the command that grabs the IP address and stores it in the variable above. See the "Filtering and formatting with gcloud" blog for details.

The important takeaways from this screenshot are that Envoy routed our request and that one of the pods behind the echo service handled it. If you run kubectl get pods -o wide, you can verify that the IP above matches that of the Envoy gateway pod and that the hostname of your echo pod matches the one shown in the screenshot.

Next Steps

Please refer to the Traffic Director Service Routing setup guides to learn how to configure other types of routes apart from TLS routes.
