Cloud computing has changed almost every business and industry by changing how IT is delivered and consumed. With the Cloud, businesses no longer need to plan for and procure servers and other IT infrastructure weeks or months in advance. This increases flexibility, reliability, performance, and efficiency, and helps lower IT costs. The Cloud changes the IT consumption model in three major ways:

Decentralized control enables engineers to increase infrastructure spend without oversight from finance and procurement.

Variable operating expenses replace pre-planned, fixed data center capital expenditures.

Instant access to resources enables innovation but often results in overprovisioning and resource sprawl.

It is important that organizations update their on-premises cost optimization tools and processes to reflect this new consumption model. Customers that don’t update their tools and processes struggle to track and manage their cloud costs for these reasons. The practice of managing costs under this new model is often referred to as Cloud Financial Operations, or Cloud FinOps.

Cloud FinOps is a management practice that promotes shared responsibility for an organization’s cloud computing infrastructure and costs across technology, finance, and business teams. AWS provides several tools to support your Cloud FinOps practice. AWS Cost Explorer is an out-of-the-box solution for analyzing your AWS costs. Amazon Forecast is a fully managed time-series forecasting service based on machine learning (ML) and built for business metrics analysis. Cloud Intelligence Dashboards are a series of Amazon QuickSight dashboards, provided in the AWS Well-Architected Labs, that support your Cloud FinOps journey. A number of AWS Partner Network (APN) partners also provide Cloud FinOps tools.

Tracking costs in the cloud today usually relies on three main techniques:

Tagging – This involves developing and maintaining naming and tagging standards along with tooling to apply them to all cloud resources. These tags often reflect the business unit, department, billing code, geography, environment, project, and workload or application categorization. FinOps software then rolls up cost by these tags into reporting lines.

Account organization – This requires you to create a series of accounts aligned by business unit, project, or environment and apply policies across them. Costs often roll up to a parent (billing) account and are allocated in the budget to the child account that generated them.

The Cloud Center of Excellence team – This is a centralized architecture and operations team that tests the specific services the organization wants to use and develops cost management best practices for them. Those services are often disabled within the organization until this process is complete.

In this post, we discuss how Amazon Neptune, a graph database, can advance the state of the art in Cloud FinOps by enhancing four major areas:

Automation of tagging

Modeling and simulation of cloud operations

Adding context to existing alerting and reporting to enhance visibility, minimize cognitive overload, and improve enterprise-wide processes

Enabling emerging AI and ML techniques, such as graph neural networks (GNNs), to make Cloud FinOps analyses more automated and effective

Improving manual tagging strategies

Cloud FinOps today relies on organizations creating a strong tagging strategy, actively managing and revising that strategy as business needs evolve, and developing processes to enforce it. The onus of applying the strategy falls on development and operations teams. As the strategy evolves, it is difficult, if not impossible, to retroactively apply it to existing resources, which leaves gaps in reporting. Like any manual process, tagging is error prone: enforcement typically confirms that a tag exists and contains a value, but not that the value is correct. Representing your cloud resources as a graph offers additional opportunities to automate processes and optimize tagging strategies.

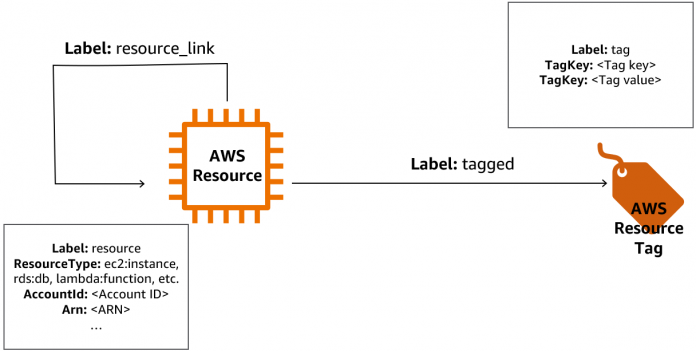

Let’s look at an example that uses the following graph data model to represent our cloud environment. Each AWS resource is represented as its own node with the label resource. Each unique tag key-value pair is also represented as a node, and each AWS resource node is connected to the tag nodes it is associated with.

Figure 1: The graph data model being used to represent AWS resources and tags within a cloud environment.

How do you extract this graph structure from your cloud environment? You can use cloud APIs to automatically describe resources and retrieve their tags, then transform the output into nodes and edges that model their connections. For an example of how to use cloud APIs to build a graph of your cloud resources, refer to Graph your AWS resources with Amazon Neptune.
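As a rough sketch of that transformation, the following Python builds the data model described above in memory rather than in Neptune. It assumes input shaped like the `ResourceTagMappingList` returned by the Resource Groups Tagging API `GetResources` operation (fetched here through the boto3 `get_resources` paginator). Note that `GetResources` returns only resources that are or were tagged, so a fuller pipeline would likely add service-specific describe calls to capture never-tagged resources and resource-to-resource relationships, and would write the nodes and edges to Neptune instead of Python sets.

```python
from collections import defaultdict


def fetch_tag_mappings():
    """Page through the Resource Groups Tagging API (needs boto3 and AWS credentials)."""
    import boto3  # imported lazily so build_tag_graph works without boto3 installed

    client = boto3.client("resourcegroupstaggingapi")
    for page in client.get_paginator("get_resources").paginate():
        yield from page["ResourceTagMappingList"]


def build_tag_graph(resource_tag_mappings):
    """Turn ResourceTagMappingList-style records into resource nodes, tag nodes, and edges.

    In-memory stand-in for the graph (an assumption for illustration):
      resources    -> one node per AWS resource ARN (label: resource)
      tag_nodes    -> one node per unique (key, value) pair, shared across resources
      tagged_edges -> resource ARN -> set of tag nodes it is connected to
    """
    resources = set()
    tag_nodes = set()
    tagged_edges = defaultdict(set)
    for mapping in resource_tag_mappings:
        arn = mapping["ResourceARN"]
        resources.add(arn)
        for tag in mapping.get("Tags", []):
            tag_node = (tag["Key"], tag["Value"])
            tag_nodes.add(tag_node)
            tagged_edges[arn].add(tag_node)
    return resources, tag_nodes, dict(tagged_edges)
```

Because tag nodes are keyed by the key-value pair, two resources tagged Environment=Staging share a single tag node, which is what later queries rely on to relate resources through their tags.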

We now walk through a few ways you can use graph queries to improve on manual tagging strategies:

Finding and prioritizing untagged resources

Validating tag correctness of tagged resources

Finding correctly tagged resources that are unused

Enabling better cost modeling and optimization

Let’s look more in detail at each of these approaches.

Finding and prioritizing untagged resources

After you build your graph, you can run graph queries to extract further insights about your tagging. For example, you can use the following Gremlin query to find resources that don’t have tags:
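The logic of such a query is small. In Gremlin it is commonly expressed with a `not()` filter, for example `g.V().hasLabel('resource').not(__.out('tagged'))`, assuming an edge label of `tagged`. The following minimal Python sketch shows the same check against a hypothetical in-memory stand-in for the graph, where each resource ID maps to the set of tag nodes it is connected to.

```python
def find_untagged(resources, tagged_edges):
    """Return resources that have no outgoing edge to any tag node.

    resources: iterable of resource node IDs (hypothetical IDs in this sketch).
    tagged_edges: hypothetical mapping of resource ID -> set of (key, value) tag nodes;
                  a missing entry or an empty set both mean "untagged".
    """
    return sorted(r for r in resources if not tagged_edges.get(r))
```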

But what if hundreds of resources aren’t tagged? Which ones should you tag first? In this situation, you can infer that untagged resources connected to tagged resources belong to the same larger architecture, even though they are not directly tagged themselves.

The following figure provides an example. Even though the Amazon DynamoDB resources and one of the AWS Lambda resources are not directly tagged, they are connected with other resources that are tagged. Therefore, we can infer that DynamoDB and the untagged Lambda resources should inherit the tags of the rest of the architecture.

Figure 2: An example cloud environment with multiple dependent resources and their respective tags.

In this case, you can prioritize your tagging efforts by first tagging resources that are not connected to any directly tagged resource, because their tags can’t be inferred from their neighbors. You can find these resources with the following graph query:
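A minimal Python sketch of this prioritization follows, again using a hypothetical in-memory graph: `tagged_edges` maps resource IDs to tag nodes, and `resource_edges` is an assumed list of resource-to-resource dependency edges whose direction is ignored. It only looks one hop away; a multi-hop variant could group resources by connected component instead.

```python
from collections import defaultdict


def prioritize_untagged(resources, tagged_edges, resource_edges):
    """Split untagged resources into (isolated, inferable).

    isolated:  untagged and not adjacent to any directly tagged resource, so their
               tags can't be inferred from neighbors; tag these first.
    inferable: untagged but adjacent to at least one tagged resource.
    resource_edges: hypothetical (source, target) dependency pairs; direction ignored.
    """
    neighbors = defaultdict(set)
    for a, b in resource_edges:
        neighbors[a].add(b)
        neighbors[b].add(a)
    isolated, inferable = [], []
    for r in sorted(r for r in resources if not tagged_edges.get(r)):
        if any(tagged_edges.get(n) for n in neighbors[r]):
            inferable.append(r)
        else:
            isolated.append(r)
    return isolated, inferable
```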

Validating tag correctness of tagged resources

Let’s look at another example. The following figure shows a sample application in which an Amazon API Gateway API is backed by containers running in Amazon Elastic Container Service (Amazon ECS), with DynamoDB as the data store. An Application Load Balancer manages traffic between API Gateway and Amazon ECS, and an Amazon Virtual Private Cloud (Amazon VPC) endpoint allows Amazon ECS to access DynamoDB. First, look at the tag values on the resources. The Amazon ECS Environment tag is Testing, whereas the DynamoDB and API Gateway Environment tags are Staging. You would want to fix this to ensure that cost allocations by category are correct. Second, the Cost Center tag on API Gateway has two digits transposed: it reads 123465 instead of the 123456 used on the other resources, so costs may be charged to the wrong budget. Finally, suppose that at the start of the project you couldn’t tag Application Load Balancers or Amazon VPC endpoints. Now that tagging is supported for them, you should tag them too.

Figure 3: Another example cloud environment with multiple dependent resources and their respective tags.

If you model the cloud environment as a graph, you can easily see that the tags these resources should carry are Environment=Staging and Cost Center=123456, without having to consult the original project documentation.

You may even want to automate this type of search by using graph analytics such as neighborhood detection, combined with an analysis of tagging consistency within each neighborhood, to flag incorrectly tagged resources and suggest the proper values for untagged resources without manual inspection.

For example, you could use a graph algorithm such as Weakly Connected Components (WCC) to find the clusters of resources that belong to a single application architecture. WCC outputs components in which every node has a path to every other node in the same component, regardless of edge direction. Run WCC over the resource-to-resource edges only; if the tag nodes were included, a shared tag such as Environment=Staging would merge unrelated applications into a single component. You can then validate the tags used within a given application by checking the tag nodes connected to the AWS resource nodes in the same component.
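The following Python sketch combines the two steps under simple assumptions: a union-find implementation of WCC over hypothetical resource-to-resource edges, followed by a per-component majority vote for each tag key. The majority vote is our illustrative choice of consistency check, not a Neptune feature; ties are skipped and left for human review. The sample data mirrors the Figure 3 example as described in the text, with made-up node IDs.

```python
from collections import Counter, defaultdict


def weakly_connected_components(nodes, edges):
    """Union-find WCC over (source, target) pairs; edge direction is ignored."""
    parent = {n: n for n in nodes}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps the trees shallow
            x = parent[x]
        return x

    for a, b in edges:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_a] = root_b
    components = defaultdict(set)
    for n in nodes:
        components[find(n)].add(n)
    return list(components.values())


def validate_tags(resources, tags, resource_edges):
    """Check tag consistency per component with a simple majority vote.

    tags: hypothetical mapping of resource -> {tag_key: tag_value}.
    resource_edges: resource-to-resource edges only (no tag nodes).
    Returns (mismatches, suggestions), each a sorted list of
    (resource, key, current_value_or_None, expected_value).
    """
    mismatches, suggestions = [], []
    for component in weakly_connected_components(resources, resource_edges):
        keys = {key for r in component for key in tags.get(r, {})}
        for key in keys:
            counts = Counter(tags[r][key] for r in component if key in tags.get(r, {}))
            ranked = counts.most_common()
            if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
                continue  # tie: no clear majority, so leave it for a human to decide
            expected = ranked[0][0]
            for r in component:
                current = tags.get(r, {}).get(key)
                if current is None:
                    suggestions.append((r, key, None, expected))
                elif current != expected:
                    mismatches.append((r, key, current, expected))
    return sorted(mismatches), sorted(suggestions)
```

With Figure 3-style data, the vote recovers Environment=Staging and Cost Center=123456, flags the Amazon ECS Environment tag and the transposed API Gateway Cost Center, and suggests both values for the Application Load Balancer and VPC endpoint. In practice you would likely run the component detection inside the database rather than pulling the graph into Python.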

Finding correctly tagged resources that are unused

With these graph techniques, you can also find orphaned or disconnected resources, such as a database or detached storage volume. Because such resources may be correctly tagged, existing tools don’t flag them as unnecessary cost, but graph analysis can show that they are not connected to the application, providing opportunities for deallocation. The following figure shows the same application as before. When we modernized the application to use DynamoDB instead of Amazon Relational Database Service (Amazon RDS), we forgot to take the database offline after we confirmed that the migration was stable. We never detected that this resource shouldn’t be running because its tags were set correctly, but our graph shows that it is not connected to any other resource.

Figure 4: Another example cloud environment with multiple dependent resources and their respective tags.

You could use the following graph query to detect this:
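As a hedged sketch of the detection logic, the following Python treats any resource that appears in no resource-to-resource edge as orphaned, deliberately ignoring its tags. The node IDs and edges are hypothetical and follow the Figure 4 scenario described above. Some resources are legitimately standalone, so the output is a list of candidates to review, not resources to delete automatically.

```python
def find_orphans(resources, resource_edges):
    """Return resources with no resource-to-resource edge in either direction.

    Tags are intentionally not consulted: a correctly tagged but disconnected
    resource (like the leftover RDS instance) should still be reported.
    """
    connected = set()
    for a, b in resource_edges:
        connected.update((a, b))
    return sorted(set(resources) - connected)
```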

However, even with these strategies, two other major concerns remain. First, not every cloud resource supports tagging (for example, Amazon CloudWatch alarms). Second, not every resource is allocated exclusively to a single project (for example, AWS Config). Many tagging strategies support only a single value per tag, and even those that support multiple values often assume costs are distributed equally across them. Without graphs, the costs of untaggable resources go unallocated, and shared resources require extremely complex tagging schemes to track costs effectively. To address the first problem, you can create tagged edges directly between a resource that doesn’t support tagging and the tag node it should be connected to. To address the second, you can store billing weights as properties on the tagged edges.
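The following Python sketch illustrates both ideas under stated assumptions. Each hypothetical edge is a `(resource, (tag_key, tag_value), weight)` triple, so a resource that can’t be tagged simply gets an edge drawn to the right tag node, and a shared resource gets several weighted edges. Weights are normalized per resource, and resources without an edge for the requested key are reported under `None` as unallocated. The resource names, costs, and weights are made up for illustration.

```python
from collections import defaultdict


def allocate_costs(costs, tag_edges, key):
    """Allocate each resource's cost across the values of one tag key.

    costs: resource -> cost for the period (hypothetical figures).
    tag_edges: (resource, (tag_key, tag_value), weight) triples; weight stands in
               for a "billing weight" edge property.
    Returns {tag_value: allocated_cost}, with unallocated cost under None.
    """
    weights = defaultdict(dict)
    for resource, (tag_key, tag_value), weight in tag_edges:
        if tag_key == key:
            weights[resource][tag_value] = weights[resource].get(tag_value, 0) + weight
    allocation = defaultdict(float)
    for resource, cost in costs.items():
        shares = weights.get(resource)
        if not shares:
            allocation[None] += cost  # no edge for this key: cost stays unallocated
            continue
        total = sum(shares.values())  # normalize so each resource's shares sum to 1
        for value, weight in shares.items():
            allocation[value] += cost * weight / total
    return dict(allocation)
```

For example, an AWS Config recorder split 75/25 between two projects and an untaggable CloudWatch alarm linked directly to one project both end up allocated, while a resource with no edge remains visible as unallocated cost.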

In the next section, we explore how we can further utilize the connections of the graph and combine them with other cloud cost datasets to bring additional cost saving opportunities.

Enabling better cost modeling and optimization

A digital twin is a virtual model of a physical object that uses real-time data from sensors on the object to simulate its behavior and monitor its operations. Most digital twin applications today replicate a place or thing. For example, sensors on a factory floor can track ambient temperature to determine its effect on employee performance or inventory durability, so that conditions can be optimized without risking employee health or actual inventory. Sensors can also monitor vibrations on an airplane to detect part failures before customers are placed at risk. But what if you created a digital twin of your cloud application itself to better understand its cost model? By gathering and modeling the interconnections between resources, and combining them with usage data such as CloudWatch metrics, Amazon VPC Flow Logs, and AWS Cost and Usage Reports (AWS CUR), you can build a digital twin of your cloud applications. This enables advanced capabilities such as simulating changes to provisioned resources, like compute instance size, to observe the cost impact on your databases and API traffic. You can observe how the costs of related resources depend on each other (for example, how much adding a new container affects your DynamoDB cost) and how they vary over time as traffic evolves, and use the twin to develop optimal automatic scaling strategies.

For example, let’s represent our cloud application architecture from earlier as a graph, according to the data model we defined previously. Suppose our API Gateway API is getting much more traffic than we anticipated, and to address this, we want to increase the concurrency of the Lambda function that backs the API. A graph representation lets us see the downstream impact of this change. Although the change modifies a single component of the architecture, any connected services may also be affected, depending on how they are integrated. In our example, increasing the concurrency of the Lambda function also affects the Amazon Simple Queue Service (Amazon SQS) queue it posts to (a higher throughput of messages sent to the queue), which could mean more invocations of the polling Lambda functions, which in turn affects the resources those functions interact with.

The following figure illustrates how changing the configuration of a single resource can have cost impact on downstream services.

Figure 5: An example graph model showing the downstream impact if a central Lambda function were to change.
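A minimal sketch of finding that downstream impact is a breadth-first search along directed edges from the changed resource. The Python below assumes hypothetical node IDs and edges that follow the Figure 5 flow described in the text (API Gateway to the API Lambda function, to the SQS queue, to the polling Lambda function, to DynamoDB). It returns each affected resource with its hop distance; a digital twin would then attach usage and cost data, such as CloudWatch metrics or AWS CUR line items, to those nodes to estimate the cost impact, which this sketch does not attempt.

```python
from collections import deque


def downstream_impact(start, edges):
    """Breadth-first search along directed (source, target) edges.

    Returns {resource: hops_from_start} for every resource reachable
    downstream of `start`; `start` itself is excluded. Cycles are safe
    because each node is visited at most once.
    """
    adjacency = {}
    for src, dst in edges:
        adjacency.setdefault(src, []).append(dst)
    hops = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in adjacency.get(node, []):
            if nxt not in hops:
                hops[nxt] = hops[node] + 1
                queue.append(nxt)
    del hops[start]
    return hops
```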

Better cost modeling also helps you budget for future cloud expenses in new development efforts as an application progresses from development to production (for example, estimating the percentage increase in cloud resource costs while a development team enhances and tests an application over 6 months, compared to maintaining it in production).

Smarter alerting through contextual awareness

Financial alerting and analysis today typically consists of disparate rules, each monitoring for a specific condition independently of the others. These rules are often grouped by common tags (such as cost center, environment, or project), but as previously discussed, tags are error prone and may be mislabeled or missing altogether. Incorporating the contextual awareness that the graph provides allows for smarter reporting and a better understanding of the relationships between individual alerts.

For example, consider the architecture depicted in Figure 5, and suppose that our AWS Budgets cost alert has been triggered on our untagged Lambda function because its spend exceeded the budgeted amount. Although the Lambda function is the triggering service, our graph reveals a service upstream of the Lambda function (the SQS queue) that could have caused the increase in Lambda usage. The graph also reveals a service downstream of the Lambda function (the DynamoDB table) whose spend we can expect to rise as a result of the increased Lambda usage.
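Finding that context amounts to looking up the alerted node’s direct predecessors and successors. The following Python sketch assumes hypothetical node IDs and directed edges that follow the Figure 5 flow described earlier, in which messages flow from the SQS queue to the polling Lambda function and then to DynamoDB.

```python
def alert_context(resource, edges):
    """Return (upstream, downstream) direct neighbors of an alerted resource.

    edges: directed (source, target) pairs, for example ("sqs", "lambda-poller").
    Upstream resources are possible causes; downstream ones may see follow-on spend.
    """
    upstream = sorted(src for src, dst in edges if dst == resource)
    downstream = sorted(dst for src, dst in edges if src == resource)
    return upstream, downstream
```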

The additional contextual awareness that a graph-based FinOps solution provides also helps you determine where specific financial alerts originate. For example, we may have an alert based on the tags Environment=Staging and Cost Center=123456. If not all of the resources in that architecture are tagged (for example, if the polling Lambda function and DynamoDB table are untagged), we may miss other potential causes of the alert. With our graph, however, we can find all the associated services in the entire architecture, even if they aren’t correctly tagged.
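A hedged sketch of that expansion in Python: seed the search with resources that carry every tag in the alert, then walk resource-to-resource edges (direction ignored) to collect the rest of the architecture, including untagged resources. Node IDs, edges, and tags are hypothetical and follow the Figure 5 scenario. Any resource outside the architecture that happens to carry the same tags would also become a seed, so the results are worth reviewing.

```python
from collections import defaultdict, deque


def resources_behind_tag_alert(tags, resource_edges, alert_tags):
    """Return (seeds, related): resources matching alert_tags, plus connected others.

    tags: hypothetical mapping of resource -> {tag_key: tag_value}.
    resource_edges: (source, target) pairs between resources; direction ignored.
    alert_tags: tag filter of the alert, for example {"Environment": "Staging"}.
    """
    neighbors = defaultdict(set)
    for a, b in resource_edges:
        neighbors[a].add(b)
        neighbors[b].add(a)
    seeds = {r for r, t in tags.items() if all(t.get(k) == v for k, v in alert_tags.items())}
    seen = set(seeds)
    queue = deque(seeds)
    while queue:  # breadth-first expansion from every tagged seed
        node = queue.popleft()
        for n in neighbors[node]:
            if n not in seen:
                seen.add(n)
                queue.append(n)
    return sorted(seeds), sorted(seen - seeds)  # related = untagged or differently tagged
```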

Having the context to know which alarms are related allows the FinOps practitioner to reduce the cognitive load of determining the context and relationship of those alerts, minimizing the false alarms and maximizing the effectiveness of their response. This brings compounded cost savings because less time is spent determining context, thereby allowing more effort to be spent on deeper analysis of costs.

Additional contextual awareness is not limited to financial benefits. Imagine that you get a cost anomaly alert because your Amazon Neptune Serverless instance has been scaled to the maximum capacity you set for an extended period, and you don’t know the cause. Your security counterparts also get this alert, check the flow logs for that Neptune cluster, and notice a significant outflow of traffic to several new destinations. By combining contextual cost alerting with contextual security alerting, you may be able to detect a complex data exfiltration attack in real time.

Enabling the future with generative AI and other AI tools

It’s no secret that companies are making significant investments in artificial intelligence approaches like generative AI. It is also no secret that generative AI can complete tasks only with the data it has been trained on and has access to. Retrieval-augmented generation (RAG) is a natural language processing technique that combines retrieval-based models and generative models to improve the quality and relevance of generated text. Large language models (LLMs) can be inconsistent in answering questions because they learn how words relate statistically, but not what they mean. The Cloud FinOps principles that a model was trained on may not reflect your company or industry, so it is important to ground its assumptions in the reality of your cloud costs and infrastructure. Implementing RAG in your LLM-based question answering system helps ensure that the model has access to your most current costs and forecasts, and that your analysts can check its claims against the retrieved data to verify that it can be trusted.

Graph technology has long been trusted to store the knowledge graphs that are key to powering new RAG-based solutions. Your knowledge graph can store not only the architecture of past projects, but also their associated costs and the performance they achieved. Imagine your LLM taking the requirements and diagrams of a new project and using that knowledge graph not only to forecast costs based on similar architectures, but also to scale those costs to your requirements relative to the performance those architectures achieved. Doing this before you’ve written a line of code can enable much more effective resource allocation and decision-making than is currently achievable. And this is just one scenario. In the previous sections, we showed the value that context about how your workflows and processes are interconnected brings to Cloud FinOps. Now imagine what your LLMs could do with that context of interconnectedness, instead of viewing each resource as an independent entity. Let us know in the comments what you’re imagining; we’d love to hear your ideas!

Beyond generative AI, you can also use graph ML tools such as the Deep Graph Library (DGL) to model and optimize your cloud costs in ways not possible today. Amazon Neptune ML uses graph neural network technology to automatically create, train, and deploy ML models on your graph data. GNNs make predictions about a graph by letting ML models use the connected nature of the data to generate features from the underlying network structure. Neptune ML supports common graph prediction tasks, such as node classification and regression, edge classification and regression, and link prediction. With our graph model, you could use a link prediction task to predict where a tagged edge should exist between a given untagged AWS resource node and a tag node. You could also use a node classification task to validate the correctness of the TagKey and TagValue properties on a tag node. Find more examples and use cases for Neptune ML in our AWS Labs GitHub repository.

Conclusion

In this post, we discussed how graph databases can be used to enhance the capabilities of your FinOps efforts by improving manual tagging strategies, providing better cost modeling and optimization, unlocking enhanced alerting capabilities through increased contextual awareness, and enabling the use of AI/ML techniques to gather additional insight for FinOps analyses.

Get started with building a graph-based FinOps solution today by modeling your application architecture in the form of a graph. For an example, refer to Graph your AWS resources with Amazon Neptune, or use open source tools such as Cartography or Starbase.

If you are a FinOps software provider, we hope you can use these techniques to provide better capabilities for your customers. If you manage FinOps for your company, we hope these ideas help you better optimize how your company uses the cloud. We look forward to seeing how you implement and expand on these ideas to advance the state of the art in FinOps.

If you have any comments or questions about this post, share them in the comments.

About the Authors

Brian O’Keefe is a Principal Specialist Solutions Architect at AWS focused on Neptune. He works with customers and partners to solve business problems using Amazon graph technologies. He has over two decades of experience in various software architecture and research roles, many of which involved graph-based applications.

Melissa Kwok is a Neptune Specialist Solutions Architect at AWS, where she helps customers of all sizes and verticals build cloud solutions with graph databases according to best practices. When she’s not at her desk, you can find her in the kitchen experimenting with new recipes or reading a cookbook.

Read more on the AWS Database Blog.