Most migrations from on-premises Oracle to Amazon Relational Database Service (Amazon RDS) for Oracle use EXPDP/IMPDB or Transportable Tablespaces using RMAN. Depending on the size and complexity, migrations are often split at the schema level and table level.

In this post, we present a solution to help you identify missing schema objects in the target database post-migration and identify any issues. We use a tool to compare source database Oracle schema objects against target database schema objects and provide the missing objects list in HTML format. We also discuss the types of issues you can encounter during migration and how this tool can help you proactively fix migration errors.

Reasons to validate

Oracle is a complex database with lots of flexibility, and due to the breadth of features, it may be necessary to validate your objects. The following are some common reasons why import/export may require further validation:

When we performed the full database export from an Enterprise Edition (EE) 11g database and imported only selected schemas into a Standard Edition (SE) 19C database, we received the errors ORA-39014: One or more workers have prematurely exited and ORA-39029: Worker 1 with process name “DW00” prematurely terminated. This is a limitation of the export and import process. Therefore, we had to perform the selected schema export from the EE 11g database into the SE 19C database.

Export doesn’t capture some types of objects, like ALTER TYPE statements for CREATE TYPES in export dumps, so they will need to be moved over manually:

Schema-level export and import of objects to the target database will minimize downtime. However, there will be lot of errors during expdp/impdp. Going through and identifying errors is a tedious task. Therefore, this sanity report helps you find any missing objects.

When there is no downtime for the source database, you would use either AWS Database Migration Service (AWS) DMS or Oracle GoldenGate. If any of DDL statement is not supported by the tools, our solution can help you find such missing objects.

At times, we disable triggers and foreign key constraints at the target database during the data migration process and enable them post-migration. This tool can capture any missed objects to enable.

If the source database code has wrapped (using DBMS_DDL.WRAP) code, importing the data into the target fails if you’re missing Oracle patch 20594149. Ensure patch 20594149 is applied first.

If the target database is Amazon RDS and has any missing patches (for example, patch Patch 8795792), the import may cause index creation failures.

If we neglect to set a static configuration parameter similar to source databases, the database needs to be restarted after the cutover.

There will be invalid objects if you migrate the database using expdp/impdp at the schema and table level. By invalid, we usually mean different types of objects such as synonyms, functions, packages, procedures, and views that have a reference to non-existing objects or to objects that were changed in some way or built in a different order.

The database link from the source must be reviewed. If the source database is on premises, the database link will connect to the older database after migration and therefore these need to be manually updated.

A SQL profile is a collection of information stored in the data dictionary that enables the query optimizer to create an optimal run plan for a SQL statement. The SQL profile contains corrections for poor optimizer estimates discovered during automatic SQL tuning. During migration, if you’re not migrating the SQL profiles, it can lead to performance issues.

Solution overview

The Oracle-to-Oracle object sanity post-migration tool works for the following use cases starting from Oracle 12C and above:

On-premises Oracle to Oracle on Amazon Elastic Compute Cloud (Amazon EC2) and vice versa

On-premises Oracle to Amazon RDS for Oracle and vice versa

Oracle on Amazon EC2 to Amazon RDS for Oracle and vice versa

Use this script for application schemas rather than for SYS or SYSTEM schema comparisons.

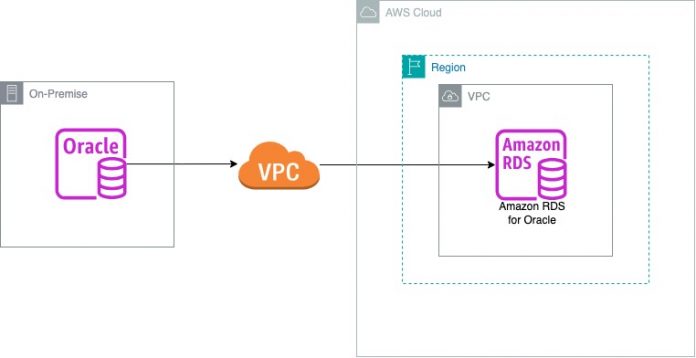

The following diagram depicts the solution architecture and AWS services used in this post.

Prerequisites

To follow along with this post, you need the following prerequisites:

An EC2 instance or bastion server that connects to the source database and target database

Access to the source and target database metadata with either SELECT_CATALOG_ROLE or DBA privileges

SQL *Plus

SQL *Loader

Clone the GitHub repo and provide the chmod 755 permissions for the files

Ensure you have connectivity to source database and target database from your workspace if run mode chosen is sql_ldr

Ensure you have connectivity to only the target database from your workspace if the run mode chosen is db_link

Validating objects

The following table summarizes the Oracle objects and validation fields.

Oracle Objects

Validation Fields

Schema list

Schema name, profile, status

Object types

Database object types, attributes, attribute position, data type, data type length

Sequences

Sequence start position, last number, min value, max value, status, is_cycle, cache_size

Tables

Tables, column names, column position, column data type, data length

Table partitions

Table name, table owner, partition name

Constraints

Constraint name, attribute, attribute type, position, status

Indexes

Index name, index column name, column position, index schema, status

Index partitions

Index name, partition name, status

Views

View name, number of lines of code

Synonyms

Synonym name, table name, database link

Triggers

Trigger name, table owner, table name, trigger type, status

Scheduler jobs

Scheduler job names, state

Queues

Queue name, queue table, queue type, enqueue enabled, dequeue enabled

Rule

Rule name, rule owner, rule status

Java

Object name, object owner

Scheduled programs

Program name, owner, status

Database links

Database link name, user, host details

Code line count

Verifying the number of lines count for every code object

Invalid list

Object name, object type, owner

Oracle patches

Patch ID, status

Oracle parameters

Parameter name, inst_id, default value, values

SQL profiles

Profile name, status

Roles

Role name

Roles and privileges

Grantee, granted, admin options

System privileges

Grantee, privilege, admin options

Table statistics

Table name, number of rows

LOB objects

Owner, table name, column name

DB profiles

Profile name, resource name

Objects count

Object type, count, owner combination

Perform schema validation

Database migration is a multistage process, which usually includes assessment, schema migration, data migration, testing, and many other steps spanning across the stages. The size and type of Oracle database migration you want to do greatly determines the tools you should use. For Oracle database, we can use native tools like export and import, and AWS tools like AWS DMS.

The following task list shows when you should conduct schema validation during your migration process:

Configure the source Oracle database server.

Configure the target Oracle database server.

Perform the code migration using the export and import process.

Perform schema and code validation.

To validate the schema conversion, compare the objects found in the source Oracle database and target Oracle database using your preferred query editor.

The standard validation method compares object counts in the source database against the counts in the target database. You can perform count verification for any schema object, but count validation alone doesn’t always satisfy end-users. In the previous section discussing reasons to validate, we discussed why we need to go beyond count verification. Users often look for object definition-level verification. This is why you must write your custom queries to retrieve the data definition language (DDL) from the database and compare them.

In this section, we walk you through the steps to perform schema validation:

After migrating the source Oracle database to the target Oracle database, verifying the schema list at the source and target is important for zero loss of objects. Use the following Oracle query to compare the schema list details:

Use your Oracle user name list in place of schema_name in the following example queries. If you exclude any schemas from migration in the source database or introduce one in the target database, then exclude those schemas by adding a WHERE clause filter in the preceding and subsequent queries.

As part of schema validation, you should verify the list of object types and its attribute-related information in the source and target database in order to ensure zero loss of data. Use the following Oracle query to compare the object type details:

Validate sequences with the following code:

Validate tables:

Validate table partitions:

Validate constraints:

Validate indexes:

Validate index partitions:

Validate views:

Validate synonyms:

Validate triggers:

Validate scheduler jobs:

Validate queues:

Validate rules:

Validate Java objects:

Validate scheduled programs:

Validate database links:

Validate lines of code in code objects:

Validate the invalid list:

Validate Oracle patches:

Validate Oracle parameters:

Validate SQL profiles:

Validate roles:

Validate roles and privileges:

Validate system privileges:

Validate table statistics:

Validate large objects (LOBs):

Validate DB profiles:

Validate object count by object type:

Use the post-migration sanity check tool

The post-migration sanity check tool runs the preceding queries in the source and target database and provides the differences in HTML format. You can run the tool in two different modes:

sql_ldr (Oracle SQL Loader) – The following script is secure and doesn’t need a password to be stored; it will request for a password:

db_link (Oracle DB Link) – Similarly, the following script will request for a password:

For a detailed step-to-step guide to run the tool, refer to the README file.

The following screenshots show a sample of the HTML sanity report.

All the object types discussed in this post are been covered in the HTML report. Refer the GitHub repo for the complete sanity report sample.

The HTML report includes two result sets:

Source Vs Target – This provides details of objects that exist in the source but not the target

Target Vs Source – This provides details of objects that exist in the target but not the source

Clean up

Because this solution runs on an Amazon EC2 server or bastion host, cleanup of AWS services is required. The script also has a feature to upload the report to Amazon Simple Storage Service (Amazon S3).

Summary

This post introduced a post-migration sanity check tool and demonstrated how it can help as a schema and code validator for Oracle-to-Oracle migration projects.

We strongly recommend going through this solution and implementing it wherever necessary. If you have any questions or comments about this post, please share your thoughts in the comments section.

About the Authors

Lokesh Gurram is a Lead Consultant with AWS ProServe, GCC India. He assists and enables customers to migrate servers and databases to AWS. He loves working backward and automating manual processes to enhance the user experience with least Business As Usual. His primary focus is homogeneous and heterogeneous migrations of on-premises databases to Amazon RDS along with complete migration automation solutions.

Suhas Basavaraj is a Lead Consultant AWS ProServe, GCC India. He is working as Database Migration Lead, helping and enabling customers in homogeneous and heterogeneous migrations from on premises to Amazon RDS. He is passionate about automation and building solutions to accelerate database migration.

Read MoreAWS Database Blog