Over the last two years, layer 2 technologies have gained traction and are addressing the scaling constraints of Ethereum. L2beat provides a consolidated view of the different layer 2 projects. At the time of writing, Arbitrum represents approximately half of the market value of layer 2 solutions.

AWS offers a variety of services to help builders, from node deployment blueprints to fully managed infrastructure and data services on Amazon Managed Blockchain. Although Arbitrum is not yet supported by Amazon Managed Blockchain, running your own serverless Arbitrum node on AWS is straightforward.

In this post, we show you how to deploy an Arbitrum full node in a serverless manner on AWS. We also illustrate how to interact with the Arbitrum network by connecting MetaMask to your Arbitrum node. To follow these instructions, you only need a working AWS account.

Solution overview

There are multiple reasons to run your own Arbitrum full node, including transaction validation, security, and reduced trust requirements. The How to run a full node (Nitro) section of the Arbitrum documentation contains the prerequisites and instructions to run an Arbitrum full node. In this post, we propose an AWS architecture that addresses those requirements. This architecture is not the only valid design; it is one way of meeting those requirements that favors serverless services like AWS Fargate. Alternatives based on Amazon Elastic Compute Cloud (Amazon EC2) instances would be equally valid.

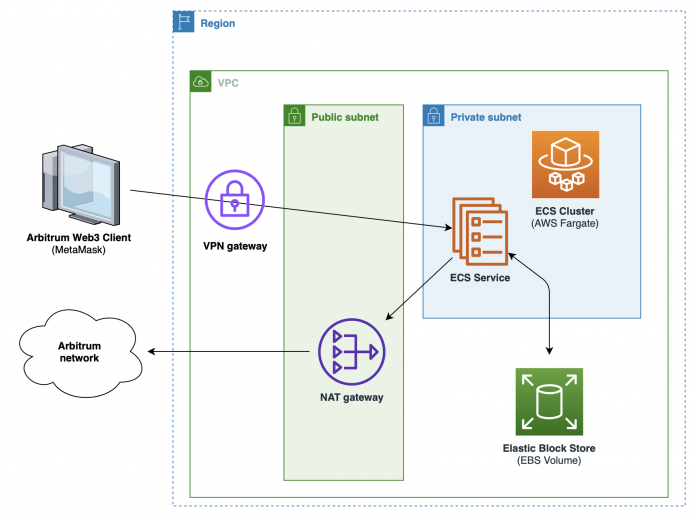

The following diagram shows the different components of the solution.

The following are some key elements to consider:

Tasks running on Fargate benefit from a native integration with Amazon Elastic Block Store (Amazon EBS) volumes. Refer to Amazon EBS volume performance for Fargate on-demand tasks for the volume performance baselines.

The proposed architecture has been tested on the Sepolia testnet and is intended to be deployed in development or test environments. In a production deployment, the Amazon Elastic Container Service (Amazon ECS) Fargate cluster should be replaced by an ECS cluster with the Amazon EC2 launch type, which can deliver higher storage performance.

At the time of writing, the latest stable version of the Arbitrum Nitro node is 2.3.1, but you may want to check for a more recent version.

This architecture is minimalistic and designed with cost in mind, but it can be extended to meet your specific requirements:

For increased resiliency, you may consider running nodes over multiple Availability Zones.

To connect to your Arbitrum node from another Web3 application backend service, you may want to use Amazon ECS Service Connect.

To avoid using the private IP of the service task (which may change) to connect to your Arbitrum node, you can extend this architecture following the guidance in Access container applications privately on Amazon ECS by using AWS Fargate, AWS PrivateLink, and a Network Load Balancer.

You can monitor IP traffic using VPC Flow Logs.

In the following sections, we walk through the steps to implement the solution. We recommend following these step-by-step instructions to get a full understanding of the different components being deployed. Alternatively, you can use the following AWS CloudFormation template. The automated deployment should take around 15 minutes to complete. You can then skip to the Test the node section of this post to check that your node successfully synchronizes with the network.

Create a VPC

Complete the following steps to create a VPC:

On the Amazon VPC console, choose Create VPC.

For Resources to create, choose VPC and more.

Under Name tag auto-generation, for Auto-generate, enter arbitrum.

For IPv4 CIDR block, enter 10.0.0.0/23.

For IPv6 CIDR block, choose No IPv6 CIDR block.

For Number of Availability Zones, choose 1.

For Number of public subnets, choose 1.

For Number of private subnets, choose 1.

For Public subnet CIDR block, enter 10.0.0.0/24.

For Private subnet CIDR block, enter 10.0.1.0/24.

For NAT Gateways, choose 1 per AZ.

For VPC endpoints, choose None.

For DNS options, choose Enable DNS hostnames and Enable DNS resolution.

Choose Create VPC.
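The CIDR values entered above can be sanity-checked before creating the VPC. The following is a minimal sketch using only the Python standard library; the helper name `fits` is ours and is not part of any AWS tooling. It confirms that the two /24 subnets sit inside the /23 VPC range and do not overlap.

```python
import ipaddress

# CIDR blocks taken from the console steps above.
vpc = ipaddress.ip_network("10.0.0.0/23")
public = ipaddress.ip_network("10.0.0.0/24")
private = ipaddress.ip_network("10.0.1.0/24")

# Splitting the /23 into /24 blocks lists every /24 the VPC can hold.
subnets = list(vpc.subnets(new_prefix=24))


def fits(vpc_net, subnet_nets):
    """Return True if every subnet is inside the VPC and no two subnets overlap."""
    inside = all(s.subnet_of(vpc_net) for s in subnet_nets)
    disjoint = all(
        not a.overlaps(b)
        for i, a in enumerate(subnet_nets)
        for b in subnet_nets[i + 1:]
    )
    return inside and disjoint


print(subnets)
print(fits(vpc, [public, private]))
```

A /23 holds exactly two /24 blocks, so this layout leaves no room for an additional subnet. If you plan to extend the architecture to more Availability Zones, you may want a larger VPC range.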

Create IAM roles

Verify that the AWS Identity and Access Management (IAM) role ecsTaskExecutionRole exists. For more information, refer to Amazon ECS task execution IAM role.

Create an ecsInfrastructureRole IAM role using the AmazonECSInfrastructureRolePolicyForVolumes managed policy. For detailed instructions, refer to Amazon ECS infrastructure IAM role.

Create a CloudWatch log group

Create an /ecs/arbitrum-node Amazon CloudWatch log group. For detailed instructions, refer to Working with log groups and log streams.

Create security groups

In this step, we create two security groups. For full instructions, refer to Create a security group.

Create a VPN security group

First, create a security group for the VPN:

On the Amazon EC2 console, choose Security groups in the navigation pane.

Choose Create security group.

For Name, enter arbitrum-vpn-sg.

For Description, enter Arbitrum VPN security group.

For VPC, choose arbitrum-vpc.

Choose Create security group.

Create an Arbitrum node security group

Next, create a security group for the Arbitrum node:

On the Amazon EC2 console, choose Security groups in the navigation pane.

Choose Create security group.

For Name, enter arbitrum-node-sg.

For Description, enter Arbitrum node security group.

For VPC, choose arbitrum-vpc.

Create the following inbound rule:

For Type, choose Custom TCP.

For Port range, enter 8547.

For Source, choose Custom, then choose the arbitrum-vpn-sg security group.

For Description, enter Arbitrum RPC.

Choose Create security group.
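If you prefer to script this step, the inbound rule above can be expressed as parameters for the EC2 `authorize_security_group_ingress` API. The sketch below only builds the parameters; the security group IDs are hypothetical placeholders that you would replace with the IDs of arbitrum-node-sg and arbitrum-vpn-sg, and `apply_rule` assumes boto3 is installed and AWS credentials are configured.

```python
def rpc_ingress_rule(node_sg_id, vpn_sg_id, port=8547):
    """Build keyword arguments for EC2 authorize_security_group_ingress.

    The rule allows TCP traffic on the RPC port, but only from members
    of the VPN security group.
    """
    return {
        "GroupId": node_sg_id,
        "IpPermissions": [
            {
                "IpProtocol": "tcp",
                "FromPort": port,
                "ToPort": port,
                "UserIdGroupPairs": [
                    {"GroupId": vpn_sg_id, "Description": "Arbitrum RPC"}
                ],
            }
        ],
    }


def apply_rule(params):
    """Send the rule to AWS (assumes boto3 and valid credentials)."""
    import boto3  # imported lazily so the builder works without boto3

    return boto3.client("ec2").authorize_security_group_ingress(**params)


# Hypothetical IDs, for illustration only.
params = rpc_ingress_rule("sg-0123456789abcdef0", "sg-0fedcba9876543210")
print(params)
```

Referencing the VPN security group as the source, rather than a CIDR range, means only traffic that arrives through the Client VPN endpoint can reach port 8547.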

Create an ECS cluster

Complete the following steps to create an ECS cluster:

On the Amazon ECS console, choose Clusters in the navigation pane.

Choose Create cluster.

For Cluster name, enter arbitrum-cluster.

For Infrastructure, choose AWS Fargate (serverless).

Choose Create.

Create a task definition

Complete the following steps to create a task definition:

On the Amazon ECS console, choose Task definitions in the navigation pane.

Choose Create new task definition, then Create new task definition with JSON.

Replace the content in the editor pane with the following:

Update the placeholders:

For <SEPOLIA_BEACON_CHAIN_API_ENDPOINT>, enter the URL of the Sepolia beacon chain endpoint. For more details on why this endpoint is required, refer to the Arbitrum 2.3.0 release notes.

For <SEPOLIA_ENDPOINT>, enter the URL of the Sepolia execution client endpoint.

For <ecsTaskExecutionRole_ARN>, enter the ARN of the ecsTaskExecutionRole IAM role.

For <AWS_REGION>, enter your AWS Region (for example, us-east-1).

Choose Create to create the task definition.
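Forgetting one of the placeholders is an easy mistake to make when editing the JSON by hand. As a hedged sketch, the following helper substitutes the four placeholders listed above and fails if any `<NAME>` token is left in the result. The template fragment and the values in the example are hypothetical and stand in for the full task definition and your own endpoints.

```python
import json
import re

# Placeholder names as they appear in the task definition JSON.
PLACEHOLDERS = (
    "SEPOLIA_BEACON_CHAIN_API_ENDPOINT",
    "SEPOLIA_ENDPOINT",
    "ecsTaskExecutionRole_ARN",
    "AWS_REGION",
)


def fill_placeholders(template, values):
    """Replace each <NAME> placeholder and fail if any is left unfilled."""
    result = template
    for name in PLACEHOLDERS:
        result = result.replace(f"<{name}>", values[name])
    leftover = re.findall(r"<[A-Za-z_]+>", result)
    if leftover:
        raise ValueError(f"unfilled placeholders: {leftover}")
    return result


# Hypothetical fragment and values, for illustration only.
template = '{"executionRoleArn": "<ecsTaskExecutionRole_ARN>", "region": "<AWS_REGION>"}'
values = {
    "SEPOLIA_BEACON_CHAIN_API_ENDPOINT": "https://beacon.example.com",
    "SEPOLIA_ENDPOINT": "https://sepolia.example.com",
    "ecsTaskExecutionRole_ARN": "arn:aws:iam::123456789012:role/ecsTaskExecutionRole",
    "AWS_REGION": "us-east-1",
}
filled = fill_placeholders(template, values)
json.loads(filled)  # raises if the result is no longer valid JSON
print(filled)
```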

Create a new service

Create a new service with the following steps:

On the Amazon ECS console, choose Clusters in the navigation pane.

Open the arbitrum-cluster cluster and, on the Services tab, choose Create.

Under Compute options, choose Launch type with the following parameters:

For Launch type, choose FARGATE.

For Platform version, choose LATEST.

Under Deployment configuration, configure the following parameters:

For Family, choose arbitrum-node.

For Revision, choose (LATEST).

For Service name, enter arbitrum-node-service.

For Desired tasks, enter 1.

Under Deployment options:

Choose Rolling update.

For Min running tasks, enter 0%.

For Max running tasks, enter 100%.

Under Networking:

For VPC, choose arbitrum-vpc.

For Subnets, choose the arbitrum-vpc private subnet.

For Security group, choose arbitrum-node-sg.

For Public IP, choose Turned off.

Under Volume:

For Size (GiB), enter 1000.

For IOPS, enter 5000.

For File system type, choose EXT4.

For Infrastructure role, choose ecsInfrastructureRole.

Choose Create.
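For reference, the console settings above map onto parameters of the Amazon ECS `CreateService` API. The sketch below builds those parameters as a dictionary that could be passed to boto3's `ecs.create_service(**params)`. The subnet ID, security group ID, role ARN, and volume name `arbitrum-data` are hypothetical; the volume name in particular is an assumption and must match a volume that the task definition declares as configured at launch.

```python
def service_params(subnet_id, node_sg_id, infra_role_arn, volume_name="arbitrum-data"):
    """Build keyword arguments for ECS create_service from the console settings."""
    return {
        "cluster": "arbitrum-cluster",
        "serviceName": "arbitrum-node-service",
        "taskDefinition": "arbitrum-node",  # family name resolves to the latest active revision
        "desiredCount": 1,
        "launchType": "FARGATE",
        "platformVersion": "LATEST",
        # Rolling update: min running tasks 0%, max running tasks 100%.
        "deploymentConfiguration": {
            "minimumHealthyPercent": 0,
            "maximumPercent": 100,
        },
        "networkConfiguration": {
            "awsvpcConfiguration": {
                "subnets": [subnet_id],
                "securityGroups": [node_sg_id],
                "assignPublicIp": "DISABLED",
            }
        },
        # EBS volume created by ECS when the task launches.
        "volumeConfigurations": [
            {
                "name": volume_name,  # assumption: must match the task definition
                "managedEBSVolume": {
                    "sizeInGiB": 1000,
                    "iops": 5000,
                    "filesystemType": "ext4",
                    "roleArn": infra_role_arn,
                },
            }
        ],
    }


# Hypothetical identifiers, for illustration only.
params = service_params(
    "subnet-0123456789abcdef0",
    "sg-0123456789abcdef0",
    "arn:aws:iam::123456789012:role/ecsInfrastructureRole",
)
print(params["deploymentConfiguration"])
```

With a minimum of 0% and a maximum of 100%, a rolling update stops the running task before starting its replacement, so at most one node task runs at any time.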

The service will automatically start an ECS task with the Arbitrum full node container. To check the logs of the container, navigate to the arbitrum-cluster cluster, and on the Tasks tab, choose the newly created task. You can then check the logs of the container on the Logs tab.

After the container finishes synchronizing with the network, which could take several hours, you should be able to check that the last created blocks correspond to the ones from Arbiscan, as shown in the following screenshot:

Test the node

To test the newly created node, you can connect to your environment using a Client VPN, then connect MetaMask (a popular browser wallet) to the Arbitrum node endpoint.

Access the Arbitrum endpoint with a Client VPN

Start by generating certificates following the instructions in Mutual authentication. To do that, you can open an AWS CloudShell window and enter the following commands:

Now you can create your Client VPN. For full instructions, refer to Getting started with AWS Client VPN.

On the Amazon VPC console, choose Client VPN endpoints, then choose Create client VPN endpoint.

For Name, enter arbitrum-vpn-endpoint.

For Description, enter VPN remote access to Arbitrum node.

For Client IPv4 CIDR, enter 10.1.0.0/16.

For Server certificate ARN, choose the server certificate you created.

For Authentication options, choose Use mutual authentication.

For Client certificate ARN, choose the server certificate you created.

Choose Enable split-tunnel.

For VPC ID, choose arbitrum-vpc.

For Security group IDs, choose arbitrum-vpn-sg.

Choose Create client VPN endpoint to complete the creation of your Client VPN.
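The client CIDR range must not overlap with the VPC range. To our understanding of the AWS Client VPN documentation, its block size must also be between /12 and /22. The following minimal sketch checks both conditions with the Python standard library; the function name is ours.

```python
import ipaddress

vpc = ipaddress.ip_network("10.0.0.0/23")     # arbitrum-vpc range
client = ipaddress.ip_network("10.1.0.0/16")  # Client IPv4 CIDR entered above


def valid_client_cidr(client_net, vpc_net):
    """Check the block size (/12 to /22) and the absence of overlap with the VPC."""
    return 12 <= client_net.prefixlen <= 22 and not client_net.overlaps(vpc_net)


print(valid_client_cidr(client, vpc))
```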

Next, associate a target network.

Choose the Client VPN endpoint you created, choose Target network associations, then choose Associate target network.

For VPC, choose arbitrum-vpc.

For Choose a subnet to associate, choose the arbitrum-vpc private subnet.

Wait for the state of the client VPN endpoint to be Available.

Next, create an authorization rule.

For Destination network to enable access, enter 10.0.1.0/24 (the arbitrum-vpc private network CIDR range).

For Grant access to, choose Allow access to all users.

Wait for the authorization rule state to be Active.

Lastly, configure your local OpenVPN client:

From AWS CloudShell, choose Actions, Download file, and enter /home/cloudshell-user/easy-rsa/easyrsa3/pki/issued/arbitrum-vpn-client.mydomain.com.crt

From AWS CloudShell, choose Actions, Download file, and enter /home/cloudshell-user/easy-rsa/easyrsa3/pki/private/arbitrum-vpn-client.mydomain.com.key

From the Client VPN endpoints console, choose Download client configuration and add the following at the end of the downloaded file, replacing the placeholders with the content of the previously downloaded files:

You can now use this configuration file to connect to the Client VPN endpoint with the AWS-provided client or another OpenVPN-based client application. For more information, see the AWS Client VPN User Guide.
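Editing the configuration file by hand can be avoided with a few lines of Python. This sketch assumes that the client certificate and key are embedded with OpenVPN's inline `<cert>` and `<key>` blocks; the output file name is hypothetical, and the input paths are the files downloaded in the previous steps.

```python
def add_client_credentials(config_text, cert_text, key_text):
    """Append inline <cert> and <key> blocks to an OpenVPN client configuration."""
    return (
        config_text.rstrip("\n")
        + "\n\n<cert>\n" + cert_text.strip() + "\n</cert>\n"
        + "\n<key>\n" + key_text.strip() + "\n</key>\n"
    )


# Example with in-memory strings; in practice, read the three downloaded files
# (for example with pathlib.Path(...).read_text()) and write the result to a
# new file such as arbitrum-vpn.ovpn (hypothetical name).
example = add_client_credentials(
    "client\ndev tun\n",
    "-----BEGIN CERTIFICATE-----\n...\n-----END CERTIFICATE-----\n",
    "-----BEGIN PRIVATE KEY-----\n...\n-----END PRIVATE KEY-----\n",
)
print(example)
```

The private key grants access to your VPN endpoint, so keep the resulting file out of shared folders and version control.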

Configure MetaMask

In this section, we assume that you already have MetaMask installed in your browser and enough ETH on the Sepolia testnet to pay the transaction gas fees. If that’s not the case, you should start by downloading and installing MetaMask in your browser, and get ETH from a faucet for the Sepolia testnet (refer to Networks for a complete list).

Complete the following steps to first collect the node’s private IP:

On the Amazon ECS console, navigate to the arbitrum-cluster cluster.

On the Tasks tab, choose the running task and note the private IP.

Now you can start configuring MetaMask.

In MetaMask, choose Add a network manually and create a new network.

For Network name, enter Arbitrum-Sepolia-AWS.

For RPC URL, enter http://ARBITRUM_NODE_IP:8547 (ARBITRUM_NODE_IP must match the IP you noted in the previous step).

For Chain ID, enter 421614.

For Currency symbol, enter ETH.

For Block explorer URL, enter https://sepolia.arbiscan.io/.
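Before relying on MetaMask, you may want to confirm that the endpoint answers JSON-RPC requests and reports the expected chain ID. The following is a minimal sketch using only the Python standard library and the standard Ethereum methods `eth_chainId` and `eth_blockNumber`. The helper names are ours, and `check_node` only works while you are connected to the Client VPN.

```python
import json
import urllib.request

EXPECTED_CHAIN_ID = 421614  # Arbitrum Sepolia, as entered in MetaMask


def rpc_payload(method, params=None, request_id=1):
    """Build a JSON-RPC 2.0 request body."""
    return {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params or []}


def parse_quantity(response):
    """Decode the hex-encoded integer carried in a JSON-RPC response."""
    if "error" in response:
        raise RuntimeError(response["error"])
    return int(response["result"], 16)


def call(rpc_url, method):
    """POST one JSON-RPC request to the node and return the decoded reply."""
    request = urllib.request.Request(
        rpc_url,
        data=json.dumps(rpc_payload(method)).encode(),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=10) as reply:
        return json.load(reply)


def check_node(rpc_url):
    """Return (chain ID matches, latest block number known to the node)."""
    chain_id = parse_quantity(call(rpc_url, "eth_chainId"))
    block = parse_quantity(call(rpc_url, "eth_blockNumber"))
    return chain_id == EXPECTED_CHAIN_ID, block


# Usage, once connected to the VPN (replace ARBITRUM_NODE_IP with the task's private IP):
# print(check_node("http://ARBITRUM_NODE_IP:8547"))
```

You can compare the returned block number with the latest block shown on the Arbitrum Sepolia block explorer to judge whether the node has finished synchronizing.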

Test using the Arbitrum bridge

Connect to the Arbitrum bridge at https://bridge.arbitrum.io/?l2ChainId=421614.

After connecting MetaMask, you should be able to transfer ETH back and forth between the Sepolia testnet (Layer 1) and the Arbitrum Sepolia testnet (Layer 2) by selecting the source and target networks, and entering the amount of ETH to transfer.

Clean up

If you deployed the solution manually, delete the elements that you created in the following order:

On the Amazon ECS console, delete the arbitrum-cluster ECS cluster.

Navigate to the arbitrum-node task definition and deregister all revisions.

On the Amazon VPC console, navigate to the arbitrum-vpn-endpoint client VPN endpoint and disassociate the private subnet on the Target network associations tab.

Delete the arbitrum-vpn-endpoint VPN endpoint.

On the AWS Certificate Manager console, delete the certificate corresponding to the arbitrum-vpn-server domain.

On the Amazon EC2 console, delete the arbitrum-node-sg and arbitrum-vpn-sg security groups.

On the Amazon VPC console, delete the NAT gateway.

Delete the arbitrum-vpc VPC.

Alternatively, if you used the CloudFormation template, complete the following steps:

On the Amazon VPC console, navigate to the arbitrum-vpn-endpoint client VPN endpoint and disassociate the private subnet on the Target network associations tab.

Delete the arbitrum-vpn-endpoint VPN endpoint.

On the AWS Certificate Manager console, delete the certificate corresponding to the arbitrum-vpn-server domain.

On the AWS CloudFormation console, delete the stack.

Conclusion

In this post, we showed you how to deploy an Arbitrum full node using AWS serverless services. We hope this will allow you to experiment with Ethereum layer 2 solutions. You can use the proposed architecture as a starting point to build more complex Web3 applications, and integrate those with the Web3 ecosystem already running on AWS.

About the Author

Guillaume Goutaudier is a Sr Enterprise Architect at AWS. He helps companies build strategic technical partnerships with AWS. He is also passionate about blockchain technologies, and is a member of the Technical Field Community for blockchain.

Read more: AWS Database Blog