One of the benefits of Google Kubernetes Engine (GKE) is its fully integrated network model, in which Pod addresses are routable within the VPC network. But as your usage of GKE scales across your organization, you might find it difficult to allocate network space in a single VPC, and you can quickly run out of IP addresses. If your organization needs multiple GKE clusters, carefully planning your network so that enough unique IP ranges are reserved for every cluster in the VPC network becomes a tedious task.

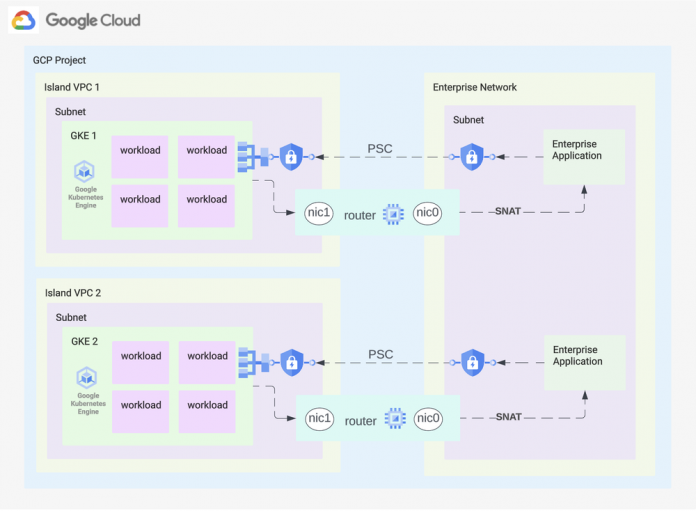

You can use the following design to fully reuse IP address space across your GKE clusters. In this blog post, we present an architecture that uses Private Service Connect (PSC) to hide the GKE cluster ranges, and connects the networks through a multi-NIC VM that functions as a router appliance. This keeps the GKE cluster networks hidden but connected, so that multiple clusters can reuse the same address space.

Architecture

Platform components:

- Each cluster is created in an island VPC.
- A VM router with multiple NICs enables connectivity between the existing network and the island network.
- The sample code uses a single VM as the router, but you can expand this to a managed instance group (MIG) for a highly available architecture.
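
As a rough illustration of the router appliance, the following sketch creates a VM with one NIC in the enterprise VPC and one in an island VPC. It is not taken from the sample Terraform: the function name, the `e2-medium` machine type, and all network and subnet names are hypothetical. `--can-ip-forward` allows the VM to forward packets that are not addressed to it, and each NIC of a VM must attach to a different VPC network. Setting `GCLOUD=echo` prints the command instead of running it.

```shell
# Hypothetical helper: create a two-NIC router VM between the enterprise VPC (nic0)
# and an island VPC (nic1). Set GCLOUD=echo to preview the command.
create_router_vm() {
  local name="$1" zone="$2"
  local enterprise_net="$3" enterprise_subnet="$4"
  local island_net="$5" island_subnet="$6"

  # --can-ip-forward: let the VM forward packets whose addresses are not its own.
  # no-address: keep both NICs internal-only (no external IP).
  "${GCLOUD:-gcloud}" compute instances create "$name" \
    --zone="$zone" \
    --machine-type=e2-medium \
    --can-ip-forward \
    --network-interface=network="$enterprise_net",subnet="$enterprise_subnet",no-address \
    --network-interface=network="$island_net",subnet="$island_subnet",no-address
}
```

For example: `create_router_vm gke-router <ZONE> <ENTERPRISE_NETWORK> <ENTERPRISE_SUBNET> <ISLAND_NETWORK> <ISLAND_SUBNET>`. On Linux, the guest OS must also have IP forwarding enabled (for example, `sysctl -w net.ipv4.ip_forward=1`). Because island ranges overlap across clusters, the router will most likely also need to source-NAT the traffic it forwards into the enterprise network; how the sample code configures this is not shown here.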

Connectivity for services:

- For inbound connectivity, services deployed on GKE are exposed through Private Service Connect endpoints. This requires reserving static internal IP addresses in the enterprise network and creating DNS records so that clients can reach the services by name.
- For outbound connections from the cluster, all traffic is routed through the network appliance to the enterprise network.
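
Both connectivity paths need a small amount of network configuration. The sketch below shows one way to do it with `gcloud`, under these assumptions: a Cloud DNS private zone already exists for the enterprise network, the router VM has a NIC in the island VPC, and all function, zone, and route names are hypothetical. The first function publishes an A record that points at a PSC endpoint's reserved IP address; the second adds a static route in an island VPC that sends traffic for an enterprise range to the router VM. Setting `GCLOUD=echo` previews the commands.

```shell
# Inbound (hypothetical helper): publish an A record for a PSC endpoint's
# reserved internal IP in an existing Cloud DNS private zone.
create_psc_dns_record() {
  local zone="$1" fqdn="$2" ip="$3"
  "${GCLOUD:-gcloud}" dns record-sets create "$fqdn" \
    --zone="$zone" \
    --type=A \
    --ttl=300 \
    --rrdatas="$ip"
}

# Outbound (hypothetical helper): in an island VPC, route traffic for an
# enterprise destination range to the router VM. Google Cloud delivers it to
# the router NIC that is attached to this island network.
route_via_router() {
  local route="$1" island_net="$2" dest_range="$3" router_vm="$4" zone="$5"
  "${GCLOUD:-gcloud}" compute routes create "$route" \
    --network="$island_net" \
    --destination-range="$dest_range" \
    --next-hop-instance="$router_vm" \
    --next-hop-instance-zone="$zone"
}
```

For example: `create_psc_dns_record <DNS_ZONE> whereami.example.internal. <PSC_ENDPOINT_IP>` and `route_via_router island-to-enterprise <ISLAND_NETWORK> <ENTERPRISE_CIDR> gke-router <ZONE>`, where the hostname and route name are placeholders.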

Getting started

1. Deploy basic infrastructure

You can provision the initial networking and GKE cluster configuration with the following public Terraform code. Follow the README to deploy a cluster, and adjust the cluster's IP address ranges as needed.

2. Expose a service on GKE

Create a new subnet for the PSC service attachment. This subnet must be in the same region as the GKE cluster and must be created with `--purpose=PRIVATE_SERVICE_CONNECT`. The Terraform code also creates a PSC subnet; you can adjust its CIDR range as needed.
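
If you manage the PSC subnet yourself instead of through the Terraform code, a command like the following creates it. This is a sketch: the function and subnet names are hypothetical, and the CIDR range is yours to choose. Setting `GCLOUD=echo` previews the command.

```shell
# Hypothetical helper: create a subnet reserved for Private Service Connect NAT
# in the cluster's VPC network. Use the same region as the GKE cluster.
create_psc_subnet() {
  local name="$1" network="$2" region="$3" cidr="$4"
  "${GCLOUD:-gcloud}" compute networks subnets create "$name" \
    --network="$network" \
    --region="$region" \
    --range="$cidr" \
    --purpose=PRIVATE_SERVICE_CONNECT
}
```

For example: `create_psc_subnet whereami-psc-subnet <NETWORK_NAME> <REGION> <PSC_SUBNET_CIDR>`. Because a subnet cannot be shared between service attachments, you would create one such subnet per exposed service.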

Then, deploy a sample workload on GKE:

```shell
kubectl apply -f - <<EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: psc-ilb
spec:
  replicas: 3
  selector:
    matchLabels:
      app: psc-ilb
  template:
    metadata:
      labels:
        app: psc-ilb
    spec:
      containers:
      - name: whereami
        image: us-docker.pkg.dev/google-samples/containers/gke/whereami:v1.2.19
        ports:
        - name: http
          containerPort: 8080
        readinessProbe:
          httpGet:
            path: /healthz
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 5
          timeoutSeconds: 1
EOF
```

Now, expose the deployment through an internal passthrough Network Load Balancer:

```shell
kubectl apply -f - <<EOF
apiVersion: v1
kind: Service
metadata:
  name: whereami
  annotations:
    networking.gke.io/load-balancer-type: "Internal"
spec:
  type: LoadBalancer
  selector:
    app: psc-ilb
  ports:
  - port: 80
    targetPort: 8080
    protocol: TCP
EOF
```
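
GKE provisions the internal load balancer asynchronously, so the Service may not have an IP address right away. Before creating the ServiceAttachment, you might poll for it with a helper like this one. The function name, default timeout, and polling interval are assumptions; `KUBECTL` can be overridden to point at a different `kubectl`.

```shell
# Hypothetical helper: poll until GKE assigns an internal IP to a LoadBalancer
# Service. Prints the IP on success; fails after timeout seconds (default 300).
wait_for_lb_ip() {
  local svc="$1" timeout="${2:-300}" interval="${3:-5}" waited=0 ip=""
  while [ "$waited" -lt "$timeout" ]; do
    ip=$("${KUBECTL:-kubectl}" get service "$svc" \
      -o jsonpath='{.status.loadBalancer.ingress[0].ip}' 2>/dev/null)
    if [ -n "$ip" ]; then
      echo "$ip"
      return 0
    fi
    sleep "$interval"
    waited=$((waited + interval))
  done
  echo "timed out waiting for an IP on service $svc" >&2
  return 1
}
```

For example, `wait_for_lb_ip whereami` prints the load balancer's internal IP once it is assigned.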

Create a ServiceAttachment for the load balancer:

```shell
PSC_SUBNET=$(gcloud compute networks subnets list --network <NETWORK_NAME> --filter purpose=PRIVATE_SERVICE_CONNECT --format json | jq -r '.[0].name')

kubectl apply -f - <<EOF
apiVersion: networking.gke.io/v1
kind: ServiceAttachment
metadata:
  name: whereami-service-attachment
  namespace: default
spec:
  connectionPreference: ACCEPT_AUTOMATIC
  natSubnets:
  - ${PSC_SUBNET}
  proxyProtocol: false
  resourceRef:
    kind: Service
    name: whereami
EOF
```

3. Connect a PSC endpoint

Retrieve the ServiceAttachment URL:

```shell
WHEREAMI_SVC_ATTACHMENT_URL=$(kubectl get serviceattachment whereami-service-attachment -o=jsonpath="{.status.serviceAttachmentURL}")
```

Using this URL, create a Private Service Connect endpoint in the connected VPC network.

```shell
gcloud compute addresses create whereami-ip \
  --region=<REGION> \
  --subnet=<SUBNET>

gcloud compute forwarding-rules create whereami-psc-endpoint \
  --region=<REGION> \
  --network=<NETWORK_NAME> \
  --address=whereami-ip \
  --target-service-attachment="${WHEREAMI_SVC_ATTACHMENT_URL}" \
  --allow-psc-global-access
```
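
To confirm that the service attachment accepted the endpoint, you can read the forwarding rule's `pscConnectionStatus` field. With `connectionPreference: ACCEPT_AUTOMATIC`, we would expect it to report `ACCEPTED`. The helper name is hypothetical, and setting `GCLOUD=echo` previews the command.

```shell
# Hypothetical helper: print the PSC connection status of a consumer endpoint
# (forwarding rule), for example ACCEPTED or PENDING.
psc_endpoint_status() {
  local rule="$1" region="$2"
  "${GCLOUD:-gcloud}" compute forwarding-rules describe "$rule" \
    --region="$region" \
    --format='value(pscConnectionStatus)'
}
```

For example: `psc_endpoint_status whereami-psc-endpoint <REGION>`.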

4. Test access

From the connected network, you can access the service through curl:

```shell
curl "$(gcloud compute addresses list --filter="name=whereami-ip" --format="value(address)")"
```

Limitations

This design has the following limitations:

- It is subject to the per-project quota on the number of VPC networks, because each cluster is created in its own island VPC. You can view project quotas with `gcloud compute project-info describe --project PROJECT_ID`.
- Limitations for Private Service Connect apply.
- You can create a service attachment only in GKE versions 1.21.4-gke.300 and later.
- You cannot use the same subnet in multiple service attachment configurations.
- At the time of writing, you must create a GKE Service that uses an internal passthrough Network Load Balancer to use PSC.
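
Two of these limits are easy to check before you deploy. The sketch below, which is not part of the sample code, reports VPC network usage against quota (assuming Compute Engine reports it under the `NETWORKS` quota metric) and compares a cluster's control plane version with the 1.21.4-gke.300 minimum using GNU `sort -V`. Function names are hypothetical; `GCLOUD` can be overridden, for example with `echo`, to preview commands.

```shell
# Hypothetical helper: show VPC network usage and limit for a project.
vpc_network_quota() {
  local project="$1"
  "${GCLOUD:-gcloud}" compute project-info describe \
    --project="$project" \
    --flatten=quotas \
    --filter='quotas.metric=NETWORKS' \
    --format='table(quotas.metric,quotas.usage,quotas.limit)'
}

# Succeed if version $1 >= version $2, using GNU sort's version ordering.
version_ge() {
  [ "$(printf '%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Hypothetical helper: succeed if the cluster's control plane version meets the
# minimum GKE version for service attachments (1.21.4-gke.300).
cluster_supports_service_attachment() {
  local cluster="$1" location="$2" current
  current=$("${GCLOUD:-gcloud}" container clusters describe "$cluster" \
    --location="$location" \
    --format='value(currentMasterVersion)') || return 1
  version_ge "$current" "1.21.4-gke.300"
}
```

For example: `vpc_network_quota <PROJECT_ID>` and `cluster_supports_service_attachment <CLUSTER_NAME> <REGION> && echo "OK"`.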

Because of these limitations, you must deploy the Google Cloud resources created above (subnet, internal passthrough Network Load Balancer, service attachment, and PSC endpoint) for each GKE service that you want to expose.

This design is best suited for enterprises looking to save IP address space for GKE clusters by reusing the same address space for multiple clusters in a single project.

Because this design requires these Google Cloud resources for every GKE service, consider using an ingress gateway (for example, Istio service mesh or NGINX) to consolidate traffic into a single entry point in GKE.
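
As a sketch of that consolidation, the function below publishes one ingress gateway Service through a single ServiceAttachment, so the PSC resources are created once for the gateway rather than once per application. It assumes the gateway's Service already exists in the given namespace and is exposed through an internal passthrough Network Load Balancer (type `LoadBalancer` with the `networking.gke.io/load-balancer-type: "Internal"` annotation). The function name and the example namespace and Service names are hypothetical; `KUBECTL` can be overridden.

```shell
# Hypothetical helper: attach a single ingress gateway Service to PSC, in the
# same namespace as that Service, using a dedicated PSC NAT subnet.
attach_ingress_gateway() {
  local namespace="$1" gateway_svc="$2" psc_subnet="$3"
  "${KUBECTL:-kubectl}" apply -f - <<EOF
apiVersion: networking.gke.io/v1
kind: ServiceAttachment
metadata:
  name: ${gateway_svc}-attachment
  namespace: ${namespace}
spec:
  connectionPreference: ACCEPT_AUTOMATIC
  natSubnets:
  - ${psc_subnet}
  proxyProtocol: false
  resourceRef:
    kind: Service
    name: ${gateway_svc}
EOF
}
```

For example: `attach_ingress_gateway istio-system istio-ingressgateway <PSC_SUBNET_NAME>`, using a PSC subnet that no other service attachment uses. The gateway then routes requests to individual services inside the cluster.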

Learn more

To learn more about this topic, check out the following links:

Documentation: GKE Networking Models

Documentation: Hide Pod IP addresses by using Private Service Connect

Documentation: Publish services using Private Service Connect
