This blog post follows our guide to Designing TIC 3.0 compliant solutions on Google Cloud and provides extended guidance on telemetry reporting requirements.

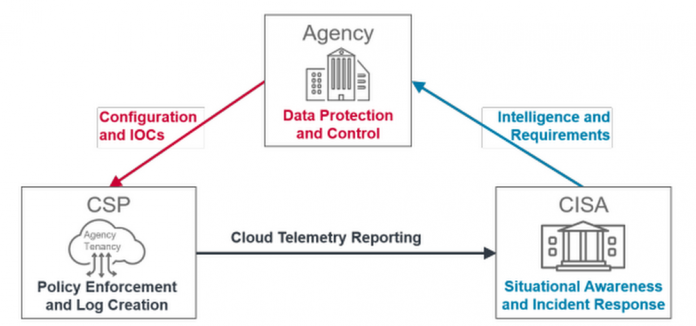

The National Cybersecurity Protection System (NCPS) provides capabilities to enable the Cybersecurity and Infrastructure Security Agency (CISA) to secure and defend federal civilian agencies’ IT infrastructure against advanced cyber threats. As agencies are moving their IT infrastructure to the cloud, the NCPS program has evolved to ensure that CISA’s analysts can continue to provide situational awareness and support to agencies’ workloads in the cloud.

In this post, we provide guidance on how agencies can collect, enrich, and report logs to CISA in alignment with the telemetry cycles described in the NCPS Cloud Interface Reference Architecture (NCIRA) program documentation.

Reporting Patterns:

The NCPS telemetry cycle has three stages for passing information from agency cloud resources to CISA:

Stage A: Cloud Sensing – Generates the telemetry

Stage B: Agency Processing – Prepares the telemetry for communication

Stage C: Reporting to CISA – Includes the transmission of information and transition from the agency to CISA infrastructure and control

Each stage has attributes to capture the functions that take place within the stage. In the next section, we will discuss each of these stages and how to configure them in Google Cloud.

A. Cloud Sensing

The NCPS program identified different sensor positions (Gateway, Subnet, Interface, or Service) and different telemetry types to generate (Network Flow logs, Packet Captures, Application Logs, or Transaction logs). The cloud service model (IaaS, PaaS, or SaaS) will have an impact on the proper attributes and options available to configure when logging is enabled for the service.

We have gathered configuration guidance for how to enable logging for different Google Cloud services, sensor positions, and telemetry types and made them available in the NCPS for Google Cloud – Cloud Sensing document to achieve the requirements of this stage.

B. Agency Processing

In this stage, log data is filtered, enriched, aggregated, and transformed into an appropriate format for ingestion into CISA’s Cloud Log Aggregation Warehouse (CLAW). On Google Cloud, this is achieved using a Log Router to route desired logs from Cloud Logging to PubSub, and then using a Dataflow pipeline to process the logs from PubSub to further filter, aggregate, enrich, transform, and finally send the finalized logs to CLAW. An example diagram of this Agency Processing is shown below.

To design for scale, agencies can also deploy the PubSub and Dataflow pipeline in a separate project, and configure Log Routers in multiple other projects to export logs in a centralized model as shown below.
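The centralized model can be sketched as a small helper that produces one sink definition per source project, all pointing at the same Pub/Sub topic in the central project. This is only an illustration: the project IDs, topic name, sink name, and the `SinkSpec` type are hypothetical, and the code builds plain data rather than calling any Google Cloud API. The destination string follows the `pubsub.googleapis.com/projects/PROJECT_ID/topics/TOPIC_ID` form that Cloud Logging uses for Pub/Sub sinks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SinkSpec:
    """Hypothetical description of one Log Router sink (not a Google type)."""
    source_project: str
    name: str
    destination: str
    log_filter: str


def central_sink_specs(source_projects, central_project, topic, log_filter,
                       sink_name="ncps-export"):
    """Build one sink spec per source project, all targeting one central topic."""
    destination = (
        f"pubsub.googleapis.com/projects/{central_project}/topics/{topic}"
    )
    return [
        SinkSpec(project, sink_name, destination, log_filter)
        for project in source_projects
    ]


# Hypothetical project and topic names, for illustration only.
specs = central_sink_specs(
    source_projects=["agency-app-1", "agency-app-2"],
    central_project="agency-logging-hub",
    topic="ncps-logs",
    log_filter="resource.type:(http_load_balancer)",
)
for spec in specs:
    print(spec.source_project, "->", spec.destination)
```

Keeping the destination in one place means that adding a project to the export only requires adding its ID to the list.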

Agencies have options for operations to perform on the logs before sending to CISA in the next stage.

Data Filtering: Agency log data can be filtered at three levels:

Log Router – The Log Router collects logs from Cloud Logging and routes them to a destination sink such as BigQuery or PubSub. Each sink can specify inclusion or exclusion filters to control which logs are routed to it. Here is an example inclusion filter for logs from an external HTTPS load balancer with Cloud Armor for Web Application Firewall (WAF) protection:

resource.type:(http_load_balancer) AND jsonPayload.enforcedSecurityPolicy.name:(ca-policy)

PubSub Filter – When a Log Router sends logs to a PubSub topic, these logs can be further filtered by leveraging a filter on the PubSub subscription.

Dataflow Pipeline – Once logs flow to the Dataflow Pipeline, the pipeline can also filter out (exclude) log entries or specific attributes of a given log entry by leveraging a custom Beam ParDo transformation in the pipeline.
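As a rough illustration of the pipeline-level filtering described above, the sketch below shows the per-entry logic in plain Python, the kind of function a custom Beam ParDo could call for each element. It is an assumption-laden example, not the sample pipeline's code: the helper names and the `DROP_FIELDS` list are hypothetical, log entries are assumed to arrive as parsed JSON dictionaries, and the predicate only approximates the substring matching of the Log Router filter shown earlier.

```python
import copy

# Hypothetical attribute paths an agency might choose to withhold.
DROP_FIELDS = ("httpRequest.userAgent",)


def dig(entry, dotted_path, default=None):
    """Return the nested value at a dotted path, or default if absent."""
    node = entry
    for key in dotted_path.split("."):
        if not isinstance(node, dict) or key not in node:
            return default
        node = node[key]
    return node


def keep_entry(entry, policy_name="ca-policy"):
    """Substring checks on the resource type and Cloud Armor policy name."""
    resource_type = str(dig(entry, "resource.type", ""))
    policy = str(dig(entry, "jsonPayload.enforcedSecurityPolicy.name", ""))
    return "http_load_balancer" in resource_type and policy_name in policy


def strip_fields(entry, dotted_paths=DROP_FIELDS):
    """Return a copy of the entry with the listed attributes removed."""
    result = copy.deepcopy(entry)
    for path in dotted_paths:
        parent_path, _, leaf = path.rpartition(".")
        parent = dig(result, parent_path) if parent_path else result
        if isinstance(parent, dict):
            parent.pop(leaf, None)
    return result


def filter_entries(entries):
    """Drop non-matching entries, then strip withheld attributes."""
    return [strip_fields(entry) for entry in entries if keep_entry(entry)]
```

Working on a deep copy keeps the original entry intact, which matters if the same element also feeds another branch of the pipeline.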

Data Enrichment: Log data can also be enriched as the logs flow through Dataflow. An example of this would be using a Google Cloud API to get the load balancer host and port in order to add those items to each log line for that load balancer.
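A minimal sketch of this kind of enrichment follows. To stay self-contained it replaces the Google Cloud API call with a hypothetical in-memory lookup table keyed by forwarding rule name; the `enrichment`, `lb_host`, and `lb_port` field names are likewise assumptions made for illustration, and it is assumed that the entry carries a `forwarding_rule_name` resource label.

```python
def enrich(entry, backend_lookup):
    """Attach load balancer host and port to a log entry when known.

    backend_lookup is a hypothetical mapping that stands in for the result
    of a Google Cloud API call: forwarding rule name -> {"host", "port"}.
    """
    labels = entry.get("resource", {}).get("labels", {})
    info = backend_lookup.get(labels.get("forwarding_rule_name"))
    enriched = dict(entry)  # shallow copy so the input entry is not modified
    if info:
        enriched["enrichment"] = {
            "lb_host": info["host"],
            "lb_port": info["port"],
        }
    return enriched


# Hypothetical lookup data and log entry, for illustration only.
lookup = {"web-fr": {"host": "lb.example.gov", "port": 443}}
entry = {
    "resource": {
        "type": "http_load_balancer",
        "labels": {"forwarding_rule_name": "web-fr"},
    }
}
print(enrich(entry, lookup)["enrichment"])
```

In a real pipeline the lookup would typically be fetched once and cached rather than queried for every log line.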

Data Aggregation: Log data can be aggregated as the logs flow through Dataflow. An example of this would be joining two sources of data within a fixed window of time (such as every 5 minutes) to produce a single log file that is a logical concatenation of other logs within that window.
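The fixed-window idea can be illustrated without Dataflow. The sketch below assigns each record to a 5-minute window by truncating its timestamp, merges two sources, and concatenates the lines in each window in time order. It is a plain-Python stand-in for what windowing and grouping would do in a Beam pipeline, and it assumes each record is a `(timezone-aware datetime, line)` pair; the sample data is invented.

```python
from collections import defaultdict
from datetime import datetime, timezone

WINDOW_SECONDS = 300  # 5-minute fixed windows


def window_start(ts, size=WINDOW_SECONDS):
    """Truncate a timezone-aware timestamp to the start of its window."""
    epoch = int(ts.timestamp())
    return datetime.fromtimestamp(epoch - epoch % size, tz=timezone.utc)


def aggregate(*sources, size=WINDOW_SECONDS):
    """Merge sources and concatenate the lines that share a window."""
    windows = defaultdict(list)
    for source in sources:
        for ts, line in source:
            windows[window_start(ts, size)].append((ts, line))
    return {
        start: "\n".join(line for _, line in sorted(items))
        for start, items in sorted(windows.items())
    }


def utc(hour, minute):
    """Build a sample timestamp on an arbitrary day."""
    return datetime(2024, 1, 1, hour, minute, tzinfo=timezone.utc)


# Invented sample records from two sources.
lb_logs = [(utc(12, 1), "lb-a"), (utc(12, 7), "lb-b")]
waf_logs = [(utc(12, 3), "waf-a")]
combined = aggregate(lb_logs, waf_logs)
```

Here `combined` holds two windows: the one starting at 12:00 contains `lb-a` followed by `waf-a`, and the one starting at 12:05 contains `lb-b`.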

Data Transformation: Log data can also be transformed as the logs flow through Dataflow. An example of this would be converting log dates to specific formats and timezones, splitting log line properties to multiple properties, or conjoining log line properties to a single property.
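The three transformations mentioned here (normalizing a date, splitting one property into several, and joining several into one) might look like the following in plain Python. The flat record layout and the field names `remote`, `method`, and `url` are hypothetical and chosen only for the example; the output format CISA expects should be taken from the NCPS documentation rather than from this sketch.

```python
from datetime import datetime, timezone


def to_utc_iso(timestamp):
    """Convert an ISO 8601 timestamp with a UTC offset to UTC."""
    parsed = datetime.fromisoformat(timestamp)
    return parsed.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


def transform(record):
    """Normalize the timestamp, split host:port, and join method and URL."""
    host, _, port = record["remote"].rpartition(":")
    return {
        "timestamp": to_utc_iso(record["timestamp"]),
        "remote_host": host,
        "remote_port": int(port),
        "request": f'{record["method"]} {record["url"]}',
    }


# Invented sample record using the hypothetical field names above.
sample = {
    "timestamp": "2024-01-01T07:30:00-05:00",
    "remote": "203.0.113.7:443",
    "method": "GET",
    "url": "/index.html",
}
print(transform(sample))
```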

C. Reporting to CISA

This is the final stage of the telemetry cycle. Though data can be transferred in either a push or pull model, CISA recommends the push model because it lets agencies control the ingestion rate. Agencies will need to work with CISA to obtain the credentials for the receiving end. With those credentials, the Dataflow pipeline can create a sink that sends the final logs to CISA CLAW on a desired window interval (generally 15 minutes).

Optionally, agencies can add an additional sink to their pipeline to archive the logs exactly as they are sent to CISA CLAW. In most cases, this would be accomplished by creating a BigQuery sink in the Dataflow pipeline that stores the same Dataflow PCollection that is being sent to CISA CLAW.
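The point of archiving "exactly as sent" is that both sinks receive the same data. A minimal sketch of that idea: serialize each batch once and hand the identical payload to every sink. Both sinks here are hypothetical callables that simply collect what they receive; the actual CLAW delivery mechanism, its credentials, and the BigQuery write are not shown, and newline-delimited JSON is an assumed format.

```python
import json


def serialize(batch):
    """Encode a batch of records as newline-delimited JSON bytes."""
    lines = (json.dumps(record, sort_keys=True) for record in batch)
    return "\n".join(lines).encode("utf-8")


def report(batch, sinks):
    """Serialize once and deliver the same payload to every sink."""
    payload = serialize(batch)
    for sink in sinks:
        sink(payload)
    return payload


# Hypothetical stand-ins for the CLAW sink and the archive sink.
sent_to_claw = []
archived = []
batch = [{"id": 1, "status": 403}, {"id": 2, "status": 200}]
payload = report(batch, [sent_to_claw.append, archived.append])
```

Because serialization happens before the fan-out, the archive cannot drift from what was reported, even if a later change alters how records are encoded.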

Sample Pipeline

A full sample of what an agency’s Dataflow pipeline might look like is available here. A screenshot of that sample, showing the specific Dataflow code that reads logs from PubSub, enhances the logs, and sends the logs to CLAW, is shown below:

Conclusion

Google has a history of supporting government agency requirements such as FedRAMP, ITAR, NIST SP 800-53, DoD IL4 and IL5, and many others. Google is continuing this pattern to help Federal Civilian agencies comply with CISA’s guidance regarding TIC 3.0.

If you would like any additional information or help complying with this CISA guidance in your Google Cloud environment, please reach out to your Google Account Team, or to the Google TIC 3.0 technical team at [email protected].
