Amazon SageMaker now allows you to compare the performance of a new version of a model serving stack with the currently deployed version prior to a full production rollout using a deployment safety practice known as shadow testing. Shadow testing can help you identify potential configuration errors and performance issues before they impact end-users. With SageMaker, you don’t need to invest in building your shadow testing infrastructure, allowing you to focus on model development. SageMaker takes care of deploying the new version alongside the current version serving production requests, routing a portion of requests to the shadow version. You can then compare the performance of the two versions using metrics such as latency and error rate. This gives you greater confidence that production rollouts to SageMaker inference endpoints won’t cause performance regressions, and helps you avoid outages due to accidental misconfigurations.

In this post, we demonstrate this new SageMaker capability. The corresponding sample notebook is available in this GitHub repository.

Overview of solution

Your model serving infrastructure consists of the machine learning (ML) model, the serving container, and the compute instance. Let’s consider the following scenarios:

You’re considering promoting a new model that has been validated offline to production, but want to evaluate operational performance metrics, such as latency, error rate, and so on, before making this decision.

You’re considering changes to your serving infrastructure container, such as patching vulnerabilities or upgrading to newer versions, and want to assess the impact of these changes prior to promotion to production.

You’re considering changing your ML instance and want to evaluate how the new instance would perform with live inference requests.

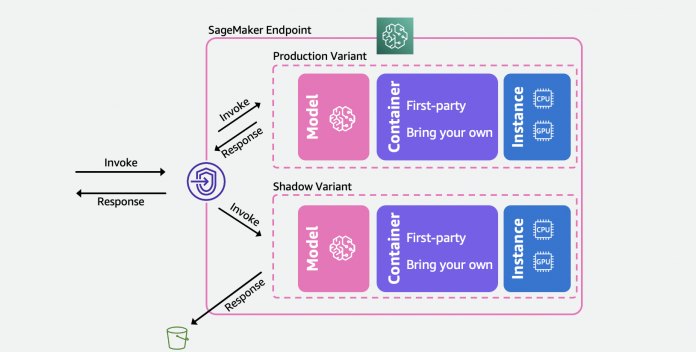

The following diagram illustrates our solution architecture.

For each of these scenarios, select a production variant you want to test against and SageMaker automatically deploys the new variant in shadow mode and routes a copy of the inference requests to it in real time within the same endpoint. Only the responses of the production variant are returned to the calling application. You can choose to discard or log the responses of the shadow variant for offline comparison. Optionally, you can monitor the variants through a built-in dashboard with a side-by-side comparison of the performance metrics. You can use this capability either through SageMaker inference update-endpoint APIs or through the SageMaker console.

Shadow variants build on top of the production variant capability in SageMaker inference endpoints. To reiterate, a production variant consists of the ML model, serving container, and ML instance. Because each variant is independent of others, you can have different models, containers, or instance types across variants. SageMaker lets you specify auto scaling policies on a per-variant basis so they can scale independently based on incoming load. SageMaker supports up to 10 production variants per endpoint. You can either configure a variant to receive a portion of the incoming traffic by setting variant weights, or specify the target variant in the incoming request. The response from the production variant is forwarded back to the invoker.

A shadow variant (new) has the same components as a production variant. A user-specified portion of the requests, known as the traffic sampling percentage, is forwarded to the shadow variant. You can choose to log the response of the shadow variant in Amazon Simple Storage Service (Amazon S3) or discard it.

Note that SageMaker supports a maximum of one shadow variant per endpoint. For an endpoint with a shadow variant, there can be a maximum of one production variant.

After you set up the production and shadow variants, you can monitor the invocation metrics for both production and shadow variants in Amazon CloudWatch under the AWS/SageMaker namespace. All updates to the SageMaker endpoint are orchestrated using blue/green deployments and occur without any loss in availability. Your endpoints will continue responding to production requests as you add, modify, or remove shadow variants.

You can use this capability in one of two ways:

Managed shadow testing using the SageMaker Console – You can leverage the console for a guided experience to manage the end-to-end journey of shadow testing. This lets you set up shadow tests for a predefined duration of time, monitor the progress through a live dashboard, clean up upon completion, and act on the results.

Self-service shadow testing using the SageMaker Inference APIs – If your deployment workflow already uses create/update/delete-endpoint APIs, you can continue using them to manage shadow variants.

In the following sections, we walk through each of these scenarios.

Scenario 1 – Managed shadow testing using the SageMaker Console

If you want SageMaker to manage the end-to-end workflow of creating, managing, and acting on the results of shadow tests, consider using the Shadow tests capability in the Inference section of the SageMaker Console. As stated earlier, this lets you set up shadow tests for a predefined duration of time, monitor the progress through a live dashboard, clean up upon completion, and act on the results. To learn more, visit the shadow tests section of our documentation for a step-by-step walkthrough of this capability.

Prerequisites

The models for production and shadow need to be created on SageMaker. For details, refer to the CreateModel API documentation.

Step 1 – Create a shadow test

Navigate to the Inference section of the left navigation panel of the SageMaker console and then choose Shadow tests. This will take you to a dashboard with all the scheduled, running, and completed shadow tests. Click ‘create a shadow test’. Enter a name for the test and choose next.

This will take you to the shadow test settings page. You can choose an existing IAM role or create one that has the AmazonSageMakerFullAccess IAM policy attached. Next, choose ‘Create a new endpoint’ and enter a name (xgb-prod-shadow-1). You can add one production and one shadow variant associated with this endpoint by clicking on ‘Add’ in the Variants section. You can select the models you have created in the ‘Add Model’ dialog box. This creates a production or shadow variant. Optionally, you can change the instance type and count associated with each variant.

All the traffic goes to the production variant, and it responds to invocation requests. You can control the portion of the requests that is routed to the shadow variant by changing the Traffic Sampling Percentage.

You can control the duration of the test from one hour to 30 days. If unspecified, it defaults to 7 days. After this period, the test is marked complete. If you are running a test on an existing endpoint, it will be rolled back to the state prior to starting the test upon completion.

You can optionally capture the requests and responses of the Shadow variant using the Data Capture options. If left unspecified, the responses of the shadow variant are discarded.

Step 2 – Monitor a shadow test

You can view the list of shadow tests by navigating to the Shadow Tests section under Inference. Click on the shadow test created in the previous step to view the details of a shadow test and monitor it while it is in progress or after it has completed.

The Metrics section provides a comparison of the key metrics and provides overlaid graphs between the production and shadow variants, along with descriptive statistics. You can compare invocation metrics such as ModelLatency and Invocation4xxErrors as well as instance metrics such as CPUUtilization and DiskUtilization.

Step 3 – Promote the Shadow variant to the new production variant

Upon comparing, you can either choose to promote the shadow variant to be the new production variant or remove the shadow variant. For both these options, select ‘Mark Complete’ on the top of the page. This presents you with an option to either promote or remove the shadow variant.

If you choose to promote, you will be taken to a deployment page, where you can confirm the variant settings prior to deployment. Prior to deployment, we recommend sizing your shadow variants to be able to handle 100% of the invocation traffic. If you are not using shadow testing to evaluate alternate instance types or sizes, you can choose ‘retain production variant settings’. Otherwise, you can choose ‘retain shadow variant settings’. If you choose this option, ensure that your traffic sampling is set at 100%. Alternatively, you can specify the instance type and count if you wish to override these settings.

Once you confirm the deployment, SageMaker will initiate an update to your endpoint to promote the shadow variant to the new production variant. As with all SageMaker updates, your endpoint will still be operational during the update.

Scenario 2 – Shadow testing using SageMaker inference APIs

This section covers how to use the existing SageMaker create/update/delete-endpoint APIs to deploy shadow variants.

For this example, we have two pre-trained XGBoost models that represent two different versions of the model. model.tar.gz is the model currently deployed in production. model2 is the newer model, and we want to test its performance in terms of operational metrics such as latency before deciding to use it in production. We deploy model2 as a shadow variant of model.tar.gz. Both pre-trained models are stored in the public S3 bucket s3://sagemaker-sample-files. We first download the models to our local compute instance and then upload them to S3.

The models in this example are used to predict the probability of a mobile customer leaving their current mobile operator. The dataset we use is publicly available and was mentioned in the book Discovering Knowledge in Data by Daniel T. Larose. These models were trained using the XGB Churn Prediction Notebook in SageMaker. You can also use your own pre-trained models, in which case you can skip downloading from s3://sagemaker-sample-files and copy your own models directly to model/ folder.

Step 1 – Create models

We upload the model files to our own S3 bucket and create two SageMaker models. See the following code:
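The upload and model creation step can be sketched with boto3 roughly as follows. This is a minimal sketch and not the notebook's own code: the helper names are hypothetical, and the bucket, key, model name, container image URI, and IAM role ARN are all placeholders that you would supply yourself.

```python
def build_create_model_request(model_name, image_uri, model_data_url, role_arn):
    """Return keyword arguments for the SageMaker CreateModel API."""
    return {
        "ModelName": model_name,
        "ExecutionRoleArn": role_arn,
        "PrimaryContainer": {
            "Image": image_uri,               # serving container (placeholder)
            "ModelDataUrl": model_data_url,   # S3 location of the model archive
        },
    }


def upload_and_create_model(local_path, bucket, key, model_name, image_uri, role_arn):
    """Upload a model archive to your own S3 bucket, then register it as a model."""
    import boto3  # imported lazily so the request builder works without AWS access

    boto3.client("s3").upload_file(local_path, bucket, key)
    request = build_create_model_request(
        model_name, image_uri, f"s3://{bucket}/{key}", role_arn
    )
    return boto3.client("sagemaker").create_model(**request)
```

You would call `upload_and_create_model` once for the production model archive and once for the shadow model archive, using a distinct model name for each.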

Step 2 – Deploy the two models as production and shadow variants to a real-time inference endpoint

We create an endpoint config with the production and shadow variants. The ProductionVariants and ShadowProductionVariants are of particular interest. Both these variants have ml.m5.xlarge instances with 4 vCPUs and 16 GiB of memory, and the initial instance count is set to 1. See the following code:
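A minimal sketch of such an endpoint config follows. The helper name, the variant names, and the shadow weight of 0.5 are illustrative assumptions. The sketch also assumes that the shadow variant's weight relative to the production variant's weight determines the share of requests copied to it, so verify the resulting sampling against the documentation before relying on it.

```python
def build_endpoint_config(config_name, prod_model, shadow_model,
                          instance_type="ml.m5.xlarge", shadow_weight=0.5):
    """Return keyword arguments for the SageMaker CreateEndpointConfig API."""

    def variant(name, model, weight):
        return {
            "VariantName": name,
            "ModelName": model,
            "InstanceType": instance_type,
            "InitialInstanceCount": 1,
            "InitialVariantWeight": weight,
        }

    return {
        "EndpointConfigName": config_name,
        # Exactly one production variant is allowed alongside a shadow variant.
        "ProductionVariants": [variant("production", prod_model, 1.0)],
        # Assumption: weight 0.5 vs. 1.0 copies about half of the requests.
        "ShadowProductionVariants": [variant("shadow", shadow_model, shadow_weight)],
    }
```

The resulting dictionary can be passed to `boto3.client("sagemaker").create_endpoint_config(**config)`.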

Lastly, we create the endpoint that hosts the production and shadow variants:
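Creating the endpoint from an existing endpoint config might look like the sketch below. The function name is hypothetical, and the SageMaker client is passed in as a parameter (for example `boto3.client("sagemaker")`) so the logic can be exercised without AWS access.

```python
def create_endpoint_and_wait(sm_client, endpoint_name, config_name):
    """Create the endpoint, block until it is in service, and return its status."""
    sm_client.create_endpoint(
        EndpointName=endpoint_name, EndpointConfigName=config_name
    )
    # The built-in waiter polls DescribeEndpoint until the status is InService.
    sm_client.get_waiter("endpoint_in_service").wait(EndpointName=endpoint_name)
    return sm_client.describe_endpoint(EndpointName=endpoint_name)["EndpointStatus"]
```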

Step 3 – Invoke the endpoint for testing

After the endpoint has been successfully created, you can begin invoking it. We send about 3,000 requests in a sequential way:
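Sending requests one after another could be sketched as follows. The function name is hypothetical, the CSV content type is an assumption that suits an XGBoost container fed comma-separated feature rows, and the runtime client is passed in (for example `boto3.client("sagemaker-runtime")`).

```python
def invoke_sequentially(runtime_client, endpoint_name, rows, content_type="text/csv"):
    """Send each row as one request and return the decoded responses.

    Only the production variant's response comes back to the caller; the
    shadow variant receives its copy of the request behind the scenes.
    """
    responses = []
    for row in rows:
        result = runtime_client.invoke_endpoint(
            EndpointName=endpoint_name, ContentType=content_type, Body=row
        )
        responses.append(result["Body"].read().decode("utf-8"))
    return responses
```

Here `rows` would be an iterable of CSV strings, one per test record.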

Step 4 – Compare metrics

Now that we have deployed both the production and shadow models, let’s compare the invocation metrics. For a list of invocation metrics available for comparison, refer to Monitor Amazon SageMaker with Amazon CloudWatch. Let’s start by comparing invocations between the production and shadow variants.

The InvocationsPerInstance metric refers to the number of invocations sent to the production variant. A fraction of these invocations, specified in the variant weight, are sent to the shadow variant. The invocation per instance is calculated by dividing the total number of invocations by the number of instances in a variant. As shown in the following charts, we can confirm that both the production and shadow variants are receiving invocation requests according to the weights specified in the endpoint config.

Next, let’s compare the model latency (ModelLatency metric) between the production and shadow variants. Model latency is the time taken by a model to respond as viewed from SageMaker. We can observe how the model latency of the shadow variant compares with the production variant without exposing end-users to the shadow variant.

We expect the overhead latency (OverheadLatency metric) to be comparable across production and shadow variants. Overhead latency is the interval measured from the time SageMaker receives the request until it returns a response to the client, minus the model latency.
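To pull these metrics programmatically rather than from charts, one option is the CloudWatch GetMetricStatistics API. The sketch below is an assumption about how you might structure that query: the helper names, the one-hour window, and the 60-second period are illustrative, and the CloudWatch client is passed in (for example `boto3.client("cloudwatch")`).

```python
from datetime import datetime, timedelta, timezone


def build_metric_query(metric_name, endpoint_name, variant_name,
                       minutes=60, stat="Average"):
    """Return keyword arguments for CloudWatch GetMetricStatistics."""
    end = datetime.now(timezone.utc)
    return {
        "Namespace": "AWS/SageMaker",
        "MetricName": metric_name,  # e.g. ModelLatency or OverheadLatency
        "Dimensions": [
            {"Name": "EndpointName", "Value": endpoint_name},
            {"Name": "VariantName", "Value": variant_name},
        ],
        "StartTime": end - timedelta(minutes=minutes),
        "EndTime": end,
        "Period": 60,  # one datapoint per minute
        "Statistics": [stat],
    }


def fetch_metric(cw_client, **query_args):
    """Return the metric's datapoints in chronological order."""
    response = cw_client.get_metric_statistics(**build_metric_query(**query_args))
    return sorted(response["Datapoints"], key=lambda point: point["Timestamp"])
```

Calling `fetch_metric` once with the production variant name and once with the shadow variant name gives two series you can compare side by side.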

Step 5 – Promote your shadow variant

To promote the shadow model to production, create a new endpoint configuration with the current ShadowProductionVariant as the new ProductionVariant and no ShadowProductionVariant, then update the endpoint to use it. This removes the current ProductionVariant and promotes the shadow variant to become the new production variant. As always, all SageMaker updates are orchestrated as blue/green deployments under the hood, and there is no loss of availability while performing the update.
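The promotion described above can be sketched as deriving a new endpoint config from the current one. The function names are hypothetical, and `current_config` is assumed to be a dictionary shaped like the arguments to CreateEndpointConfig (or the response of DescribeEndpointConfig), containing a `ShadowProductionVariants` list.

```python
def build_promotion_config(current_config, new_config_name):
    """Return a config in which the shadow variant is the only production variant."""
    promoted = dict(current_config["ShadowProductionVariants"][0])
    promoted["InitialVariantWeight"] = 1.0  # it now serves all production traffic
    return {
        "EndpointConfigName": new_config_name,
        "ProductionVariants": [promoted],
        # No ShadowProductionVariants key: the shadow variant is removed.
    }


def promote_shadow(sm_client, endpoint_name, current_config, new_config_name):
    """Create the promoted config and point the endpoint at it."""
    sm_client.create_endpoint_config(
        **build_promotion_config(current_config, new_config_name)
    )
    return sm_client.update_endpoint(
        EndpointName=endpoint_name, EndpointConfigName=new_config_name
    )
```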

Optionally, you can leverage Deployment Guardrails if you want to use all-at-once traffic shifting and auto rollbacks during your update.
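A deployment configuration for all-at-once shifting with automatic rollback might be assembled as in this sketch. The helper name, the CloudWatch alarm names, and the timing values are placeholders, and the zero wait interval is an assumption chosen for illustration.

```python
def build_deployment_config(alarm_names, termination_wait_seconds=300):
    """Return a DeploymentConfig for UpdateEndpoint (blue/green, all at once)."""
    return {
        "BlueGreenUpdatePolicy": {
            "TrafficRoutingConfiguration": {
                "Type": "ALL_AT_ONCE",
                "WaitIntervalInSeconds": 0,  # assumption: no extra wait between steps
            },
            # How long the old fleet is kept after traffic has shifted.
            "TerminationWaitInSeconds": termination_wait_seconds,
        },
        "AutoRollbackConfiguration": {
            # If any of these alarms fires during the update, SageMaker rolls back.
            "Alarms": [{"AlarmName": name} for name in alarm_names],
        },
    }
```

The result would be passed as the `DeploymentConfig` argument of `update_endpoint`, alongside `EndpointName` and `EndpointConfigName`.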

Step 6 – Clean Up

If you do not plan to use this endpoint further, delete it to avoid incurring additional charges, and clean up the other resources created in this post.
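Cleanup could be sketched as below. The function name is hypothetical, and the endpoint, endpoint config, and model names are whatever you chose when creating the resources. The SageMaker client is passed in (for example `boto3.client("sagemaker")`).

```python
def clean_up(sm_client, endpoint_name, config_names, model_names):
    """Delete the endpoint first, then its endpoint configs and models."""
    sm_client.delete_endpoint(EndpointName=endpoint_name)
    for name in config_names:
        sm_client.delete_endpoint_config(EndpointConfigName=name)
    for name in model_names:
        sm_client.delete_model(ModelName=name)
```

Model artifacts uploaded to your S3 bucket are not removed by these calls and need to be deleted separately if you no longer need them.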

Conclusion

In this post, we introduced a new capability of SageMaker inference to compare the performance of a new version of a model serving stack with the currently deployed version prior to a full production rollout, using a deployment safety practice known as shadow testing. We walked you through the advantages of using shadow variants and the methods to configure them, with an end-to-end example. To learn more about shadow variants, refer to the shadow tests documentation.

About the Authors

Raghu Ramesha is a Machine Learning Solutions Architect with the Amazon SageMaker Service team. He focuses on helping customers build, deploy, and migrate ML production workloads to SageMaker at scale. He specializes in machine learning, AI, and computer vision domains, and holds a master’s degree in Computer Science from UT Dallas. In his spare time, he enjoys traveling and photography.

Qingwei Li is a Machine Learning Specialist at Amazon Web Services. He received his Ph.D. in Operations Research after he broke his advisor’s research grant account and failed to deliver the Nobel Prize he promised. Currently he helps customers in the financial service and insurance industry build machine learning solutions on AWS. In his spare time, he likes reading and teaching.

Qiyun Zhao is a Senior Software Development Engineer with the Amazon SageMaker Inference Platform team. He is the lead developer of the Deployment Guardrails and Shadow Deployments, and he focuses on helping customers to manage ML workloads and deployments at scale with high availability. He also works on platform architecture evolutions for fast and secure ML jobs deployment and running ML online experiments at ease. In his spare time, he enjoys reading, gaming and traveling.

Tarun Sairam is a Senior Product Manager for Amazon SageMaker Inference. He is interested in learning about the latest trends in machine learning and helping customers leverage them. In his spare time, he enjoys biking, skiing, and playing tennis.
