Migrating a Transparent Data Encryption (TDE)-enabled Amazon Relational Database Service (Amazon RDS) for Oracle database instance from one AWS account to another is a common use case in scenarios when you acquire, sell, or merge operations, or if you’re reorganizing your AWS accounts and organizational structure.

TDE is a permanent and persistent option in RDS for Oracle option groups, and a DB snapshot that uses an option group with permanent or persistent options can’t be shared with another AWS account. For more information, see Sharing a DB snapshot. In this post, we discuss the steps and best practices to migrate a TDE-enabled RDS for Oracle DB instance from one AWS account to another with reduced downtime using Oracle Data Pump and AWS Database Migration Service (AWS DMS). For Amazon RDS for Oracle, AWS DMS doesn’t support TDE on the column level. Therefore, we also demonstrate the extra steps needed to migrate tables with TDE on the column level.

This migration approach involves three phases:

Initial data load – We use Oracle Data Pump to perform the initial data load in the TDE-enabled RDS for Oracle DB instance.

Ongoing replication – AWS DMS is a fully managed service that supports change data capture (CDC) for RDS for Oracle DB instances. You can use AWS DMS for ongoing replication and minimize the outage window.

Migrate tables containing columns encrypted with TDE during cutover – AWS DMS doesn’t support the replication of tables with columns that use TDE for Amazon RDS for Oracle. You need to refresh those tables again during cutover.

Solution overview

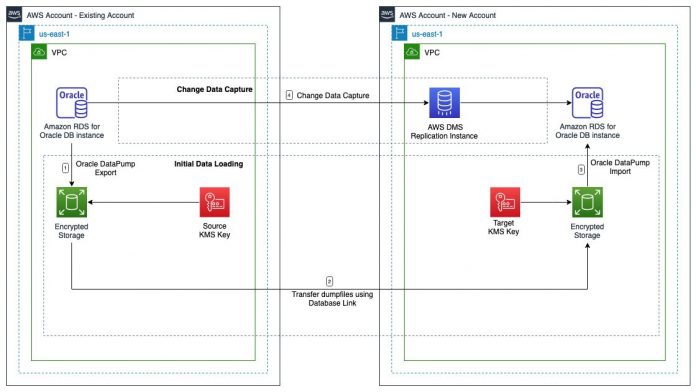

In this post, we use the Oracle Data Pump API for the initial data load and an AWS DMS CDC-only task for ongoing replication. The following diagram illustrates this architecture.

To implement this solution, we complete the following steps:

Prepare the source RDS for Oracle DB instance for migration.

Prepare the target RDS for Oracle DB instance for migration.

Capture the System Change Number (SCN).

Export the source database using Oracle Data Pump.

Transfer the Oracle Data Pump export dump file set to the target DB instance.

Load data in the target database using Oracle Data Pump.

Validate the target database.

Enable Amazon RDS backup retention and archive logging on the target DB instance.

Configure ongoing replication using AWS DMS.

Reload tables with TDE on the column level and perform cutover.

For this demonstration, the source AWS account is 6144xxxxxxxx and the target account is 2634xxxxxxxx. Both AWS accounts are part of the same AWS Organization.

The source RDS for Oracle DB instance is named rds-oracle-source-01 and the target is rds-oracle-target-01. Both DB instances are running on non-multitenant Oracle Database 19c version. We use AWS DMS for ongoing replication. In this post, we use DMS_SAMPLE as a sample schema for migration. The DMS_SAMPLE schema contains two tables with TDE-encrypted columns.

Prerequisites

Make sure you meet the following prerequisites:

Your source and target AWS accounts must have connectivity using either VPC peering or AWS Transit Gateway. For more information, see Create a VPC peering connection.

The VPC security group associated with the source and target RDS for Oracle DB instance should allow inbound connections from the AWS DMS replication instance. The security group associated with the replication instance should also allow all outbound connections. For more information, see Setting up a network for a replication instance.

Automatic backup is enabled on the source RDS for Oracle DB instance. For more information about enabling automatic backups, see Enabling automated backups.

To capture ongoing changes, AWS DMS requires that you enable minimal supplemental logging on your Oracle source database. In addition, you need to enable supplemental logging on each replicated table in the database.

You must have a bastion host with the SQL*Plus client installed and connectivity to the source and target RDS for Oracle instances.

Limitations

This solution has the following limitations:

The AWS DMS Binary Reader method supports TDE only for self-managed Oracle databases.

When replicating from Amazon RDS for Oracle, TDE is supported only with encrypted tablespace and using Oracle LogMiner.

AWS DMS supports CDC for RDS for Oracle database tables with primary keys. If a table doesn’t have a primary key enabled, add supplemental logging on all columns of the table to ensure that AWS DMS has enough data to update the target table.

During CDC, AWS DMS supports large object (LOB) data types only in tables that include a primary key.

If your tables use sequences, then the sequences will not be advanced on the target even though data is being copied from the source during ongoing replication with AWS DMS. During cutover, you need to update the NEXTVAL of the sequences in the target database after stopping replication from the source database.

For more information about the limitations of using an Oracle database as source and target using AWS DMS, see Limitations on using a Oracle database as a source for AWS DMS and Limitations on Oracle as a target for AWS Database Migration Service.

Prepare the source RDS for Oracle DB instance for migration

To prepare your source DB instance for migration, complete the following steps:

Create the DMS_USER user account in the source RDS for Oracle database. For more information on the privileges for the DMS_USER user account, see User account privileges required on an AWS-managed Oracle source for AWS DMS.

The source RDS for Oracle DB instance must have sufficient storage to keep the export dump files and archived logs created during database export, as well as during dump file transfer and loading into the destination database instance. We recommend increasing the storage according to the estimated export dump files size and archive log generation. Amazon RDS auto scaling can’t completely prevent storage-full situations for large data loads. This is because further storage modifications can’t be made for either 6 hours or until storage optimization has completed on the instance, whichever is longer. For more information on storage autoscaling limitations, see Limitations.

You can also estimate the size of the Oracle Data Pump export dump file set using the DBMS_DATAPUMP procedure within a SQL*Plus session:

To capture ongoing changes, AWS DMS requires that you enable minimal supplemental logging on your source DB instance:

Enable supplemental logging on each replicated table in the source database:

If a primary key exists, add supplemental logging on the primary key columns on the database:

If the table doesn’t have a primary key, or if that key is disabled or invalid, enable supplemental logging on all columns of the table. Use the following SQL statement to generate the statements to enable the supplemental logging on all the columns of specified tables. We recommend reviewing the SQL statements before running them in the source database.

Make sure that ARCHIVELOG mode is enabled on the source DB instance to run the AWS DMS CDC task:

The RDS for Oracle DB instance generates archived redo logs when the backup retention period of the DB instance is greater than zero. Because the archived redo logs are retained on your DB instance, ensure that DB instance has enough allocated storage for the retained logs. We recommend running the following SQL statement to analyze archive log generation trends for storage estimation. Use the Amazon CloudWatch metric FreeStorageSpace to calculate the storage available to the RDS for Oracle DB instance. For more information on instance-level metrics for Amazon RDS, see Amazon CloudWatch metrics for Amazon RDS. If the DB instance doesn’t have sufficient storage, increase the storage. For more information, see Increasing DB instance storage capacity.

When the archived log retention period expires, Amazon RDS for Oracle removes the archived redo logs from your DB instance. For more information on retaining archived logs, see Retaining archived redo logs.

In this post, we estimate that we would be able to complete the export of the source database, the import in the target database, and starting the AWS DMS CDC task in 4 hours. Define archivelog retention hours to 4 hours using following SQL statement:

Prepare the target RDS for Oracle DB instance for migration

To prepare the target DB instance, complete the following steps:

It is recommended to create the target DB instance with a larger instance class to get more compute to speed up the initial data loading process.

Keep Multi-AZ disabled for the target DB instance during the initial data load.

Create the DMS_USER user account in the target RDS for Oracle database. For more information on the privileges for the DMS_USER user account, see User account privileges required for using Oracle as a target.

The target RDS for Oracle DB instance should have storage to accommodate the export dump files and database tablespaces.

Create the required tablespaces in the target database. Run the following script in the source database to get tablespace details. Modify the size of the Oracle-provided tablespaces as per the size of the source database and create any additional tablespaces required for the application.

Create database roles in the target database. Use the following script in the source database to generate scripts. Verify the generated SQL statements and run them in the target database.

Create database profiles in the target database. Use the following script in the source database to generate scripts. Verify the generated SQL statements and run them in the target database.

Create public synonyms in the target database. Use the following script in the source database to generate scripts. Verify the generated SQL statements and run them in the target database.

Verify that the source and target DB instance have the same parameter configuration. You should review the RDS DB parameter groups and run the following query in both the source and target database:

Temporarily disable automated backups of the target RDS for Oracle DB instance to speed up the Oracle Data Pump Import jobs. You should configure the backup retention period to 0 to disable automated backups. For more information on disabling automated backups, see Disabling automated backups. You should also set archivelog retention to 0 on the target DB instance to speed up the Oracle Data Pump import process:

Modify the job_queue_processes parameter to 0 in the RDS DB parameter group of the target RDS for Oracle DB instance to prevent any database jobs from running during the migration process. For more details on DB parameter group modification, see Modifying parameters in a DB parameter group.

Capture the SCN

Capture the SCN from the source database to start the Oracle Data Pump export. We recommend stopping the application to capture the SCN and then starting the application again. Complete the following steps:

Shut down your application gracefully.

Wait until all the database sessions from application users are ended.

Run the following command to help identify users or application sessions still connected to the database:

Perform a few redo log switches in the database and force checkpoint:

To perform a consistent export, make sure that you use the flashback_scn parameter during Oracle Data Pump export. Run the following commands on the source database to get the current SCN. At this stage, the source database should not have any open transactions.

We use this SCN in a later step to start the AWS DMS CDC task.

Start the application now.

Export the source database using Oracle Data Pump

Start the export of the source database using the Oracle Data Pump PL/SQL API DBMS_DATAPUMP. For more information on the Oracle Data Pump API, see Using the Oracle Data Pump API.

Get an object count from the source database before starting the export:

Export the DMS_SAMPLE schema using DBMS_DATAPUMP:

You can view the contents of the export log by using the rdsadmin.rds_file_util.read_text_file procedure:

When the export is complete, list the export dump files in DATA_PUMP_DIR with their size. It helps at later steps to make sure that all the export dump files are transferred correctly to the target RDS for Oracle DB instance.

Transfer the Oracle Data Pump export dump file set to the target DB instance

Complete the steps in this section only after verifying that the export is complete on the source DB instance.

Create a database link in the source database pointing to the target database. The following command creates a database link to_target_rds that connects to the target database:

Transfer the dump files from the source to target database:

List the export dump files in DATA_PUMP_DIR with their size in the target RDS for Oracle DB instance and verify the size by matching the file sizes obtained from the source database in step 4 of the previous section.

Load data in the target database using Oracle Data Pump

Perform a data load into the target database using the Oracle Data Pump API DBMS_DATAPUMP. Use the following code snippets to load data in the target database.

Import the data by calling the DBMS_DATAPUMP procedures:

Data Pump jobs are started asynchronously. You can view the contents of the import log by using the rdsadmin.rds_file_util.read_text_file procedure:

Validate the target database

Complete the following steps to validate the target database:

Verify the objects count in the target database and compare with the objects count captured earlier from the source database:

List invalid objects of the DMS_SAMPLE schema:

Recompile the DMS_SAMPLE schema using following SQL statement:

Generate SQL statements to recompile the private synonyms, public synonyms, packages, and package body. We recommend reviewing the generated SQL statements before running them in the target database.

You can verify the current errors on all the objects of the schema using the following SQL statement:

Enable Amazon RDS backup retention and archive logging on the target DB instance and Multi-AZ

Enable Amazon RDS automatic backup and archive logging on the target DB instance after data loading in the target database.

Enable automated backups of your target RDS for Oracle DB instance by setting the backup retention period to a positive non-zero value. For more information, see Enabling automated backups.

Enable archive logging on the target DB instance:

We recommend defining the archivelog retention hours parameter based on your application workload.

You can convert a Single-AZ RDS for Oracle DB instance to a Multi-AZ instance. For more information on best practices for converting to Multi-AZ instances, see Best practices for converting a Single-AZ Amazon RDS instance to a Multi-AZ instance.

Configure ongoing replication using AWS DMS

Prepare the source and target RDS for Oracle databases for ongoing replication using AWS DMS.

Configure VPC security groups for the AWS DMS replication instance in the target AWS account

Complete the following steps to configure your VPC security groups:

Create a VPC security group called aws-dms-sg without any inbound rules in the target AWS account.

Update the inbound rules of the security group that is associated with the target RDS for Oracle DB instance:

For Type, choose Oracle-RDS.

For Port, use the DB port.

For Source, use the security group ID of the aws-dms-sg security group.

For Description, enter Rule to connect AWS DMS Replication Instance and target DB Instance.

Update the inbound rules of the security group that is associated with the source RDS DB instance in the source AWS account:

For Type, choose Oracle-RDS.

For Port, use the DB port.

For Source, use the security group ID of the aws-dms-sg security group that you created in the target AWS account with the target AWS account number; for example, 2634xxxxxxxx/aws-dms-sg.

For Description, enter Rule to connect AWS DMS Replication Instance and source DB Instance.

Disable all triggers and referential integrity constraints

Because AWS DMS doesn’t support TDE on the column level for Amazon RDS for Oracle, you must disable triggers and referential integrity constraints of the user that you intend to replicate in the target database to avoid data inconsistency during the CDC phase. You generate SQL statements to disable and enable the triggers and referential integrity constraints of the DMS_SAMPLE user using the following sample script. Before running, you should test and change it according to your requirements.

Run the following statement in the target database to generate the SQL statements to enable triggers. Don’t run the generated SQL statements now. Save this output to enable triggers again during cutover.

Run the following statement in the target database to generate the SQL statements to enable referential integrity constraints. Don’t run the generated SQL statements now. Save this output to use later during cutover.

Run the following statement in the target database to generate the SQL statements to disable triggers. Run the generated SQL statements in the target database.

Run the following statement in the target database to generate the SQL statements to disable referential integrity constraints. Run the generated SQL statements in the target database.

Create an AWS DMS replication instance

Create an AWS DMS replication instance called dms-repl-instance-oracle-01 in the target AWS account using the VPC security group aws-dms-sg created earlier. For more information on replication instance sizing, see Choosing the right AWS DMS replication instance for your migration.

Create AWS DMS source and target endpoints

Complete the following steps:

Create an AWS DMS source endpoint called source-ep in the target AWS account for the source database.

Create an AWS DMS target endpoint called target-ep for the target Oracle database.

Use the source and target endpoints to test connectivity with the source and target database.

Create an AWS DMS migration task

Create an AWS DMS database migration task in the target AWS account to specify the schema for ongoing replication. The task details depend on your migration project. For more information, see Working with AWS DMS tasks. Note the following key steps while creating the AWS DMS database migration task in this post:

For Task identifier, enter migration-task-cdc-01.

For Replication instance, choose dms-repl-instance-oracle-01.

For Source database endpoint, choose source-ep.

For Target database endpoint, choose target-ep.

For Migration type, choose Replicate data changes only (CDC).

For CDC start mode for source transactions, select Enable custom CDC start mode.

For Custom CDC start point for source transactions, choose Specify a log sequence number and specify the SCN for System change number captured from the source database. In this post, we specify 3357030. We provided detailed guidance earlier in this post on how to acquire the SCN.

For Custom CDC stop mode for source transactions, choose Disable custom CDC stop mode.

For Target table preparation mode, leave as default.

For LOB column settings, choose Full LOB mode and for LOB chunk size (kb), leave as default. For more information on LOB modes, see Setting LOB support for source databases in an AWS DMS task.

For Validation, choose Turn on.

For Task logs, choose Turn on CloudWatch logs.

AWS DMS task logging uses CloudWatch to log information during the migration process. With task logging settings, you can change which component activities are logged and the severity level of information that is written to the log. We use the default settings in this post.

In the Advanced task settings section, under Control table settings, specify DMS_USER for Create control table in target using schema.

The AWS DMS control tables such as awsdms_apply_exceptions and awsdms_validation_failures_v1 will reside in the schema DMS_USER of the target database. If you don’t enter any information for this option, then AWS DMS uses the target schema for control tables.

For Table mappings, specify the source database schema under Selection rules. In this post, we migrate all tables from the DMS_SAMPLE schema.

When you use Oracle as a target, AWS DMS migrates the data to the schema owned by the target endpoint’s user.

For example, suppose that you’re migrating a schema named DMS_SAMPLE to an Oracle target endpoint, and the target endpoint user name is DMS_USER. AWS DMS connects to the Oracle target as DMS_USER and populates the DMS_USER schema with database objects from the source DMS_SAMPLE.

To override this behavior, provide a transformation rule. For example, to migrate the source DMS_SAMPLE schema objects to the DMS_SAMPLE schema at the target endpoint, use the following transformation.

A premigration assessment warns you of potential migration issues before starting your migration task. Premigration assessments generally have minimal impact on your databases to run. We run the premigration assessment as shown in the following screenshot. For more information on premigration assessments for a task, see Enabling and working with premigration assessments for a task.

For Migration task startup configuration, choose Manually Later.

Choose Create task.

AWS DMS creates a premigration assessment report. We recommend reviewing the findings and taking corrective actions as needed. You can also consider creating another premigration assessment after fixing the earlier issues. It is expected to have some issues with the tables with TDE on the column level.

Run the task.

Monitor replication

You can monitor your AWS DMS task using CloudWatch metrics. AWS DMS provides statistics for replication tasks, including incoming and committed changes, and latency between the replication host and both the source and target databases. For a complete list of the available metrics, see Replication task metrics. You can determine the total latency, or replica lag, for a task by combining the CDCLatencySource and CDCLatencyTarget metric values.

Validate the data

You use AWS DMS data validation to make sure all the preexisting data in the tables is migrated accurately. It also compares the incremental changes for a CDC-enabled task as they occur. During data validation, AWS DMS compares each row in the source with its corresponding row at the target, verifies the rows contain the same data, and reports any mismatches. See AWS DMS data validation for more details.

Perform cutover

You can plan the cutover of the application to the target RDS for Oracle database when the replication lag is minimal. We recommend planning your cutover during a low traffic window. The following are some of key cutover steps:

Stop your application.

Make sure that CDC latency is 0. You can use the CDCLatencySource and CDCLatencyTarget metrics to monitor the replication task.

Make sure that there are no data validation errors in AWS DMS.

Stop the AWS DMS database migration task. For more information, see Stop Replication Task.

AWS DMS does not support TDE on the column level for Amazon RDS for Oracle. You should reload all those unsupported tables again. In this post, the PLAYER and PERSON tables have TDE on the column level.

Take the export for the tables with encrypted columns from the source database using DMS_DATAPUMP:

You can view the contents of the import log by using the rdsadmin.rds_file_util.read_text_file procedure:

Transfer the export dump file set to the target database as demonstrated in earlier steps. Import the encrypted tables into the target database DBMS_DATAPUMP with the TABLE_EXISTS_ACTION parameter set to TRUNCATE:

Forward all the sequences in the target using the source value. AWS DMS doesn’t replicate incremental sequence numbers during CDC from the source database; you need to generate the latest sequence value from the source sequences and apply it on the target database to avoid sequence value inconsistencies. Run the following query in the source database to generate the DDL to reset sequences in the target database. Run the generated SQL statements in the target database to update sequences.

You disabled triggers and referential integrity constraints in the target database before starting the AWS DMS CDC-only task. Enable the triggers and referential integrity constraints in the target database now. Use the SQL statements generated in the previous step to enable the triggers and referential integrity constraints in the target database. We recommend verifying the SQL statements before running them in the target database.

Modify job_queue_processes in the parameter group associated with the target DB instance with the appropriate value as configured in the source DB instance.

Update the application configuration or DNS CNAME records with the target RDS for Oracle DB instance endpoint.

After the application has successfully connected to the target DB instances, you may decide to temporarily stop or delete the Amazon RDS resources in the source AWS account. To stop the RDS DB instance temporarily, refer to Stopping an Amazon RDS DB instance temporarily and to delete the RDS DB instance, refer to Deleting a DB instance.

Start your application.

Clean up

As part of this migration, you deployed AWS DMS-related resources in your AWS account to replicate data. These resources will incur costs as long as they are in use. Be sure to remove any resources you no longer need.

Conclusion

In this post, we discussed the steps involved in migrating a TDE-enabled RDS for Oracle DB instance from one AWS account to another. We also used AWS DMS for ongoing replication to reduce the downtime. With the complexity involved in database migrations, we highly recommend testing the migration steps in non-production environments prior to making changes in production.

We welcome your feedback. If you have any questions or suggestions, leave them in the comments section.

About the Authors

Javeed Mohammed is a Database Specialist Solutions Architect with Amazon Web Services. He works with the Amazon RDS team, focusing on commercial database engines like Oracle. He enjoys working with customers to help design, deploy, and optimize relational database workloads on AWS Cloud.

Alok Srivastava is a Senior Database Consultant at AWS. He works with AWS customers and partners in their journey to the AWS Cloud with a focus on database migration and modernization programs.

Read MoreAWS Database Blog