Many websites and mobile apps use Google Analytics to measure and analyze the behavior of visitors on their platforms. GA4 is the newest version of Google Analytics. It provides a number of new capabilities, such as an export to BigQuery (BQ) that’s available to all GA users, and a way to stream data to BQ in near real time. This post provides an overview of four ways to ingest events for Discovery solutions using GA4 integrations: Google Tag Manager (GTM) GA4, GA4 BQ Historical Import, GA4 BQ Streaming, and Cloud Functions for Firebase.

Events supported by GA4

Cloud Retail can use the following GA4 event types:

add_to_cart (add-to-cart in Retail)

purchase (purchase-complete in Retail)

view_item (detail-page-view in Retail)

view_item_list (maps to the Cloud Retail search event if combined with a search query)

What about home page views? They need separate handling; see the Cloud Retail Home Page Views section at the end of this post.
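The mapping above can be sketched as a small lookup. This is a minimal illustration only: the class and method names are hypothetical, and it assumes the search query has already been extracted from the GA4 event by the caller.

```java
import java.util.Optional;

/** Hypothetical helper: maps GA4 event names to Cloud Retail event types. */
final class Ga4EventMapper {
    private Ga4EventMapper() {}

    /**
     * Returns the Cloud Retail event type for a GA4 event name, or empty if the
     * event is not one of the supported types. searchQuery may be null or blank
     * when the GA4 event carries no search query.
     */
    static Optional<String> toRetailEventType(String ga4EventName, String searchQuery) {
        if (ga4EventName == null) {
            return Optional.empty();
        }
        switch (ga4EventName) {
            case "add_to_cart":
                return Optional.of("add-to-cart");
            case "purchase":
                return Optional.of("purchase-complete");
            case "view_item":
                return Optional.of("detail-page-view");
            case "view_item_list": {
                // Only treated as a search event when a search query is present.
                boolean hasQuery = searchQuery != null && !searchQuery.trim().isEmpty();
                return hasQuery ? Optional.of("search") : Optional.empty();
            }
            default:
                return Optional.empty();
        }
    }
}
```

Returning an empty Optional for unsupported events lets the caller skip them explicitly instead of sending an event type that Cloud Retail would reject.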

GTM GA4 [Availability: GA]

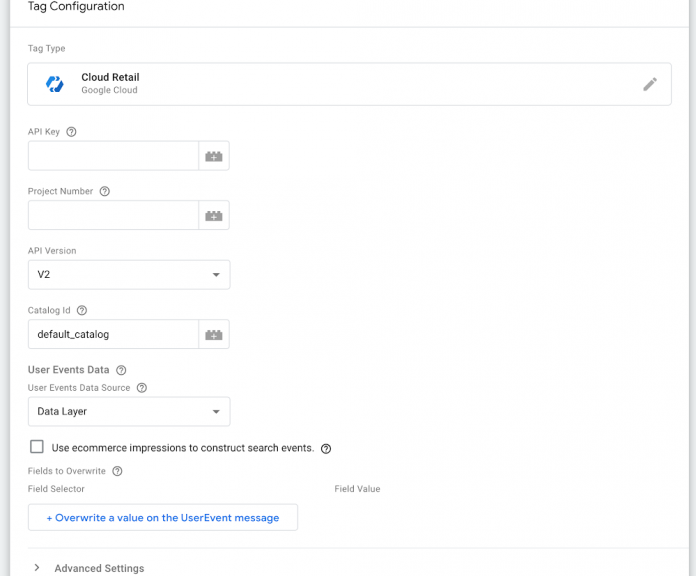

Many people use Google Tag Manager to configure and deploy measurement tags on their websites. Tag Manager provides an easy way to manage and test multiple tags without needing to do many server-side code changes to a site. Cloud Retail has its own first-party tag called ‘Cloud Retail’. If you are sending data via the data_layer to Google Analytics (see the Event reference), the Cloud Retail tag can re-use that same data and send it to the Cloud Retail API.

All you have to do to use the Cloud Retail tag with GA4 is:

Set up a few key parameters (API key, project number)

Choose the ‘Data Layer’ as your desired source for user events

Set up triggers for the Cloud Retail tag – you can re-use your existing GA4 triggers.

More details: https://cloud.google.com/retail/recommendations-ai/docs/record-events#gtm.

Who is this for: Customers already using GTM, especially those with GA4. Additionally, if you use the Cloud Retail tag you can attach extra dimensions, such as a search query and attribution token, that might not be available if you only use a server-side GA integration (described below).

Who is this not for: Customers not using GTM or not wanting to modify their clients. GTM also does not support mobile events – you would have to ingest these through another integration.

GA4 BQ Historical Import [Availability: Public Preview]

One of the exciting new features introduced with GA4 is the ability to export user events to BigQuery, which is available to all GA accounts (previously this was restricted to GA360 users). Unlike the GTM approach above, GA4 BQ contains both mobile and web events and requires no client-side changes. To take advantage of this feature, Cloud Retail natively supports importing historical user events via GA4 BQ.

To try this feature out you will need to reach out to your support team to allowlist your project for it. After being allowlisted, the following steps are required:

Enable the Dataflow API (this is used to transform the data from GA4 to Cloud Retail).

Grant the following permissions to service accounts used by Cloud Retail:

service-{Project_Number}@google-cloud-sa-retail.iam.gserviceaccount.com has the iam.serviceAccounts.actAs permission on the Compute Engine default service account

If the BQ dataset is in a different project than your Retail solution, you will need to grant both the Retail and Compute Engine service accounts the BigQuery Admin role.

Call the Retail API import with data_schema='ga4', or go to the Retail console Import tab and choose GA4 as your BQ user events schema.

The GA4 BQ export shards each day into a separate table, so you will need to import each day using a separate import call.
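Because each day needs its own import call, it can help to generate the list of daily tables up front. The sketch below assumes the standard GA4 export naming, where daily tables are called events_YYYYMMDD; the class name, project ID, and dataset name are hypothetical placeholders, and issuing the import call for each table is left to the caller.

```java
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper: lists the daily GA4 export tables for a date range. */
final class Ga4DailyTables {
    // BASIC_ISO_DATE renders a LocalDate as yyyyMMdd, e.g. 20230131.
    private static final DateTimeFormatter SUFFIX = DateTimeFormatter.BASIC_ISO_DATE;

    private Ga4DailyTables() {}

    /** Returns one fully qualified table ID per day, from first to last inclusive. */
    static List<String> tableIds(String project, String dataset, LocalDate first, LocalDate last) {
        List<String> ids = new ArrayList<>();
        for (LocalDate day = first; !day.isAfter(last); day = day.plusDays(1)) {
            ids.add(project + "." + dataset + ".events_" + day.format(SUFFIX));
        }
        return ids;
    }
}
```

Each returned table ID would then be passed to its own import request, either through the API or through the console Import tab.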

Caveats: GA4 BQ limits what you can do at no charge, such as the number of events that can be exported. Dataflow also incurs costs for the end user; see Dataflow pricing. This cost is amortized by subsequent Retail model training and prediction costs.

Who is this for: Any customer wanting to import their historical events.

Who is this not for: This solution can work for most people, but it only has the ability to import events in bulk. You will need to use GTM or GA4 BQ Streaming to get real-time events. GA4 also does not allow backfilling data into BQ, so in order to have historical data, you have to have the BQ export set up prior to integrating with Cloud Retail.

GA4 BQ Streaming [Availability: Public Preview]

In addition to supporting a batch export to BQ, GA4 also supports streaming events into BQ in near real-time. Unlike the GTM approach above, GA4 BQ contains both mobile and web events and requires no client-side changes. To take advantage of this capability, we have architected a system on top of Cloud Functions and Cloud Scheduler that periodically polls data from GA4 BQ and streams it to the Cloud Retail API.

Cloud Scheduler triggers a Cloud Function every minute to pull the most recent data and send it to Cloud Retail. Detailed instructions are in our customer support GitHub.

By default, for home page view events, the import will ingest page views with a url that has no suffix after “/” e.g. myhomepage.com or myhomepage.com/. To ingest home page views that do not match against these, you will need to reach out to your support team.

To try this feature out you will currently need to reach out to your support team to allowlist your project for it.

Caveat: Because events might arrive out of order and no timestamp records when the data was written, the function might pull duplicate events into Cloud Retail (but our models can handle this). GA4 BQ streaming also provides no SLOs on end-to-end latency. That said, our own empirical analysis suggests latency of less than 1 minute.
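Although the models tolerate duplicates, a polling function could optionally drop repeats before sending them. The following is a minimal sketch of that idea, not part of the published solution: it assumes an event can be identified by the GA4 export columns user_pseudo_id, event_timestamp, and event_name, and it keeps only a bounded window of recently seen keys in memory. The class name and the capacity are hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical helper: remembers recently seen events in a bounded window. */
final class EventDeduplicator {
    private final Map<String, Boolean> seen;

    EventDeduplicator(final int capacity) {
        // An access-ordered LinkedHashMap evicts the least recently seen key
        // once the window holds more than `capacity` entries.
        this.seen = new LinkedHashMap<String, Boolean>(16, 0.75f, true) {
            @Override
            protected boolean removeEldestEntry(Map.Entry<String, Boolean> eldest) {
                return size() > capacity;
            }
        };
    }

    /** Returns true the first time a key is offered, false for repeats still in the window. */
    boolean firstSeen(String userPseudoId, long eventTimestampMicros, String eventName) {
        String key = userPseudoId + "|" + eventTimestampMicros + "|" + eventName;
        return seen.put(key, Boolean.TRUE) == null;
    }
}
```

Note that in-memory state only survives while a function instance stays warm, so this reduces duplicates rather than eliminating them.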

Who is this for: Customers who do not use GTM and don’t want to do client-side changes. This is also ideal for customers who have mobile apps, given that GTM won’t cover those.

Who is this not for: Customers who need SLO-backed real-time events and don’t want to deploy a server-side solution – GTM is a better option in this case.

Cloud Functions for Firebase [Availability: Public Preview]

From a functional point of view, there are situations where minimizing the time between when GA4 captures an event and when that event enriches the Retail AI models can be key. This is especially true for native apps, where, for many retailers, app traffic represents a high percentage of overall traffic.

To help guide you through this section, it is helpful to understand how the data will flow through the system:

The native Android or iOS app generates an analytics event

The Cloud Functions for Firebase trigger for the analytics event is activated.

The Cloud Function configured to handle the event begins processing, and sends data to the Cloud Retail API

Cloud Retail receives the data and processes it for consumption in models and serving systems.

In this section we are going to show an implementation strategy based on the principles of event driven architecture. The key idea is to extract a GA4 event generated on the mobile app and produce a Recommendations AI event to inject into the Cloud Retail system in real time. This will be achieved through the following 5 steps:

Step 1: Adding Google Analytics for Firebase SDK

Step 2: Sending a Google Analytics event from your app, and marking it as a conversion.

Step 3: Configuring a Cloud Functions for Firebase trigger to send Analytics events to Google Cloud

Step 4: Using a Google Cloud Function to process the Analytics Events

Step 5: Deploying the Cloud Functions for Firebase

Let’s look at the setup steps in more detail.

Configuring Firebase to send Analytics events to Google Cloud

Before proceeding with this step, the app must already log the event via the Google Analytics for Firebase SDK.

Once we have instrumented Firebase to log Analytics events, we can configure Firebase to send those events in real-time by going to the Firebase console and marking all relevant events as conversions, as shown in the following figure. Events not marked as a conversion are not sent in real time.

The name of the event is very important because we will need it later for the configuration of the component that should capture it.

At this point, Firebase will generate an event with a JSON payload with the appropriate user and the event dimensions as we will see later.

Using a Google Cloud Function to process the Analytics Events

Now it’s time to configure the Cloud Function that will process the event. Cloud Functions is a scalable, pay-as-you-go function as a service (FaaS) and it’s the easiest way to process the Analytics events.

Under the hood, the Firebase project that’s linked to your GA4 property is also a Google Cloud project. They share the same project ID, and you can open the same project in both the Firebase console and the Google Cloud Console. The Cloud Function will be deployed there. Cloud Functions can be triggered by different event types. In this case, a Google Analytics for Firebase event is the type of event we need. So, for every conversion event generated by Google Analytics we will trigger our Cloud Function which will receive and process that event data.

In Java, a raw Cloud Function implementation can be used, as you can see in the following code skeleton:

Now, it’s time to write the code to generate a Cloud Retail event. Before doing so, you have to take into account the different elements you will see in the JSON payload. One caveat of the current Google Analytics for Firebase trigger is that it’s hard to get the product items information. That information is key for generating Cloud Retail events, because the product item is mandatory for events like add-to-cart or purchase.

Since we cannot directly access the product items information, it’s necessary to add the product item ad-hoc. One of the recommended workarounds is the Analytics parameters. For the event types that only require one product, the item_id could be used. For the purchase event, one strategy could be to use multiple instances of the custom property purchased_items_i, where i represents an index from 1 to N, providing the product identifiers and also the quantity of the products in the cart.
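The purchased_items_i workaround can be sketched as follows. The source does not specify how each parameter value encodes the product identifier and quantity, so this example assumes a hypothetical "productId:quantity" format; the class names are also illustrative, and the parameters are assumed to have already been read from the event payload into a map.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

/** Hypothetical helper: reads purchased_items_1..N from Analytics event parameters. */
final class PurchasedItems {
    /** A product identifier with the quantity purchased. */
    static final class Item {
        final String productId;
        final int quantity;

        Item(String productId, int quantity) {
            this.productId = productId;
            this.quantity = quantity;
        }
    }

    private PurchasedItems() {}

    /** Parses consecutive purchased_items_i values, stopping at the first missing index. */
    static List<Item> parse(Map<String, String> params) {
        List<Item> items = new ArrayList<>();
        for (int i = 1; ; i++) {
            String raw = params.get("purchased_items_" + i);
            if (raw == null) {
                break;
            }
            // Assumed format: "<productId>:<quantity>", split on the last colon.
            int sep = raw.lastIndexOf(':');
            if (sep <= 0) {
                throw new IllegalArgumentException("Expected productId:quantity but got: " + raw);
            }
            String productId = raw.substring(0, sep);
            int quantity = Integer.parseInt(raw.substring(sep + 1).trim());
            items.add(new Item(productId, quantity));
        }
        return items;
    }
}
```

Each parsed item would then become one product detail on the purchase-complete event sent to Cloud Retail.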

Now, we will demonstrate how to use Java to send events to the Cloud Retail API. You can find a full example in this GitHub repo, but the key aspects to take into account are the following:

To use the Cloud Retail API, you need the right credentials. You need to build a GoogleCredentials object from a credential in its JSON representation, using the key of a service account with the right permissions (retail editor and token creator) to interact with the model. As you can see in the full example, the Google OAuth Library is needed.

A UserEvent object has to be created to be sent using the UserEventServiceClient.

The method you need to send a user event to Cloud Retail is the write method. To write a user event using the API, you need to set up an instance of the UserEventServiceClient.

You will see that the builder pattern is omnipresent when it comes to building a UserEvent type object, but here you can see a reference example to build a UserEvent corresponding to the detail-page-view event:

Once we have the UserEvent and the credentials in GoogleCredentials, it’s time to send the event to the Cloud Retail API. Another parameter that is needed is the model URI, which follows the pattern projects/<your_recai_project_id>/locations/global/catalogs/default_catalog.
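To make the URI pattern and the shape of a detail-page-view event concrete, here is a minimal sketch that uses only the standard library. It is not the UserEventServiceClient approach described above: it builds the equivalent REST URL and JSON body for the userEvents:write method by hand. The class and method names are hypothetical, and authentication and the actual HTTP call are left out.

```java
/** Hypothetical helper: builds the REST URL and JSON body for a user event write. */
final class RetailEventJson {
    private RetailEventJson() {}

    /** Returns the catalog path that follows the pattern described in the text. */
    static String catalogParent(String projectId) {
        return "projects/" + projectId + "/locations/global/catalogs/default_catalog";
    }

    /** Returns the REST endpoint for writing a single user event. */
    static String writeUrl(String projectId) {
        return "https://retail.googleapis.com/v2/" + catalogParent(projectId) + "/userEvents:write";
    }

    /** Returns a minimal detail-page-view event body for one product. */
    static String detailPageView(String visitorId, String productId) {
        return "{\"eventType\":\"detail-page-view\","
                + "\"visitorId\":\"" + escape(visitorId) + "\","
                + "\"productDetails\":[{\"product\":{\"id\":\"" + escape(productId) + "\"}}]}";
    }

    // Escapes backslashes and double quotes only; control characters are not handled.
    private static String escape(String value) {
        return value.replace("\\", "\\\\").replace("\"", "\\\"");
    }
}
```

In a real function, the client library's builders are preferable to hand-built JSON, since they handle escaping and field validation for you.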

Home Page Views are not supported natively in GA4, so you will need to extract them from existing events such as Page Views or Promotion Views.

Deploying the Cloud Function for Firebase

Now it’s time to deploy your Cloud Function. You have two options: the gcloud CLI and the Google Cloud Console.

CLI

First of all, modify the cloudfunctions.allowedIngressSettings organization policy:

Next, deploy your Cloud Function. Below you can see an example showing the key parameters:

To get more information about all the Cloud Function parameters you can visit the gcloud functions reference.

| Parameter | Description |
| --- | --- |
| entry-point | Full package name and the main class that represents the Cloud Function entry point. |
| trigger-resource | Conversion event path from which notifications should be received. |
| trigger-event | providers/google.firebase.analytics/eventTypes/event.log. This is a fixed value corresponding to the trigger type for Firebase for analytics. |

Cloud Console

In addition to the gcloud CLI tool, you can use the Google Cloud Console to deploy your Cloud Functions, as you can see in the following pictures.

Using the Google Cloud Project that corresponds to your Firebase Project, go to organization policies to set the cloudfunctions.allowedIngressSettings policy to ALLOW_INTERNAL_ONLY:

After that, select Cloud Functions in the navigation menu. Click the Create Function button to enter a deployment wizard to deploy your Cloud Function.

First, select the first generation, the Cloud Function name and the region. After that, select the event type (Firebase for Analytics). The log event name must be equal to the Firebase conversion event. After that, it’s time to provide your Cloud Function. In the following picture you can see that you can upload your fat jar in a zip format through a custom bucket that you can create using the console.

After that, you will see your Cloud Function deployed. It’s very useful to have the key metrics, the logs, and the possibility to test your Cloud Function.

Who is this for: Customers who want to enrich Cloud Retail solutions using the events coming from the native apps.

Caveats: The Firebase for analytics trigger event is not able to capture the product item information, so the product items must be injected explicitly. Cloud Functions also incur costs for the end user. See Cloud Functions pricing. However, this cost is amortized by subsequent Retail model training and prediction costs.

Future directions: Cloud Functions are evolving to their second version, where Eventarc standards will be adopted and the product items information might be provided out of the box.

Cloud Retail Home Page Views

Home Page Views are not supported natively in GA4, so you will need to construct them synthetically.

For GTM, the best way to ingest the Home Page View is to create a separate GTM tag with the data source set as “Variable – Cloud Retail”. Manually construct the Home Page View whenever a visitor lands on your home page, with the trigger being window load, filtered to your home page URL. For example:

In this example, all the required fields are provided by overrides, and the actual variable for the data source is just an empty JSON object.

For GA4, the import will ingest page views with a URL that has no suffix after “/” as Home Page Views. For example, myhomepage.com or myhomepage.com/ would be ingested as a Home Page View, but myhomepage.com/home would not. To ingest Home Page Views that do not match this pattern, you will need to reach out to your support team.
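The matching rule above can be approximated with a short check, which may help you predict which of your page views will count as Home Page Views. This is a sketch under assumptions, not the import's actual implementation: the class name is hypothetical, URLs without a scheme are assumed to be https, and query strings and fragments are ignored, which may differ from how the import treats them.

```java
import java.net.URI;

/** Hypothetical helper: approximates the "no suffix after /" home page rule. */
final class HomePageMatcher {
    private HomePageMatcher() {}

    /** Returns true when the URL path is empty or exactly "/". */
    static boolean isHomePage(String pageUrl) {
        String trimmed = pageUrl.trim();
        // URI parsing needs a scheme to separate the host from the path.
        String withScheme = trimmed.contains("://") ? trimmed : "https://" + trimmed;
        String path = URI.create(withScheme).getPath();
        return path == null || path.isEmpty() || path.equals("/");
    }
}
```

For example, isHomePage("myhomepage.com") and isHomePage("myhomepage.com/") both return true, while isHomePage("myhomepage.com/home") returns false.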

For Firebase, you will need to construct your Home Page Views based on an upstream GA4 event such as page_view or view_promotion.
