In this post, we show you how to use advanced replication features between MySQL-compatible databases such as Amazon Aurora MySQL-Compatible Edition clusters or Amazon Relational Database Service (Amazon RDS) for MySQL instances.

We discuss two replication capabilities in Amazon RDS and Amazon Aurora: multi-source replication and replication filtering. Multi-source replication is supported only in Amazon RDS for MySQL (8.0.35 and higher minor version and 5.7.44 and higher minor versions) but at the time of writing this post, it’s not supported for Aurora. We then implement those capabilities using an intermediate MySQL replication instance (a relay server) running in Amazon Elastic Compute Cloud (Amazon EC2).

MySQL binary log replication overview

A MySQL replication topology begins with a primary database server receiving write traffic and recording equivalent replication events in the binary log (or binlog). The binary log events describe all changes that happen on the primary server. Replicas connect to the primary server, download the binary logs, and apply the events locally in order to synchronize themselves with the primary.

In the most common scenario, the primary server records all changes, and each replica receives and applies all changes from a single primary. This is sufficient in most scenarios, but advanced use cases may require a tailored approach where the replicas aren’t simply one-to-one mirrors of the primary database.

Solution overview

Multi-source replication enables data from multiple MySQL-compatible sources to replicate to a single target (replica). Multi-source replication can facilitate a variety of use cases, including the following:

Efficiency and cost optimization, such as migrating several under-utilized single-tenant databases into a single multi-tenant cluster

Dataset management, such as moving tenants around in a multi-tenant system

Convenience, such as stitching multiple datasets together for analytics or extract, transform, and load (ETL) purposes.

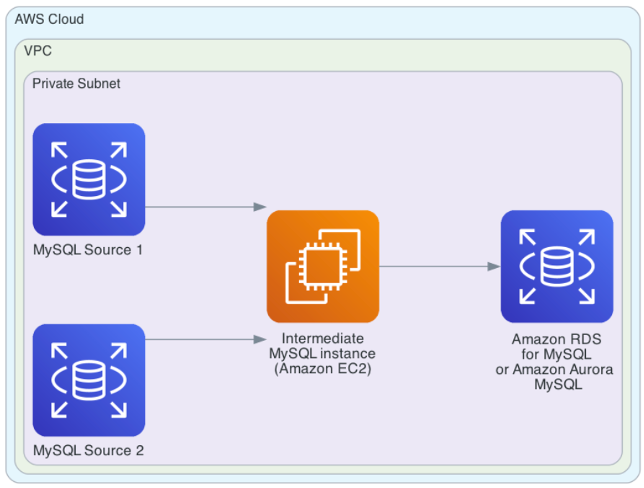

In our solution we are using an intermediate replication instance, which reads the binary log streams from multiple sources and produces a single replication stream that can be consumed by Amazon Aurora MySQL or Amazon RDS for MySQL.

The following diagram shows multiple MySQL databases instances being used as a source and replicating to another MySQL instance using an intermediate instance.

Replication filtering allows database administrators to exclude schemas or tables from replication. The configuration is flexible: you can list objects that should be replicated (and ignore everything else), or list objects that should be ignored (and replicate everything else). You can also choose to apply the filtering rules on the source or on the target.

Replication filtering can be helpful in the following situations:

Reducing overhead and improving replication performance in cases when the replica doesn’t need to contain all the schemas or tables

Managing shards or tenants in sharded or multi-tenant databases, where filtering can be used to replicate or ignore objects that belong to a particular shard or tenant

Migrations from managed databases that contain vendor-specific schemas or tables, where those objects interfere with replication and must be excluded

Replication filtering is partially supported in Amazon Aurora MySQL and Amazon RDS for MySQL. Consult the user guides for Amazon RDS and Amazon Aurora for details. At the time of writing, feature limitations include the following:

Each replication filtering parameter has a 2,000-character limit

Commas aren’t supported in replication filters

In Amazon RDS for MySQL, –binlog-do-db and –binlog-ignore-db parameters aren’t supported

In Amazon Aurora MySQL, filtering is supported only for Aurora MySQL version 3

Tables in the mysql schema can’t be ignored

The solution outlined in this post allows you to bypass these limitations.

The BLACKHOLE storage engine is an alternative way of implementing replication filtering at a table level. In this approach, a table converted to the BLACKHOLE engine ignores all writes and doesn’t contain any data. The binary log format (STATEMENT or ROW) determines whether or not replication records are written to the binary log, and therefore whether the intermediate replication instance sends these records further down the replication stream. This post includes a BLACKHOLE example for completeness. However, due to the relative complexity of BLACKHOLE behavior, we recommend using the replication filtering parameters instead where possible.

The following diagram shows a MySQL database instance being used as a source and replicating to another MySQL instance using an intermediate instance that is using the BLACKHOLE engine.

You can use the aforementioned replication features individually, or you can run a combination of filtering, multi-source replication, and BLACKHOLE tables on the same intermediate replication instance. The intermediate instance acts as a processing layer that replicates from binary log sources, applies the desired operations (multi-source aggregation, filtering), and generates new binary logs of its own. This new binary logs stream can then be consumed by Amazon Aurora MySQL or Amazon RDS for MySQL.

Prerequisites

You need the following components to implement this solution:

MySQL servers that you intend to use as the replication sources (primary servers) and targets (replicas). Our examples use Amazon RDS for MySQL as the source and Amazon Aurora MySQL as the target. The solution can also be used with other MySQL-compatible databases, such as when migrating from an external MySQL or MariaDB database to Amazon Aurora MySQL or Amazon RDS for MySQL.

An EC2 instance, on which you’ll install the intermediate MySQL replication server.

Network connectivity and security configuration that allows replication connections between MySQL sources and targets and the EC2 instance.

Note that for replication to work reliably throughout the entire chain, all MySQL servers must be replication-compatible in terms of their versions and configuration. For example, MySQL supports replication to the next higher major version (for example, 5.7 to 8.0), but doesn’t officially support replication from a higher to a lower major version. Similarly, the gtid_mode configuration must be compatible across all servers.

In this post, the test databases start out empty and aren’t receiving any write traffic until after replication is configured. Consequently, there’s no need to synchronize binary log positions between the servers, and we can use the current positions without running into replication conflicts. In real-world migration scenarios where the intermediate and target servers are provisioned from physical backups or logical dumps, you must ensure the binary log positions are correct in the context of those backups and dumps.

The examples provided in this post were tested using MySQL 8.0 and Amazon Aurora MySQL version 3 (compatible with MySQL 8.0).

Prepare the replication sources

MySQL servers acting as a replication source must meet the following configuration requirements:

Binary logging is enabled and set to ROW format.

The servers retain enough binary logs to maintain replication continuity. For example, if the replica will be created from a backup of the primary server, the primary must retain binary logs created from the backup time onwards, and those binlogs can’t be deleted until they’re processed by the replica.

The network and security configuration allows MySQL connections from the intermediate EC2 instance.

There’s a MySQL user account with REPLICATION SLAVE permissions, which will be used to accept replication connections from the intermediate server.

The exact steps will vary depending on whether you’re working with a managed MySQL service or a self-managed database.

If you don’t have existing MySQL servers you could use to test this solution, you can provision one or more RDS for MySQL instances by completing the following steps:

Create an RDS DB instance. Because we’re demonstrating multi-source replication, we use two instances in this example.

Make sure automated backups are enabled on both instances, which also enables binary logging.

Connect to each instance using a MySQL client, set the binary log retention period to 72 hours, and create a replication user with REPLICATION SLAVE permissions:

Prepare the intermediate MySQL instance in Amazon EC2

Complete the following steps to provision the intermediate MySQL instance:

Launch the EC2 instance using the Amazon Linux 2 operating system.

Configure the MySQL 8.0 package repository:

Install the MySQL 8.0 server package:

Confirm that MySQL is installed and initialized, and obtain the administrator password you’ll need to connect to MySQL:

Connect to MySQL and create a replication user with REPLICATION SLAVE permissions:

At this time, you can make additional MySQL configuration changes according to your requirements. At a minimum, verify the following prerequisites:

Binary logs are enabled (log_bin setting) and set to ROW format (binlog_format setting). This should be the default in MySQL 8.0.

Binary logging is enabled for changes received from replication sources (log_replica_updates setting, or log_slave_updates in versions before 8.0.26).

The server_id variable is set to a value that’s unique across the entire replication chain. RDS for MySQL instances and Aurora MySQL clusters automatically set that variable to a semi-random value, so you can pick any value such as 2 or 99 for your EC2 server.

The examples in this post don’t require advanced MySQL tuning, but production use cases might require adjustments to the instance sizing as well as MySQL buffers, caches, and other configuration values that influence the performance and behavior of the server.

Prepare the replication target in Amazon Aurora MySQL

The target preparation steps depend on the nature of your project. Migration projects might create the target database from a backup; others might start with an empty database to be filled with data after creation.

If you don’t already have a target database to use with this solution, you can create a new Aurora MySQL cluster. Make sure to use a major engine version that’s compatible with the MySQL version of the intermediate EC2 instance. For example, if using MySQL 8.0 on the EC2 instance, use Amazon Aurora MySQL version 3.

Configure replication from Amazon RDS for MySQL to Amazon EC2

We now configure multi-source replication between the two RDS for MySQL source instances and the intermediate MySQL server we created in the preceding section.

Multi-source replication uses the concept of replication channels, with each channel connecting to a different binary log source. Note that MySQL doesn’t perform automatic conflict resolution for changes coming from multiple sources, which means that the changes must be non-conflicting for the replication to work.

Use the CHANGE REPLICATION SOURCE TO statement to configure each channel. In MySQL versions before 8.0.23, the equivalent command is CHANGE MASTER TO.

This example involves two RDS for MySQL source instances, so the setup requires the creation of two replication channels.

To configure multi-source replication, complete the following steps:

Connect to each RDS for MySQL source instance and obtain binary log coordinates

On source-mysql-instance-1, use the following code:

On source-mysql-instance-2, use the following code:

Connect to the intermediate MySQL server in Amazon EC2 and configure replication channels, one for each source:

Use the following code to replicate from source-mysql-instance-1:

Use the following code to replicate from source-mysql-instance-2:

Verify that replication is up and running on the intermediate server (the following example output has been edited for brevity):

Configure replication from Amazon EC2 to Amazon Aurora MySQL

As the final step in our setup, we configure replication from the intermediate MySQL server to the Amazon Aurora MySQL target:

Connect to the intermediate MySQL server and obtain binary log coordinates:

Use an Aurora MySQL stored procedure to configure replication from the intermediate server:

Start replication, and verify that it’s up and running using the SHOW REPLICA STATUS command as demonstrated previously:

At this point, binary log replication is up and running between all three database layers: the Amazon RDS for MySQL sources, the intermediate MySQL server in Amazon EC2, and the Amazon Aurora MySQL target. Let’s proceed with the demonstration of replication features.

Multi-source replication

To demonstrate multi-source replication, we create a schema with a couple of tables on each of the RDS for MySQL source instances. The objects are replicated to the intermediate MySQL server in Amazon EC2, so that the server sees both schemas, each replicated from a different source. Complete the following steps:

Create a schema with a couple of tables on source-mysql-instance-1:

Do the same on source-mysql-instance-2. Make sure to use a different schema name, so that it doesn’t conflict with the schema we created in the previous step:

Connect to the intermediate MySQL server and list the schemas. Both schemas should have been replicated:

Connect to the Aurora MySQL cluster and list the schemas. You should see both schemas, and the tables are there too:

Replication filtering

Building upon our existing replication setup, we now introduce replication filtering to ignore certain tables on the intermediate MySQL server. Let’s say that one of the source instances (source-mysql-instance-1) runs regular data archiving jobs on tables called demo_source1_db.archive_*. We still want those tables to be binary logged for other reasons (like backup and restore), but we don’t need them in our Amazon Aurora MySQL target.

We use our intermediate MySQL server to filter those tables out, so that the Aurora MySQL cluster never has to process them. Complete the following steps:

Edit the MySQL server configuration file on the intermediate EC2 instance (by default, /etc/my.cnf) and add the following setting, then restart the MySQL service:

Connect to source-mysql-instance-1 and create a table that’s matched by the replication filtering rule:

Connect to the intermediate MySQL server and list tables in the schema. Note that the table was not replicated. More precisely, the replication events were received, but ignored:

The same is true on the Aurora MySQL cluster. Although we didn’t configure any replication filtering rules on the Aurora side, the events for the filtered table were already ignored on the intermediate database, and they never made it to the Aurora cluster.

There are several replication filtering parameters that you can use according to your requirements. Filtering settings can list objects that should be replicated (and ignore everything else), or list objects that should be ignored (and replicate everything else).

Note that if you configure multiple filtering settings, there’s a specific order in which the server evaluates them. This can sometimes lead to unexpected results, such as when the same table names are listed in both the do and the ignore parameters. Refer to How Servers Evaluate Replication Filtering Rule for details.

Demonstrate the BLACKHOLE storage engine

As the final step in our demonstration, let’s take one of the tables that have already been replicated and convert that table to BLACKHOLE storage engine. We then observe how it affects replication on that table.

Connect to source-mysql-instance-1 and insert a few rows into one of the tables:

Confirm that the rows replicated all the way through the intermediate MySQL server and into the Aurora MySQL cluster.

Convert the table to the BLACKHOLE engine on the intermediate MySQL server. Note that we want to modify the table on the intermediate server, but we want the table to stay on InnoDB in the Aurora cluster. To achieve that, we’re using the session-level sql_log_bin variable to temporarily disable binary logging while we’re altering the table:

At this point, the table is a regular InnoDB table on the Amazon RDS for MySQL source and in Amazon Aurora MySQL, but it’s a BLACKHOLE table on the intermediate server. Let’s see how that affects replication.

On the source-mysql-instance-1, insert a couple more rows into the table:

Check the table contents on the intermediate server. The table appears empty, as expected with the BLACKHOLE engine:

Check the table contents in Aurora. We can see the new rows:

Now, let’s go back to source-mysql-instance-1 and delete all the rows from the table:

Check the table contents in Aurora. We can still see the rows even though they were deleted from the source:

Out of all the changes initially made on the source, the inserted rows made it to the target, but the deletes did not. This is due to the following reasons:

The source table is InnoDB, so it accepted and logged the inserts normally.

The intermediate server ignored the inserts, but it recorded them in its own binary log. This is expected because inserts to BLACKHOLE tables are always logged, regardless of the binary log format. Because the inserts were logged, Aurora received and replicated them.

The delete statements were again accepted and logged on the source. However, the intermediate server’s binary log format is set to ROW, so it didn’t record those deletes in its binary log. That’s because the BLACKHOLE engine treats updates and deletes differently than inserts. Refer to Replication and BLACKHOLE Tables for details.

Because the deletes weren’t logged on the intermediate server, Aurora never saw those changes. The table in Amazon Aurora MySQL still exists and contains previously replicated data. It looks like we’ve created an insert-only table on Aurora! (Not really, and you could try a TRUNCATE statement next!)

This demonstration shows why BLACKHOLE tables might be seen as unpredictable in complex replication setups. For that reason, we recommend using replication filtering instead of the BLACKHOLE engine where possible. Nevertheless, BLACKHOLE tables are an interesting concept and might be useful in scenarios that can take advantage of their unique characteristics.

Conclusion

In this post, we demonstrated how you can use advanced replication features by inserting an intermediate replication component between two MySQL servers. This technique can be very useful in situations when the source or target servers are constrained in their features or configuration options, or when you want to perform data transformations such as schema aggregation or data filtering without having to modify the source databases directly.

We hope you find this post helpful, please let us know your thoughts and questions in the comment section.

About the Authors

Shyam Sunder Rakhecha is a Lead Consultant with the Professional Services team at AWS based out of Hyderabad, India, and specializes in database migrations and modernization. He helps customers in migration and optimization in the AWS Cloud. He is curious to explore emerging technology in terms of databases. He is fascinated with RDBMS and big data. He also loves to organize team building events and activities.

Neha Sharma is a Database Consultant with Amazon Web Services. With over a decade of experience in working with databases, she enables AWS customers to migrate their databases to AWS Cloud. Besides work, she likes to be actively involved in various sports activities and likes to socialize with people.

Szymon Komendera is a Database Solutions Architect at AWS, with nearly 20 years of experience in databases, software development, and application availability. He spent the majority of his 8-year AWS tenure developing Aurora MySQL, and supporting other AWS databases such as Amazon Redshift and Amazon ElastiCache.

Read MoreAWS Database Blog