In Part 1 of this series, we discussed how the IDENTITY column is used in different relational database management systems. In this post, we focus on how AWS Database Migration Service (AWS DMS) handles tables with IDENTITY column. For the source database, AWS DMS captures the IDENTITY column as a regular column. For the target database, AWS DMS will take the source database column value and apply it to the IDENTITY column.

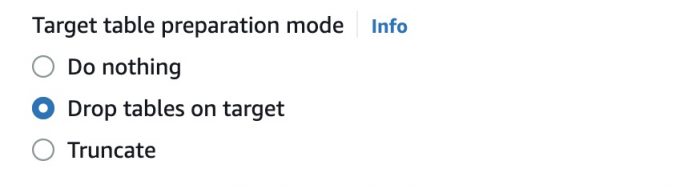

When setting up an AWS DMS replication task, one option is let AWS DMS recreate the table on the target by choosing Drop tables on target.

In this case, the IDENTITY column is created as a regular column, and the IDENTITY property for a column isn’t migrated to a target database column. This behavior is consistent with all database engines, even without AWS DMS . For example, a create table A as select * from table B will not inherit the IDENTITY property of the columns in table A. Regular columns can be converted into IDENTITY columns by using an alter table command for the target database after the data migration.

The following code shows the extracted Data Definition Language (DDL) on the target after AWS DMS recreates the table (in SQL Server):

However, in many cases, tables are created manually on the AWS DMS target endpoint with database definition language (DDL) from a schema export, AWS Schema Conversion Tool (AWS SCT) or restored from a backup, therefore the IDENTITY property is kept. When setting up an AWS DMS task via the AWS DMS console, we choose Do nothing (for CDC only) or Truncate (for full load and CDC) for Target table preparation mode.

In the following sections, we look at how SQL Server, MySQL, PostgreSQL, and Oracle handle things differently.

SQL Server

We apply the same DDL from the source on the target endpoint:

When AWS DMS replicates data from the source to the target, it sets IDENTITY_INSERT to ON when it updates the target table, and sets it to OFF after the change is complete. We can validate this behavior by turning on SQL Server Audit (see the following screenshot). As we discussed in Part 1, SQL Server can accept values for the IDENTITY column with IDENTITY_INSERT set to ON.

Therefore, AWS DMS handles the SQL Server target with an IDENTITY column automatically by using the following SQL Server features:

INDENTITY_INSERT

Automatically set the next value after it’s provided by the insert SQL

There is no manual work involved; you can stop the AWS DMS task and cut over the application to the target database when the data is in sync.

MySQL

In this example, we use auto converted DDL from the AWS Schema Conversion Tool (AWS SCT):

AWS DMS successfully migrates the data for a table with an IDENTITY column without any issue. In MySQL, you can specify the value for the IDENTITY column as long as it doesn’t violate the unique rule (in MySQL, the IDENTITY column has to be primary key or unique index).

When we want to cut over, we can stop the AWS DMS task and use the following SQL query to match the AUTO_INCREMENT value of IDENTITY_COLUMN to match that of source. This ensures data consistency and prevents failure when the app starts to insert data into the new target MySQL database.

On the source database, find the current value of the AUTO_INCREMENT column. For an example on MySQL, use the following SQL:

Now that we have the current AUTO_INCREMENT value from the source database, use the following SQL to set it on the target MySQL database:

Note the following:

The DELETE command doesn’t decrease or reset the value of AUTO_INCREMENT. Therefore, if you delete all rows of a table with AUTO_INCREMENT, it will not reset its value.

TRUNCATE will always reset AUTO_INCREMENT to 1.

If you’re expecting the IDENTITY column to exceed an integer limit of 2^31 (2,147,483,648), it’s better to define the IDENTITY column as BIGINT.

You can also define the IDENTITY column as UNSIGNED if your application doesn’t need to store negative numbers. This will increase your limit of the INT data type.

PostgreSQL

AWS DMS handles the IDENTITY column differently for full load compared to change data capture (CDC).

For a full load-only task, you can create the table on the target database beforehand manually or by using scripts from the AWS SCT. The following code is the auto converted DDL from the AWS SCT:

AWS DMS successfully migrates the data for a table with an IDENTITY column. Note that the AWS DMS full load-only task will be successful for IDENTITY columns with GENERATED ALWAYS and GENERATED BY DEFAULT.

When using an AWS DMS full load with CDC or CDC-only task, note the following: If the source tables have the IDENTITY columns created with GENERATED ALWAYS and if the target tables are also created using GENERATED ALWAYS, the AWS DMS task will throw an error while migrating the data because the source IDENTITY columns will have the values already generated for the IDENTITY column.

To overcome the AWS DMS error, the target tables must be created with the IDENTITY columns using GENERATED BY DEFAULT. Then migrate the data and after a successful migration, at cutover, modify the IDENTITY column back to GENERATED ALWAYS.

Let’s say the Name table has an IDENTITY column created with GENERATED ALWAYS in the source. If using the AWS SCT, the table DDL will have the IDENTITY as GENERATED ALWAYS in PostgreSQL as well, which when created will error out for CDC.

First, create the table manually by changing the IDENTITY column to GENERATED BY DEFAULT. You can also run ALTER TABLE to change the property of the IDENTITY column. See the following code:

After the AWS DMS tasks complete successfully, at the time of final cutover, modify the IDENTITY column back to GENERATED ALWAYS:

Post-migration

At the final cutover, run the following code to set the current value of the IDENTITY column:

We provide a code snippet to automate these two steps:

Convert a table’s IDENTITY column from GENERATED ALWAYS to GENERATED BY DEFAULT.

Reset the current value of the IDENTITY column after cutover.

For example, let’s say that we are migrating data from a commercial system (Oracle or SQL Server) to PostgreSQL. The source has two tables, NAME and NAME2, with IDENTITY columns. Complete the following steps:

Create a table in the source database with the schema name, table name, column name, and generation type of the IDENTITY column:

Insert the required data into the IDENTITY_col_tables table:

For Oracle, use the following code:

For SQL Server, use the following code:

Create the same table in the target database (PostgreSQL):

Migrate the data from source to target only for the IDENTITY_col_tables table. You can use AWS DMS for this step.

Run the DDL that is generated by the AWS SCT or equivalent. This creates the same IDENTITY columns as in the source database.

Run the following in PostgreSQL to change the GENERATE ALWAYS IDENTITY columns to GENERATE BY DEFAULT:

Run the full load and CDC.

Run the following in PostgreSQL to change the IDENTITY columns:

Run the following on the target to reset all the IDENTITY column values at cutover:

Oracle

Given the flexibility provided by Oracle for IDENTITY columns, the following approach would be the most appropriate to migrate data into the IDENTITY columns in target Oracle databases. Complete the following steps:

Define the IDENTITY column in the target Oracle table with a GENERATED BY DEFAULT ON NULL clause before or during data migration.This ensures that previous values from source tables are inserted into these target tables without any challenges. This also ensures that if the source table has NULL values, Oracle will insert a NOT NULL integer value for this ID column on the target.

Determine the maximum value of the IDENTITY column on the source table and supply the START WITH clause to modify the target Oracle table.This ensures data uniqueness and that no duplicate values are inserted into the ID column, which may cause insertion errors due to the IDENTITY definition of the column. Provide a higher value for the START WITH clause to prevent duplicate data insertion when additional data is inserted into the source table.

Perform post-data migration and validation using AWS DMS. You may alter the table to change this IDENTITY column to GENERATED ALWAYS or GENERATED BY DEFAULT based on your application’s requirements.Now you can use the Oracle-provided dictionary views to identify the IDENTITY columns and their characteristics. The DBA_TAB_IDENTITY_COLS, ALL_TAB_IDENTITY_COLS, and USER_TAB_IDENTITY_COLS views provide the IDENTITY columns, their data generation type, internal sequence associated with each column, and the options for each of these internal sequences.

Use the ALL_TAB_IDENTITY_COLS view on the source Oracle database to script out the SQL required to create or alter these columns on the target Oracle database.

Use the following SQL to list all the IDENTITY column details on the source database. Spool the output to a text or CSV file for your records.

The following SQL generates a script to modify the IDENTITY column generation type to GENERATED BY DEFAULT ON NULL. Before migrating the data using AWS DMS, run this script against the source database to generate the output script. Then run the resulting output script against the target database to change the IDENTITY column properties first. This makes sure that all the IDENTITY columns on the target Oracle database are set to the GENERATED BY DEFAULT ON NULL type.

After you migrate the data using AWS DMS, you must reset the data generation type for these IDENTITY columns back to their original settings (matching the source Oracle database). The following SQL generates a script to modify the IDENTITY column generation type back to its original settings. Run this script against the source database to capture the current generation type and generate the output script. Then run the resulting output script against the target database to change the IDENTITY column properties to the same as the source.

As mentioned earlier, Oracle internally maintains some sequences to comply with the IDENTITY options defined for IDENTITY columns. These sequences are generated internally and have their own naming convention. Even though user didn’t create these sequences manually, they may be identified by querying the *_TAB_IDENTITY_COLS dictionary:

We need to reset the values for these sequences associated with each IDENTITY column post-data migration using AWS DMS and before applications start writing to the tables to maintain data uniqueness for these IDENTITY columns.

Alternately, you can modify the IDENTITY column itself by resetting its START value with the ALTER TABLE command. Oracle provides the START WITH LIMIT VALUE clause in its ALTER TABLE statement.

Let’s see what this START WITH LIMIT VALUE clause does on the ALTER TABLE statement in more detail. This clause can only be specified with an ALTER TABLE statement and by implication against an existing IDENTITY column. When this clause is specified, Oracle locks the table, scans it for the highest value for the IDENTITY column, and resets the underlying sequence for this IDENTITY column to start with a new value of highest value + 1. The next value generated by the sequence for this IDENTITY column is this high water mark + INCREMENT BY value. See the following example SQL:

Oracle locks the sample_tab table and scans for the highest value for the id_col column. The underlying sequence generator used internally by this IDENTITY column is reset with the highest value found + the INCREMENT BY value.

This option saves time in identifying high values on each table manually and updating its corresponding sequence generator value individually.

Now we repeat Step 7 to reset the IDENTITY column generation type to include this START WITH LIMIT VALUE clause. This way, when setting the type and option of the IDENTITY columns on the target database, we also reset the values of its sequence generators automatically.

Revise the SQL to run after data migration as follows, and run this against the source database to generate a dynamic SQL output and run the output script against the target:

The IDENTITY columns are altered to the same generation type as the source and the next values are automatically adjusted with the highest value added to the IDENTITY column in the table.

Summary

In this blog series we covered the IDENTITY column migration best practice with AWS DMS. In part 1 we demonstrated how different relational database management systems handle IDENTITY columns. In this post (part 2), we demonstrated the implementation of the IDENTITY column and cutover strategy in AWS DMS. This will help you migrate data from on-premises databases to AWS.

If you have any questions or comments, post your thoughts in the comments section.

About the authors

Feng Cai is a Database Consultant at AWS. He has a wide background in providing database services to different clients. Feng is currently a member of the AWS Global Competency Center, providing homogeneous and heterogenous database migration support to customers.

Sindhusha Paturu is a Database Consultant with the AWS Professional Services Team. She has been supporting and enabling customers to migrate their database from on-premises data centers to the AWS Cloud and also to migrate from commercial database engines to open-source databases in Amazon.

Bala K is a Senior Database Consultant with the AWS Global Competency Center and brings a vast experience of database technologies. Starting with mainframes, Bala has worked on all modern database engines like Oracle, SQL Server, DB2, Sybase, PostgreSQL and Amazon Redshift. At AWS, he helps empower customers with their digital transformation and accelerate migration of their database workload to the AWS Cloud. He has successfully migrated a number of complex databases from on premises to the AWS Cloud.

Mohammad Waseem is a Database Consultant at AWS. He works as a database migration specialist, helping and enabling customers to build highly available, cost-effective database solutions and migrate their database workloads to the AWS Cloud. He works with AWS customers and partners to provide guidance and technical assistance on database projects, helping them improve the value of their solutions when using AWS.

Read MoreAWS Database Blog