Amazon Kendra customers can now enrich document metadata and content during the document ingestion process using custom document enrichment (CDE). Amazon Kendra is an intelligent search service powered by machine learning (ML). Amazon Kendra reimagines search for your websites and applications so your employees and customers can easily find the content they’re looking for, even when it’s scattered across multiple locations and content repositories within your organization.

You can further enhance the accuracy and search experience of Amazon Kendra by improving the quality of documents indexed in it. Documents with precise content and rich metadata are more searchable and yield more accurate results. Organizations often have large repositories of raw documents that can be improved for search by modifying content or adding metadata before indexing. So how does CDE help? By simplifying the process of creating, modifying, or deleting document metadata and content before they’re ingested into Amazon Kendra. This can include detecting entities from text, extracting text from images, transcribing audio and video, and more by creating custom logic or using services like Amazon Comprehend, Amazon Textract, Amazon Transcribe, Amazon Rekognition, and others.

In this post, we show you how to use CDE in Amazon Kendra using custom logic or with AWS services like Amazon Textract, Amazon Transcribe, and Amazon Comprehend. We demonstrate CDE using simple examples and provide a step-by-step guide for you to experience CDE in an Amazon Kendra index in your own AWS account.

CDE overview

CDE enables you to create, modify, or delete document metadata and content when you ingest your documents into Amazon Kendra. Let’s understand the Amazon Kendra document ingestion workflow in the context of CDE.

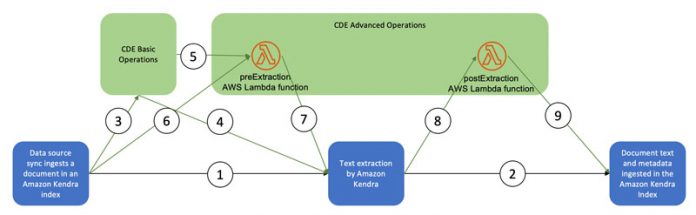

The following diagram illustrates the CDE workflow.

The path a document takes depends on the presence of different CDE components:

Path taken when no CDE is present – Steps 1 and 2

Path taken with only CDE basic operations – Steps 3, 4, and 2

Path taken with only CDE advanced operations – Steps 6, 7, 8, and 9

Path taken when CDE basic operations and advanced operations are present – Steps 3, 5, 7, 8, and 9

The CDE basic operations and advanced operations components are optional. For more information on the CDE basic operations and advanced operations with the preExtraction and postExtraction AWS Lambda functions, refer to the Custom Document Enrichment section in the Amazon Kendra Developer Guide.

In this post, we walk you through four use cases:

Automatically assign category attributes based on the subdirectory of the document being ingested

Automatically extract text while ingesting scanned image documents to make them searchable

Automatically create a transcription while ingesting audio and video files to make them searchable

Automatically generate facets based on entities in a document to enhance the search experience

Prerequisites

You can follow the step-by-step guide in your AWS account to get a first-hand experience of using CDE. Before getting started, complete the following prerequisites:

Download the sample data files AWS_Whitepapers.zip, GenMeta.zip, and Media.zip to a local drive on your computer.

In your AWS account, create a new Amazon Kendra index, Developer Edition. For more information and instructions, refer to the Getting Started chapter in the Amazon Kendra Essentials workshop and Creating an index.

Open the AWS Management Console, and make sure that you’re logged in to your AWS account.

Create an Amazon Simple Storage Service (Amazon S3) bucket to use as a data source. Refer to the Amazon S3 User Guide for more information.

Launch the AWS CloudFormation template to deploy the preExtraction and postExtraction Lambda functions and the required AWS Identity and Access Management (IAM) roles. This opens the AWS CloudFormation console.

Provide a unique name for your CloudFormation stack and the name of the bucket you just created as a parameter.

Choose Next, select the acknowledgement check boxes, and choose Create stack.

After the stack creation is complete, note the contents of the Outputs. We use these values later.

Configure the S3 bucket as a data source using the S3 data source connector in the Amazon Kendra index you created. When configuring the data source, in the Additional configurations section, define the Include pattern to be Data/. For more information and instructions, refer to the Using Amazon Kendra S3 Connector subsection of the Ingesting Documents section in the Amazon Kendra Essentials workshop and Getting Started with an Amazon S3 data source (console).

Extract the contents of the data file AWS_Whitepapers.zip to your local machine and upload them to the S3 bucket you created at the path s3://<YOUR-DATASOURCE-BUCKET>/Data/ while preserving the subdirectory structure.

Automatically assign category attributes based on the subdirectory of the document being ingested

The documents in the sample data are stored in subdirectories Best_Practices, Databases, General, Machine_Learning, Security, and Well_Architected. The S3 bucket used as the data source looks like the following screenshot.

We use CDE basic operations to automatically set the category attribute based on the subdirectory a document belongs to while the document is being ingested.

On the Amazon Kendra console, open the index you created.

Choose Data sources in the navigation pane.

Choose the data source used in this example.

Copy the data source ID.

Choose Document enrichment in the navigation pane.

Choose Add document enrichment.

For Data Source ID, enter the ID you copied.

Enter six basic operations, one corresponding to each subdirectory.

Choose Next.

Leave the configuration for both Lambda functions blank.

For Service permissions, choose Enter custom role ARN and enter the CDERoleARN value (available on the stack’s Outputs tab).

Choose Next.

Review all the information and choose Add document enrichment.

Browse back to the data source we’re using by choosing Data sources in the navigation pane and choose the data source.

Choose Sync now to start data source sync.

The data source sync can take up to 10–15 minutes to complete.

While waiting for the data source sync to complete, choose Facet definition in the navigation pane.

For the Index field of _category, select Facetable, Searchable, and Displayable to enable these properties.

Choose Save.

Browse back to the data source page and wait for the sync to complete.

When the data source sync is complete, choose Search indexed content in the navigation pane.

Enter the query Which service provides 11 9s of durability?.

After you get the search results, choose Filter search results.

The following screenshot shows the results.

For each of the documents that were ingested, the category attribute values set by the CDE basic operations are seen as selectable facets.

Note the Document fields link for each of the results. When you choose it, it shows the fields or attributes of the document included in that result, as seen in the following screenshot.

From the selectable facets, you can select a category, such as Best Practices, to filter your search results to be only from the Best Practices category, as shown in the following screenshot. The search experience improved significantly without requiring additional manual steps during document ingestion.
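The six basic operations configured in this section can also be written down as data. The following is a minimal sketch of what such a configuration might look like in the shape of the Amazon Kendra CustomDocumentEnrichmentConfiguration structure. The condition on _source_uri, the mapping from subdirectory name to category label (underscores replaced by spaces), and the role ARN are assumptions made for illustration, not the exact values entered on the console.

```python
# Subdirectory names come from the sample data used in this post.
SUBDIRECTORIES = [
    "Best_Practices", "Databases", "General",
    "Machine_Learning", "Security", "Well_Architected",
]


def category_operation(subdirectory):
    """Build one basic operation: when the document's source URI contains
    the subdirectory name, set _category to a readable label."""
    return {
        "Condition": {
            # Assumption: the subdirectory is detected from _source_uri.
            "ConditionDocumentAttributeKey": "_source_uri",
            "Operator": "Contains",
            "ConditionOnValue": {"StringValue": subdirectory},
        },
        "Target": {
            "TargetDocumentAttributeKey": "_category",
            "TargetDocumentAttributeValueDeletion": False,
            # Assumption: the label is the directory name with spaces.
            "TargetDocumentAttributeValue": {
                "StringValue": subdirectory.replace("_", " ")
            },
        },
        "DocumentContentDeletion": False,
    }


def build_enrichment_config(role_arn):
    """Assemble the inline (basic) operations and the service role."""
    return {
        "InlineConfigurations": [category_operation(s) for s in SUBDIRECTORIES],
        "RoleArn": role_arn,
    }


# Placeholder ARN; use the CDERoleARN value from your stack's Outputs tab.
config = build_enrichment_config("arn:aws:iam::123456789012:role/ExampleCDERole")
print(len(config["InlineConfigurations"]), "basic operations")
```

A dictionary like this could be passed as the CustomDocumentEnrichmentConfiguration argument when updating the data source through the AWS SDK, which is useful if you prefer to script the setup rather than use the console.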

Automatically extract text while ingesting scanned image documents to make them searchable

In order for documents that are scanned as images to be searchable, you first need to extract the text from such documents and ingest that text in an Amazon Kendra index. The pre-extraction Lambda function from the CDE advanced operations provides a place to implement text extraction and modification logic. The pre-extraction function we configure has the code to extract the text from images using Amazon Textract. The function code is embedded in the CloudFormation template we used earlier. You can choose the Template tab of the template on the AWS CloudFormation console and review the code for PreExtractionLambda.
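To give a feel for what such a function does, the following is a simplified sketch, not the code from the CloudFormation template. It assumes the event and response follow the CDE Lambda data contract (s3Bucket and s3ObjectKey in the request; version, s3ObjectKey, and metadataUpdates in the response), and the .txt naming scheme for the extracted text is hypothetical.

```python
def text_from_textract(response):
    """Join the text of LINE blocks from a DetectDocumentText response."""
    lines = [
        block["Text"]
        for block in response.get("Blocks", [])
        if block.get("BlockType") == "LINE"
    ]
    return "\n".join(lines)


def lambda_handler(event, context):
    # boto3 is imported here so the helper above can be tried offline.
    import boto3

    bucket = event["s3Bucket"]
    key = event["s3ObjectKey"]

    # Synchronous text detection on the image stored in Amazon S3.
    textract = boto3.client("textract")
    response = textract.detect_document_text(
        Document={"S3Object": {"Bucket": bucket, "Name": key}}
    )

    # Hypothetical naming scheme for the object holding the extracted text.
    text_key = key + ".txt"
    boto3.client("s3").put_object(
        Bucket=bucket,
        Key=text_key,
        Body=text_from_textract(response).encode("utf-8"),
    )

    # Point Amazon Kendra at the extracted text instead of the image.
    return {"version": "v0", "s3ObjectKey": text_key, "metadataUpdates": []}


# Made-up response fragment, shaped like Amazon Textract output.
sample = {
    "Blocks": [
        {"BlockType": "PAGE"},
        {"BlockType": "LINE", "Text": "Scanned line one"},
        {"BlockType": "WORD", "Text": "Scanned"},
        {"BlockType": "LINE", "Text": "Scanned line two"},
    ]
}
print(text_from_textract(sample))
```

Only LINE blocks are kept because the WORD blocks repeat the same text at a finer granularity.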

We now configure CDE advanced operations to try out this and additional examples.

On the Amazon Kendra console, choose Document enrichments in the navigation pane.

Select the CDE we configured.

On the Actions menu, choose Edit.

Choose Add basic operations.

You can view all the basic operations you added.

Add two more operations: one for Media and one for GEN_META.

Choose Next.

In this step, you need the ARNs of the preExtraction and postExtraction functions (available on the Outputs tab of the CloudFormation stack). We use the same bucket that you’re using as the data source bucket.

Enter the conditions, ARN, and bucket details for the pre-extraction and post-extraction functions.

For Service permissions, choose Enter custom role ARN and enter the CDERoleARN value (available on the stack’s Outputs tab).

Choose Next.

Choose Add document enrichment.
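For readers who script their setup, the advanced operations entered in the preceding steps map onto the pre-extraction and post-extraction hook fields of the same enrichment configuration. The following is a rough sketch; the invocation conditions shown here (matching Media and GEN_META in _source_uri) and all ARNs and bucket names are assumptions for illustration, so substitute the conditions and values you actually entered.

```python
def hook_configuration(lambda_arn, bucket, uri_fragment):
    """Build one hook: invoke the Lambda function only for documents
    whose source URI contains the given fragment (assumed condition)."""
    return {
        "InvocationCondition": {
            "ConditionDocumentAttributeKey": "_source_uri",
            "Operator": "Contains",
            "ConditionOnValue": {"StringValue": uri_fragment},
        },
        "LambdaArn": lambda_arn,
        "S3Bucket": bucket,
    }


def advanced_operations(pre_arn, post_arn, bucket, role_arn):
    """Combine both hooks with the service role."""
    return {
        "PreExtractionHookConfiguration": hook_configuration(pre_arn, bucket, "Media"),
        "PostExtractionHookConfiguration": hook_configuration(post_arn, bucket, "GEN_META"),
        "RoleArn": role_arn,
    }


# Placeholder values; take the real ones from the stack's Outputs tab.
advanced = advanced_operations(
    pre_arn="arn:aws:lambda:us-east-1:123456789012:function:ExamplePreExtraction",
    post_arn="arn:aws:lambda:us-east-1:123456789012:function:ExamplePostExtraction",
    bucket="example-datasource-bucket",
    role_arn="arn:aws:iam::123456789012:role/ExampleCDERole",
)
print(sorted(advanced))
```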

Now we’re ready to ingest scanned images into our index. The sample data file Media.zip you downloaded earlier contains two image files: Yosemite.png and Yellowstone.png. These are scanned pictures of the Wikipedia pages of Yosemite National Park and Yellowstone National Park, respectively.

Upload these to the S3 bucket being used as the data source in the folder s3://<YOUR-DATASOURCE-BUCKET>/Data/Media/.

Open the data source on the Amazon Kendra console and start a data source sync.

When the data source sync is complete, browse to Search indexed content and enter the query Where is Yosemite National Park?.

The following screenshot shows the search results.

Choose the link from the top search result.

The scanned image pops up, as in the following screenshot.

You can experiment with similar questions related to Yellowstone.

Automatically create a transcription while ingesting audio and video files to make them searchable

Similar to images, audio and video content needs to be transcribed in order to be searchable. The pre-extraction Lambda function also contains the code to call Amazon Transcribe for audio and video files to transcribe them and extract a time-marked transcript. Let’s try it out.

The maximum runtime allowed for a CDE pre-extraction Lambda function is 5 minutes (300 seconds), so you can only use it to transcribe audio or video files of short duration, about 10 minutes or less. For longer files, you can use the approach described in Make your audio and video files searchable using Amazon Transcribe and Amazon Kendra.

The sample data file Media.zip contains a video file How_do_I_configure_a_VPN_over_AWS_Direct_Connect_.mp4, which has a video tutorial.

Upload this file to the S3 bucket being used as the data source in the folder s3://<YOUR-DATASOURCE-BUCKET>/Data/Media/.

On the Amazon Kendra console, open the data source and start a data source sync.

When the data source sync is complete, browse to Search indexed content and enter the query What is the process to configure VPN over AWS Direct Connect?.

The following screenshot shows the search results.

Choose the link in the answer to start the video.

If you seek to an offset of 84.44 seconds (1 minute, 24 seconds), you’ll hear exactly what the excerpt shows.
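A time-marked transcript of this kind can be assembled from the word-level items in the Amazon Transcribe output. The following sketch shows one possible approach, grouping words into sentences and tagging each with the start time of its first word. It is not the code from the template, and the sample input is made up to mirror the shape of a transcription result.

```python
SENTENCE_END = {".", "?", "!"}


def time_marked_sentences(transcribe_output):
    """Group word items into sentences, each paired with its start offset
    in seconds."""
    sentences = []
    words, start = [], None
    for item in transcribe_output["results"]["items"]:
        content = item["alternatives"][0]["content"]
        if item["type"] == "pronunciation":
            if start is None:
                start = float(item["start_time"])
            words.append(content)
        else:
            # Punctuation items carry no timestamps; attach to previous word.
            if words:
                words[-1] += content
                if content in SENTENCE_END:
                    sentences.append((start, " ".join(words)))
                    words, start = [], None
    if words:
        # Trailing words without closing punctuation.
        sentences.append((start, " ".join(words)))
    return sentences


def word(text, start):
    """Helper to build a made-up pronunciation item for the sample."""
    return {"type": "pronunciation", "start_time": start,
            "alternatives": [{"content": text}]}


sample_output = {"results": {"items": [
    word("Open", "1.50"), word("the", "1.80"), word("console", "2.00"),
    {"type": "punctuation", "alternatives": [{"content": "."}]},
    word("Choose", "3.00"), word("VPN", "3.40"),
]}}

for offset, sentence in time_marked_sentences(sample_output):
    print(f"[{offset:.2f}] {sentence}")
```

Keeping the offset next to each sentence is what makes it possible to jump to the matching point in the media file from a search result.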

Automatically generate facets based on entities in a document to enhance the search experience

Relevant facets, such as the entities in documents like places, people, and events, when presented as part of search results, provide an interactive way for a user to filter search results and find what they’re looking for. Amazon Kendra metadata, when populated correctly, can provide these facets and enhance the user experience.

The post-extraction Lambda function allows you to implement the logic to process the text extracted by Amazon Kendra from the ingested document, then create and update the metadata. The post-extraction function we configured implements the code to invoke Amazon Comprehend to detect entities from the text extracted by Amazon Kendra, and uses them to update the document metadata, which is presented as facets in an Amazon Kendra search. The function code is embedded in the CloudFormation template we used earlier. You can choose the Template tab of the stack on the CloudFormation console and review the code for PostExtractionLambda.

The maximum runtime allowed for a CDE post-extraction function is 60 seconds, so you can only use it to implement tasks that can be completed in that time.
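The core of such a function is turning detected entities into metadata updates. The following is a minimal sketch of that step, assuming the response shape of the Amazon Comprehend DetectEntities API and the metadataUpdates format of the CDE Lambda data contract. The score threshold and the cap on values per attribute are illustrative choices, not values taken from the template.

```python
from collections import defaultdict


def entities_to_metadata_updates(comprehend_response, min_score=0.8, max_values=10):
    """Group entity texts by type and emit one string-list attribute per
    type. min_score and max_values are illustrative assumptions."""
    grouped = defaultdict(list)
    for entity in comprehend_response.get("Entities", []):
        if entity["Score"] < min_score:
            continue  # skip low-confidence detections
        values = grouped[entity["Type"]]
        text = entity["Text"]
        # Drop duplicates and keep each list short.
        if text not in values and len(values) < max_values:
            values.append(text)
    return [
        {"name": entity_type, "value": {"stringListValue": values}}
        for entity_type, values in sorted(grouped.items())
    ]


# Made-up response fragment, shaped like DetectEntities output.
sample_response = {"Entities": [
    {"Type": "ORGANIZATION", "Text": "United Nations", "Score": 0.99},
    {"Type": "LOCATION", "Text": "Paris", "Score": 0.97},
    {"Type": "ORGANIZATION", "Text": "United Nations", "Score": 0.95},
    {"Type": "PERSON", "Text": "Example Person", "Score": 0.40},
]}

for update in entities_to_metadata_updates(sample_response):
    print(update)
```

Because the attribute names are the entity types themselves, they line up with index fields named after those types, such as LOCATION, ORGANIZATION, and PERSON.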

Before we can try out this example, we need to define the entity types that we detect using Amazon Comprehend as facets in our Amazon Kendra index.

On the Amazon Kendra console, choose the index we’re working on.

Choose Facet definition in the navigation pane.

Choose Add field and add fields for COMMERCIAL_ITEM, DATE, EVENT, LOCATION, ORGANIZATION, OTHER, PERSON, QUANTITY, and TITLE of type StringList.

Make LOCATION, ORGANIZATION and PERSON facetable by selecting Facetable.

Extract the contents of the GenMeta.zip data file and upload the files United_Nations_Climate_Change_conference_Wikipedia.pdf, United_Nations_General_Assembly_Wikipedia.pdf, United_Nations_Security_Council_Wikipedia.pdf, and United_Nations_Wikipedia.pdf to the S3 bucket being used as the data source in the folder s3://<YOUR-DATASOURCE-BUCKET>/Data/GEN_META/.

Open the data source on the Amazon Kendra console and start a data source sync.

When the data source sync is complete, browse to Search indexed content and enter the query What is Paris agreement?.

After you get the results, choose Filter search results in the navigation pane.

The following screenshot shows the faceted search results.

All the facets of the type ORGANIZATION, LOCATION, and PERSON are automatically generated by the post-extraction Lambda function with the detected entities using Amazon Comprehend. You can use these facets to interactively filter the search results. You can also try a few more queries and experiment with the facets.
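The index fields added on the Facet definition page earlier in this section can also be described as data. The following sketch builds a list in the shape that the Amazon Kendra UpdateIndex API accepts for DocumentMetadataConfigurationUpdates; the field names and the three facetable types come from the steps above, while setting only the Facetable flag (and leaving other search settings at their defaults) is a simplifying assumption.

```python
# Entity types added as StringList fields in this section.
ENTITY_TYPES = [
    "COMMERCIAL_ITEM", "DATE", "EVENT", "LOCATION", "ORGANIZATION",
    "OTHER", "PERSON", "QUANTITY", "TITLE",
]

# Types made facetable in this section.
FACETABLE_TYPES = {"LOCATION", "ORGANIZATION", "PERSON"}


def metadata_configuration_updates():
    """Build one index field definition per entity type."""
    return [
        {
            "Name": entity_type,
            "Type": "STRING_LIST_VALUE",
            # Assumption: only the Facetable flag is set explicitly.
            "Search": {"Facetable": entity_type in FACETABLE_TYPES},
        }
        for entity_type in ENTITY_TYPES
    ]


fields = metadata_configuration_updates()
print([f["Name"] for f in fields if f["Search"]["Facetable"]])
```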

Clean up

After you have experimented with the Amazon Kendra index and the features of CDE, delete the infrastructure you provisioned in your AWS account while working on the examples in this post:

CloudFormation stack

Amazon Kendra index

S3 bucket

Conclusion

Enhancing data and metadata can improve the effectiveness of search results and improve the search experience. You can use the custom document enrichment (CDE) feature of Amazon Kendra to automate this enrichment by creating, modifying, or deleting metadata using the basic operations. You can also use the advanced operations with pre-extraction and post-extraction Lambda functions to implement the logic to manipulate the data and metadata.

We demonstrated using subdirectories to assign categories, using Amazon Textract to extract text from scanned images, using Amazon Transcribe to generate a transcript of audio and video files, and using Amazon Comprehend to detect entities that are added as metadata and later available as facets to interact with the search results. This is just an illustration of how you can use CDE to create a differentiated search experience for your users.

For a deeper dive into what you can achieve by combining other AWS services with Amazon Kendra, refer to Make your audio and video files searchable using Amazon Transcribe and Amazon Kendra, Build an intelligent search solution with automated content enrichment, and other posts on the Amazon Kendra blog.

About the Authors

Abhinav Jawadekar is a Senior Partner Solutions Architect at Amazon Web Services. Abhinav works with AWS Partners to help them in their cloud journey.
