Every organization will inevitably migrate data between locations at some point. Data migration refers to the movement of data between storage locations and data platforms. For example, you might need data migration when you introduce new database systems or migrate applications from on-premises to the cloud. Before the evolution of data migration tools, the data migration process was inefficient, lengthy, and complicated and often resulted in data quality issues.

But thanks to modern data migration tools, migrating your organization’s data doesn’t have to be so hard or untrustworthy anymore. An efficient, well-established data migration process will help you prevent downtime, data loss, and budget overruns and ensure maximum use of data.

Because an hour of IT downtime costs over $300,000 on average, and more for larger organizations, you can see how crucial an efficient process is. Let’s explore the types of data migration, the essential phases of a migration, and the steps involved in each phase.

Types of Data Migration “Processes”

Data migration “processes” refer to the triggers that lead to the migration. There are three primary triggers:

Storage migration occurs when organizations replace old storage systems with newer ones, often to modernize or save costs.

Cloud migration involves moving data from an on-premises location to the cloud.

Database migration involves moving to a new database or upgrading from legacy systems.

Businesses may employ the following approaches during migration:

One-time migration process (lift and shift): A one-time migration process usually occurs within a specific period and involves one main operation. Often a necessary first step, it may cause system-wide downtime, and its risk grows in proportion to the amount of data migrated.

Continuous data migration: This data migration process employs an ongoing migration strategy performed in phases or workloads and helps decrease the risk of downtime. This process addresses not only the static nature of the data but also accounts for changes in the data over time (including Change Data Capture).
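As a rough illustration of the continuous approach, the sketch below copies only the rows that changed since the previous run, using a timestamp "watermark". It is a simplified stand-in for Change Data Capture, not a log-based CDC implementation: it will not notice deleted rows. The `customers` table, its `updated_at` column, and the `migrate_increment` function are hypothetical names chosen for the example.

```python
import sqlite3

def migrate_increment(src, dst, last_seen):
    """Copy rows changed after `last_seen`; return the new watermark."""
    rows = src.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_seen,),
    ).fetchall()
    # INSERT OR REPLACE overwrites a row that already exists with the same id.
    dst.executemany(
        "INSERT OR REPLACE INTO customers (id, name, updated_at) VALUES (?, ?, ?)",
        rows,
    )
    dst.commit()
    return rows[-1][2] if rows else last_seen

# Hypothetical source and target systems, both in memory for the demo.
src = sqlite3.connect(":memory:")
dst = sqlite3.connect(":memory:")
for db in (src, dst):
    db.execute(
        "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at INTEGER)"
    )
src.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [(1, "Ada", 100), (2, "Grace", 105)],
)

watermark = migrate_increment(src, dst, 0)          # first phase: full copy
src.execute("UPDATE customers SET name = 'Ada L.', updated_at = 110 WHERE id = 1")
watermark = migrate_increment(src, dst, watermark)  # later phase: only the change

migrated = dst.execute("SELECT id, name, updated_at FROM customers ORDER BY id").fetchall()
```

Each call moves a small slice of data, so the source system can stay online between phases.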

Essential Data Migration Steps

Every efficient data migration approach must have the following steps included in the overall process:

Pre-migration/Planning phase

Migration phase

Post-migration

Data Synchronization

Planning Phase

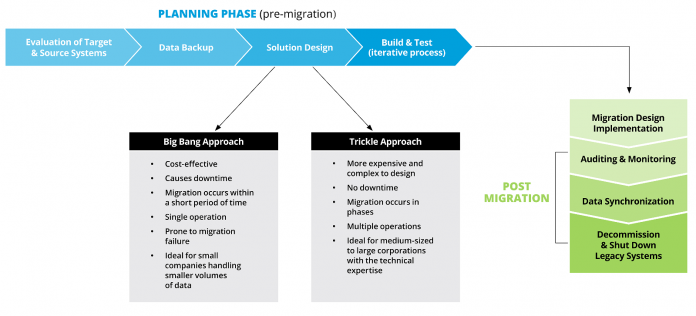

The planning phase accounts for all preparations made before the migration process. The planning phase consists of:

Evaluation of data source and target systems: An in-depth understanding of the data source and target system is required to design an effective migration strategy. How does the source data fit into the target data system? Are there fields from the source system that need not exist in the target system? Will there be null values after migration, and how can you fill in those values? A data profiling tool is beneficial here, as data profiling helps establish patterns and relationships for better data consolidation. The right data integration tool can handle many of these issues for you.

Solution design: Migration solutions usually follow either a big-bang or a trickle approach, and the choice influences budgets, timelines, and deadlines. The big-bang process is a one-time operation that occurs over a short period. Because it causes significant downtime, it is advisable to migrate during periods when customers are least likely to be using the application. The trickle approach is a more advanced process that migrates data continuously over time, which helps prevent significant downtime and maintain fast, consistent operations. Although the trickle approach requires a good understanding of future needs, it is more practical and effective. Additionally, the solution design should factor in data security needs and weave in the measures necessary to maintain data safety and compliance.

Plan budgets: Gartner lists overlooking the hidden costs associated with hiring, vacating old facilities, and adopting DevOps practices as mistakes when budgeting for migration strategies. Organizations should build an end-to-end view of the cost of each migration step to produce a more holistic migration budget and prevent budget overruns.

Building and Testing: The actual migration runs just once, so it needs to proceed without errors. Before implementation, test the solution repeatedly with actual data to evaluate its completeness and efficiency and to identify and fix potential failure points.

Data Backup: Although this is not a requirement, creating a backup for the intended migrated data is considered a best practice to provide an extra layer of protection in case of migration failure or data losses.
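The questions raised in the evaluation step above (which source fields have no home in the target, and which target fields will end up null) can be answered with a small profiling pass. The following is a minimal sketch, assuming the source records are available as Python dictionaries; the `profile` function and the sample field names are hypothetical.

```python
from collections import Counter

def profile(rows, target_fields):
    """Summarize null counts and field mismatches between source and target."""
    source_fields = set()
    nulls = Counter()
    for row in rows:
        source_fields.update(row)
        for field, value in row.items():
            if value is None or value == "":
                nulls[field] += 1
    target = set(target_fields)
    return {
        "row_count": len(rows),
        "null_counts": dict(nulls),
        # Source fields that do not exist in the target schema.
        "dropped_fields": sorted(source_fields - target),
        # Target fields with no source data; these will be null after migration.
        "unfilled_fields": sorted(target - source_fields),
    }

sample = [
    {"id": 1, "email": "a@example.com", "fax": None},
    {"id": 2, "email": None, "fax": None},
]
report = profile(sample, target_fields=["id", "email", "phone"])
print(report)
```

A report like this gives the planning team a concrete list of fields to drop, default, or backfill before the migration is designed.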

Migration Phase

This phase includes the extraction and loading of data (the E and L of ETL). Depending on the chosen migration process and the volume of data, migration may take several days or run over several phases. Businesses should consider how available their services need to be when choosing an approach, as a big-bang approach is not ideal for applications that need real-time availability.

Monitoring and auditing also form a critical part of the migration phase, as the process needs to be observed closely to ensure its accuracy. Auditing ensures that data migration proceeds according to set guidelines and that the final migrated data is of excellent quality for business use. Frequent testing and monitoring should occur throughout the implementation process to ensure the safe transit of data.

Post-migration

This phase verifies the accuracy and completeness of the migrated data by running the source and destination systems in parallel and confirming that the data functions as intended in the new system. Any deviation from the intended functionality could have a variety of causes and may need further investigation. Continuing this verification after the migration is also considered a best practice for efficient migration processes.
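One simple way to check completeness and accuracy while both systems are still running is to compare a row count and a checksum for each table. The sketch below is one possible approach under stated assumptions, not a prescribed method: the `table_fingerprint` function and the `accounts` table are hypothetical, and the table and key names are interpolated into SQL, so they must come from trusted configuration rather than user input.

```python
import hashlib
import sqlite3

def table_fingerprint(conn, table, key):
    """Return (row_count, sha256 hex digest) over all rows, ordered by `key`."""
    digest = hashlib.sha256()
    count = 0
    # Identifiers cannot be bound as parameters; pass trusted names only.
    for row in conn.execute(f"SELECT * FROM {table} ORDER BY {key}"):
        digest.update(repr(row).encode("utf-8"))
        count += 1
    return count, digest.hexdigest()

# Hypothetical source and destination, both in memory for the demo.
source = sqlite3.connect(":memory:")
target = sqlite3.connect(":memory:")
for db in (source, target):
    db.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance INTEGER)")
    db.executemany("INSERT INTO accounts VALUES (?, ?)", [(1, 500), (2, 750)])

matches_before = table_fingerprint(source, "accounts", "id") == table_fingerprint(
    target, "accounts", "id"
)

# Simulate a value corrupted during migration.
target.execute("UPDATE accounts SET balance = 0 WHERE id = 2")
matches_after = table_fingerprint(source, "accounts", "id") == table_fingerprint(
    target, "accounts", "id"
)
print(matches_before, matches_after)
```

A mismatch only says that something differs; finding the offending rows still requires a closer comparison, for example by fingerprinting key ranges separately.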

Data Synchronization and Monitoring

After migration, data is synchronized across devices, databases, and applications to keep it consistent over time. Synchronization helps maintain high-quality data that the business can trust for its operations.

After verifying that the destination systems work as predicted, legacy systems are decommissioned and shut down.

The Data Migration Process

The data migration process involves three essential steps:

Data Extraction: Sometimes referred to as data collection, this first step involves collecting data from source systems such as Relational Database Management Systems (RDBMS), file systems, Customer Relationship Management (CRM) systems, legacy applications, and marketing systems. Data extraction collates various forms of data, from structured data in rows and columns to unstructured data formats. Data extraction can be time-consuming, hence the need for a data extraction tool that helps automate the process, improve agility, and reduce the risk of errors.

Data Transformation: If needed, transform the data to the format needed for the landing system. Data transformation may be:

Destructive, which may involve deleting fields and records

Constructive, like adding new fields or replicating data

Aesthetic, which may entail changing and standardizing field names to improve readability.

Joining and linking data from various sources

Validating data: Data undergoes testing against standardized guidelines, and data gets rejected if it doesn’t fulfill such requirements.

Data engineers may choose to perform this process by creating scripts in Python or using SQL, or employing an ETL tool. However, it is essential to note that the final transformed data undergo testing against the standards set during the planning phase of migration. When the data satisfactorily meets the specified criteria, loading occurs.
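For engineers who take the scripting route, the transformation types listed above might look like the following in Python. This is a minimal sketch under stated assumptions: the field names (`legacy_flag`, `EMAIL_ADDR`, and so on) and the validation rule are hypothetical, and real standards would come from the planning phase.

```python
def transform(record):
    """Return a transformed copy of `record`, or raise ValueError if invalid."""
    out = dict(record)

    # Destructive: drop a field the target system does not need.
    out.pop("legacy_flag", None)

    # Constructive: derive a new field from existing ones.
    out["full_name"] = f"{out['first']} {out['last']}"

    # Aesthetic: rename a field to a more readable, standardized name.
    out["email_address"] = out.pop("EMAIL_ADDR")

    # Validation: reject records that fail the agreed rule.
    if "@" not in out["email_address"]:
        raise ValueError(f"invalid email: {out['email_address']!r}")
    return out

records = [
    {"first": "Ada", "last": "Lovelace", "EMAIL_ADDR": "ada@example.com", "legacy_flag": "Y"},
    {"first": "Bob", "last": "Stone", "EMAIL_ADDR": "not-an-email", "legacy_flag": "N"},
]

accepted, rejected = [], []
for record in records:
    try:
        accepted.append(transform(record))
    except ValueError as error:
        rejected.append((record, str(error)))

print(len(accepted), "accepted,", len(rejected), "rejected")
```

Keeping rejected records alongside the reason for rejection makes it easier to fix them at the source and rerun the transformation.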

Data Loading: This last step entails transferring data to its final destination systems. Data loading may occur all at once or incrementally in batches. Loading in batches makes it easier to check and validate each batch for inconsistencies.
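Incremental loading can be sketched as below, with each batch written in its own transaction so that a failure rolls back only the batch in progress. The `orders` table, the `load_in_batches` function, and the tiny batch size are hypothetical choices for the example.

```python
import sqlite3
from itertools import islice

def load_in_batches(conn, rows, batch_size=2):
    """Insert `rows` in batches; return the number of rows loaded."""
    iterator = iter(rows)
    loaded = 0
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return loaded
        # The connection context manager commits on success
        # and rolls back if the batch raises an error.
        with conn:
            conn.executemany(
                "INSERT INTO orders (id, amount) VALUES (?, ?)", batch
            )
        loaded += len(batch)

# Hypothetical destination system, in memory for the demo.
destination = sqlite3.connect(":memory:")
destination.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")

transformed_rows = [(1, 9.5), (2, 20.0), (3, 3.25), (4, 14.0), (5, 7.75)]
total = load_in_batches(destination, transformed_rows)
row_count = destination.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
print(total, row_count)
```

In practice the batch size would be tuned to the destination system, and a validation check could run after each committed batch.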

Enable Agile Data Migrations With a DataOps Approach

The StreamSets data integration platform enables continuous sync and easy migration, which is essential for cloud adoption. A schema-less approach means that users do not need to spend time profiling and investigating their data structure. With active data drift detection and multi-table updates, users can build migration pipelines and simply hit play, and the system will recreate the schema and attributes on the new platform. This greatly reduces the time, cost, and workload required to migrate data to new platforms, while advanced monitoring and testing help ensure data quality at the end.

The post Documenting the Steps in Your Data Migration Process appeared first on StreamSets.
