In this post, we demonstrate how to build a mechanism to automate the detection of sensitive data, in particular personally identifiable information (PII), in your relational database. PII is information connected to an individual and can be used to identify them. Handling PII data in a relational database, such as Amazon Aurora, requires planning and integration with other services.

You can integrate your Aurora database with Amazon Comprehend to detect PII. Amazon Comprehend is a natural language processing (NLP) service that uses machine learning (ML) to uncover valuable insights in text and can automatically detect PII. In this post, we use AWS Lambda and database triggers to build a mechanism that detects PII data at the moment you insert it in your database and use Amazon Simple Notification Service (Amazon SNS) to send email notifications.

This mechanism is completely transparent to the application layer and requires no code changes or application-level configuration. The benefits of an application-transparent mechanism include seamless application upgrades and the ability to use it with any kind of application, even those whose code you don’t have access to. However, it’s important to take into consideration the scale of your workloads and the transactions on your database. This mechanism, by design, imposes a temporary increase on the database storage needs and adds extra load on your database engine during processing.

Solution overview

For this post, we test the mechanism with an Amazon Aurora MySQL-Compatible Edition relational database. This solution also works with Amazon Aurora PostgreSQL-Compatible Edition and Amazon Relational Database Service (Amazon RDS) for PostgreSQL, for MySQL, and for MariaDB.

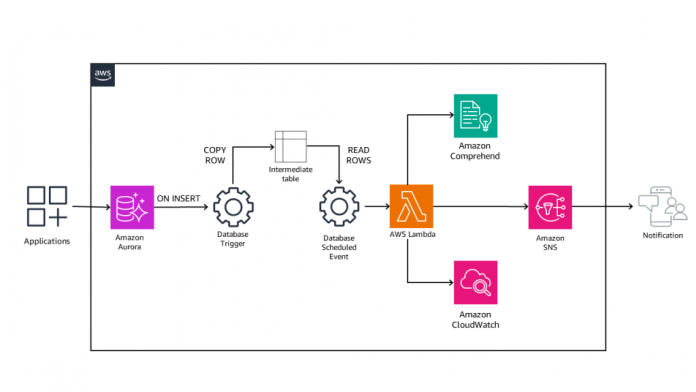

The following diagram illustrates the architecture of the integration mechanism.

The components and the flow in this architecture are as follows:

An application inserts data in one of the monitored tables.

A database trigger on insert runs and copies the row into an intermediate table.

A database scheduled event runs and reads all rows of the intermediate table.

The database event invokes a Lambda function synchronously with the ROW data as the payload.

The Lambda function calls the Amazon Comprehend API to detect PII in the data payload.

The Lambda function logs the detection result in Amazon CloudWatch for logging and auditing purposes.

If PII is detected, the Lambda function calls Amazon SNS to send a notification to the designated users.

The design of the mechanism is generic and applicable to any application that uses Aurora. However, different applications can have different database schemas and different tables that can potentially store PII data. As such, the details of the mechanism apply to a simple and specific use case for demonstration purposes. You should adapt this mechanism to your application and in particular the code of the Lambda function, the code for the database trigger, and the code of the database event.

The database engine has native support to invoke a Lambda function synchronously or asynchronously. The synchronous invocation of a Lambda function can have significant performance penalty in your application because it halts the transaction until the Lambda function runs and returns a result. The asynchronous invocation of a Lambda function has less performance penalty because, although it still needs to call the Lambda API, it doesn’t have to wait for the response. In this example, you have the option to choose between synchronous and asynchronous mode by changing the value of a Boolean parameter, in this example lambda_sync_mode. The default value for this example is to run in synchronous mode so as to ensure reliable PII detection.

The solution uses a sample table with a predefined and static schema, which is common in various use cases. In some other use cases, the database schema or table definition may change. Changes such as a table deletion, a new column in a table, or the change of a data type can impact the functionality of this mechanism. For this kind of change, go through all the steps presented in this solution and adjust accordingly. Check and adapt the database triggers, the database events, and the Lambda function code to ensure the same level of functionality and that you’re checking all the necessary fields for PII data.

The cost of the mechanism is another point to consider. A relational database can have a vast amount of INSERT or UPDATE queries, which can increase the cost of the mechanism. To mitigate this risk, you have to examine the database schema and identify the tables and columns that you want to monitor for PII data. This will allow you to run the database trigger and invoke the Lambda function only for data insertion on the specific tables and columns and not for all INSERT or UPDATE queries into the database.

In the following sections, we detail the steps to build the automatic PII detection mechanism:

Create a table and columns in your database.

Create an SNS topic and configure it accordingly to receive email notifications.

Create the Lambda function that incorporates the logic of the mechanism.

Configure the database engine and build the mechanism in the database.

Prerequisites

To build this mechanism, you need to have the following already configured:

An Aurora MySQL database

Access to the Aurora MySQL database with a user that has the following privileges to be able to run commands:

CREATE

DROP

INSERT

SELECT

EVENT

TRIGGER

AWS_LAMBDA_ACCESS

Your Aurora MySQL database must be connected to subnets with a NAT gateway if you access Lambda over the public internet or configure AWS PrivateLink and an inbound interface for Lambda if you access it directly through your VPC

Note that the mechanism you are going to build is application agnostic and there are no prerequisites for the application or connection method.

Create a table and columns

The automatic PII detection mechanism needs to adapt to the database schema and the table or tables you want to monitor for PII data. For demo purposes, you just need to create a simple table in your Aurora database with three columns, an auto-increment id column and two text fields with the names col1 and col2:

In this use case, you can create a trigger on the table with the name pii_detection and pass the columns col1 and col2 as a JSON object to the Lambda function. However, database triggers are atomic with the triggering statements. If the trigger fails, the statement also fails. This means that the trigger is always blocking on the Lambda API call and also taking an external dependency on the Lambda API. If the API is down and the trigger errors out, then the INSERT transaction fails. A failed transaction requires extra error handling on the application level.

To overcome this risk, the approach is to use an intermediate table with a similar structure to the original one. Then add two triggers, one for INSERT and one for UPDATE, on the original table to copy all new or updated rows to the intermediate table. From the intermediate table, create a database scheduled event that parses all rows in this table, invokes the Lambda function, and detects PII data. This allows the original transaction to remain non-blocking and mitigates any performance consideration. For the sample table pii_detection, create a similar intermediate table called pii_detection_queue with the following statement:

With both the original and the intermediate table in place, you can start building the mechanism. The use of the intermediate table reduces the performance penalty on the database functions but does not eliminate it. The mechanism duplicates all INSERT and UPDATE statements in your sample table, which adds computing overhead and also temporarily increased storage needs until the intermediate table is processed. Depending on the frequency of the database event that processed data in the intermediate table, storage overhead might be something that you need to consider.

Create an SNS topic

For instructions on creating your SNS topic, refer to Creating an Amazon SNS topic. The topic is going to forward notifications to designated personnel for further investigation and action or trigger other processes. The destination of the SNS topic is out of scope for this post; for the sake of simplicity, assume that the SNS topic destination is an email message to the DevSecOps team.

Note the SNS topic name to use later in the Lambda function.

Create a Lambda function

The next step is to create the Lambda function of the mechanism. The mechanism communicates with Amazon Comprehend and Amazon SNS, so you need first to create an AWS Identity and Access Management (IAM) role that delegates access to your Lambda function to access those services.

Follow the procedure in Creating IAM policies to create a custom policy. This policy allows the Lambda function to publish messages to the SNS topic you created earlier. In the following policy code, provide the name of the custom IAM policy and your SNS topic:

Refer to Creating IAM roles for instructions to create a role and attach the following permission policies:

AWSLambdaBasicExecutionRole

AllowLambdaPublishtoSNS

ComprehendReadOnly

Note the name of the role you just created and then create a Lambda function following the instructions in Create a Lambda function with the console using Python 3.11 for your runtime. On the Configuration tab of your Lambda function, go to Permissions and add the role you created as an execution role. For more information on creating a Lambda execution role, refer to Lambda execution role.

Next, go to the Code tab of the function and enter the following code:

The database trigger invokes this Lambda function passing a JSON object with key-value pairs of the database column name and its data. Take into consideration Lambda limits, especially regarding concurrency and payload size. Depending on the expected traffic and size of data, plan accordingly and do the necessary adjustments to stay within limits. Then the workflow proceeds through the following steps:

The function iterates through all keys. If the key doesn’t have an empty value, it checks if it is the unique row ID to record it and skip any other processing. If the key is not the unique ID and the value is not empty, it passes the payload to Amazon Comprehend for PII detection.

The Amazon Comprehend API returns the results.

If PII data is found, the function appends the column name to the pii_fields array.

When the check for all columns is complete, the function checks if there are any fields in the pii_fields array.

If there are fields, then PII data has been detected. The function prepares a message with the relevant information and publishes it to the SNS topic you configured. It also logs a message to CloudWatch about the detected PII data. Note that the function only logs and publishes the row ID of the offending record in the database rather than the actual PII data.

If no PII data is found, the function also logs a message to CloudWatch.

The function returns a HTTP 200 status code and a message.

Note the name of the Lambda function and proceed with the next steps.

Configure Aurora to invoke the Lambda function

The final step to complete the automatic PII detection mechanism is to configure Aurora to invoke the Lambda function you created every time you insert data into the table you’re monitoring (pii_detection).

Create an IAM role

You must create an IAM role for AWS Service: rds that allows Aurora to invoke the Lambda function you created. Use the following permission policy for this role (provide your Region, account ID, and Lambda function name):

Note the name of the role you just created.

Define the default Lambda role

Your Aurora database uses this role to invoke the Lambda function. Follow the instructions in Working with parameter groups to modify the parameters in Aurora.

If you are not already using a custom cluster parameter group, create a cluster parameter group. Then modify the parameter aws_default_lambda_role. The value of this parameter should be as follows (provide your account ID and name of the role):

Next, associate this customer parameter group with your database. Note that if you created a new cluster parameter group, this process will cause downtime in your database because it needs to reboot the database instances to apply the change.

When the customer parameter group configuration is complete, connect to your database and grant access to your database user to invoke Lambda. For example, if your user is piiusr, the command to run should look as follows:

Test the synchronous Lambda function invocation

Now test that you can run the Lambda function you created, with the user that you granted permissions to connect to the database. Use the following code (provide your Region, account ID, and function name):

The query invokes the Lambda function in synchronous mode, and you get the result of the Python code in your function return statement. Note that the time required to complete the run of the command is 0.96 seconds. This is the time to have Aurora invoke the Lambda function, run the code, communicate with the Amazon Comprehend API, and return the result.

Test the asynchronous Lambda function invocation

Now run the same test but instead invoke the Lambda function asynchronously:

The SELECT query invokes the Lambda function in asynchronous mode, but now you get an empty result because the asynchronous call returns immediately after the invocation of the Lambda function and doesn’t wait for the run to complete. Note that now the time required to complete the run of the command is just 0.06 seconds. This is a significant performance improvement—you should use asynchronous calls unless you need to know the result of the Lambda function.

For this use case, you only want alerts, so asynchronous invocation is the suggested choice. In case that you would like to update the data before insert in the database, you should use the synchronous call and evaluate the returned result.

Set up the database triggers

The next step is to set up the database triggers. The triggers copy data to the intermediate table. The first trigger runs whenever you insert data into the table that you want to monitor. It’s important to note that this trigger will increase the database storage cost and the database IOPS. The impact on cost and performance depends on the number of transactions and amount of data that the trigger copies from the main table to the intermediate table.

The trigger code for this example is the following:

With this code, you do the following:

Create a trigger with the name pii_detection_queue_trigger_insert

Run the trigger for all rows after inserting data into the table pii_detection

Insert into the intermediate table pii_detection_queue the values for the original table columns (id, col1, col2)

The second trigger is the same as the first but you configure it to run after the update on an existing column. The trigger code for this example is the following:

Set up the database event

The final step to complete the mechanism is to set up the database event. The event runs on scheduled intervals and processes all rows in the intermediate table. The event code in our example is the following:

With this code, you do the following:

Create a scheduled event with the name detect_pii.

Run the scheduled event every 1 minute. You can adjust this according to your needs and the rate at which the intermediate table grows and the frequency of PII checks.

Declare a few variables to use later in the code. Note the declaration of the variable cur. You build a cursor on the record set with the statement SELECT id, col1, col2 FROM pii_detection_queue. This allows you to further customize the records to retrieve and process.

Declare a handler to continue running when the cursor has no more rows.

Declare a handler to stop running and exit in case of SQL errors. This is useful to cancel processing and avoid losing data if Lambda invocation fails for any reason.

Set the ARN of your Lambda function.

Set the desired mode for running the Lambda function. The value true means the function will run in synchronous mode. The event runs the function and checks the result for each row and only deletes it if the function return result status is 200. The value false means the function will run in asynchronous mode. As long as the function invocation is successful, it deletes the row in the intermediate table.

Open the cursor on the record set and loop through all rows:

For each row, create a JSON object with:

Key – The unique row ID and the names of the columns you want to check.

Value – The actual value stored in the database.

Check the lambda_sync_mode. If the lambda_sync_mode is true, invoke the function in synchronous mode with this JSON object. Wait for the result and check if the JSON object has a status code equal to 200. Then delete the row and continue to the next row.

If the lambda_sync_mode is false, invoke the function in asynchronous mode with this JSON object. As long as there is no SQL exception in the function invocation, do not wait for the result. Delete the row and continue to the next row.

Close the loop and the cursor.

Optimize the intermediate table due to the potential large number of writes and deletions.

With this mechanism, when you insert new records in the table that you monitor, they are automatically copied to an intermediate table. At the same time, you have a scheduled event running every 1 minute that checks the intermediate table for rows. If there are rows in the table, it creates a JSON object and invokes the Lambda function to detect PII. This is non-blocking for the database transactions and does not affect the running environment in case of delays or unavailability of the Lambda API.

The mechanism is now complete. For all data inserted into the table pii_detection, you indirectly send the data to Amazon Comprehend, with the help of Lambda, to check if it contains PII data. If Amazon Comprehend detects PII data, the Lambda function sends a notification to Amazon SNS to generate an alert for your DevSecOps team.

Test the automatic PII detection mechanism

With the mechanism in place, you now need to test its functionality. Connect to the database and run a couple of INSERT queries:

You have run two different INSERT statements, where the first does not contain any PII data and the second contains “John Doe”, which is PII.

You can examine the CloudWatch logs and see that for the first INSERT statement, you have the following log entries:

For the second INSERT statement, you have the following log entries:

Because you defined the SNS topic to send emails, you should also receive an email notification for this entry.

Depending on the number of transactions in your database and especially the INSERT or UPDATE statements in the table that you’re checking for PII, you may need to consider an alternative notification mechanism. This mechanism sends an email notification for every row that detects PII, which can lead to a large number of emails in a matter of minutes. In that case, it would be more efficient to use an alternative mechanism that aggregates findings before sending notifications.

Clean up

To remove the automatic PII detection mechanism from your database, you have to remove all its building blocks.

Remove Aurora configurations

Complete the following steps to clean up your resources in Aurora:

Connect to the database with an admin user and delete the triggers you created:

Connect to the database with an admin user and delete the event you created:

Connect to the database with an admin user and revoke access to the Lambda functions for the database user:

Connect to the database and drop the intermediate table:

Modify the database parameter group and modify the parameter aws_default_lambda_role or, if this is the only change, you can reset the parameter group.

Delete the Lambda function

To clean up the Lambda function, do the following:

On the Lambda console, select the function that you created.

On the Actions menu, choose Delete.

Enter delete in the text input field and choose Delete.

Delete the SNS topic

Delete the SNS topic you created. For instructions, refer to Deleting an Amazon SNS subscription and topic.

Delete IAM roles

This mechanism requires two custom IAM roles: one to allow your Lambda function to interact with Amazon Comprehend and Amazon SNS, and another one to allow Aurora to invoke Lambda functions. Follow the instructions in Deleting roles or instance profiles to delete these roles.

Conclusion

In this post, you configured your Aurora MySQL database to detect PII data and send notifications. The mechanism uses database triggers and database events to invoke Lambda functions when you insert data into a table that you want to monitor for PII data. The function parses the input and uses Amazon Comprehend to detect PII and send notifications using Amazon SNS.

This mechanism is absolutely transparent to your applications and doesn’t require any code changes or altering configuration parameters. The use of database triggers, database events, and Lambda allows you to use more sophisticated code to do further processing or conditional detection for cost-optimization purposes.

If you have any questions or suggestions about this post, leave them in the comments section.

About the Authors

Alexandros Soumplis is a Senior Solutions Architect at Amazon Web Services. He has wide experience in the IT sector through various roles, helping stakeholders and customers design, evaluate and deploy solutions to address business challenges.

Dimitrios Papageorgiou is a Senior Solutions Architect at Amazon Web Services, where he assists customers in designing scalable, robust, and secure systems ready to support global markets. He is particularly passionate about data engineering and its applications in addressing customers’ business needs. Additionally, he enjoys tweaking, writing, and improving the code of large, high-performance backend systems.

Read MoreAWS Database Blog