Last Updated on October 28, 2022

Over the past few years, a lot has changed in the world of stream processing systems. This is especially true as companies manage larger amounts of data than ever before.

In fact, roughly 2.5 quintillion bytes of data are generated every day.

Manually processing the sheer amount of data that most companies collect, store, and one day hope to use simply isn’t realistic. So how can an organization leverage modern advances in machine learning and build scalable pipelines that will actually make use of data gathered from various sources?

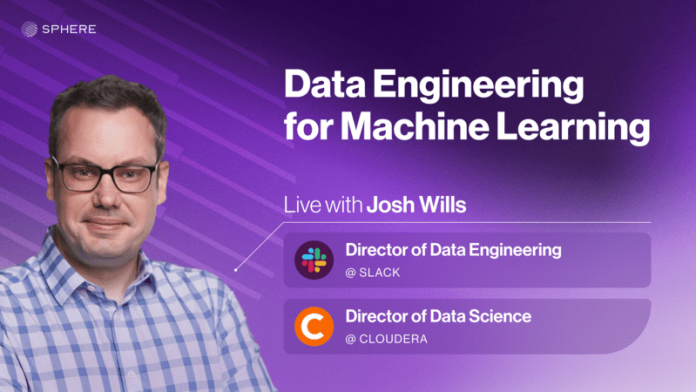

This is exactly what Josh Wills hopes to accomplish with his upcoming course, Data Engineering for Machine Learning.

Over four live sessions with Josh, students will learn the data engineering best practices required to support reliable and scalable production models.

More specifically, learners will:

- Build and monitor production services for capturing high-quality data for model training and for serving data computed in data warehouses

- Design batch data pipelines for training models that integrate diverse data sources, avoid data leakage, and run on time (not to mention under budget)

- Learn how to make the leap from batch to streaming pipelines to support real-time model features, model evaluation, and even model training

But here’s the real kicker for this course: Josh is specifically focusing on cost optimization and reduction techniques that will have a tangible impact on your company’s ROI.

In other words, the course will focus on how to efficiently build data systems that generate higher revenue at a lower cost. And as the strength of the global economy continues to be uncertain, increasing profits is top of mind for most organizations.

Click here to learn more about Josh Wills’s upcoming course, Data Engineering for Machine Learning.

The post Data Engineering for ML: Optimize for Cost Efficiency appeared first on Machine Learning Mastery.
