Filesystem in Userspace (FUSE) is an interface used to export a filesystem to the Linux kernel. Cloud Storage FUSE allows you to mount Cloud Storage buckets as a file system so that applications can access the objects in a bucket using common file I/O operations (e.g., open, read, write, close) rather than using cloud-specific APIs. Cloud Storage FUSE is generally available.

Google Kubernetes Engine (GKE) offers turn-key integration with Cloud Storage FUSE through the Cloud Storage FUSE CSI driver. The CSI driver lets you use the Kubernetes API to consume pre-existing Cloud Storage buckets as persistent volumes. Your applications can upload and download objects using Cloud Storage FUSE file system semantics. The Cloud Storage FUSE CSI driver is a fully managed experience powered by the open-source Google Cloud Storage FUSE CSI driver. You get portability, reliability, performance, and out-of-the-box GKE integration.

Powering AI workloads with data portability

Cloud Storage is a common choice for AI/ML workloads because of its near-limitless scale, simplicity, affordability, and performance. While some AI/ML frameworks have libraries that support native object-storage APIs directly, others require file-system semantics. Moreover, many teams prefer to standardize on file-system semantics for a consistent experience across environments. With the Cloud Storage FUSE CSI driver, GKE workloads can access Cloud Storage buckets mounted as a local file system using the Kubernetes API, providing data portability across various environments.

Here is an example showing how to access your Cloud Storage objects using common file-system semantics in a Job workload on GKE:

```yaml
apiVersion: batch/v1
kind: Job
metadata:
  name: gcs-fuse-csi-job-example
  namespace: my-namespace
spec:
  template:
    metadata:
      annotations:
        gke-gcsfuse/volumes: "true"
    spec:
      serviceAccountName: my-sa
      containers:
      - name: data-transfer
        image: busybox
        command:
        - "/bin/sh"
        - "-c"
        - touch /data/my-file;
          ls /data | grep my-file;
          echo "Hello World!" >> /data/my-file;
          cat /data/my-file | grep "Hello World!";
        volumeMounts:
        - name: gcs-fuse-csi-ephemeral
          mountPath: /data
      volumes:
      - name: gcs-fuse-csi-ephemeral
        csi:
          driver: gcsfuse.csi.storage.gke.io
          volumeAttributes:
            bucketName: my-bucket-name
      restartPolicy: Never
  backoffLimit: 1
```

You can find more AI/ML workload examples using the CSI driver to consume objects in Cloud Storage buckets:

- Train a model with GPUs on GKE Standard mode
- Serve a model with a single GPU in GKE Autopilot
- TPUs in GKE introduction

With the Cloud Storage FUSE CSI driver, you only need to specify a Cloud Storage bucket and a service account, and GKE takes care of the rest. If you want more control or want to learn more about the underlying design, read on.

Making easy things easy, but hard things possible

You can easily specify the Cloud Storage bucket using in-line CSI ephemeral volumes on your Pod specification, which does not require maintaining separate PersistentVolume (PV) and PersistentVolumeClaim (PVC) objects. If compatibility or authentication requirements make the conventional PV/PVC approach preferable, the CSI driver also supports the standard static provisioning approach. This approach offers ReadWriteMany access mode, enabling multiple applications to consume data using a single PV/PVC.
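The static provisioning approach described above can be sketched as a PersistentVolume and PersistentVolumeClaim pair. This is a minimal illustration, not a definitive manifest: the object names, the `example-storage-class` value, and the `5Gi` capacity are placeholders chosen for this sketch, and the use of `volumeHandle` to carry the bucket name is our understanding of the driver's convention, so check it against the GKE documentation before use.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: gcs-fuse-csi-pv               # hypothetical name
spec:
  accessModes:
  - ReadWriteMany                     # lets multiple Pods share the same bucket
  capacity:
    storage: 5Gi                      # Kubernetes requires a value; treat it as a placeholder
  storageClassName: example-storage-class   # assumed label; only needs to match the PVC
  csi:
    driver: gcsfuse.csi.storage.gke.io
    volumeHandle: my-bucket-name      # assumption: the bucket name goes here
  claimRef:
    name: gcs-fuse-csi-static-pvc
    namespace: my-namespace
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: gcs-fuse-csi-static-pvc       # hypothetical name
  namespace: my-namespace
spec:
  accessModes:
  - ReadWriteMany
  resources:
    requests:
      storage: 5Gi
  storageClassName: example-storage-class
```

A Pod would then reference the claim through a regular `persistentVolumeClaim` volume with `claimName: gcs-fuse-csi-static-pvc`, while keeping the `gke-gcsfuse/volumes: "true"` annotation so that the sidecar is still injected.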

The CSI driver automatically injects a sidecar container into your Pod specification, so you can focus on your application code and don’t have to worry about managing the Cloud Storage FUSE runtime. Additionally, we designed an auto-termination mechanism that terminates the sidecar container when it is no longer needed. This design prevents the sidecar container from blocking the termination of your Kubernetes Job workloads.

When the sidecar container is injected, we set default resource allocation and mount options that work well with most lightweight workloads. We also provide the flexibility for you to fine-tune the mount options and resource allocation for the Cloud Storage FUSE runtime.
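As a rough sketch of what this fine-tuning can look like, the Pod below overrides the sidecar's resource limits through annotations and passes a mount option through the volume attributes. The annotation names (`gke-gcsfuse/cpu-limit`, `gke-gcsfuse/memory-limit`, `gke-gcsfuse/ephemeral-storage-limit`) and the `mountOptions` attribute are given as we recall them from the GKE documentation and should be verified there; the Pod name and the specific values are illustrative only.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: gcs-fuse-csi-tuned-example    # hypothetical name
  namespace: my-namespace
  annotations:
    gke-gcsfuse/volumes: "true"
    # Sidecar resource overrides (values are examples, not recommendations)
    gke-gcsfuse/cpu-limit: "500m"
    gke-gcsfuse/memory-limit: "1Gi"
    gke-gcsfuse/ephemeral-storage-limit: "10Gi"
spec:
  serviceAccountName: my-sa
  containers:
  - name: reader
    image: busybox
    command: ["/bin/sh", "-c", "ls /data"]
    volumeMounts:
    - name: gcs-fuse-csi-ephemeral
      mountPath: /data
  volumes:
  - name: gcs-fuse-csi-ephemeral
    csi:
      driver: gcsfuse.csi.storage.gke.io
      volumeAttributes:
        bucketName: my-bucket-name
        # Mount options forwarded to the Cloud Storage FUSE runtime
        mountOptions: "implicit-dirs"
  restartPolicy: Never
```

Because the sidecar's consumption is charged to the Pod, raising these limits also raises what the scheduler must find room for on a node.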

Tight constraints leading to an innovative design

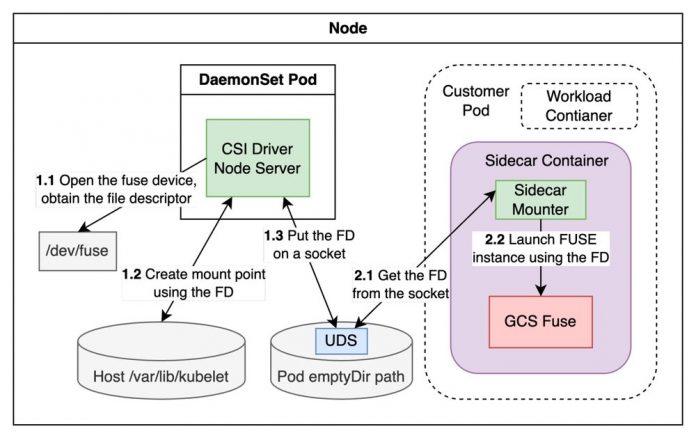

Running FUSE drivers on Kubernetes has several limitations that could hinder adoption. We developed a new sidecar-based solution to overcome these limitations by leveraging a kernel cross-process file descriptor transfer to cleanly separate privileges, authentication, workload lifecycle, and resource usage.

Traditional FUSE CSI drivers run all FUSE instances inside the CSI driver container, which creates a single point of failure. In some cases, FUSE instances run directly on the node’s underlying virtual machine (VM), but this can consume reserved system resources. Our solution is different. Cloud Storage FUSE runs alongside workload containers in a sidecar container. This per-Pod based model ties the Cloud Storage FUSE lifecycle to the lifecycle of the workload. Since Cloud Storage FUSE runs as part of your workload, you can use Workload Identity to authenticate it. This means you have fine-grained, Pod-level IAM access control without having to manually manage your service account credentials. Additionally, the resources consumed by Cloud Storage FUSE are accounted for by the Pod, which makes it possible to fine-tune performance.
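To make the Workload Identity step concrete, here is a minimal sketch of a Kubernetes ServiceAccount linked to a Google service account. It assumes the annotation-based form of Workload Identity; `GSA_NAME` and `PROJECT_ID` are placeholders, and `my-sa` and `my-namespace` simply mirror the names used in the Job example earlier in this post.

```yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: my-sa
  namespace: my-namespace
  annotations:
    # Placeholder identity: substitute your own Google service account
    iam.gke.io/gcp-service-account: GSA_NAME@PROJECT_ID.iam.gserviceaccount.com
```

The annotation alone grants nothing. The Google service account still needs IAM permissions on the bucket, and the Kubernetes ServiceAccount must be allowed to impersonate it (typically through the `roles/iam.workloadIdentityUser` role); both are configured outside this manifest.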

Another major challenge of running FUSE drivers in sidecar containers is that the FUSE runtime requires elevated container privileges. This restriction makes the sidecar-based solution almost impossible for GKE Autopilot, because privileged containers are not permitted on Autopilot clusters. Our solution introduced a two-step FUSE mount technique that allows the FUSE sidecar container to run without privileges. Only the CSI driver container needs to be a privileged container. The following diagram illustrates how the two-step FUSE mount process works.

Conclusion

The Cloud Storage FUSE CSI driver is a fully managed GKE solution that lets you use the Kubernetes API to access pre-existing Cloud Storage buckets in your applications. Its sidecar-based design addresses privilege, authentication, resource allocation, and FUSE lifecycle management issues. See the GKE documentation for details: Access Cloud Storage buckets with the Cloud Storage FUSE CSI driver. Submit issues on the GitHub repository.
