In today’s era of data-driven applications, machine learning and artificial intelligence services such as computer vision have become increasingly important. One such service is the Vision API, which provides powerful image analysis capabilities. In this blog post, we will explore how to create a computer vision application using Spring Boot and Java, so that you can add image recognition and analysis to your projects. The application UI accepts public URLs of images that contain written or printed text, extracts the text, and detects its language. If that language is one of the supported languages, the application generates an English translation of the text.

Spring Boot and Google Cloud

Spring Boot is a powerful open-source framework for creating Spring-based applications. It simplifies development by providing auto-configuration, starter dependencies, and embedded servers. It also offers production-ready features like metrics and health checks. With Spring Boot, you can focus on writing code and deploying efficient applications without worrying about complex configuration or dependencies. Apart from the well-known features that make it an ideal choice for enterprise apps, an exciting new development is the official support for building native images with GraalVM. This enables the creation of standalone native executables that do not need a Java runtime, are leaner, and start up very quickly. Try Spring Native on Google Cloud.

The Spring Cloud GCP library makes it easy for Spring Boot applications to use Google Cloud services. It provides Spring Boot APIs for over a dozen Google Cloud services, so you can take advantage of those services without having to learn separate Google Cloud client libraries. It is also easy to migrate an existing Spring Boot application to Google Cloud or to create a new one: with just one command, you can bootstrap a production-ready Spring Boot project structure and start making code changes for your requirements. Refer to the documentation for a full list of features.

Prerequisites

Before diving into the development process, make sure you have the following prerequisites in place:

A Google Cloud account with a project created and billing enabled

Vision, Cloud Translation, Cloud Run, and Artifact Registry APIs enabled

Cloud Storage API enabled, with a bucket created and images containing text or handwriting in one of the supported languages uploaded (or you can use the sample image links provided in this blog)

Refer to the documentation for steps on how to enable Google Cloud APIs.

Bootstrapping a Spring Boot project

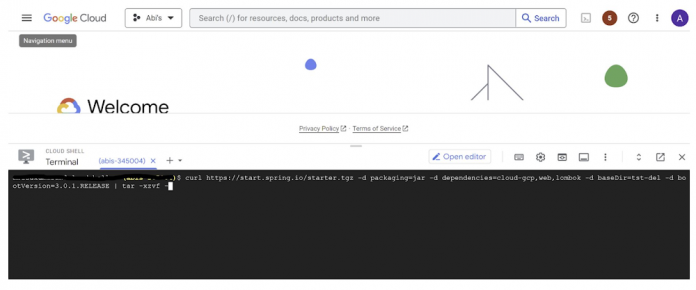

To get started, create a new Spring Boot project using your preferred IDE or Spring Initializr. Include the necessary dependencies, such as Spring Web, Spring Cloud GCP, and Vision AI, in your project’s configuration. Alternatively, you can use Spring Initializr from Cloud Shell to bootstrap your Spring Boot application easily, using the steps below:

1. Open a Cloud Shell terminal and make sure that it points to the correct project and that you are authorized. If it does not point to the right project, set it with gcloud config set project <PROJECT_ID>, replacing <PROJECT_ID> with your project ID.

2. Run the following command to create your Spring Boot project:

spring-vision is the name of your project; change it to suit your requirements.

bootVersion is the version of Spring Boot; update it if required at the time of your implementation.

type is the build tool type of the project; you can change it to gradle if preferred.
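The parameters described above correspond to query parameters of the Spring Initializr service at start.spring.io. As a minimal sketch, assuming the dependency ids cloud-gcp and web and a placeholder Spring Boot version (both are assumptions to verify against start.spring.io), the following Java snippet composes such a download URL. The class and method names are hypothetical.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.stream.Collectors;

// Sketch: compose a Spring Initializr download URL from the parameters above.
class InitializrUrl {

    static URI starterUri(String baseDir, String bootVersion, String type) {
        // LinkedHashMap keeps the parameters in insertion order.
        Map<String, String> params = new LinkedHashMap<>();
        params.put("packaging", "jar");
        params.put("dependencies", "cloud-gcp,web"); // assumed dependency ids
        params.put("baseDir", baseDir);              // project (folder) name
        params.put("type", type);                    // build tool type
        params.put("bootVersion", bootVersion);      // Spring Boot version

        // URL-encode each value and join the pairs into a query string.
        String query = params.entrySet().stream()
                .map(e -> e.getKey() + "="
                        + URLEncoder.encode(e.getValue(), StandardCharsets.UTF_8))
                .collect(Collectors.joining("&"));
        return URI.create("https://start.spring.io/starter.tgz?" + query);
    }

    public static void main(String[] args) {
        // "3.2.0" is an example value only; pass a currently supported version.
        String bootVersion = args.length > 0 ? args[0] : "3.2.0";
        System.out.println(starterUri("spring-vision", bootVersion, "maven-project"));
    }
}
```

The printed URL can be fetched with any HTTP client, and the downloaded archive unpacks into a folder named after baseDir.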

This creates a project structure under “spring-vision” as below:

pom.xml contains all the dependencies for the project (dependencies you configured using this command are already added in your pom.xml).

src/main/java/com/example/demo contains the Java source files (.java).

resources contains the images, XML, text files, and static content that the project uses and that are maintained independently of the code.

application.properties lets you define profile-specific properties of the application.

Configuring the Vision API

Once the Vision API is enabled, you can configure API credentials in your application, for example by using Application Default Credentials for authentication. In this demo implementation, however, I have not implemented the use of credentials.

Implementing the vision and translation services

Create a service class that interacts with the Vision API. Inject the necessary dependencies and use the Vision API client to send image analysis requests. You can implement methods to perform tasks like image labeling, face detection, recognition, and more, based on your application’s requirements. In this demo, we will use handwriting extraction and translation methods. For this, make sure you include the following dependencies in pom.xml:

Clone the following files from the repo and add them to (or use them to replace the existing files in) the respective folders in the project structure:

Application.java (/src/main/java/com/example/demo)

TranslateText.java (/src/main/java/com/example/demo)

VisionController.java (/src/main/java/com/example/demo)

index.html (/src/main/resources/static)

result.html (/src/main/resources/templates)

pom.xml

The method extractTextFromImage in the class org.springframework.cloud.gcp.vision.CloudVisionTemplate lets you extract text from your image input. The method getTranslatedText from the com.google.cloud.translate.v3 package lets you pass the text extracted from your image and get the translated text in the desired target language as the response (if the source language is one of the supported languages).
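The flow described above (extract the text, detect its language, translate only when the language is supported) can be sketched without any framework. In the sketch below, every name is a hypothetical stand-in rather than a class from the repo: the two functions and the Translator interface take the place of the Vision and Cloud Translation calls, and returning the untranslated text for an unsupported language is an assumption about the fallback behavior.

```java
import java.util.Set;
import java.util.function.Function;

// Framework-free sketch of the extract -> detect -> translate flow.
// All names are hypothetical stand-ins, not the classes from the repo.
class ImageTextPipeline {

    /** Stand-in for the translation call (Cloud Translation in the real app). */
    interface Translator {
        String toEnglish(String text, String sourceLanguage);
    }

    private final Function<String, String> textExtractor;    // image URL -> text
    private final Function<String, String> languageDetector; // text -> language code
    private final Translator translator;
    private final Set<String> supportedLanguages;

    ImageTextPipeline(Function<String, String> textExtractor,
                      Function<String, String> languageDetector,
                      Translator translator,
                      Set<String> supportedLanguages) {
        this.textExtractor = textExtractor;
        this.languageDetector = languageDetector;
        this.translator = translator;
        this.supportedLanguages = supportedLanguages;
    }

    String process(String imageUrl) {
        String text = textExtractor.apply(imageUrl);
        if (text == null || text.isBlank()) {
            return ""; // nothing was extracted from the image
        }
        String language = languageDetector.apply(text);
        if (!supportedLanguages.contains(language)) {
            return text; // assumption: fall back to the untranslated text
        }
        return translator.toEnglish(text, language);
    }

    public static void main(String[] args) {
        // Stubs replace the real services so the flow can run locally.
        ImageTextPipeline pipeline = new ImageTextPipeline(
                url -> "hola mundo",
                text -> "es",
                (text, lang) -> "hello world",
                Set.of("es", "ta"));
        // prints "hello world"
        System.out.println(pipeline.process("https://example.com/sample.png"));
    }
}
```

Keeping the service calls behind small interfaces like these also makes the flow easy to unit test, because the stubs can be swapped for the real clients later.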

Building the REST API

Design and implement the REST endpoints that will expose the Vision API functionalities. Create controllers that handle incoming requests and utilize the Vision API service to process the images and return the analysis results.

In this demo, our VisionController class implements the endpoint, handles the incoming request, invokes the Vision API and Cloud Translation services and returns the result to the view layer. Implementation of the GET method for the REST endpoint is as follows:

The TranslateText class in the above implementation has the method that invokes the Cloud Translation service:

With the VisionController class, the GET method for the REST endpoint is now implemented.

Integrating Thymeleaf for frontend development

When building an application with Spring Boot, one popular choice for frontend development is Thymeleaf, a server-side Java template engine that lets you integrate dynamic content into your HTML pages. With Thymeleaf, you create HTML templates with embedded server-side expressions. These expressions dynamically render data from your Spring Boot backend, making it easier to display the results of image analysis performed by the Vision API service.

To get started, ensure that you have the necessary dependencies for Thymeleaf in your Spring Boot project. You can include the Thymeleaf Starter dependency in your pom.xml:

In your controller method, retrieve the analysis result from the Vision API service and add it to the model. The model represents the data that will be used by Thymeleaf to render the HTML template. Once the model is populated, return the name of the Thymeleaf template that you want to render. Thymeleaf will take care of processing the template, substituting the server-side expressions with the actual data, and generating the final HTML that will be sent to the client’s browser. Example:

In the case of the extractText method in VisionController, we return the result as a String rather than adding it to the model. The GET method extractText is invoked from index.html when the page is submitted.

With Thymeleaf, you can create a seamless user experience in which users submit image URLs, trigger Vision API analyses, and view the results in real time.

Deploying your Spring Boot application with Cloud Run

Write unit tests for your service and controller classes under the /src/test/java/com/example folder to ensure proper functionality. Once you’re confident in the application’s stability, package it into a deployable artifact, such as a JAR file, and deploy it to Cloud Run, a serverless compute platform on Google Cloud. In this step, we will focus on deploying your containerized Spring Boot application using Cloud Run.

a. Package your application by executing the following steps from Cloud Shell (make sure the terminal is at the project root folder):

Build:

Once the build is successful, run locally to test:

b. Containerize your Spring Boot Application with Jib:

Instead of manually creating a Dockerfile and building the container image, you can use the Jib utility to simplify the containerization process. Jib is a plugin that integrates directly with your build tool (such as Maven or Gradle) and allows you to build optimized container images without writing a Dockerfile. Before proceeding, you need to enable the Artifact Registry API (using Artifact Registry is encouraged over Container Registry). Then run Jib to build a Docker image and publish it to the registry:

Note: In this experiment, we did not configure the Jib Maven plugin in pom.xml, but for advanced usage, it is possible to add it to pom.xml with more configuration options.

c. Deploy the container (that we pushed to Artifact Registry in the previous step) to Cloud Run. This is again a one-command step:

Alternatively, you can do this from the UI. Navigate to the Google Cloud Console and locate the Cloud Run service. Click on “Create Service” and follow the on-screen instructions. Specify the container image you previously pushed to the registry, configure the desired deployment settings (such as CPU allocation and autoscaling), and choose the appropriate region for deployment. You can also set environment variables specific to your application. These variables can include authentication credentials (API keys etc.), database connection strings, or any other configuration needed for your Vision AI application to function correctly. When the deployment completes successfully, you should get an endpoint for your application.

For our demo, the endpoint Cloud Run created for us is: https://vision-app-********-uc.a.run.app

Playing with your Vision AI app

For demo purposes, you can use the image URL below for your app to read and translate:

https://storage.googleapis.com/img_public_test/tamilwriting1.jfif
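Besides using the application UI, you could call the deployed service from code. The sketch below builds a GET request that passes the image URL as a URL-encoded query parameter and sends it with the JDK HTTP client. The path /extractText and the parameter name uri are assumptions made for illustration; check VisionController.java and index.html in the repo for the actual mapping. The Cloud Run endpoint is taken from the command line.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;

// Sketch: call the deployed app with an image URL as a query parameter.
class VisionAppRequest {

    // Hypothetical values; verify them against the controller mapping in the repo.
    static final String PATH = "/extractText";
    static final String PARAM = "uri";

    static URI requestUri(String serviceBaseUrl, String imageUrl) {
        // Drop a trailing slash so the path is not doubled.
        String base = serviceBaseUrl.endsWith("/")
                ? serviceBaseUrl.substring(0, serviceBaseUrl.length() - 1)
                : serviceBaseUrl;
        // The image URL must be encoded because it contains ':' and '/'.
        String encoded = URLEncoder.encode(imageUrl, StandardCharsets.UTF_8);
        return URI.create(base + PATH + "?" + PARAM + "=" + encoded);
    }

    public static void main(String[] args) throws Exception {
        if (args.length < 1) {
            System.out.println("Usage: VisionAppRequest <cloud-run-endpoint>");
            return;
        }
        URI uri = requestUri(args[0],
                "https://storage.googleapis.com/img_public_test/tamilwriting1.jfif");

        HttpClient client = HttpClient.newHttpClient();
        HttpRequest request = HttpRequest.newBuilder(uri).GET().build();
        HttpResponse<String> response =
                client.send(request, HttpResponse.BodyHandlers.ofString());

        System.out.println(response.statusCode());
        System.out.println(response.body());
    }
}
```

Run it with your own Cloud Run endpoint as the only argument.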

Conclusion

Congratulations! You have successfully created a Vision AI application using Spring Boot and Java. With the power of Vision AI, your application can now perform sophisticated image analysis, including labeling, face detection, and more. The integration of Spring Boot provides a solid foundation for building scalable and robust cloud-native applications on Google Cloud. Continue exploring the capabilities of Vision AI, Cloud Run, Cloud Translation, and more to enhance your application with additional features and functionality. To learn more, check out the Vision API, Cloud Translation, and GCP Spring docs. Try out the same experiment with the Spring Native option! Also, as a sneak peek into the world of generative AI, check out how this API shows up in Model Garden.
