In this post, we discuss how you can build an Internet of Things (IoT) sensor network solution to process IoT sensor data through AWS IoT Core and store it with Amazon DocumentDB (with MongoDB compatibility).

An IoT sensor network consists of multiple sensors and other devices, such as RFID readers, made by various manufacturers. These devices generate JSON data with diverse attributes that must be stored in a database for analysis. Because the schema is dynamic and can evolve over time, you should use a database that supports a flexible data model to simplify development and save costs. Amazon DocumentDB is a good candidate for this use case because it supports a flexible schema and is designed to manage JSON workloads at scale.

Amazon DocumentDB is a fast, scalable, highly available, and fully managed document database service that supports MongoDB workloads. As a document database, Amazon DocumentDB makes it easy to store, index, and query JSON data.
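To illustrate what "diverse attributes" can look like in practice, the following is a minimal Python sketch of two readings from different sensor types. The device IDs and field names are hypothetical and chosen only for illustration; they are not taken from any specific device.

```python
import json

# Hypothetical readings from two different sensor types. The attribute sets
# differ, which is what a flexible document model is meant to accommodate.
soil_reading = {"deviceId": "soil-01", "type": "soil", "moisturePct": 31.5, "depthCm": 10}
weather_reading = {
    "deviceId": "wx-07",
    "type": "weather",
    "temperatureC": 22.4,
    "wind": {"speedKph": 12.0, "direction": "NW"},
}


def to_messages(readings):
    """Serialize each reading to a JSON string, as a device might publish it."""
    return [json.dumps(reading, sort_keys=True) for reading in readings]


def attribute_union(readings):
    """Collect every top-level attribute name seen across the readings."""
    return sorted({key for reading in readings for key in reading})


messages = to_messages([soil_reading, weather_reading])
print(attribute_union([soil_reading, weather_reading]))
```

Both documents could be stored in the same collection without declaring a schema up front, and new attributes can appear later without a migration.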

AWS IoT Core is a managed cloud platform that enables connected devices to interact easily and securely with cloud applications and other devices. AWS IoT Core can support billions of devices and handle trillions of messages. It can also process and route these messages reliably and securely to AWS endpoints and other devices.

You can build an efficient IoT sensor network using Amazon DocumentDB and AWS IoT Core to address a variety of challenges, such as the following:

Vehicle manufacturers can collect information about vehicle health to identify and notify the driver of any service required

Insurance companies can collect driving performance data to provide cost-effective policies for their customers who follow safe driving practices

Smart homemakers can enhance safety and increase energy efficiency by using cameras and thermostats to detect human activity

Farmers can achieve better yields at lower cost by deploying soil moisture sensors to irrigate more efficiently

Solution overview

At a high level, this solution comprises three main processes:

Data collection – IoT sensors and devices capture the information and send that information to the AWS Cloud. These devices can collect different information depending upon the type and send that at different frequencies.

Data ingestion and storage – AWS IoT Core ingests the information coming from these devices and stores it in a data store like Amazon DocumentDB.

Data processing – Different applications use the stored data for processing, such as creating dashboards and visualizations, performing real-time analytics, and performing event processing and reporting. The data can also be sent to downstream systems for integration. Advanced analytics can be performed using machine learning to generate prediction and anomaly detection models so that corrective action can be taken.

For this post, we show how to build a smart farm (IoT sensor network for agriculture) that enables farmers to optimize crop yields and minimize environmental impact by enabling them to act based on the analysis of real-time data gathered by a network of IoT sensors on weather, soil, and crops.

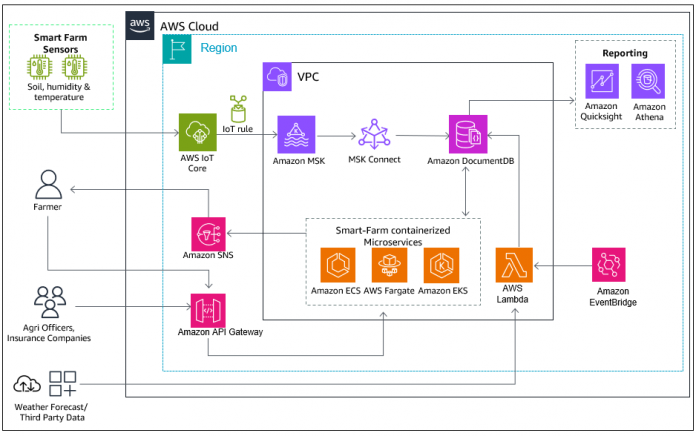

The following is a reference diagram for the complete solution. Sensors installed at the smart farm send the data securely to AWS IoT Core. An AWS IoT rule is triggered based on a predefined condition and sends the data to Amazon Managed Streaming for Apache Kafka (Amazon MSK). A Kafka connector reads the data from Amazon MSK and stores it in Amazon DocumentDB. The smart farm's containerized microservices process the sensor and third-party data received and generate recommendations for farmers.

In this post, we focus on how to move the IoT data to Amazon DocumentDB using AWS IoT Core, Amazon MSK, and a Kafka connector on MSK Connect.

Prerequisites

To follow along with this post, you need the following resources:

An Amazon Elastic Compute Cloud (Amazon EC2) instance

IoT sensors (we use a virtual device in place of an actual sensor)

An IoT rule to send data from AWS IoT Core to Amazon MSK

An Amazon DocumentDB cluster

An MSK cluster

The MongoDB Kafka connector on MSK Connect

Complete the steps in this section to create these resources.

Configure an EC2 instance

You can choose an existing EC2 instance or create a new one. You use this EC2 instance for running the virtual device and connecting to Amazon DocumentDB. Complete the following steps to configure the instance:

Make sure the instance is in the same VPC as your Amazon DocumentDB cluster and MSK cluster and shares their security group. If you deploy these services in separate security groups, adjust the inbound rules accordingly.

Configure the EC2 instance security group's inbound rules to allow traffic to and from the MSK cluster (ports 9096 and 9098) and the Amazon DocumentDB cluster (port 27017).

Install the mongo shell:

Install Java and Git on the EC2 instance:

Configure an IoT sensor

AWS IoT Core provides a registry that helps you manage things, which are a representation of a specific device or logical entity. It can be a physical device or sensor (for example, a light bulb or a switch on a wall). It can also be a logical entity like an instance of an application or a physical entity that doesn’t connect to AWS IoT Core.

For this post, you create a virtual device to run from the EC2 instance. It will send the data to AWS IoT Core that is then stored in Amazon DocumentDB.

Complete the following steps:

On the AWS IoT Core console, choose Connect one device.

In the Prepare your device section, no action is required; choose Next.

In the Register and secure your device section, choose Create a new thing.

For Thing name, enter the name for your thing and choose Next.

In the Choose platform and SDK section, choose the platform as Linux/MacOS and the language of the AWS IoT Device SDK as Node.js, then choose Next.

In the Download connection kit section, choose Download connection kit.

Upload the connection kit to the EC2 instance.

Configure an MSK cluster

You can use an existing MSK cluster or create a new MSK provisioned cluster. If you create a new cluster, follow Steps 1–3 as documented in How to integrate AWS IoT Core with Amazon MSK.

The cluster should be deployed in the same VPC as your Amazon DocumentDB cluster and share the same security group used for Amazon DocumentDB. As part of those steps, make sure you set up SASL/SCRAM authentication in the security settings of your MSK cluster, create credentials in AWS Secrets Manager encrypted with a customer managed KMS key, and attach the credentials to your MSK cluster.

Configure IAM Access control for MSK cluster

AWS IoT Core rules support SASL/SCRAM authentication and MSK Connect supports IAM authentication.

The MSK cluster security settings should be updated with both SASL/SCRAM authentication and AWS Identity and Access Management (IAM) access control.

On the Amazon MSK console, select your MSK cluster.

On the Actions menu, choose Edit security settings and select IAM role-based authentication.

Choose Save changes.

It takes approximately 10–15 minutes for the security settings to be updated.

Create an AWS IoT rule to send data from AWS IoT Core to Amazon MSK

Before you create your AWS IoT rule, you need to set up the necessary IAM resources and VPC configuration. Complete the following steps:

Create an IAM policy:

On the IAM console, choose Policies in the navigation pane.

Choose Create policy.

Create a customer managed policy called IoTCorePolicy using the following document:
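A minimal sketch of such a policy document follows, built in Python so it can be printed and pasted into the JSON policy editor. The action list is an assumption: it covers the EC2 network interface actions that AWS IoT Core needs for a VPC destination and the Secrets Manager actions needed to read the SASL/SCRAM credentials. The wildcard resource is used only to keep the sketch short; scope it down for production use.

```python
import json


def build_iot_core_policy():
    """Return a policy document for the role that AWS IoT Core assumes.

    Assumption: these actions are sufficient for a VPC destination plus
    reading SASL/SCRAM credentials from AWS Secrets Manager.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "ec2:CreateNetworkInterface",
                    "ec2:DescribeNetworkInterfaces",
                    "ec2:CreateNetworkInterfacePermission",
                    "ec2:DeleteNetworkInterface",
                    "ec2:DescribeSubnets",
                    "ec2:DescribeVpcs",
                    "ec2:DescribeVpcAttribute",
                    "ec2:DescribeSecurityGroups",
                    "secretsmanager:GetSecretValue",
                    "secretsmanager:DescribeSecret",
                ],
                # Wildcard for brevity only; restrict to specific ARNs in production.
                "Resource": "*",
            }
        ],
    }


print(json.dumps(build_iot_core_policy(), indent=2))
```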

Create an IAM role called IOTCoreRole with the IoTCorePolicy policy attached:

On the IAM console, choose Roles in the navigation pane.

Choose Create role.

For Select trusted entity, select Custom trust policy.

Add a trust policy that allows the AWS IoT service (iot.amazonaws.com) to assume the role.

Choose Next.

On the Add permissions page, select IoTCorePolicy.

For Role name, enter IOTCoreRole.

Choose Create role.
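The custom trust policy for IOTCoreRole can be sketched as follows. This is a minimal example that assumes the only principal that needs to assume the role is the AWS IoT service; the function name is illustrative.

```python
import json


def build_iot_trust_policy():
    """Return a trust policy that lets the AWS IoT service assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "iot.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


print(json.dumps(build_iot_trust_policy(), indent=2))
```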

Add IAM role IOTCoreRole access to the AWS Key Management Service (AWS KMS) key that you created during MSK cluster creation for SASL/SCRAM authentication:

On the AWS KMS console, navigate to the KMS key you created earlier.

For Key users, add the IAM role IOTCoreRole.

Create the VPC destination for the AWS IoT rule:

On the AWS IoT Core console, under Message routing in the navigation pane, choose Destinations.

Choose Create destination and select Create VPC destination.

Select the VPC and subnets that are used for your MSK cluster.

Select the security group that is used for your MSK cluster.

Select the role IOTCoreRole created in the previous step.

Choose Create.

Now you’re ready to create your AWS IoT rule.

Create the AWS IoT rule:

On the AWS IoT Core console, under Message routing in the navigation pane, choose Rules, then choose Create rule.

In the Rules properties section, add the rule name.

In the Configure SQL statement section, enter the SQL statement SELECT * FROM 'sdk/test/js', then choose Next.

In the Attach rule action section, choose the rule action Apache Kafka cluster.

Provide the VPC destination that you created earlier.

Specify the Kafka topic as iottopic.

Specify the SASL/SCRAM bootstrap servers of your MSK cluster. You can view the bootstrap server URLs in the client information of your MSK cluster console.

Specify SASL_SSL as security.protocol and SCRAM-SHA-512 as sasl.mechanism.

Specify the following variables for sasl.scram.username and sasl.scram.password, replacing the secret name and role name with the ones you created earlier:

Accept the remaining default values, choose Next, and then choose Create.
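The Kafka client properties entered in the rule action can be sketched as follows. The sketch assumes the get_secret substitution template that AWS IoT rules use to read a key from a Secrets Manager secret; the secret name, account ID, and broker host shown in the usage example are hypothetical placeholders.

```python
def secret_template(secret_name, key, role_arn):
    """Build a get_secret substitution template for a SecretString key."""
    return f"${{get_secret('{secret_name}', 'SecretString', '{key}', '{role_arn}')}}"


def build_kafka_action_properties(bootstrap_servers, secret_name, role_arn):
    """Assemble the client properties for the Apache Kafka rule action."""
    return {
        "bootstrap.servers": bootstrap_servers,
        "security.protocol": "SASL_SSL",
        "sasl.mechanism": "SCRAM-SHA-512",
        "sasl.scram.username": secret_template(secret_name, "username", role_arn),
        "sasl.scram.password": secret_template(secret_name, "password", role_arn),
    }


# Hypothetical values for illustration only.
properties = build_kafka_action_properties(
    "b-1.example.kafka.us-east-1.amazonaws.com:9096",
    "AmazonMSK_example",
    "arn:aws:iam::123456789012:role/IOTCoreRole",
)
for name, value in properties.items():
    print(f"{name} = {value}")
```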

Create an Amazon DocumentDB cluster

You can use an existing instance-based cluster or create a new Amazon DocumentDB instance-based cluster. You can also use Amazon DocumentDB elastic clusters, which scale elastically to handle millions of reads and writes per second with petabytes of storage.

Configure the MongoDB Kafka connector on MSK Connect

You need the MongoDB Kafka connector to move the data from the MSK topic to Amazon DocumentDB. You can configure the MongoDB Kafka connector on MSK Connect by following the sink use case in Stream data with Amazon DocumentDB, Amazon MSK Serverless, and Amazon MSK Connect.
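As a rough guide to what the sink connector configuration contains, the following Python sketch assembles a small set of MongoDB Kafka sink connector properties. The topic, database, and collection names match the ones used in this post; the connection URI is a placeholder you must supply, and a real deployment on MSK Connect typically needs additional settings (for example, TLS trust store and secret handling) that are omitted here.

```python
def build_sink_connector_config(
    connection_uri,
    topic="iottopic",
    database="sinkdatabase",
    collection="sinkcollection",
):
    """Return a minimal MongoDB Kafka sink connector configuration."""
    return {
        "connector.class": "com.mongodb.kafka.connect.MongoSinkConnector",
        "tasks.max": "1",
        "topics": topic,
        "connection.uri": connection_uri,
        "database": database,
        "collection": collection,
        "key.converter": "org.apache.kafka.connect.storage.StringConverter",
        # The IoT messages are schemaless JSON, so schemas are disabled.
        "value.converter": "org.apache.kafka.connect.json.JsonConverter",
        "value.converter.schemas.enable": "false",
    }


def to_properties(config):
    """Render the configuration as key=value lines."""
    return "\n".join(f"{key}={value}" for key, value in config.items())


# Placeholder URI for illustration only.
print(to_properties(build_sink_connector_config("mongodb://<user>:<password>@<host>:27017")))
```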

Now that you have created all the prerequisites, you can implement the solution as detailed in the following sections.

Create a Kafka topic

To create a Kafka topic, complete the following steps:

Log in to your EC2 instance and download the binary distribution of Apache Kafka and extract the archive in local_kafka:

In the ~/local_kafka/kafka/config/ directory, create a JAAS configuration file that contains the user credentials stored in your secret. For example, create a file called users_jaas.conf with the following content:

In the ~/local_kafka/kafka/config/ directory, create a client_sasl.properties file to configure a Kafka client to use SASL for connection:

The kafka_iam_truststore.jks file was created in a previous step.

Define the BOOTSTRAP_SERVERS environment variable to store the SASL/SCRAM bootstrap servers of the MSK cluster, set KAFKA_OPTS to point to your JAAS configuration file, and add the local Kafka installation to the PATH environment variable. You can view the bootstrap server URLs in the client information of your MSK cluster console.

Create the Kafka topic iottopic, which you defined in the AWS IoT rule:
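The files and command described in the preceding steps can be sketched in Python as follows. The sketch renders the JAAS file content, the client properties, and the argument list for kafka-topics.sh without running anything. The credentials, truststore path, broker address, partition count, and replication factor are hypothetical placeholders; use the values from your own secret and cluster.

```python
def render_jaas(username, password):
    """Render users_jaas.conf content for SASL/SCRAM authentication."""
    return (
        "KafkaClient {\n"
        "   org.apache.kafka.common.security.scram.ScramLoginModule required\n"
        f'   username="{username}"\n'
        f'   password="{password}";\n'
        "};\n"
    )


def render_client_properties(truststore_path):
    """Render client_sasl.properties content for a SASL/SCRAM Kafka client."""
    return (
        "security.protocol=SASL_SSL\n"
        "sasl.mechanism=SCRAM-SHA-512\n"
        f"ssl.truststore.location={truststore_path}\n"
    )


def create_topic_command(bootstrap_servers, config_path, topic="iottopic",
                         partitions=3, replication_factor=2):
    """Build the kafka-topics.sh argument list that creates the topic."""
    return [
        "kafka-topics.sh", "--create",
        "--bootstrap-server", bootstrap_servers,
        "--command-config", config_path,
        "--topic", topic,
        "--partitions", str(partitions),
        "--replication-factor", str(replication_factor),
    ]


# Hypothetical values for illustration only.
print(render_jaas("example-user", "example-password"))
print(render_client_properties("/home/ec2-user/kafka_iam_truststore.jks"))
print(" ".join(create_topic_command("b-1.example:9096", "client_sasl.properties")))
```

KAFKA_OPTS would then be set to -Djava.security.auth.login.config= followed by the path to users_jaas.conf, so that the Kafka command line tools pick up the credentials.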

Run the IoT thing on Amazon EC2

To run your IoT device, complete the following steps:

Connect to your EC2 instance.

Upload the IoT thing connection kit if you haven’t done so already.

Unzip the connection kit:

Install Node.js on Amazon EC2:

Run the start.sh script, which on first run will install all the required packages and send 10 messages to AWS IoT Core:

You can also send a customized message and increase the iteration using the following command:

You can retrieve the endpoint name from the start.sh file.

You can also get the endpoint name from the Settings page of the AWS IoT Core console.
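When sending customized messages, it can help to generate the message text programmatically. The following Python sketch builds a compact JSON payload for a hypothetical soil sensor; the field names and device ID are assumptions for illustration, and how the text is wrapped on publish depends on the sample application in your connection kit.

```python
import json
from datetime import datetime, timezone


def build_sensor_message(device_id, moisture_pct, temperature_c, when=None):
    """Return a compact JSON string describing one soil sensor reading."""
    when = when or datetime.now(timezone.utc)
    payload = {
        "deviceId": device_id,
        "moisturePct": moisture_pct,
        "temperatureC": temperature_c,
        "timestamp": when.isoformat(),
    }
    # Compact separators keep the text short when passed on a command line.
    return json.dumps(payload, separators=(",", ":"))


print(build_sensor_message("soil-01", 31.5, 18.2))
```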

Access the data on Amazon DocumentDB

To verify the data, connect to the Amazon DocumentDB cluster using the mongo shell. The JSON documents should be in the sinkcollection collection of the sinkdatabase database.

Open a second terminal on the EC2 instance.

Connect to the Amazon DocumentDB cluster using the mongo shell:

Run the following command to retrieve the documents pushed by the IoT thing:
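As an alternative to the mongo shell, the same check can be sketched in Python. This example assumes the pymongo driver is installed on the EC2 instance and that the Amazon RDS CA bundle has been downloaded as global-bundle.pem; the host name and credentials are placeholders. The pymongo import is deferred so the URI helper can be used on its own.

```python
from urllib.parse import quote_plus


def build_docdb_uri(user, password, host, port=27017, ca_file="global-bundle.pem"):
    """Build a TLS connection string for an Amazon DocumentDB cluster."""
    return (
        f"mongodb://{quote_plus(user)}:{quote_plus(password)}@{host}:{port}/"
        f"?tls=true&tlsCAFile={ca_file}&replicaSet=rs0"
        "&readPreference=secondaryPreferred&retryWrites=false"
    )


def fetch_documents(uri, limit=10):
    """Return up to `limit` documents from sinkdatabase.sinkcollection."""
    from pymongo import MongoClient  # assumption: pymongo is installed

    client = MongoClient(uri)
    try:
        collection = client["sinkdatabase"]["sinkcollection"]
        return list(collection.find().limit(limit))
    finally:
        client.close()


# Placeholder values for illustration only.
print(build_docdb_uri("docdbuser", "example-password", "<cluster-endpoint>"))
```

Calling fetch_documents with the resulting URI from the EC2 instance should return the messages that the connector wrote to Amazon DocumentDB.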

Clean up

To clean up the resources you used in your account, delete them in the following order:

EC2 instance

AWS IoT rule

IAM role and customer managed policy

MSK Kafka cluster and Kafka connector

Amazon DocumentDB cluster

AWS IoT thing

AWS IoT Core VPC destination

Summary

In this post, we discussed how to build an IoT sensor network solution that processes IoT sensor data through AWS IoT Core and stores it in Amazon DocumentDB, using a smart farming use case.

Visit Get Started with Amazon DocumentDB to begin using Amazon DocumentDB.

About the Authors

Kaarthiik Thota is a Senior DocumentDB Specialist Solutions Architect at AWS based out of London. He is passionate about database technologies and enjoys helping customers solve problems and modernize applications leveraging NoSQL databases. Before joining AWS, he worked extensively with relational databases, NoSQL databases, and Business Intelligence technologies for more than 14 years.

Anshu Vajpayee is a Senior DocumentDB Specialist Solutions Architect at Amazon Web Services (AWS). He has been helping customers to adopt NoSQL databases and modernize applications leveraging Amazon DocumentDB. Before joining AWS, he worked extensively with relational and NoSQL databases.
