You can now use AWS Schema Conversion Tool (AWS SCT) to streamline a migration project from Db2 for z/OS (v12) to Amazon Relation Database Services (Amazon RDS). The list of supported targets for this source in AWS SCT is:

Amazon Relational Database Service (Amazon RDS) for MySQL

Amazon Aurora MySQL-Compatible Edition

Amazon Relational Database Service (Amazon RDS) for PostgreSQL

Amazon Aurora PostgreSQL-Compatible Edition

In this post, we discuss AWS SCT’s support for DB2 for z/OS as a source and its key features to convert schemas and program code from the mainframe database to database services in AWS.

AWS SCT is a tool to simplify migration from proprietary databases engines such as Oracle or Cassandra to database services in AWS. AWS SCT generates program code applicable to a target system based on the source database metadata, database executable code, and predetermined rules. For example, you can use AWS SCT to convert database schema and code objects from an Oracle system to a PostgreSQL system. This helps reduce the time and cost of a database migration, and makes heterogeneous database migrations predictable.

There are four main problems to be solved when migrating a database:

Migration of database objects used to organize and store data (data types, tables, constraints, indexes, sequences)

Migration of the database executable code used to access or manipulate data (triggers, views, procedures, functions)

Migration of the data itself

Testing the logic and performance in the new target database.

These problems are only related to database migration and do not include environment or connection problems.

AWS SCT solves the first two problems: migration of database objects and migration of database executable code. Post conversion and data migration can be done using AWS Database Migration Service (AWS DMS).

You can now use Db2 for z/OS (version 12) as a source starting with AWS SCT version 661 and AWS DMS version 3.4.7.

You can use the AWS Schema Conversion Tool User Guide to familiarize yourself with the terminology and definitions in this post. For more details about supported source and target systems in AWS DMS, refer to Sources for data migration.

Start using Db2 for z/OS as a source engine

To download the latest version of AWS SCT, refer to Installing, verifying, and updating AWS SCT.

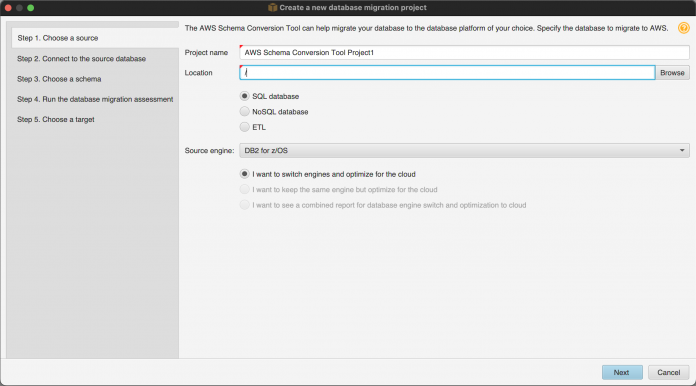

You can create a project in the AWS SCT by using the new project wizard. Select Db2 for z/OS as your source engine.

Create a new database migration project

You can also add Db2 for z/OS as a source to an existing project. Choose Db2 for z/OS on the Add source page and connect to your source system.

Add source

For details about the AWS SCT interface, refer to Using the AWS SCT user interface.

Once the Db2 for z/OS connection type is selected, enter the connection properties needed to connect to the database server. AWS SCT uses a JDBC driver to connect to the Db2 for z/OS database.

Connection properties

To ensure a secure connection, AWS SCT supports the Secure Sockets Layer (SSL) protocol for Db2 for z/OS. For detailed information about connection configuration, refer to Using IBM Db2 for z/OS as a source for AWS SCT.

Migrate database metadata and program code with AWS SCT

The following is a brief overview of how AWS SCT converts metadata and source code from a database running on Db2 for z/OS to Amazon RDS for PostgreSQL or Amazon Aurora PostgreSQL.

Data domains (data types)

The RDBMS are based on data domains (data types). They’re important because they describe a range of values that an element of the domain may contain. Each database supports its own set of data types. Most databases support commonly used types, but a lot of data types in the RDBMSs are vendor dependent and have their own features as well.

AWS SCT supports conversion of the following data types for Db2 for z/OS to Amazon RDS for PostgreSQL, Amazon Aurora PostgreSQL, Amazon RDS for MySQL, and Amazon Aurora MySQL:

NULLs

Numbers

Character strings

Date and time

Graphic strings

Binary strings

Large objects

Row IDs

XMLs

User-defined types (UDTs)

Distinct

Array

The conversion rules assume usage of the most appropriate domains (types) of the target system. If there is no corresponding type or if the type has a smaller range of values than the original one, an informational message (action item) is generated.

For example, the TIMESTAMP(p) WITHOUT TIME ZONE data type in Db2 for z/OS may contain values from 0–12 digits for the second’s fraction. Amazon RDS for PostgreSQL supports the same data type but contains only six digits for the second’s fractions. Because of this, TIMESTAMP(12) WITHOUT TIME ZONE converts into TIMESTAMP(6) WITHOUT TIME ZONE and an alert informs you about the target system limits (via message AI8505).

The migration rules for table columns are a useful AWS SCT feature. This feature allows you to modify the conversion for a specific column or set of columns from the default conversion. AWS SCT supports this feature in 663 version for Db2 for z/OS also and allows you to make necessary changes in the default conversion rules.

For more details about this feature, refer to Creating migration rules in AWS SCT.

Relations (tables)

Another fundamental entity of any RDBMS is relations or tables. AWS SCT supports conversion of the following types of Db2 for z/OS tables:

Base tables

Materialized query tables

Global temporary tables

Temporary tables

Auxiliary tables

XML tables

Partitioned tables (range partition)

The main purpose of table conversion is similar for a data domain. It’s an opportunity to have similar relations in both source and target systems. The AWS SCT conversion considers architectural characteristics and features of the target system and uses suitable entities to represent the source tables. For instance, Amazon RDS for PostgreSQL and Amazon Aurora PostgreSQL don’t use special table spaces for some of the table types. Therefore, conversion of an XML table creates a basic table that contains a column of XML type in PostgreSQL.

Based on customer feedback, special rules for table automatic partitioning were added for migrations from Db2 for z/OS to Amazon RDS for PostgreSQL and Amazon Aurora PostgreSQL targets. AWS SCT creates partitioned tables in the target based on the source table size and the conversion settings. These rules allow you to improve performance in the target system by using partitioned tables in place of non-partitioned tables from the source system. Additional conversion settings set the conditions like table size, count of partition and so on under which the target tables are created as partitioned.

Conversion settings

Additionally, AWS SCT supports the specification of collation rules for table columns as part of the conversion. Using this feature, you can specify the character classification and the data sort order for appropriate tables and columns after a migration to Amazon RDS for PostgreSQL and Amazon Aurora PostgreSQL.

Transformation rules

For more details, refer to Creating migration rules in AWS SCT.

Constraints and indexes

Data consistency and database performance are two of the main reasons to use an RDBMS. Constraints and indexes are used to strengthen the integrity of data and increase database performance. AWS SCT supports conversion of these objects to enable data access and referential integrity in the target system.

The following types of constraints are supported:

Check constraints

Foreign keys

Unique constraints

Primary keys

Taking into account Amazon RDS for PostgreSQL and Amazon Aurora PostgreSQL particulars, AWS SCT detects possible name duplication or collision and generates unique names in case it’s required.

The following index types are supported:

Secondary index

Unique index

Unique index where not null

Index on expression

The B-tree index is used as a default index type in the Amazon RDS for PostgreSQL and Aurora PostgreSQL similar to the Db2 for z/OS due to its widely known advantages, such as balance, ordering, and the possibility to be built on any data type to which comparison operators are applicable.

Depending on the definition of a unique index, Db2 for z/OS can store one or more NULL values in the table columns used in the index keys. If the unique index was created using the WHERE NOT NULL option, then there are no limits on the number of NULL values in the table columns. Otherwise, if the unique index was created without the WHERE NOT NULL option only one NULL value is allowed.

The unique indexes in the Amazon RDS for PostgreSQL and Amazon Aurora PostgreSQL don’t consider NULL values similar to Db2 for z/OS unique indexes with the WHERE NOT NULL option.

AWS SCT creates two indexes for source indexes created without the WHERE NOT NULL clause. The first is used to provide index functionality and limits significant values, and the second limits the number of NULL values.

The following screens show two indexes created as a result of the UNQ_EG_TBL_UNQ_IDX_F1 index conversion.

UNQ_EG_TBL_UNQ_IDX_F1 index conversion

UNQ_EG_TBL_UNQ_IDX_F18 index limits the number of NULL values

Views

Views are commonly used database objects. A view is a named query (usually complex) that is stored in the database. From the AWS SCT perspective, a view is an object that contains executable code known as the parsable part. The parsable part requires additional actions such as parsing and resolution to guarantee the correct result in the target system.

AWS SCT supports conversion of views based on the following:

One or more tables

One or more views

A mix of tables and views

Common table expressions

Also, it supports views with CHECK OPTIONS.

For example, the following screenshot shows the result of conversion of a view based on one table.

View conversion

Sequences

Sequences are a special database feature commonly used to generate a sequential unique series of numbers. Sequences in Amazon RDS for PostgreSQL and Amazon Aurora PostgreSQL support integer types only. AWS SCT considers this feature and uses the BIGINT type and the AI8501 message (to alert this significant change) for those sequences that are based on non-integer types.

Sequence conversion

Aliases

In Db2 for z/OS, aliases are a special type of object that define alternative names for objects like tables, views, sequences, or other aliases. You can find the aliases in the database schema in the source tree of the AWS SCT project. However, Amazon RDS for PostgreSQL and Amazon Aurora PostgreSQL don’t support aliases.

Alias conversion

Action item on the resolved alias

To solve this, AWS SCT resolves the alias in the program code during the conversion: all calls to aliases are converted to calls directly to the object by its full name. Additionally, the AI8513 informational message is added when attempting the conversion.

Using the alias referenced object

Data Modification Language (DML)

A SELECT is the most commonly used command (statement) not only in an application that retrieves data from a database, but also within the database routines and views.

Db2 for z/OS contains two statements for extracting data: SELECT and SELECT INTO. Although they function differently, they have similar clauses such as SELECT, FROM, WHERE, GROUP BY, HAVING, and OFFSET. AWS SCT supports conversion of both SELECT statements and their clauses.

The current release also supports conversion of all SET and JOIN operations, common table expressions, and nested and recursive sub-queries.

The AWS SCT covers the majority of commonly used cases of SELECT and SELECT INTO statements. SCT warns you if there are any unsupported use cases via appropriate AI messages.

SELECT statement conversion

INSERT, UPDATE, and DELETE statements are usually used in executable code of database objects like user-defined procedures and functions. Sub-queries, simple expressions, and the DEFAULT keyword are supported by AWS SCT within INSERT and UPDATE statements.

The current release of AWS SCT supports conversion for a DELETE that contains only a WHERE clause; other possible clauses are unavailable. Notifications and actions items are created for clauses that aren’t supported within a DELETE statement.

The assessment report identifies all database routines that contain currently unsupported clauses.

Action items on the unsupported clauses

Built-in functions

AWS SCT supports conversion of a number of built-in scalar and aggregate database functions.

An aggregate function returns a single-value result for the set of input values that is passed to each function’s parameter. For example, the built-in function AVG could be used within a SELECT INTO statement to calculate an average value of the values stored in a table column.

The supported aggregate functions in the current release include ARRAY_AGG, AVG, CORRELATION, COUNT, COUNT_BIG, COVAR_POP, COVARIANCE, COVAR, COVARIANCE_SAMP, CUME_DIST, GROUPING, LISTAGG, MAX, MEDIAN, MIN, PERCENT_RANK, PERCENTILE_CONT, PERCENTILE_DISC, REGR_AVGX, REGR_AVGY, REGR_COUNT, REGR_ICPT, REGR_INTERCEPT, REGR_R2, REGR_SLOPE, REGR_SXX, REGR_SXY, REGR_SYY, STDDEV, STDDEV_SAMP, SUM, VARIANCE, VARIANCE_SAMP, and XMLAGG.

Within conversion of the aggregation functions, the use of the keywords DISTINCT and ALL is also supported.

A scalar function takes a single set of parameter values and returns a single-value result. According to the Db2 for z/OS guidelines, a scalar function can be presented whenever an expression can be. AWS SCT supports the conversion of the expression within scalar functions.

The supported built-in functions in the current release include ABS, ABSVAL, ACOS, ASCII, ASCII_CHR, ASIN, ATAN, ATAN2, ATANH, BIGINT, CEILING, COALESCE, CONCAT, COS, COSH, DEC, DECFLOAT, DECIMAL, DEGREES, DIGITS, DOUBLE, DOUBLE_PRECISION, EXP, FLOAT, FLOOR, IFNULL, INT, INTEGER, LCASE, LEFT, LN, LOCATE, LOG10, LOWER, LPAD, LTRIM, MOD, MULTIPLY_ALT, NULLIF, NVL, POWER, RADIANS, REAL, REPEAT, REPLACE, RIGHT, RPAD, RTRIM, SIGN, SIN, SINH, SMALLINT, SPACE, STRIP, SUBSTR, SUBSTRING, TAN, TANH, TRIM, TRUNC, TRUNCATE, UCASE, UPPER, and VALUE.

Program code

Another important type of routine that can be in the database is user-defined functions or procedures. These objects can be created with CREATE FUNCTION and CREATE PROCEDURE statements and they extend the functionality of Db2 for z/OS by adding their own functionality. This functionality is important for the migration process because it provides a large part of the user logic of data processing.

Db2 for z/OS supports the use of triggers: executable code that runs when a specific event occurs. This executable code can be stored in the database or as an external program:

Internal – The body of the routine is written in the procedure language SQL or SQL PL. The routine body is stored in the database. These can be functions, procedures, or triggers.

External – The body of the routine is written in a program language like ASSEMBLER, C, C++, COBOL, JAVA, or PL/I. The routine resides outside of the database. These can be functions or procedures.

AWS SCT identifies routines of both types, but the conversion is only possible for internal routines.

The following are the supported program objects for conversion:

SQL scalar functions

SQL table functions

Sourced functions (only SQL)

Basic triggers

Advanced triggers

SQL procedures

Note that Amazon RDS for PostgreSQL and Amazon Aurora PostgreSQL use special trigger functions to implement trigger logic. In contrast, Db2 for z/OS defines the executable code in the trigger body. The AWS SCT considers these differences and creates separate trigger functions for every Db2 for z/OS trigger.

On the following screens show the trigger and trigger function created during the conversion of the EG_TBL_TRG_AD trigger.

Trigger conversion

Trigger function

The following screenshots demonstrate converting the internal Db2 for z/OS routine language (either SQL or SQL PL) to the Amazon RDS for PostgreSQL and Amazon Aurora PostgreSQL PLPGSQL language. AWS SCT covers the general and widely uses patterns and constructions of the program code and statements. AWS SCT warns you if there are any unsupported or unrecognized parts of the program code as well.

Internal routine conversion

Conclusion

In this post, we provided an overview of the conversion features of database schemas and code objects while migrating a database from Db2 for z/OS database to AWS database services using the AWS Schema Conversion Tool (AWS SCT). The AWS SCT features we mentioned can significantly improve the quality and reduce the time to migration.

Refer to Database migration to learn more.

About the authors

Denys Nour Database Engineer II, AWS Project Delta Migration

Ed Murray Pr. DBE, Supernova, AWS Mainframe Modernization

Read MoreAWS Database Blog