The recently published IDC MarketScape: Asia/Pacific (Excluding Japan) AI Life-Cycle Software Tools and Platforms 2022 Vendor Assessment positions AWS in the Leaders category. This was the first and only APEJ-specific analyst evaluation focused on AI life-cycle software from IDC. The vendors evaluated for this MarketScape offer various software tools needed to support end-to-end machine learning (ML) model development, including data preparation, model building and training, model operation, evaluation, deployment, and monitoring. The tools are typically used by data scientists and ML developers from experimentation to production deployment of AI and ML solutions.

AI life-cycle tools are essential to productize AI/ML solutions. They go well beyond AI/ML experimentation: they help achieve deployment anywhere, performance at scale, and cost optimization. They also support systematic model risk management, which is increasingly important and covers explainability, robustness, drift, privacy protection, and more. Businesses need these tools to unlock the value of enterprise data assets at greater scale and faster speed.

Vendor Requirements for the IDC MarketScape

To be considered for the MarketScape, the vendor had to provide software products for various aspects of the end-to-end ML process under independent product stock-keeping units (SKUs) or as part of a general AI software platform. The products had to be based on the company’s own IP, and the products should have generated software license revenue or consumption-based software revenue for at least 12 months in APEJ as of March 2022. The company had to be among the top 15 vendors by the reported revenues of 2020–2021 in the APEJ region, according to IDC’s AI Software Tracker. AWS met the criteria and was evaluated by IDC along with eight other vendors.

The result of IDC’s comprehensive evaluation was published in October 2022 in the IDC MarketScape: Asia/Pacific (Excluding Japan) AI Life-Cycle Software Tools and Platforms 2022 Vendor Assessment. AWS is positioned in the Leaders category based on current capabilities. The AWS strategy is to make continuous investments in AI/ML services to help customers innovate with AI and ML.

AWS position

“AWS is placed in the Leaders category in this exercise, receiving higher ratings in various assessment categories—the breadth of tooling services provided, options to lower cost for performance, quality of customer service and support, and pace of product innovation, to name a few.”

– Jessie Danqing Cai, Associate Research Director, Big Data & Analytics Practice, IDC Asia/Pacific.

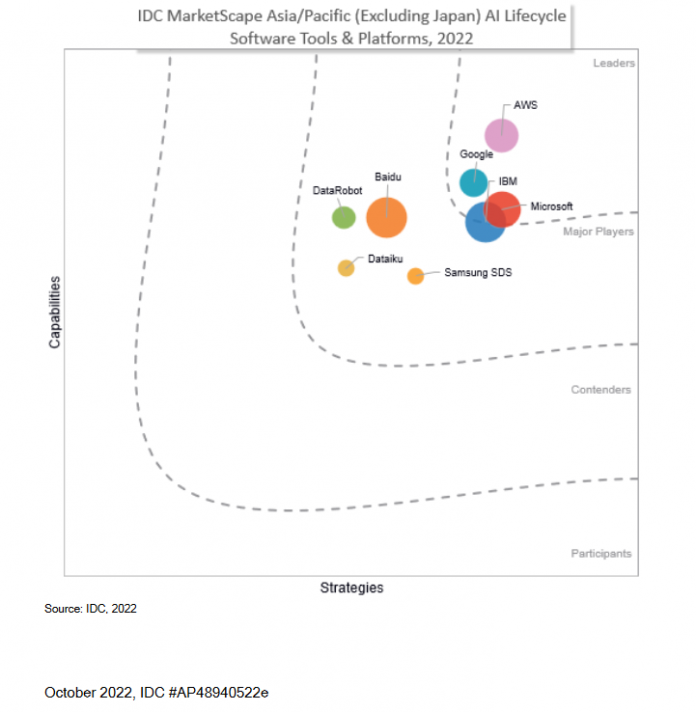

The visual below is part of the MarketScape and shows the AWS position evaluated by capabilities and strategies.

The IDC MarketScape vendor analysis model is designed to provide an overview of the competitive fitness of ICT suppliers in a given market. The research uses a rigorous scoring methodology based on both qualitative and quantitative criteria, resulting in a single graphical illustration of each vendor’s position within a given market. The Capabilities score measures vendor product, go-to-market, and business execution in the short term. The Strategy score measures alignment of vendor strategies with customer requirements in a 3–5-year time frame. Vendor market share is represented by the size of the icons.

Amazon SageMaker evaluated as part of the MarketScape

As part of the evaluation, IDC dove deep into Amazon SageMaker capabilities. SageMaker is a fully managed service to build, train, and deploy ML models for any use case with fully managed infrastructure, tools, and workflows. Since the launch of SageMaker in 2017, over 250 capabilities and features have been released.

ML practitioners such as data scientists, data engineers, business analysts, and MLOps professionals use SageMaker to break down barriers across each step of the ML workflow through their choice of integrated development environments (IDEs) or no-code interfaces. Starting with data preparation, SageMaker makes it easy to access, label, and process large amounts of structured data (tabular data) and unstructured data (photo, video, geospatial, and audio) for ML. After data is prepared, SageMaker offers fully managed notebooks for model building and reduces training time from hours to minutes with optimized infrastructure. SageMaker makes it easy to deploy ML models to make predictions at the best price-performance for any use case through a broad selection of ML infrastructure and model deployment options. Finally, the MLOps tools in SageMaker help you scale model deployment, reduce inference costs, manage models more effectively in production, and reduce operational burden.

The MarketScape calls out three strengths for AWS:

Functionality and offering – SageMaker provides a broad and deep set of tools for data preparation, model training, and deployment, including AWS-built silicon: AWS Inferentia for inference workloads and AWS Trainium for training workloads. SageMaker supports model explainability and bias detection through Amazon SageMaker Clarify.

Service delivery – SageMaker is natively available on AWS, the second largest public cloud platform in the APEJ region (based on IDC Public Cloud Services Tracker, IaaS+PaaS, 2021 data), with regions in Japan, Australia, New Zealand, Singapore, India, Indonesia, South Korea, and Greater China. Local zones are available to serve customers in ASEAN countries: Thailand, the Philippines, and Vietnam.

Growth opportunities – AWS actively contributes to open-source projects such as Gluon and engages with regional developer and student communities through many events, online courses, and Amazon SageMaker Studio Lab, a no-cost SageMaker notebook environment.

SageMaker launches at re:Invent 2022

SageMaker innovation continued at AWS re:Invent 2022, with eight new capabilities. The launches included three new capabilities for ML model governance. As the number of models and users within an organization increases, it becomes harder to set least-privilege access controls and establish governance processes to document model information (for example, input datasets, training environment information, model-use description, and risk rating). After models are deployed, customers also need to monitor for bias and feature drift to ensure they perform as expected. A new role manager, model cards, and model dashboard simplify access control and enhance transparency to support ML model governance.

There were also three launches related to Amazon SageMaker Studio notebooks. SageMaker Studio notebooks give practitioners a fully managed notebook experience, from data exploration to deployment. As teams grow in size and complexity, dozens of practitioners may need to collaboratively develop models using notebooks. AWS continues to offer the best notebook experience for users, with the launch of three new features that help you coordinate and automate notebook code.

To support model deployment, new capabilities in SageMaker help you run shadow tests to evaluate a new ML model before production release by testing its performance against the currently deployed model. Shadow testing can help you catch potential configuration errors and performance issues before they impact end-users.
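To make the idea of shadow testing concrete, here is a minimal, framework-agnostic sketch in Python. It is not the SageMaker API: the names `ShadowReport`, `serve_with_shadow`, `production_model`, and `candidate_model` are hypothetical and exist only for illustration. The sketch assumes each model is a plain function that maps a list of numeric features to a single number. Every request is answered by the production model, while a copy of the request is sent to the candidate (shadow) model so that its errors and disagreements can be recorded without affecting end users.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

# Assumption for this sketch: a model is a function from features to one number.
Model = Callable[[Sequence[float]], float]


@dataclass
class ShadowReport:
    """Counters collected while mirroring traffic to the shadow model."""
    requests: int = 0
    shadow_errors: int = 0
    disagreements: int = 0

    @property
    def disagreement_rate(self) -> float:
        # Only requests the shadow model answered can be compared.
        compared = self.requests - self.shadow_errors
        return self.disagreements / compared if compared else 0.0


def serve_with_shadow(
    production: Model,
    shadow: Model,
    features: Sequence[float],
    report: ShadowReport,
    tolerance: float = 1e-6,
) -> float:
    """Return the production prediction and mirror the request to the shadow model."""
    prediction = production(features)
    report.requests += 1
    try:
        shadow_prediction = shadow(features)
    except Exception:
        # A shadow failure is recorded but never reaches the caller.
        report.shadow_errors += 1
        return prediction
    if abs(shadow_prediction - prediction) > tolerance:
        report.disagreements += 1
    return prediction


def production_model(features: Sequence[float]) -> float:
    # Stand-in for the currently deployed model.
    return sum(features)


def candidate_model(features: Sequence[float]) -> float:
    # Stand-in for the new model; it rejects inputs with fewer than two features.
    if len(features) < 2:
        raise ValueError("candidate model expects at least two features")
    return sum(features) + (0.5 if features[0] > 2 else 0.0)


report = ShadowReport()
traffic = [[1.0, 2.0], [3.0, 4.0], [5.0]]
responses = [
    serve_with_shadow(production_model, candidate_model, f, report) for f in traffic
]
print(responses)
print(report, report.disagreement_rate)
```

In this sketch the caller only ever sees production responses. The report is what you would review before promoting the candidate: how often it failed on real traffic and how often its predictions differed from the deployed model beyond the chosen tolerance.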

Finally, SageMaker launched support for geospatial ML, allowing data scientists and ML engineers to easily build, train, and deploy ML models using geospatial data. You can access geospatial data sources, purpose-built processing operations, pre-trained ML models, and built-in visualization tools to run geospatial ML faster and at scale.

Today, tens of thousands of customers use Amazon SageMaker to train models with billions of parameters and make over 1 trillion predictions per month. To learn more about SageMaker, visit the webpage and explore how fully managed infrastructure, tools, and workflows can help you accelerate ML model development.

About the author

Kimberly Madia is a Principal Product Marketing Manager with AWS Machine Learning. Her goal is to make it easy for customers to build, train, and deploy machine learning models using Amazon SageMaker. For fun outside work, Kimberly likes to cook, read, and run on the San Francisco Bay Trail.
