Amazon SageMaker Data Wrangler reduces the time it takes to collect and prepare data for machine learning (ML) from weeks to minutes. With SageMaker Data Wrangler, you can streamline feature engineering and data preparation and complete each stage of the data preparation workflow (including data selection, cleansing, exploration, visualization, and processing at scale) within a single visual interface. Data is frequently kept in data lakes that can be managed by AWS Lake Formation, which lets you implement fine-grained access control using a straightforward grant or revoke procedure. SageMaker Data Wrangler supports fine-grained data access control with Lake Formation and Amazon Athena connections.

We are happy to announce that SageMaker Data Wrangler now supports using Lake Formation with Amazon EMR to provide this fine-grained data access control.

Data professionals such as data scientists want to use the power of Apache Spark, Hive, and Presto running on Amazon EMR for fast data preparation; however, the learning curve is steep. Our customers wanted the ability to connect to Amazon EMR to run ad hoc SQL queries on Hive or Presto to query data in the internal metastore or external metastore (such as the AWS Glue Data Catalog), and prepare data within a few clicks.

In this post, we show how to use Lake Formation as a central data governance capability and Amazon EMR as a big data query engine to enable access for SageMaker Data Wrangler. The capabilities of Lake Formation simplify securing and managing distributed data lakes across multiple accounts through a centralized approach, providing fine-grained access control.

Solution overview

We demonstrate this solution with an end-to-end use case using a sample dataset, the TPC data model. This data represents transaction data for products and includes information such as customer demographics, inventory, web sales, and promotions. To demonstrate fine-grained data access permissions, we consider the following two users:

David, a data scientist on the marketing team. He is tasked with building a model on customer segmentation, and is only permitted to access non-sensitive customer data.

Tina, a data scientist on the sales team. She is tasked with building the sales forecast model, and needs access to sales data for the particular region. She is also helping the product team with innovation, and therefore needs access to product data as well.

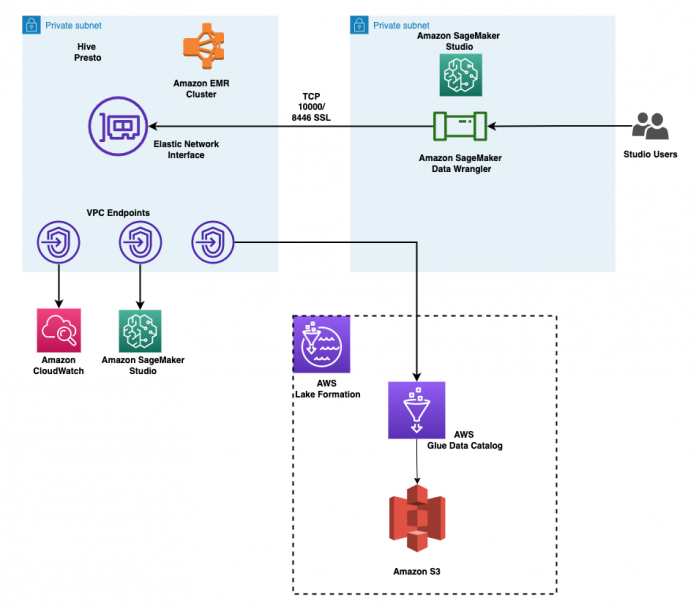

The architecture is implemented as follows:

Lake Formation manages the data lake, and the raw data is available in Amazon Simple Storage Service (Amazon S3) buckets

Amazon EMR is used to query the data from the data lake and perform data preparation using Spark

AWS Identity and Access Management (IAM) roles are used to manage data access using Lake Formation

SageMaker Data Wrangler is used as the single visual interface to interactively query and prepare the data

The following diagram illustrates this architecture. Account A is the data lake account that houses all the ML-ready data obtained through extract, transform, and load (ETL) processes. Account B is the data science account where a group of data scientists compile and run data transformations using SageMaker Data Wrangler. For SageMaker Data Wrangler in Account B to access the data tables in Account A’s data lake, we must grant the necessary Lake Formation permissions.

You can use the provided AWS CloudFormation stack to set up the architectural components for this solution.

Prerequisites

Before you get started, make sure you have the following prerequisites:

An AWS account

An IAM user with administrator access

An S3 bucket

Provision resources with AWS CloudFormation

We provide a CloudFormation template that deploys the services in the architecture for end-to-end testing and to facilitate repeated deployments. The outputs of this template are as follows:

An S3 bucket for the data lake.

An EMR cluster with EMR runtime roles enabled. For more details on using runtime roles with Amazon EMR, see Configure runtime roles for Amazon EMR steps. Associating runtime roles with EMR clusters is supported in Amazon EMR 6.9. Make sure the following configuration is in place:

Create a security configuration in Amazon EMR.

The EMR runtime role’s trust policy should allow the EMR EC2 instance profile to assume the role.

The EMR EC2 instance profile role should be able to assume the EMR runtime roles.

The EMR cluster should be created with encryption in transit.

IAM roles for accessing the data in the data lake, with fine-grained permissions:

Marketing-data-access-role

Sales-data-access-role

An Amazon SageMaker Studio domain and two user profiles. The SageMaker Studio execution roles for the users allow the users to assume their corresponding EMR runtime roles.

A lifecycle configuration to enable the selection of the role to use for the EMR connection.

A Lake Formation database populated with the TPC data.

Networking resources required for the setup, such as VPC, subnets, and security groups.
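The two-way relationship between the EMR EC2 instance profile and the EMR runtime roles described above can be sketched as a pair of IAM policy documents. This is a minimal illustration, not the contents of the CloudFormation template: the account ID and the instance profile role name are placeholders, and including `sts:TagSession` alongside `sts:AssumeRole` is an assumption based on Lake Formation's use of session tags.

```python
from __future__ import annotations

import json


def runtime_role_trust_policy(instance_profile_role_arn: str) -> dict:
    """Trust policy attached to an EMR runtime role.

    It lets the cluster's EC2 instance profile role assume the runtime role.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowInstanceProfileToAssumeRuntimeRole",
                "Effect": "Allow",
                "Principal": {"AWS": instance_profile_role_arn},
                # Assumption: sts:TagSession is included because Lake Formation
                # relies on session tags; verify against your own setup.
                "Action": ["sts:AssumeRole", "sts:TagSession"],
            }
        ],
    }


def instance_profile_assume_policy(runtime_role_arns: list[str]) -> dict:
    """Permissions policy attached to the EC2 instance profile role.

    It allows that role to assume each of the listed runtime roles.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowAssumingRuntimeRoles",
                "Effect": "Allow",
                "Action": ["sts:AssumeRole", "sts:TagSession"],
                "Resource": sorted(runtime_role_arns),
            }
        ],
    }


# Placeholder values for illustration only.
ACCOUNT_ID = "111122223333"
INSTANCE_PROFILE_ROLE = f"arn:aws:iam::{ACCOUNT_ID}:role/EMR_EC2_DefaultRole"
RUNTIME_ROLES = [
    f"arn:aws:iam::{ACCOUNT_ID}:role/Marketing-data-access-role",
    f"arn:aws:iam::{ACCOUNT_ID}:role/Sales-data-access-role",
]

trust_policy = runtime_role_trust_policy(INSTANCE_PROFILE_ROLE)
assume_policy = instance_profile_assume_policy(RUNTIME_ROLES)
print(json.dumps(trust_policy, indent=2))
```

The SageMaker Studio execution roles need a statement of the same shape as `assume_policy` so that each user can assume only their own runtime role.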

Create Amazon EMR encryption certificates for the data in transit

With Amazon EMR release version 4.8.0 or later, you have the option of specifying artifacts for encrypting data in transit using a security configuration. We manually create PEM certificates, include them in a .zip file, upload it to an S3 bucket, and then reference the .zip file in Amazon S3. You likely want to configure the certificate as a wildcard certificate that enables access to the VPC domain in which your cluster instances reside. For example, if your cluster resides in the us-east-1 Region, you could specify a common name in the certificate configuration that allows access to the cluster by specifying CN=*.ec2.internal in the certificate subject definition. If your cluster resides in us-west-2, you could specify CN=*.us-west-2.compute.internal.

Run the following commands using your system terminal. This will generate PEM certificates and collate them into a .zip file:
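As one way to carry out this step, the following Python sketch wraps the `openssl` command line tool and the standard `zipfile` module. It assumes `openssl` is installed and on your PATH. The subject fields other than CN are placeholders, the 2048-bit key size is our choice, and the three file names follow the naming that Amazon EMR documents for in-transit encryption artifacts. Treat it as an illustration rather than the exact commands the authors used.

```python
from __future__ import annotations

import shutil
import subprocess
import zipfile
from pathlib import Path

CERT_FILES = ("certificateChain.pem", "privateKey.pem", "trustedCertificates.pem")


def openssl_command(common_name: str, days: int = 365) -> list[str]:
    """Build the openssl invocation for a self-signed wildcard certificate."""
    # Placeholder subject; only CN matters for matching the VPC domain.
    subject = f"/C=US/ST=Washington/L=Seattle/O=MyOrg/OU=MyDept/CN={common_name}"
    return [
        "openssl", "req", "-x509", "-newkey", "rsa:2048",
        "-keyout", "privateKey.pem",
        "-out", "certificateChain.pem",
        "-days", str(days),
        "-nodes",  # leave the private key unencrypted
        "-subj", subject,
    ]


def bundle_certificates(workdir: Path, zip_name: str = "my-certs.zip") -> Path:
    """Create trustedCertificates.pem and zip the three PEM files."""
    # A self-signed certificate acts as its own trust anchor.
    shutil.copyfile(workdir / "certificateChain.pem", workdir / "trustedCertificates.pem")
    zip_path = workdir / zip_name
    with zipfile.ZipFile(zip_path, "w") as archive:
        for name in CERT_FILES:
            # Store files at the archive root, without directory prefixes.
            archive.write(workdir / name, arcname=name)
    return zip_path


def generate_cert_zip(workdir: Path, common_name: str) -> Path:
    """Run openssl in workdir, then bundle the results into my-certs.zip."""
    subprocess.run(openssl_command(common_name), cwd=workdir, check=True)
    return bundle_certificates(workdir)
```

For a cluster in us-east-1, you would call `generate_cert_zip(Path("."), "*.ec2.internal")`; for us-west-2, pass `"*.us-west-2.compute.internal"` instead.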

Upload my-certs.zip to an S3 bucket in the same Region where you intend to run this exercise. Copy the S3 URI for the uploaded file. You’ll need this while launching the CloudFormation template.

This example is a proof of concept demonstration only. Using self-signed certificates is not recommended and presents a potential security risk. For production systems, use a trusted certification authority (CA) to issue certificates.

Deploying the CloudFormation template

To deploy the solution, complete the following steps:

Sign in to the AWS Management Console as an IAM user, preferably an admin user.

Choose Launch Stack to launch the CloudFormation template:

Choose Next.

For Stack name, enter a name for the stack.

For IdleTimeout, enter a value for the idle timeout for the EMR cluster (to avoid paying for the cluster when it’s not being used).

For S3CertsZip, enter the S3 URI of the .zip file that contains the EMR encryption certificates.

For instructions to generate a key and .zip file specific to your Region, refer to Providing certificates for encrypting data in transit with Amazon EMR encryption. If you are deploying in US East (N. Virginia), remember to use CN=*.ec2.internal. For more information, refer to Create keys and certificates for data encryption. Make sure to upload the .zip file to an S3 bucket in the same Region as your CloudFormation stack deployment.

On the review page, select the check box to acknowledge that AWS CloudFormation might create IAM resources.

Choose Create stack.

Wait until the status of the stack changes from CREATE_IN_PROGRESS to CREATE_COMPLETE. The process usually takes 10–15 minutes.

After the stack is created, allow Amazon EMR to query Lake Formation by updating the External Data Filtering settings on Lake Formation. For instructions, refer to Getting started with Lake Formation. Specify Amazon EMR for Session tag values and enter your AWS account ID under AWS account IDs.
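If you prefer to script this setting instead of using the console, the same change can be sketched with the Lake Formation `GetDataLakeSettings` and `PutDataLakeSettings` APIs. Because `PutDataLakeSettings` replaces the whole settings object, the sketch reads the current settings first and merges into them. The helper names are ours, and `boto3` is imported lazily so the merge logic can be read and tested without AWS credentials.

```python
from __future__ import annotations

import copy


def enable_emr_external_filtering(current_settings: dict, account_id: str) -> dict:
    """Return a copy of the data lake settings with external filtering enabled
    for the given account and 'Amazon EMR' added as a session tag value."""
    settings = copy.deepcopy(current_settings)
    settings["AllowExternalDataFiltering"] = True

    allow_list = settings.setdefault("ExternalDataFilteringAllowList", [])
    principal = {"DataLakePrincipalIdentifier": account_id}
    if principal not in allow_list:
        allow_list.append(principal)

    tag_values = settings.setdefault("AuthorizedSessionTagValueList", [])
    if "Amazon EMR" not in tag_values:
        tag_values.append("Amazon EMR")
    return settings


def apply_external_filtering(account_id: str, region: str) -> None:
    """Read, merge, and write back the Lake Formation settings."""
    import boto3  # imported here so the helper above has no AWS dependency

    client = boto3.client("lakeformation", region_name=region)
    current = client.get_data_lake_settings()["DataLakeSettings"]
    updated = enable_emr_external_filtering(current, account_id)
    client.put_data_lake_settings(DataLakeSettings=updated)
```

Calling `apply_external_filtering("111122223333", "us-east-1")` with your own account ID and Region should have the same effect as the console steps, assuming the caller is a Lake Formation administrator.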

Test data access permissions

Now that the necessary infrastructure is in place, you can verify that the two SageMaker Studio users have the intended fine-grained data access. To recap, David shouldn’t have access to any sensitive information about your customers, and Tina should have access to sales data. Let’s test each user profile.
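To make the permission model concrete, the following sketch builds the kind of request that the Lake Formation `GrantPermissions` API accepts for a column-restricted grant and for a whole-table grant. The CloudFormation template already creates the real grants. The database name, the customer table name, and the list of sensitive columns below are hypothetical and chosen only for illustration.

```python
from __future__ import annotations


def select_grant(role_arn: str, database: str, table: str,
                 excluded_columns: list[str] | None = None) -> dict:
    """Build keyword arguments for lakeformation.grant_permissions.

    With excluded_columns, SELECT is granted on every column except those
    listed; otherwise SELECT is granted on the whole table.
    """
    if excluded_columns:
        resource = {
            "TableWithColumns": {
                "DatabaseName": database,
                "Name": table,
                "ColumnWildcard": {"ExcludedColumnNames": list(excluded_columns)},
            }
        }
    else:
        resource = {"Table": {"DatabaseName": database, "Name": table}}
    return {
        "Principal": {"DataLakePrincipalIdentifier": role_arn},
        "Resource": resource,
        "Permissions": ["SELECT"],
    }


ACCOUNT_ID = "111122223333"  # placeholder

# Hypothetical grant for David's role: customer data minus sensitive columns.
marketing_grant = select_grant(
    f"arn:aws:iam::{ACCOUNT_ID}:role/Marketing-data-access-role",
    database="tpc_db",                 # hypothetical database name
    table="dl_tpc_customer",           # hypothetical table name
    excluded_columns=["c_email_address", "c_birth_day"],  # hypothetical
)

# Hypothetical grant for Tina's role: the web sales table.
sales_grant = select_grant(
    f"arn:aws:iam::{ACCOUNT_ID}:role/Sales-data-access-role",
    database="tpc_db",
    table="dl_tpc_web_sales",
)
```

Either dictionary could be passed as `boto3.client("lakeformation").grant_permissions(**marketing_grant)`. Because David's role holds no grant on `dl_tpc_web_sales`, a query against that table is expected to fail.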

Test David’s user profile

To test your data access with David’s user profile, complete the following steps:

On the SageMaker console, choose Domains in the navigation pane.

From the SageMaker Studio domain, launch SageMaker Studio from the user profile david-non-sensitive-customer.

In your SageMaker Studio environment, create an Amazon SageMaker Data Wrangler flow, and choose Import & prepare data visually.

Alternatively, on the File menu, choose New, then choose Data Wrangler flow.

We discuss these steps to create a data flow in detail later in this post.

Test Tina’s user profile

Tina’s SageMaker Studio execution role allows her to access the Lake Formation database using two EMR execution roles. This is achieved by listing the role ARNs in a configuration file in Tina’s file directory. These roles can be set using SageMaker Studio lifecycle configurations to persist the roles across app restarts. To test Tina’s access, complete the following steps:

On the SageMaker console, navigate to the SageMaker Studio domain.

Launch SageMaker Studio from the user profile tina-sales-electronics.

It’s a good practice to close any previous SageMaker Studio sessions in your browser when switching user profiles. There can only be one active SageMaker Studio user session at a time.

Create a Data Wrangler data flow.

In the following sections, we show how to create a data flow within SageMaker Data Wrangler and connect to Amazon EMR as the data source. David and Tina have the same data preparation experience; only their access permissions differ, so they see different tables.

Create a SageMaker Data Wrangler data flow

In this section, we cover connecting to the existing EMR cluster created through the CloudFormation template as a data source in SageMaker Data Wrangler. For demonstration purposes, we use David’s user profile.

To create your data flow, complete the following steps:

On the SageMaker console, choose Domains in the navigation pane.

Choose StudioDomain, which was created by running the CloudFormation template.

Select a user profile (for this example, David’s) and launch SageMaker Studio.

Choose Open Studio.

In SageMaker Studio, create a new data flow and choose Import & prepare data visually.

Alternatively, on the File menu, choose New, then choose Data Wrangler flow.

Creating a new flow can take a few minutes. After the flow has been created, you see the Import data page.

To add Amazon EMR as a data source in SageMaker Data Wrangler, on the Add data source menu, choose Amazon EMR.

You can browse all the EMR clusters that your SageMaker Studio execution role has permissions to see. You have two options to connect to a cluster: one is through the interactive UI, and the other is to first create a secret using AWS Secrets Manager with a JDBC URL, including EMR cluster information, and then provide the stored AWS secret ARN in the UI to connect to Presto or Hive. In this post, we use the first method.

Select any of the clusters that you want to use, then choose Next.

Select which endpoint you want to use.

Enter a name to identify your connection, such as emr-iam-connection, then choose Next.

Select IAM as your authentication type and choose Connect.

When you’re connected, you can interactively view a database tree and table preview or schema. You can also query, explore, and visualize data from Amazon EMR. For a preview, you see a limit of 100 records by default. After you provide a SQL statement in the query editor and choose Run, the query is run on the Amazon EMR Hive engine to preview the data. Choose Cancel query to cancel ongoing queries if they are taking an unusually long time.

Let’s try to query a table that David doesn’t have permissions to access.

The query will result in the error message “Unable to fetch table dl_tpc_web_sales. Insufficient Lake Formation permission(s) on dl_tpc_web_sales.”

The last step is to import the data. When you are ready with the queried data, you have the option to update the sampling settings for the data selection according to the sampling type (FirstK, Random, or Stratified) and the sampling size for importing data into Data Wrangler.
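The three sampling types differ in which rows they keep. The following standard-library sketch illustrates the general idea behind each strategy on a list of dictionaries. It is a conceptual illustration only and does not reflect how Data Wrangler implements sampling internally. The `region` column and the function names are made up for the example.

```python
from __future__ import annotations

import random
from collections import defaultdict


def first_k(rows: list[dict], k: int) -> list[dict]:
    """Keep the first k rows in their original order."""
    return rows[:k]


def random_sample(rows: list[dict], k: int, seed: int = 0) -> list[dict]:
    """Keep k rows chosen uniformly at random without replacement."""
    rng = random.Random(seed)
    return rng.sample(rows, min(k, len(rows)))


def stratified_sample(rows: list[dict], key: str, fraction: float,
                      seed: int = 0) -> list[dict]:
    """Keep roughly the same fraction of rows from every group of `key`,
    so that small groups stay represented in the sample."""
    rng = random.Random(seed)
    groups: dict = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row)
    sample = []
    for members in groups.values():
        # Take at least one row per group.
        count = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, count))
    return sample


# 80 rows from one region and 20 from another.
rows = [{"id": i, "region": "east" if i < 80 else "west"} for i in range(100)]

head = first_k(rows, 10)
uniform = random_sample(rows, 10)
balanced = stratified_sample(rows, key="region", fraction=0.1)
```

With this input, `first_k` returns only `east` rows because they come first, whereas the stratified sample preserves the 80/20 split between regions.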

Choose Import to import the data.

On the next page, you can add various transformations and essential analysis to the dataset.

Navigate to the data flow and add more steps to the flow as needed for transformations and analysis.

You can run a data insight report to identify data quality issues and get recommendations to fix those issues. Let’s look at some example transforms.

In the Data flow view, you should see that we are using Amazon EMR as a data source using the Hive connector.

Choose the plus sign next to Data types and choose Add transform.

Let’s explore the data and apply a transformation. For example, the c_login column is empty and it will not add value as a feature. Let’s delete the column.

In the All steps pane, choose Add step.

Choose Manage columns.

For Transform, choose Drop column.

For Columns to drop, choose the c_login column.

Choose Preview, then choose Add.

Verify the step by expanding the Drop column section.

You can continue adding steps based on the different transformations required for your dataset. Let’s go back to our data flow. You can now see the Drop column block showing the transform we performed.
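For readers who want to see what the Drop column step amounts to in code, here is a small standard-library sketch that detects columns with no values and removes them from a list of records. It is not the code Data Wrangler generates. The sample records and the column names other than `c_login` are illustrative.

```python
from __future__ import annotations


def empty_columns(rows: list[dict]) -> list[str]:
    """Return the columns whose value is None or '' in every row."""
    if not rows:
        return []
    return [
        column
        for column in rows[0]
        if all(row.get(column) in (None, "") for row in rows)
    ]


def drop_columns(rows: list[dict], columns: list[str]) -> list[dict]:
    """Return new rows without the given columns."""
    to_drop = set(columns)
    return [
        {name: value for name, value in row.items() if name not in to_drop}
        for row in rows
    ]


# Illustrative customer records in which c_login is always empty.
customers = [
    {"c_customer_sk": 1, "c_first_name": "Ana", "c_login": ""},
    {"c_customer_sk": 2, "c_first_name": "Li", "c_login": None},
]

unused = empty_columns(customers)
cleaned = drop_columns(customers, unused)
```

Checking for empty columns before dropping them makes the reason for the transform explicit, which helps when the same flow is later rerun on a refreshed dataset.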

ML practitioners spend a lot of time crafting feature engineering code, applying it to their initial datasets, training models on the engineered datasets, and evaluating model accuracy. Given the experimental nature of this work, even the smallest project will lead to multiple iterations. The same feature engineering code is often run again and again, wasting time and compute resources on repeating the same operations. In large organizations, this can cause an even greater loss of productivity because different teams often run identical jobs or even write duplicate feature engineering code because they have no knowledge of prior work. To avoid the reprocessing of features, we can export our transformed features to Amazon SageMaker Feature Store. For more information, refer to New – Store, Discover, and Share Machine Learning Features with Amazon SageMaker Feature Store.

Choose the plus sign next to Drop column.

Choose Export to, then choose SageMaker Feature Store (via Jupyter notebook).

You can easily export your generated features to SageMaker Feature Store by specifying it as the destination. You can save the features into an existing feature group or create a new one. For more information, refer to Easily create and store features in Amazon SageMaker without code.
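When you create a new feature group, SageMaker Feature Store needs a feature definition for each column, plus a record identifier and an event time feature. The following sketch shows how such a request for the `CreateFeatureGroup` API could be assembled from one sample record. It is not the code in the exported notebook. The feature group name, role ARN, S3 URI, and the `event_time` column are hypothetical.

```python
from __future__ import annotations


def feature_type(value: object) -> str:
    """Map a Python value to a Feature Store feature type."""
    if isinstance(value, int):
        return "Integral"
    if isinstance(value, float):
        return "Fractional"
    return "String"


def create_feature_group_request(name: str, sample_record: dict,
                                 record_id: str, event_time: str,
                                 role_arn: str, offline_s3_uri: str) -> dict:
    """Build keyword arguments for sagemaker.create_feature_group."""
    for required in (record_id, event_time):
        if required not in sample_record:
            raise ValueError(f"missing required feature: {required}")
    return {
        "FeatureGroupName": name,
        "RecordIdentifierFeatureName": record_id,
        "EventTimeFeatureName": event_time,
        "FeatureDefinitions": [
            {"FeatureName": column, "FeatureType": feature_type(value)}
            for column, value in sample_record.items()
        ],
        "OnlineStoreConfig": {"EnableOnlineStore": True},
        "OfflineStoreConfig": {"S3StorageConfig": {"S3Uri": offline_s3_uri}},
        "RoleArn": role_arn,
    }


# All values below are placeholders for illustration.
request = create_feature_group_request(
    name="customer-features",
    sample_record={"c_customer_sk": 1, "c_first_name": "Ana",
                   "event_time": 1700000000.0},
    record_id="c_customer_sk",
    event_time="event_time",
    role_arn="arn:aws:iam::111122223333:role/FeatureStoreRole",
    offline_s3_uri="s3://amzn-s3-demo-bucket/feature-store/",
)
```

The resulting dictionary could be passed as `boto3.client("sagemaker").create_feature_group(**request)`, assuming the role has the required Feature Store and Amazon S3 permissions.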

We have now created features with SageMaker Data Wrangler and stored those features in SageMaker Feature Store. We showed an example workflow for feature engineering in the SageMaker Data Wrangler UI.

Clean up

If your work with SageMaker Data Wrangler is complete, delete the resources you created to avoid incurring additional fees.

In SageMaker Studio, close all the tabs, then on the File menu, choose Shut Down.

When prompted, choose Shutdown All.

Shutdown might take a few minutes, depending on the instance type. Make sure all the apps associated with each user profile were deleted. If any remain, manually delete the apps under each user profile created by the CloudFormation template.

On the Amazon S3 console, empty any S3 buckets that were created from the CloudFormation template when provisioning clusters.

The bucket names should be prefixed with either the CloudFormation stack name or cf-templates-.

On the Amazon EFS console, delete the SageMaker Studio file system.

You can confirm that you have the correct file system by choosing the file system ID and confirming the tag ManagedByAmazonSageMakerResource on the Tags tab.

On the AWS CloudFormation console, select the stack you created and choose Delete.

You’ll receive an error message, which is expected. We’ll come back to this and clean it up in the subsequent steps.

Identify the VPC that was created by the CloudFormation stack, named dw-emr-, and follow the prompts to delete the VPC.

Return to the AWS CloudFormation console and retry the stack deletion for dw-emr-.

All the resources provisioned by the CloudFormation template described in this post have now been removed from your account.

Conclusion

In this post, we went over how to apply fine-grained access control with Lake Formation and access the data using Amazon EMR as a data source in SageMaker Data Wrangler, how to transform and analyze a dataset, and how to export the resulting features to SageMaker Feature Store through a Jupyter notebook. After visualizing our dataset using SageMaker Data Wrangler’s built-in analytical features, we further enhanced our data flow. Notably, we built the data preparation pipeline in the visual interface without writing a single line of code.

To get started with SageMaker Data Wrangler, refer to Prepare ML Data with Amazon SageMaker Data Wrangler.

About the Authors

Ajjay Govindaram is a Senior Solutions Architect at AWS. He works with strategic customers who are using AI/ML to solve complex business problems. His experience lies in providing technical direction as well as design assistance for modest to large-scale AI/ML application deployments. His knowledge ranges from application architecture to big data, analytics, and machine learning. He enjoys listening to music while resting, experiencing the outdoors, and spending time with his loved ones.

Isha Dua is a Senior Solutions Architect based in the San Francisco Bay Area. She helps AWS enterprise customers grow by understanding their goals and challenges, and guides them on how they can architect their applications in a cloud-native manner while ensuring resilience and scalability. She’s passionate about machine learning technologies and environmental sustainability.

Parth Patel is a Senior Solutions Architect at AWS in the San Francisco Bay Area. Parth guides enterprise customers to accelerate their journey to the cloud and helps them adopt and grow on the AWS Cloud successfully. He is passionate about machine learning technologies, environmental sustainability, and application modernization.

Read more: AWS Machine Learning Blog