Amazon SageMaker provides a suite of built-in algorithms, pre-trained models, and pre-built solution templates to help data scientists and machine learning (ML) practitioners get started on training and deploying ML models quickly. You can use these algorithms and models for both supervised and unsupervised learning. They can process various types of input data, including tabular, image, and text.

The SageMaker XGBoost algorithm allows you to easily run XGBoost training and inference on SageMaker. XGBoost (eXtreme Gradient Boosting) is a popular and efficient open-source implementation of the gradient boosted trees algorithm. Gradient boosting is a supervised learning algorithm that attempts to accurately predict a target variable by combining an ensemble of estimates from a set of simpler and weaker models. The XGBoost algorithm performs well in ML competitions because of its robust handling of a variety of data types, relationships, distributions, and the variety of hyperparameters that you can fine-tune. You can use XGBoost for regression, classification (binary and multiclass), and ranking problems. You can use GPUs to accelerate training on large datasets.

Today, we are happy to announce that SageMaker XGBoost now offers fully distributed GPU training.

Starting with version 1.5-1, you can use all GPUs on multi-GPU instances. This feature addresses the need for fully distributed GPU training on large datasets: you can use multiple Amazon Elastic Compute Cloud (Amazon EC2) GPU instances and all GPUs on each instance.

Distributed GPU training with multi-GPU instances

With SageMaker XGBoost version 1.2-2 or later, you can use one or more single-GPU instances for training. The hyperparameter tree_method needs to be set to gpu_hist. When using more than one instance (distributed setup), the data needs to be divided among instances, following the same steps as the non-GPU distributed training described in XGBoost Algorithm. Although this option is performant and can be used in various training setups, it doesn’t extend to using all GPUs on multi-GPU instances such as g5.12xlarge.
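As a rough illustration of the single-GPU path, the following sketch collects the settings described above. The helper names are hypothetical, and the objective and num_round values are placeholders. The use of ShardedByS3Key to divide S3 objects among instances is an assumption based on the general SageMaker distributed training setup, not something this post states.

```python
def single_gpu_hyperparameters() -> dict:
    """Hyperparameters for the single-GPU path (SageMaker XGBoost 1.2-2 or later)."""
    return {
        "tree_method": "gpu_hist",       # required for GPU training
        "objective": "binary:logistic",  # placeholder task
        "num_round": "1000",             # placeholder number of rounds
    }


def single_gpu_distribution(instance_count: int) -> str:
    """Pick the S3 data distribution for the training channel.

    Assumption: with more than one instance, the input files are sharded
    across instances by S3 key; a single instance reads everything.
    """
    if instance_count < 1:
        raise ValueError("instance_count must be at least 1")
    return "ShardedByS3Key" if instance_count > 1 else "FullyReplicated"


def single_gpu_training_input(s3_uri: str, instance_count: int):
    """Build the training channel (requires the sagemaker package)."""
    from sagemaker.inputs import TrainingInput

    return TrainingInput(
        s3_uri,
        distribution=single_gpu_distribution(instance_count),
        content_type="text/csv",
    )
```

With sharding, each instance downloads only its share of the files, so the dataset should be split into at least as many files as there are instances.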

With SageMaker XGBoost version 1.5-1 or later, you can use all GPUs on each instance when using multi-GPU instances. The ability to use all GPUs in a multi-GPU instance is provided by integrating the Dask framework.

You can use this setup to complete training faster. Apart from saving time, this option also helps you work around blockers such as (soft) limits on the maximum number of usable instances, or a training job that is unable to provision a large number of single-GPU instances.

The configurations to use this option are the same as the previous option, except for the following differences:

Add the new hyperparameter use_dask_gpu_training with string value true.

When creating TrainingInput, set the distribution parameter to FullyReplicated, whether using single or multiple instances. The underlying Dask framework will carry out the data load and split the data among Dask workers. This is different from the data distribution setting for all other distributed training with SageMaker XGBoost.

Note that splitting data into smaller files still applies for Parquet, where Dask will read each file as a partition. Because you’ll have a Dask worker per GPU, the number of files should be greater than instance count * GPU count per instance. Also, making each file too small and having a very large number of files can degrade performance. For more information, see Avoid Very Large Graphs. For CSV, we still recommend splitting up large files into smaller ones to reduce data download time and enable quicker reads. However, it’s not a requirement.
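The file-count guideline for Parquet can be checked with simple arithmetic before launching a job. The sketch below uses hypothetical helper names and assumes one Dask worker per GPU, as described above.

```python
def dask_worker_count(instance_count: int, gpus_per_instance: int) -> int:
    """One Dask worker runs per GPU, so workers = instances * GPUs per instance."""
    return instance_count * gpus_per_instance


def has_enough_parquet_files(num_files: int, instance_count: int,
                             gpus_per_instance: int) -> bool:
    """Return True if the number of Parquet files exceeds the number of workers."""
    return num_files > dask_worker_count(instance_count, gpus_per_instance)


# Example: 1,419 files on 12 instances with 4 GPUs each (48 workers).
print(dask_worker_count(12, 4))
print(has_enough_parquet_files(1419, 12, 4))
```

A check like this only covers the lower bound. It does not detect the opposite problem of too many very small files.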

Currently, the supported input formats with this option are:

text/csv

application/x-parquet

The following input mode is supported:

File mode

The code will look similar to the following:

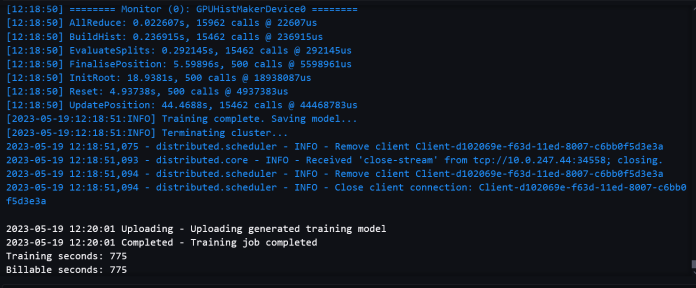
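For readers who cannot view the image, here is a minimal sketch of what such a configuration might look like with the SageMaker Python SDK. It is not the code from the image. The function names, role, S3 locations, objective, and round count are placeholders, and the instance type and count are only example values.

```python
def dask_gpu_hyperparameters(num_round: int = 1000) -> dict:
    """Hyperparameters for the multi-GPU (Dask) path, version 1.5-1 or later."""
    return {
        "objective": "binary:logistic",   # placeholder task
        "num_round": str(num_round),
        "tree_method": "gpu_hist",        # same as the single-GPU option
        "use_dask_gpu_training": "true",  # string value, not a boolean
    }


def launch_dask_gpu_training(role: str, train_s3_uri: str, output_s3_uri: str,
                             instance_count: int = 8,
                             instance_type: str = "ml.g4dn.12xlarge"):
    """Start a training job that uses all GPUs on each instance.

    Requires the sagemaker package and AWS credentials; all arguments
    are placeholders supplied by the caller.
    """
    import sagemaker
    from sagemaker import image_uris
    from sagemaker.estimator import Estimator
    from sagemaker.inputs import TrainingInput

    session = sagemaker.Session()
    image_uri = image_uris.retrieve(
        "xgboost", session.boto_region_name, version="1.5-1"
    )
    estimator = Estimator(
        image_uri=image_uri,
        role=role,
        instance_count=instance_count,
        instance_type=instance_type,
        output_path=output_s3_uri,
        input_mode="File",  # the supported input mode for this option
        hyperparameters=dask_gpu_hyperparameters(),
        sagemaker_session=session,
    )
    # FullyReplicated for single or multiple instances; Dask splits the data.
    train_input = TrainingInput(
        train_s3_uri,
        distribution="FullyReplicated",
        content_type="application/x-parquet",
    )
    estimator.fit({"train": train_input})
    return estimator
```

Keeping the hyperparameters in a separate function makes it easy to verify that use_dask_gpu_training is passed as the string "true" before starting a billable job.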

The following screenshots show a successful training job log from the notebook.

Benchmarks

We benchmarked evaluation metrics to ensure that the model quality didn’t deteriorate with the multi-GPU training path compared to single-GPU training. We also benchmarked on large datasets to ensure that our distributed GPU setups were performant and scalable.

Billable time refers to the absolute wall-clock time. Training time is only the XGBoost training time, measured from the train() call until the model is saved to Amazon Simple Storage Service (Amazon S3).

Performance benchmarks on large datasets

The use of multi-GPU is usually appropriate for large datasets with complex training. We created a dummy dataset with 2,497,248,278 rows and 28 features for testing. The dataset was 150 GB and composed of 1,419 files. Each file was sized between 105–115 MB. We saved the data in Parquet format in an S3 bucket. To simulate somewhat complex training, we used this dataset for a binary classification task, with 1,000 rounds, to compare performance between the single-GPU training path and the multi-GPU training path.

The following table contains the billable training time and performance comparison between the single-GPU training path and the multi-GPU training path.

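Single-GPU Training Path

| Instance Type | Instance Count | Billable Time / Instance (s) | Training Time (s) |
| --- | --- | --- | --- |
| g4dn.xlarge | 20 | Out of Memory | |
| g4dn.2xlarge | 20 | Out of Memory | |
| g4dn.4xlarge | 15 | 1710 | 1551.9 |
| g4dn.4xlarge | 16 | 1592 | 1412.2 |
| g4dn.4xlarge | 17 | 1542 | 1352.2 |
| g4dn.4xlarge | 18 | 1423 | 1281.2 |
| g4dn.4xlarge | 19 | 1346 | 1220.3 |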

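Multi-GPU Training Path (with Dask)

| Instance Type | Instance Count | Billable Time / Instance (s) | Training Time (s) |
| --- | --- | --- | --- |
| g4dn.12xlarge | 7 | Out of Memory | |
| g4dn.12xlarge | 8 | 1143 | 784.7 |
| g4dn.12xlarge | 9 | 1039 | 710.73 |
| g4dn.12xlarge | 10 | 978 | 676.7 |
| g4dn.12xlarge | 12 | 940 | 614.35 |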

We can see that using multi-GPU instances results in lower training time and lower overall time. The single-GPU training path still has an advantage: each instance downloads and reads only part of the data, so data download time is low. It also doesn’t incur Dask’s overhead. Therefore, the difference between training time and total time is smaller on the single-GPU path. However, because it uses more GPUs, the multi-GPU setup can cut training time significantly.
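To make the comparison concrete, the following sketch computes the gap between billable time and training time for one run from each path, using figures from the benchmark tables. The helper name is hypothetical, and the choice of which two runs to compare is only illustrative.

```python
# (billable seconds per instance, training seconds), taken from the benchmark tables.
RUNS = {
    "single-GPU, 15 x g4dn.4xlarge": (1710, 1551.9),
    "multi-GPU, 8 x g4dn.12xlarge": (1143, 784.7),
}


def overhead_seconds(billable: float, training: float) -> float:
    """Time spent outside XGBoost training (data download, loading, setup)."""
    return round(billable - training, 1)


for name, (billable, training) in RUNS.items():
    print(f"{name}: overhead {overhead_seconds(billable, training)} s")

# Ratio of training times between the two runs.
speedup = RUNS["single-GPU, 15 x g4dn.4xlarge"][1] / RUNS["multi-GPU, 8 x g4dn.12xlarge"][1]
print(f"training-time ratio: {speedup:.2f}")
```

The multi-GPU run spends more time outside training, yet its total billable time is still lower because training itself is much shorter.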

You should use an EC2 instance type that has enough memory and compute power to avoid out-of-memory errors when dealing with large datasets.

It’s possible to reduce total time further with the single-GPU setup by using more instances or more powerful instances. However, this might cost more. For example, the following table shows the billable time and cost comparison with the single-GPU instance g4dn.8xlarge.

Single-GPU Training Path

| Instance Type | Instance Count | Billable Time / Instance (s) | Cost ($) |
| --- | --- | --- | --- |
| g4dn.8xlarge | 15 | 1679 | 15.22 |
| g4dn.8xlarge | 17 | 1509 | 15.51 |
| g4dn.8xlarge | 19 | 1326 | 15.22 |

Multi-GPU Training Path (with Dask)

| Instance Type | Instance Count | Billable Time / Instance (s) | Cost ($) |
| --- | --- | --- | --- |
| g4dn.12xlarge | 8 | 1143 | 9.93 |
| g4dn.12xlarge | 10 | 978 | 10.63 |
| g4dn.12xlarge | 12 | 940 | 12.26 |

Cost calculation is based on the On-Demand price for each instance. For more information, refer to Amazon EC2 G4 Instances.
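The cost figures can be approximated as instance count times billable time times the hourly price. The sketch below shows that arithmetic. The hourly prices are assumptions (they are not stated in this post and vary by Region and over time), and the function name is hypothetical.

```python
# Assumed On-Demand hourly prices in USD. These are NOT given in the post;
# check current pricing for your Region before relying on them.
ASSUMED_HOURLY_PRICE_USD = {
    "g4dn.8xlarge": 2.176,
    "g4dn.12xlarge": 3.912,
}


def training_cost_usd(instance_type: str, instance_count: int,
                      billable_seconds: float) -> float:
    """Estimate cost as instance-hours multiplied by the assumed hourly price."""
    instance_hours = instance_count * billable_seconds / 3600
    return round(instance_hours * ASSUMED_HOURLY_PRICE_USD[instance_type], 2)


print(training_cost_usd("g4dn.8xlarge", 15, 1679))
print(training_cost_usd("g4dn.12xlarge", 10, 978))
```

Under these assumed prices, the estimates line up with the costs in the tables, which suggests that fewer, larger instances can be cheaper overall despite a higher hourly price.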

Model quality benchmarks

For model quality, we compared evaluation metrics between the Dask GPU option and the single-GPU option, and ran training on various instance types and instance counts. For different tasks, we used different datasets and hyperparameters, with each dataset split into training, validation, and test sets.

For a binary classification (binary:logistic) task, we used the HIGGS dataset in CSV format. The training split of the dataset has 9,348,181 rows and 28 features. The number of rounds used was 1,000. The following table summarizes the results.

Multi-GPU Training with Dask

| Instance Type | Num GPUs / Instance | Instance Count | Billable Time / Instance (s) | Accuracy % | F1 % | ROC AUC % |
| --- | --- | --- | --- | --- | --- | --- |
| g4dn.2xlarge | 1 | 1 | 343 | 75.97 | 77.61 | 84.34 |
| g4dn.4xlarge | 1 | 1 | 413 | 76.16 | 77.75 | 84.51 |
| g4dn.8xlarge | 1 | 1 | 413 | 76.16 | 77.75 | 84.51 |
| g4dn.12xlarge | 4 | 1 | 157 | 76.16 | 77.74 | 84.52 |

For regression (reg:squarederror), we used the NYC green cab dataset (with some modifications) in Parquet format. The training split of the dataset has 72,921,051 rows and 8 features. The number of rounds used was 500. The following table shows the results.

Multi-GPU Training with Dask

| Instance Type | Num GPUs / Instance | Instance Count | Billable Time / Instance (s) | MSE | R2 | MAE |
| --- | --- | --- | --- | --- | --- | --- |
| g4dn.2xlarge | 1 | 1 | 775 | 21.92 | 0.7787 | 2.43 |
| g4dn.4xlarge | 1 | 1 | 770 | 21.92 | 0.7787 | 2.43 |
| g4dn.8xlarge | 1 | 1 | 705 | 21.92 | 0.7787 | 2.43 |
| g4dn.12xlarge | 4 | 1 | 253 | 21.93 | 0.7787 | 2.44 |

Model quality metrics are similar between the multi-GPU (Dask) training option and the existing training option. Model quality remains consistent when using a distributed setup with multiple instances or GPUs.
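One way to quantify "similar" is to look at the spread of each metric across instance types. The sketch below does this for the HIGGS binary classification results reported above. The helper name is hypothetical.

```python
# HIGGS results (percent) in table order:
# g4dn.2xlarge, g4dn.4xlarge, g4dn.8xlarge, g4dn.12xlarge
HIGGS_METRICS = {
    "accuracy": [75.97, 76.16, 76.16, 76.16],
    "f1": [77.61, 77.75, 77.75, 77.74],
    "roc_auc": [84.34, 84.51, 84.51, 84.52],
}


def metric_spread(values: list) -> float:
    """Difference between the best and worst value, in percentage points."""
    return round(max(values) - min(values), 2)


for name, values in HIGGS_METRICS.items():
    print(f"{name}: spread {metric_spread(values)} percentage points")
```

Every metric varies by less than 0.2 percentage points across the four configurations, including the 4-GPU g4dn.12xlarge run.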

Conclusion

In this post, we gave an overview of how you can use different combinations of instance type and instance count for distributed GPU training with SageMaker XGBoost. For most use cases, you can use single-GPU instances. This option provides a wide range of instances to choose from and is very performant. You can use multi-GPU instances for training with large datasets and many rounds, where they can provide quick training with a smaller number of instances. Overall, you can use SageMaker XGBoost’s distributed GPU setup to significantly speed up your XGBoost training.

To learn more about SageMaker and distributed training using Dask, check out Amazon SageMaker built-in LightGBM now offers distributed training using Dask.

About the Authors

Dhiraj Thakur is a Solutions Architect with Amazon Web Services. He works with AWS customers and partners to provide guidance on enterprise cloud adoption, migration, and strategy. He is passionate about technology and enjoys building and experimenting in the analytics and AI/ML space.

Dewan Choudhury is a Software Development Engineer with Amazon Web Services. He works on Amazon SageMaker’s algorithms and JumpStart offerings. Apart from building AI/ML infrastructures, he is also passionate about building scalable distributed systems.

Dr. Xin Huang is an Applied Scientist for Amazon SageMaker JumpStart and Amazon SageMaker built-in algorithms. He focuses on developing scalable machine learning algorithms. His research interests are in the area of natural language processing, explainable deep learning on tabular data, and robust analysis of non-parametric space-time clustering. He has published many papers in ACL, ICDM, KDD conferences, and Royal Statistical Society: Series A journal.

