In this post, I discuss Amazon Relational Database Service (Amazon RDS) Multi-AZ DB cluster configurations for Amazon RDS for MySQL and Amazon RDS for PostgreSQL database instances.

When you create a Multi-AZ DB cluster, Amazon RDS maintains a primary copy and two readable standby copies of your data. If there are problems with the primary copy, Amazon RDS automatically fails over to a readable standby copy to provide continued availability of the data. The primary and the two readable standby copies are maintained in three different Availability Zones. Having separate Availability Zones greatly reduces the likelihood that all three copies will be affected concurrently by most types of disturbances. Proper management of the data, simple reconfiguration, and reliable user access to the copies are key to addressing the high availability requirements that customer environments demand.

Basic design of RDS Multi-AZ DB clusters

An RDS Multi-AZ database (DB) cluster is a three-node cluster configuration designed to offer high availability, reduced write latency, and cost-effectiveness, particularly for those who require read replicas. An RDS Multi-AZ DB cluster manages data replication between one primary and two standby DB instances in three separate Availability Zones. The primary DB instance provides both read and write capability. The standby DB instances provide read capability and act as automatic failover targets if the primary instance fails.

The new Multi-AZ cluster deployment uses the database engine’s native replication, together with database instance types that have local NVMe storage and attached Amazon Elastic Block Store (Amazon EBS) volumes for durable storage, to deliver high availability, performance, and throughput in a single deployment of Amazon RDS. As with the existing RDS Multi-AZ architecture, Amazon RDS automatically detects and manages failover for high availability. It also automates the time-consuming administrative tasks of configuring, monitoring, and maintaining Multi-AZ databases, freeing you to focus on your applications.

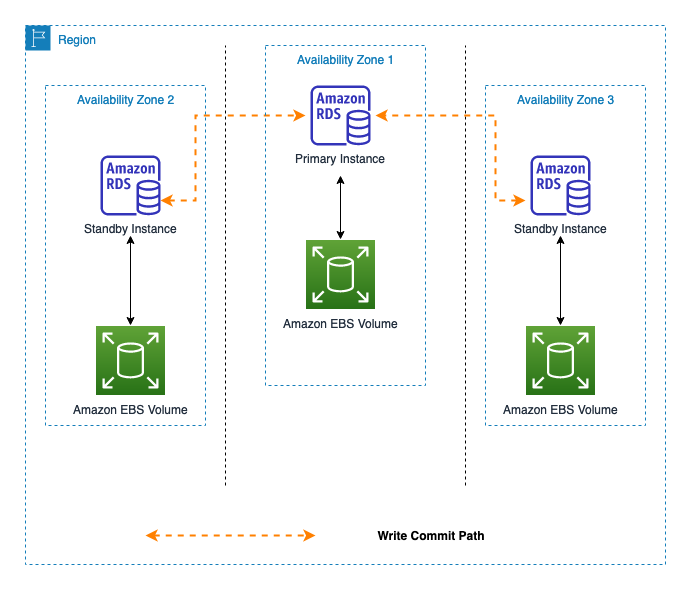

The diagram illustrates the three RDS DB instances in a Multi-AZ DB cluster: the primary instance (shown in the middle) and the two readable standby instances (shown on the right and left). In this example, DNS directs the application to the primary DB instance in Availability Zone 1 (AZ1). The primary is reached through the writer endpoint, can handle both read and write traffic, and serves the primary copy of the data from a database storage volume in AZ1. After the application writes to the primary instance through the writer endpoint, the database replication process propagates the writes to the standby instances (the dotted-line path).

Figure 1: Diagram of a Multi-AZ DB cluster write path

During normal operation, there are three active Amazon RDS instances in the replication process. Each instance manages one database storage volume with a full copy of the data. At any moment in time, each instance is assigned a specific role. One is the primary instance, which exposes an external endpoint serving both read and write traffic through which users access their data. The other two are the standbys, which act as secondary instances that semi-synchronously write all data that they receive from the primary. Semi-synchronous replication means that the primary won’t acknowledge a commit to a client until one of the standbys has acknowledged receiving the transaction. This behavior ensures that the transaction is persisted on at least two machines. It also reduces the perceived write latency in the steady state, because the client experiences the best-of-two latency: the commit waits only for the faster of the two standby acknowledgments. Read operations can also be served by the two readable standbys, because the database server process runs on each standby instance as well and is exposed through the reader endpoint. Consequently, the standby copies of the data are available to users for read scalability.
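The best-of-two effect can be illustrated with a small simulation. This is a minimal sketch, not how Amazon RDS measures or implements anything: the function names are hypothetical, and the exponential distribution and 5 ms mean for acknowledgment times are assumptions chosen only for illustration.

```python
import random


def commit_latency_ms(standby_ack_ms):
    """Replication wait for one commit: the primary acknowledges the client
    as soon as the fastest standby has acknowledged the transaction."""
    return min(standby_ack_ms)


def simulate(trials=10_000, mean_ack_ms=5.0, seed=0):
    """Compare waiting on one standby with waiting on the faster of two.

    Acknowledgment times are drawn from an exponential distribution, which
    is an assumption for illustration, not a measured RDS behavior.
    """
    rng = random.Random(seed)
    single_total = 0.0
    best_of_two_total = 0.0
    for _ in range(trials):
        ack_a = rng.expovariate(1 / mean_ack_ms)
        ack_b = rng.expovariate(1 / mean_ack_ms)
        single_total += ack_a
        best_of_two_total += commit_latency_ms([ack_a, ack_b])
    return single_total / trials, best_of_two_total / trials


if __name__ == "__main__":
    single, best_of_two = simulate()
    print(f"mean wait, one standby:        {single:.2f} ms")
    print(f"mean wait, faster of two:      {best_of_two:.2f} ms")
```

Because the faster of two acknowledgments can never be slower than either one alone, the average wait with two standbys is at most the average wait with one.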

If there is an availability issue, RDS automatically promotes a standby instance to the primary role. This event is referred to as a failover. Availability is restored by redirecting clients to the new primary instance through DNS. The relevant IP address records in the results from client DNS queries have very low time-to-live values. The low time-to-live is intended to inhibit long-term caching of the name-to-address information. This causes the client to refresh the information sooner in the failover process, picking up the DNS redirection changes more quickly.
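On the client side, the low time-to-live only helps if the application resolves the endpoint name again when it reconnects, rather than holding on to an old IP address. The following is a minimal sketch of that idea using the Python standard library. The `connect` callable, the retry counts, and the delay are assumptions for illustration; real database drivers and connection pools have their own reconnect settings.

```python
import socket
import time


def resolve(host, port, resolver=socket.getaddrinfo):
    """Return the IP addresses the endpoint name currently resolves to."""
    infos = resolver(host, port, type=socket.SOCK_STREAM)
    return [info[4][0] for info in infos]


def connect_with_retry(host, port, connect, attempts=5, delay_s=1.0,
                       resolver=socket.getaddrinfo, sleep=time.sleep):
    """Re-resolve the endpoint on every attempt so that a DNS change made
    during failover is picked up instead of retrying a stale address.

    `connect` is a hypothetical callable (address, port) -> connection that
    raises OSError when the address is unreachable.
    """
    last_error = None
    for _ in range(attempts):
        for address in resolve(host, port, resolver):
            try:
                return connect(address, port)
            except OSError as exc:
                last_error = exc
        sleep(delay_s)
    raise ConnectionError(f"could not reach {host}:{port}") from last_error
```

Note that operating systems, language runtimes, and intermediate resolvers may cache DNS answers on their own, so re-resolving in application code is necessary but not always sufficient.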

Generally, failover events are rare, but they do occur. For situations in which Amazon RDS detects problems, the failover is automatic. You can also manually initiate failover through the Amazon RDS API or Amazon RDS console. For more information, see Manually failing over a Multi-AZ DB cluster.
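As a rough sketch of the API route, the boto3 RDS client exposes a `failover_db_cluster` operation. The helper name and the cluster identifier below are hypothetical, and the client is passed in so that the function can be exercised without AWS credentials. Check the current API reference for required permissions and behavior before relying on it.

```python
def fail_over_cluster(rds_client, cluster_id, target_instance_id=None):
    """Request a manual failover of a Multi-AZ DB cluster.

    rds_client is expected to be a boto3 RDS client, for example
    boto3.client("rds"). If target_instance_id is omitted, the service
    chooses which readable standby to promote.
    """
    params = {"DBClusterIdentifier": cluster_id}
    if target_instance_id is not None:
        params["TargetDBInstanceIdentifier"] = target_instance_id
    return rds_client.failover_db_cluster(**params)


# Example usage (requires boto3 and AWS credentials; identifier is made up):
#   import boto3
#   fail_over_cluster(boto3.client("rds"), "my-multi-az-cluster")
```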

The replication process has limited visibility outside itself and is therefore incapable of making some of the more strategic decisions. For example, it doesn’t know about user connectivity issues, local or regional outages, or the state of its peer database instance. For this reason, the database instances are monitored and managed by a monitoring system on each instance that has access to more critical information and periodically queries the instances for status. When appropriate, the monitoring system takes action to ensure that availability and performance requirements are met. I discuss the monitoring system in more detail later in this post.

The availability and durability improvements provided by a Multi-AZ DB cluster come at a minimal performance cost. In a normal use case, the replication layers are connected and semi-synchronous write operations to the readable standby database storage volumes occur. Each readable standby instance and its volume are in a distinct and geographically distant Availability Zone. Assessment shows up to two-times faster commits for writes as compared to a Multi-AZ DB instance deployment. However, the actual impact on real-world use cases is highly dependent on customer workload.

This design enables AWS to provide a service level agreement (SLA) that exceeds 99.95 percent availability to customer data. To learn more, see the Amazon RDS Service Level Agreement.
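To put an availability percentage in more concrete terms, it can be converted into a downtime budget. The following sketch assumes a 30-day month and a simple "percentage of total minutes" interpretation. The SLA document itself defines how uptime is actually measured, so treat this as back-of-the-envelope arithmetic only.

```python
def downtime_budget_minutes(availability_percent, days=30):
    """Minutes of downtime per period that a given availability allows,
    assuming availability is a plain fraction of total minutes."""
    total_minutes = days * 24 * 60
    return total_minutes * (100 - availability_percent) / 100


if __name__ == "__main__":
    budget = downtime_budget_minutes(99.95)
    print(f"99.95 percent over 30 days: about {budget:.1f} minutes")
```

Under these assumptions, 99.95 percent over a 30-day month (43,200 minutes) corresponds to about 21.6 minutes of allowed downtime.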

Intricacies of the implementation

You might think that the design of a volume replication facility is rather simple and straightforward. However, the actual implementation is fairly complex. This is because it must account for all the predicaments that three networked, discrete instances and volumes might experience, inside a constantly changing and sometimes disrupted environment.

Normal ongoing replication assumes that everything is in reasonable working order and is performing well: the DB instances are available, regular instance monitoring is functional, the storage volumes are available, and the network is performing as expected. But what happens when one or more of these pieces isn’t working as expected? Let’s examine some of the potential problems and their solutions.

Connectivity issues and synchronization

Occasionally, the primary and standby instances aren’t connected to each other, either due to a problem or a deliberate administrative action. Ongoing replication isn’t possible, and waiting a long time for connectivity to be restored isn’t acceptable. When connectivity is lost, the instances wait for a decision to be made by the monitoring system. When the monitoring system detects this condition, it directs a standby instance to assume the primary role (failover) and to proceed on its own without replication. There is now only one current copy of the data, and the other copy is becoming stale. In a Multi-AZ DB instance deployment, the standby is a cold standby, and data replication happens at the database storage layer. After failover, the database engine performs crash recovery to start the database, so failover time is determined by the engine crash recovery time, which is typically 1–2 minutes. In a Multi-AZ DB cluster deployment, we use engine-native replication of data, and the database engines on the standbys are hot. The failover time depends more on the apply lag on the standby and the DNS propagation time, providing an improved failover time of typically 20 seconds.

Another architectural change in the RDS Multi-AZ DB cluster is the move from a multi-tenant, single-node centralized monitoring and recovery system to a single-tenant, consensus-based monitoring and recovery solution running on the same Multi-AZ DB cluster nodes as the database. This allows us to monitor database health at a much higher frequency and initiate failover more rapidly. The engine-native replication allows us to rapidly fail over from the primary node without having to wait for database engine start time and crash recovery. This allows us to increase the Amazon RDS availability target to 99.95 percent, as compared to a Single-AZ deployment, which offers a 99.5 percent SLA. Also, connectivity issues are promptly investigated using an automated detection system, and the problem is often quickly corrected. If the issue persists beyond a minimum amount of time, it invokes an alert for operator intervention. It’s therefore expected that most connectivity issues will be relatively short-lived conditions, and the three instances will soon have connectivity restored. When connectivity is restored, the volumes must be resynchronized before returning to the normal ongoing replication state. The resynchronization process ensures that all copies of the data are restored to a consistent state.
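The post does not describe the consensus protocol itself. As a loose illustration of why running the monitor on all three nodes helps, the following sketch uses a simple majority vote, which is far simpler than a real consensus protocol and is not the Amazon RDS implementation. The node names and the function name are hypothetical.

```python
def should_fail_over(health_votes):
    """Decide on failover by simple majority.

    health_votes maps each observing node to True if that node currently
    considers the primary healthy, or False otherwise. Failover is
    triggered only when a strict majority reports the primary unhealthy,
    so one node with a bad network path cannot trigger it alone.
    """
    unhealthy = sum(1 for healthy in health_votes.values() if not healthy)
    return unhealthy > len(health_votes) // 2


if __name__ == "__main__":
    votes = {"node-az1": True, "node-az2": False, "node-az3": False}
    print("fail over:", should_fail_over(votes))
```

Requiring agreement from two of the three nodes is one way to guard against a single observer with a partial view, which is the limitation of a lone replication process that the post describes earlier.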

Fault tolerance in a dynamic environment

Instance or volume availability problems are the most common type of disruption, and they are predominantly resolved by performing a simple failover operation. This restores availability through a readable standby instance and volume. In the unlikely event that a volume experiences a failure, it’s replaced with a new one. This is mainly for durability reasons, but it also helps improve the performance of the subsequent resynchronization of the volumes. After the replacement completes, the volumes are resynchronized and replication is restored.

Instance or volume replacement might also be an option in situations where a component exhibits unexpected behaviors. For example, a substantial or prolonged increase in latency or reduction in bandwidth can indicate an issue with the location of, or the path to, the resource. Replacement is expected to be a permanent solution in such situations. Note that a replacement can impact performance, so it’s only performed when necessary.

There could be situations in which an entire AWS Region or Availability Zone is affected, for example, during extreme weather or a widespread power outage. In such circumstances, automated monitoring systems work to maintain the availability of Amazon RDS instances while taking a cautious approach to avoid further disruption, and they involve human observers who take the requisite actions. The observer uses monitoring and availability information to pause unnecessary automated recovery actions until the underlying issue is resolved.

Summary

Amazon RDS Multi-AZ DB cluster configurations improve the availability and durability of customer data. With automated monitoring for problem detection and subsequent corrective action to restore availability in the event of a disruption, Multi-AZ DB clusters ensure that your data remains intact. For more information, see Amazon RDS High Availability.

Post your questions on AWS re:Post or leave a comment.

About the Author

Ankush Agarwal works at AWS as a Solutions Architect, where he focuses on developing resilient workloads using AWS. He has experience in creating, executing, and enhancing data analytics workloads using AWS services. Ankush spends his free time exploring urban forests and watching science fiction films.
