Many customers are using Amazon Aurora PostgreSQL-Compatible Edition for running their business-critical database workloads in AWS. Aurora PostgreSQL is a fully managed, PostgreSQL-compatible, and ACID-compliant relational database engine that combines the speed, reliability, and manageability of Amazon Aurora with the simplicity and cost-effectiveness of open-source databases.

In this post, we present a database authorization solution using role-based access control (RBAC). You can use the same solution on Amazon Relational Database Service (Amazon RDS) for PostgreSQL.

Terminology

We use the following terminology throughout this post:

PostgreSQL database cluster – This is a collection of databases managed by a PostgreSQL server.

Database – The database contains one or more named schemas, which in turn contain tables and other named object types such as views, functions, and so on.

Role – PostgreSQL manages database access permissions using the concept of roles. A role can be thought of as either a database user, or a group of database users, depending on how the role is set up. Roles can own database objects (for example, tables and functions) and can assign privileges on those objects to other roles to control who has access to which objects. Note that roles are defined at the database cluster level, and so are valid in all databases in the cluster.

Database authentication – This is the process of validating identity in order to control the access to the database resources.

Database authorization – This defines who can do what inside the database.

Least privilege model – With this method, users are given minimum levels of access or permissions needed to perform their job. For example, a business user of an application who runs the sales report only needs read access to the data in selective tables and shouldn’t be able to write or modify the data.

In the following sections, we demonstrate how you can implement database authorization with a set of predefined roles and least access privileges.

Role setup

We categorize authorization into three roles to provide granular access that is applicable to most applications.

Read-only role – This role has access to read the data in the database. An example of users that need a read-only role are the users who generate the reports or need to query the data on an ad hoc basis for troubleshooting business tickets.

Read/write role – This role can read as well as write and modify the data in the database. For example, the read/write role is granted to application users that are defined in the application code to connect to the database to perform the transactions in the tables.

DDL (Data Definition Language) role – This role can read, write, and modify the data, and also alter the schema by making structural changes to the database objects. For example, the DDL role is granted to the users (or developers) who are deploying new code that requires schema changes in the database.

These roles can be created in each database for each schema. The users (application or individual users) can be granted one of the three roles according to their job role for the particular application. You can design and expand this role setup further based on various requirements and scenarios, for example applying additional granularity levels or sub-grouping based on your use cases.

PostgreSQL function code

In PostgreSQL, roles are synonymous to users, with the difference that users can log in to the database but roles can’t. We create the roles, and the roles can then be granted to users according to their required access in the application.

The following sample code creates the function in the PostgreSQL database that creates the three RBAC roles (read-only, read/write, and DDL) with the necessary permissions. The input parameters for this function are as follows:

Schema name – For which the roles and permissions need to be set up.

List of tables – The default value NULL means the roles and permissions are set up for all the tables in the schema. Sometimes because of security policies related to sensitive data, all the tables of a schema aren’t permitted to the roles or users. In that case, if the list of tables is passed, it sets up the permissions only for the specified list of tables.

This example handles only the privileges on tables, but you can enhance the code as needed to handle least privileges on the rest of the object types like sequences, functions, and so on. You can deploy this code in an Aurora PostgreSQL database that has schemas and tables already set up.

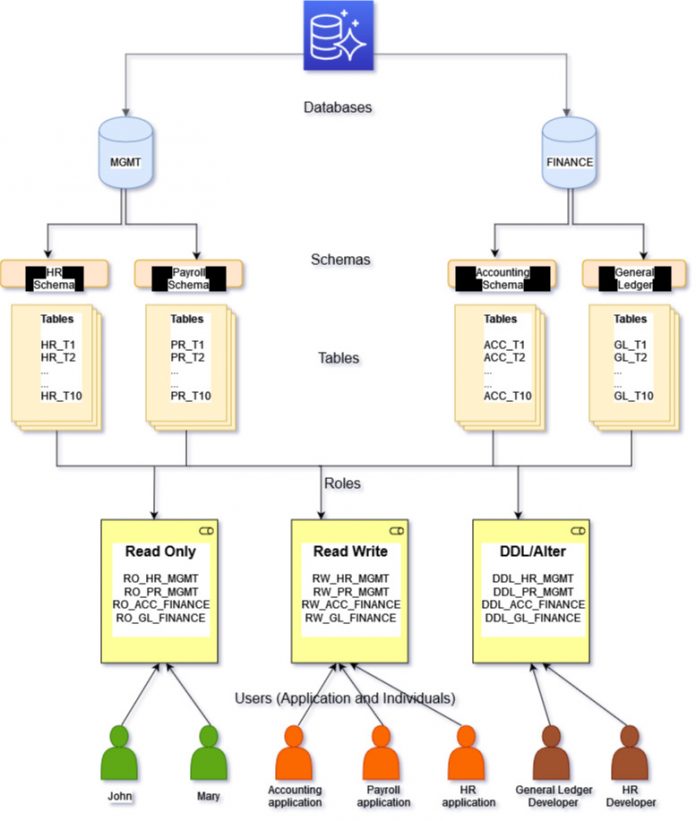

The following diagram describes a typical setup of an Aurora database in a customer environment and shows various roles and permissions that are automatically created by the function provided in this post.

After you create the RBAC roles using this function, you can assign them to the application or individual users who want to use the database. The users are able to perform their job duties within the database (read-only, read/write, or DDL) based on their assigned roles.

Solution overview

To test our PostgreSQL function, complete the following steps:

Create the databases and schemas.

Deploy and call the function to configure the roles and permissions.

Create users.

Grant the configured roles to the users.

Now let’s walk through the complete steps to deploy the solution using the following example. This example illustrates how to set up the database authorization as shown in the previous diagram.

Prerequisites

An AWS account with permissions to create CloudFormation stacks and to create Amazon VPC resources, EC2 instances, and an Aurora database

The prerequisites as mentioned in the post Deploy an Amazon Aurora PostgreSQL DB cluster with recommended best practices using AWS CloudFormation

Please consider the cost incurred for some resources during their use.

EC2 instance with PostgreSQL client (psql) installed to connect to Aurora instance.

Create the databases and schemas

Complete the following steps:

Connect to the EC2 instance

Connect to Aurora database

Create the databases MGMT and FINANCE (these should already exist in the customer environment):

Create the schemas HR and PAYROLL in MGMT, and the schemas ACC and GL in FINANCE

Connect to the mgmt database

Create the HR and PAYROLL schema

Create and populate test tables in HR and PAYROLL schemas.

Deploy and call the function

Now you deploy and call the function in the MGMT database.

Connect to the MGMT database

Create the function by copying the function code from PostgreSQL function code section

Take the before-image of the roles and permissions – run the following SQL statements:

We call the function with a different configuration for each schema.

In the Payroll schema, access to all the tables is granted to the three roles (read-only, read/write, and DDL):

In the HR schema, only t1 and t2 are granted access to the three roles, based on the input parameters to the function:

To check the roles and permissions after calling the function, run the following SQL statements:

Create users and grant the roles

Create the following users in the MGMT database :

JOHN – Their job duty is to run the HR reports

PR_APP – This role is configured as the database connection string in the code of the Payroll application

HR_DEVELOPER – The developer of the HR application

Perform the following tasks:

Connect to MGMT database

Create the users using following SQL statements :

Grant the appropriate roles(the roles were created by the function) to the users

We have demonstrated how the roles and permissions are set up within the database, and these can now be granted to the various database users as per their job duties.

RBAC and IAM database authentication

The recommended database authentication solution is AWS Identity and Access Management (IAM) database authentication, wherein the tokens are stored external to the database in IAM. This is a secured way of authenticating to the database because the passwords aren’t used and the lifetime of the tokens is just 15 minutes, after which the token isn’t valid and a new token needs to be requested to authenticate to the database. After the user is authenticated, they have access to log in to the database and grant the configured roles using RBAC to authorize users to access the required data as per their job duties.

RBAC and AWS Secrets Manager

AWS Secrets Manager is the centralized solution for credential management. You no longer have to store the database credentials (user name and password) in the source code or configuration files of your application. Instead, the application calls Secrets Manager API to retrieve the credentials and pass it to the application for database authentication. Additionally, you can configure Secrets Manager to automatically rotate the secret as per your security policies and guidelines. After authentication occurs, a connection is established to the database, and you can configure access control to determine authorization. Authorization is achieved through RBAC using the three preconfigured roles that we described in this post.

Conclusion

In this post, you learned how to configure Aurora PostgreSQL database authorization using role-based access control. This automation can save you time and resources, enabling you to focus on solving other business problems instead of managing database access.

If you have questions or feedback, leave a comment in the comments section.

About the Authors

Vivek Pinyani is a Data Architect at AWS Professional Services with expertise in Big Data and Database technologies. He focuses on helping customers build robust and performant Data Analytics solutions and Data Lake migrations. In his free time, he loves to spend time with his family, enjoys playing cricket and running.

John Lonappan is a Senior Database Specialist Consultant / Solutions Architect at Amazon Web Services (AWS) with a focus on relational databases. Prior to AWS, John has worked as Database Architect for large Data center providers across the Globe. Outside of work, he is passionate about, Long drives, EV Conversion, playing chess and traveling.

Read MoreAWS Database Blog