Amazon ElastiCache for Redis is a fully managed service compatible with the Redis API. ElastiCache is a fast in-memory data store, and many customers choose it to power some of their most performance-sensitive, real-time applications. Today, we are excited to share that you can now effectively maximize your performance by upgrading from ElastiCache for Redis 7.0 to 7.1, and can scale to 500 million requests per second (RPS) with microsecond response time.

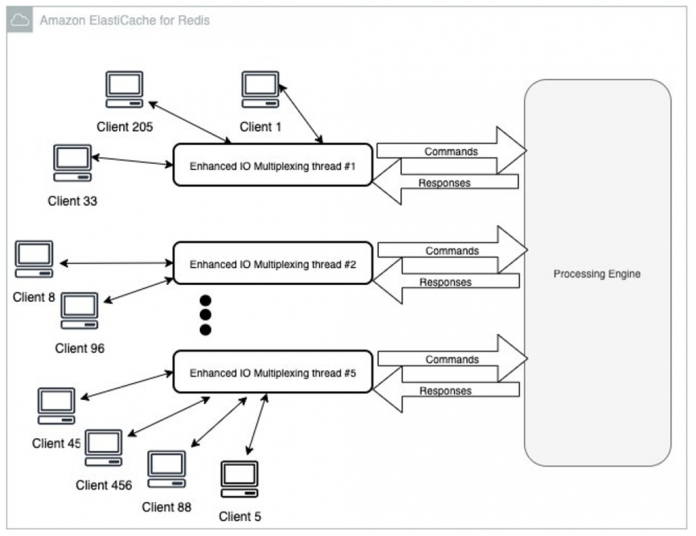

This launch is the latest in our continued push to help you get the most out of ElastiCache performance. In February 2023, we launched ElastiCache for Redis 7.0 with enhanced I/O multiplexing, which is ideal for throughput-bound workloads with multiple client connections, and whose benefits scale with the level of workload concurrency. For example, when using an r6g.xlarge node and running 5,200 concurrent clients, you can achieve up to 72% increased throughput (read and write operations per second) and up to 71% decreased P99 latency, compared with ElastiCache for Redis 6. Enhanced I/O multiplexing combines many client requests into a single channel and improves the efficiency of the Redis main thread, as illustrated in the following figure.

In August 2023, we announced support for Graviton3 (M7g and R7g) instances that deliver up to 28% increased RPS, and up to 21% improved P99 latency compared to Graviton2, on top of the improvement provided by enhanced I/O multiplexing.

Now, with ElastiCache for Redis 7.1, you can get up to double the performance on instances with at least 8 physical cores (2xlarge on Graviton, and 4xlarge on x86). Version 7.1 delivers up to 100% more throughput and 50% lower P99 latency compared to version 7.0. On large enough nodes, for example r7g.4xlarge, you can achieve over 1 million RPS per node, and 500 million RPS per cluster. The leap in performance is achieved with two techniques we refer to as presentation layer offloading and memory access amortization. In this post, we discuss these techniques in more detail and provide a performance analysis.

Redis presentation layer offloading

ElastiCache for Redis uses enhanced I/O threads to handle network I/O and Transport Layer Security (TLS) encryption. With version 7.1, we extended the enhanced I/O threads to also handle the presentation layer logic. By presentation layer, we mean that the enhanced I/O threads now not only read client input, but also parse it into the Redis binary command format, which is then forwarded to the main thread to run. Similarly, on the response path, the main thread hands the binary format output back to the enhanced I/O threads, which format the response and send it to the client. As illustrated in the following diagram, pushing this work to dedicated threads makes better use of parallelism and the available CPU cores in each instance, and lets the Redis main thread do what it does best, which is running commands.
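The division of labor described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the ElastiCache implementation: the function names (`parse_resp`, `execute`, `encode_reply`, `serve`) are hypothetical, the parser handles only simple RESP arrays of bulk strings, and Python threads do not provide true CPU parallelism. The point is which side does which work: parsing and reply formatting happen on the I/O pool, while command execution stays on a single thread.

```python
from concurrent.futures import ThreadPoolExecutor

OK = object()  # sentinel for a simple "+OK" status reply


def parse_resp(raw):
    """I/O-thread side: decode one RESP array of bulk strings into argv.

    Simplification: splitting on CRLF is not binary-safe; a real parser
    would honor the "$<len>" headers instead.
    """
    parts = raw.split(b"\r\n")
    if not parts[0].startswith(b"*"):
        raise ValueError("expected a RESP array")
    argc = int(parts[0][1:])
    # Each argument is a "$<len>" header line followed by a payload line.
    return [parts[2 + 2 * i] for i in range(argc)]


def execute(store, argv):
    """Main-thread side: run an already-parsed command on the keyspace."""
    name = argv[0].upper()
    if name == b"GET":
        return store.get(argv[1])
    if name == b"SET":
        store[argv[1]] = argv[2]
        return OK
    raise ValueError("unsupported command in this sketch")


def encode_reply(value):
    """I/O-thread side: format the main thread's result as a RESP reply."""
    if value is OK:
        return b"+OK\r\n"
    if value is None:
        return b"$-1\r\n"
    return b"$%d\r\n%s\r\n" % (len(value), value)


def serve(store, raw_requests, io_threads=2):
    """Parse and encode on the I/O pool; execute on the calling thread."""
    with ThreadPoolExecutor(max_workers=io_threads) as io_pool:
        parsed = list(io_pool.map(parse_resp, raw_requests))
        results = [execute(store, argv) for argv in parsed]
        return list(io_pool.map(encode_reply, results))
```

Calling `serve` with a SET followed by GETs returns the replies in request order, because `map` preserves ordering even though parsing and encoding are spread across the pool.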

Memory access amortization

Random memory access is an expensive operation that impacts the efficiency of memory-intensive applications. With this release, we introduce memory access amortization (MAA), a technique to reduce memory access costs, especially for big dynamic data structures such as those used in Redis. MAA interleaves the steps of many data structure operations so that their memory accesses proceed in parallel, which reduces memory access latency. In ElastiCache for Redis 7.1, we applied this method to the Redis dictionary, which yields up to a 60% reduction in hash-find and faster command execution. This is depicted in the following example, where the main Redis processing thread receives three parsed commands from the enhanced I/O threads. Next, it interleaves the dictionary-find procedures to simultaneously prefetch the needed data into the CPU cache. In our simple example, the Redis main thread receives the following commands from the enhanced I/O threads: GET “19”, GET “65”, and GET “23”.

The main thread now retrieves those keys from the Redis dictionary. In ElastiCache for Redis 7.0, the hash table walks in the Redis dictionary would have run serially, with Redis waiting idly for memory on each step. In version 7.1, however, the three hash table walks are interleaved so that their memory requests are issued in parallel.
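The scheduling idea can be modeled in Python, with the caveat that Python cannot issue CPU prefetches, so this sketch only counts memory waits and does not measure real latency. Everything here is an assumption made for illustration: the chained hash table, the `crc32`-based slot function, and the helper names are hypothetical and are not the Redis dictionary. Each `yield` marks a point where a real implementation would prefetch the next address and switch to another lookup instead of stalling.

```python
import zlib


def slot(key, size):
    """Deterministic bucket index (stand-in for the real hash function)."""
    return zlib.crc32(key.encode()) % size


def build_table(items, size=8):
    """Chained hash table: a list of buckets, each a list of (key, value)."""
    table = [[] for _ in range(size)]
    for key, value in items:
        table[slot(key, size)].append((key, value))
    return table


def find_steps(table, key):
    """One dictionary find; every yield models one wait on memory."""
    bucket = table[slot(key, len(table))]
    yield  # wait for the bucket head to arrive
    for entry_key, value in bucket:
        yield  # wait for this chain entry to arrive
        if entry_key == key:
            return value
    return None


def run_serial(table, keys):
    """Finish each find before starting the next; waits add up."""
    results, waits = [], 0
    for key in keys:
        walk = find_steps(table, key)
        while True:
            try:
                next(walk)
                waits += 1
            except StopIteration as done:
                results.append(done.value)
                break
    return results, waits


def run_interleaved(table, keys):
    """Advance all finds one step per round; waits in a round overlap."""
    results = [None] * len(keys)
    active = {i: find_steps(table, k) for i, k in enumerate(keys)}
    rounds = 0
    while active:
        advanced = False
        for i in list(active):
            try:
                next(active[i])
                advanced = True
            except StopIteration as done:
                results[i] = done.value
                del active[i]
        if advanced:
            rounds += 1
    return results, rounds
```

For the three keys in the example, `run_serial` pays the sum of all per-lookup waits, while `run_interleaved` pays only as many rounds as the longest single walk, and both return the same values.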

Performance analysis

To benchmark the performance gains that you can realize using ElastiCache for Redis 7.1, we compared it to version 7.0. We used our typical benchmarking setup, with the industry-standard redis-benchmark tool. All tests were run with 500 clients and a mix of 80% GET (read) and 20% SET (write) commands, on a database populated with 3 million 16-byte keys and 512-byte string values. Both the ElastiCache nodes and the client applications were running in the same AWS Availability Zone.
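To make the workload shape concrete, here is a minimal Python sketch of a generator that produces the same mix: 80% GET, 20% SET, 16-byte keys drawn from a 3 million key space, and 512-byte values. It is not how redis-benchmark works internally, and the names (`make_key`, `workload`), the key format, and the uniform key distribution are assumptions chosen for illustration.

```python
import random

KEYSPACE = 3_000_000   # number of distinct keys in the database
VALUE_BYTES = 512      # size of each string value
GET_RATIO = 0.8        # fraction of commands that are reads


def make_key(index):
    """Build a 16-byte key: a 4-character prefix plus 12 digits."""
    return f"key:{index:012d}"


def workload(count, seed=0):
    """Yield `count` commands with roughly an 80/20 GET/SET split."""
    rng = random.Random(seed)  # seeded so runs are repeatable
    value = "x" * VALUE_BYTES
    for _ in range(count):
        key = make_key(rng.randrange(KEYSPACE))
        if rng.random() < GET_RATIO:
            yield ("GET", key)
        else:
            yield ("SET", key, value)
```

Each yielded tuple could be handed to any Redis client by a load driver; the sketch deliberately stops short of opening connections so that it stays self-contained.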

Requests per second gain

The following diagram details results from benchmark runs on different node sizes. It shows the percentage improvement in requests per second (RPS) of ElastiCache for Redis 7.1 compared with 7.0.

We observe an improvement of at least 100% (double) across all tested node sizes. This translates into more than 1 million requests per second on r7g.4xlarge and up. In the same benchmark, we also measured command latencies. The following figure shows the P99 latency improvements.

Latency reduction

We observe that even at peak load with maximum RPS, we are able to reduce the P99 latency by more than 50% per node across all node sizes, bringing it to less than 1 millisecond per request. Throughput is doubled, and at the same time latency is cut by half.
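The link between doubled throughput and halved latency can be sanity-checked with Little's law. The sketch below assumes a closed-loop benchmark in which each of the 500 clients keeps exactly one request in flight (no pipelining), so that concurrency equals throughput times mean response time. It estimates mean latency only, not the P99 figure reported above, and the function name is hypothetical.

```python
def mean_latency_ms(clients, rps):
    """Little's law for a closed loop: latency = concurrency / throughput."""
    return clients * 1000.0 / rps


# 500 clients at 500,000 RPS versus the same clients at 1,000,000 RPS.
before = mean_latency_ms(500, 500_000)
after = mean_latency_ms(500, 1_000_000)
print(f"mean latency: {before} ms -> {after} ms")
```

Under these assumptions, 500 clients at 1 million RPS correspond to a mean response time of 0.5 ms, half of the 1 ms implied at 500,000 RPS, which is consistent with the sub-millisecond results described above.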

Conclusion

On nodes larger than xlarge, regardless of your cluster size or mode, with TLS or without, the improvements introduced in ElastiCache for Redis 7.1 deliver better performance. Upgrade to the newest ElastiCache, and enjoy the speed, at no additional cost, and without any changes to your applications. ElastiCache for Redis 7.1 is available in all AWS Regions.

For more information, see Supported versions. To get started, create a new cluster, or upgrade an existing cluster using the ElastiCache console.

Stay tuned for more performance improvements on ElastiCache for Redis in the future.

Happy caching!

Mickey Hoter is a Principal Product Manager on the Amazon ElastiCache team. He has 20+ years of experience in building software products as a developer, team lead, group manager, and product manager. Prior to joining AWS, Mickey worked for large companies such as SAP and Informatica, and for startups like Zend and ClickTale. Outside of work, he spends most of his time in nature, where most of his hobbies are.

Dan Touitou is a Principal Engineer at AWS, focused on improving ElastiCache Redis efficiency. He has extensive experience optimizing software for network and security devices and storage systems. As an entrepreneur, he co-founded Riverhead Networks, which was later acquired by Cisco Systems, where he served as Distinguished Engineer. Subsequently, Dan held positions as CTO at Huawei Technologies and Chief Scientist at Orchestra Group. Dan earned his PhD in computer science from Tel Aviv University. He won the 2012 Dijkstra Prize in distributed computing for the introduction and first implementation of software transactional memory, and he is a co-inventor on more than 40 patents. In his free time, Dan enjoys being with family and friends, engaging with new technologies, and building audiophile loudspeakers as a hobby.
