The amount of data created or replicated in 2020 reached 64.2 zettabytes, the equivalent of roughly 64 billion one-terabyte hard drives.

Companies account for a major part of global data production. From manufacturing to distribution to sales, businesses track a range of events and collect data to find insights, improve performance, and cut costs. This data typically gets stored in a centralized location, such as a data lake or data warehouse, and is managed by a specialized data team. But the volumes and types of data are growing rapidly. Eventually, data teams become overwhelmed, and businesses get less and less value from their data investments.

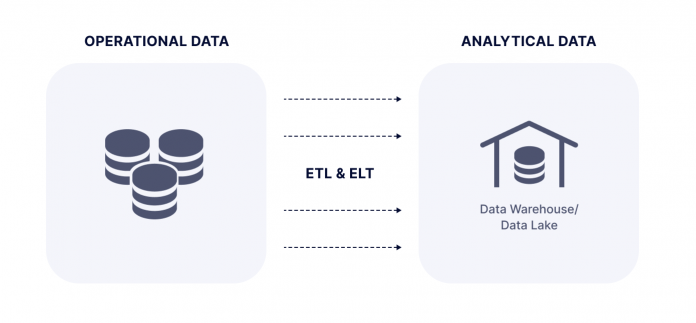

The centralized approach to data management, where operational data from different sources is centralized in a data warehouse or data lake for analysis by a specialized team.

Data mesh is one way out of this deadlock. It’s a decentralized approach to data architecture that enables companies to scale their operations faster and get more value out of data. Data mesh is especially useful for larger companies that collect, manage, and analyze huge data sets.

In this piece, we’ll explore data mesh, its principles, benefits, and challenges, and who should consider using it.

What is Data Mesh Architecture?

How Striim Enables Data Mesh Architecture

Who Should Use Data Mesh Architecture?

A New Way to Manage Data: Try Striim

What is Data Mesh Architecture?

Data mesh is a highly decentralized data architecture in which independent teams are responsible for managing data within their domains. Each domain or department, such as finance or supply chain, becomes a building block in an ecosystem of data products called a mesh.

The concept of data mesh was created by Zhamak Dehghani, director of next tech incubation at the software consultancy ThoughtWorks. Dehghani set out to solve problems caused by centralized data infrastructure. She observed that many of her clients who centralized all of their data in one platform found it hard to manage a huge variety of data sources. A centralized setup also forced teams to change the whole data pipeline when responding to new needs. Teams struggled to handle the influx of data requests from other departments, which stifled innovation, agility, and learning.

Data Mesh Principles

Data mesh architecture is based on four main principles:

Domain ownership: Business domains of a company – supply chain, finance, HR, sales, marketing, customer service – should own the data closest to them. Domain teams are also responsible for serving relevant data to other teams.

Data as a product: Domain teams should treat the data they provide as a high-quality product. This means that data consumers should be satisfied with data quality, formats, and interfaces. Dehghani also recommends that domain teams introduce the role of domain data product owner, an expert responsible for developing, managing, and serving the domain’s data products.

Self-serve data platform: Managing data as a product requires developing a self-serve data platform that supports product owner workflows and removes friction when connecting different sets of infrastructure. The platform provides tooling that allows generalist developers to manage data as a product, so there’s less need for specialized data experts. The platform also offers capabilities such as data product schemas, data product lineage, compute and data locality, and more.

Federated computational governance: The data mesh strategy requires implementing a set of rules that applies to all data products and makes them interoperable. Standardization is vital for enabling domain teams to correlate their products, unify them, or perform other operations. But there also needs to be an equilibrium between decisions that are made locally and decisions imposed globally on all domains.
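To make the four principles more concrete, here is a minimal Python sketch of how they might fit together. It is an illustration only, not a reference implementation: the `DataProduct` fields, the `Mesh` registry, and the two global rules (`ALLOWED_FORMATS` and `REQUIRED_COLUMNS`) are all hypothetical names and policies chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class DataProduct:
    """A domain-owned data set published for other teams (illustrative model)."""
    name: str
    domain: str          # owning business domain, e.g. "finance"
    owner: str           # the domain data product owner
    schema: dict         # column name -> type name
    output_format: str   # how consumers read it, e.g. "parquet"


# Hypothetical global rules that every domain has agreed to follow.
ALLOWED_FORMATS = {"parquet", "avro", "json"}
REQUIRED_COLUMNS = {"event_id", "event_time"}


def governance_violations(product):
    """Return the global rules a product breaks; an empty list means it complies."""
    problems = []
    if not product.owner:
        problems.append("missing data product owner")
    if product.output_format not in ALLOWED_FORMATS:
        problems.append(f"format {product.output_format!r} is not standardized")
    missing = REQUIRED_COLUMNS - set(product.schema)
    if missing:
        problems.append(f"missing required columns: {sorted(missing)}")
    return problems


class Mesh:
    """A self-serve registry: domains publish products that pass the global checks."""

    def __init__(self):
        self._products = {}

    def publish(self, product):
        problems = governance_violations(product)
        if problems:
            raise ValueError("; ".join(problems))
        self._products[(product.domain, product.name)] = product

    def discover(self, domain):
        """List the products a given domain has made available."""
        return [p for (d, _), p in self._products.items() if d == domain]
```

In this sketch, each domain decides locally what goes into its product (its schema beyond the required columns, its name, its owner), while the checks in `governance_violations` stand in for the small set of rules imposed globally to keep products interoperable.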

Data Mesh vs. Data Fabric

The data mesh concept may appear similar to data fabric as both architectures provide access to data across various platforms. But there are several differences between the two.

For one, data fabric brings data to a unified location, while with data mesh, data sets are stored across multiple domains. Also, data fabric is tech-centric because it primarily focuses on technologies, such as purpose-built APIs and how they can be efficiently used to collect and distribute data. Data mesh, however, goes a step further. It not only requires teams to build data products by copying data into relevant data sets but also introduces organizational changes, including the decentralization of data ownership.

There are various interpretations of how data mesh compares to data fabric. And two companies may introduce different tech solutions for data mesh or data fabric depending on their data size and type, security protocols, employee skillsets, and financial resources.

Benefits and Challenges of Data Mesh

Adopting data mesh architecture may provide companies with several benefits, including:

Increased agility: Decentralized data operations and self-serve infrastructure allow teams to be more agile and operate independently, reducing time-to-market and cutting down the IT backlog.

Better quality control: Global data governance guidelines encourage teams to produce and deliver data of high quality and in a standardized format that’s easy to access.

Cross-functional cooperation: Data mesh puts domain experts and product owners in charge of data and encourages closer cooperation between business and IT teams.

Faster data delivery: Self-serve data infrastructure provided by data mesh handles complexities such as identity management, data storage, and monitoring and allows teams to focus on delivering data faster.

Decentralized data architecture also leads to several challenges, such as:

Duplication of data: Repurposing and replicating data from the source domain to serve other domains’ needs may lead to the duplication of data and higher data management costs.

Neglected quality: The existence of multiple data products and pipelines may lead to the neglect of quality principles and huge technical debt.

Change management efforts: Deploying data mesh architecture and decentralized data operations will involve a lot of change management efforts.

Choosing future-proof technologies: Teams will have to carefully decide on which technologies to use to standardize them across the company and ensure they can tackle future challenges.

How Striim Enables Data Mesh Architecture

Striim is a data integration solution that combines real-time data ingestion, stream processing, pipeline monitoring, and real-time delivery with validation in a single product. It continuously ingests a wide variety of high-volume, high-velocity data from a variety of sources including enterprise databases (via low-impact change data capture), log files, messaging systems, Hadoop, cloud applications, and IoT devices in real time.

Striim makes it easy to ingest, process, and deliver real-time data across diverse environments (on-premises, cloud, and hybrid cloud). The diagram below shows an example of a decentralized data architecture enabled by Striim.

By using Striim, the owners of each data domain can manage their own data and make it available to other domains. For example, the domain on the far left processes data from a database (using log-based CDC) and sends events to both a data warehouse and Kafka. The data domain on the right can use Striim to read events from both Kafka and a file system, then combine and analyze this data to generate real-time dashboards. The data domain in the center reads change data from a database as well as sensor data, and uses machine learning to detect (and create alerts for) any anomalies.

Striim enables a decentralized data mesh architecture by empowering teams to manage, analyze, and share data from their respective domains.
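The flow between the left-hand and right-hand domains can be sketched in plain Python. This is a conceptual illustration only: it does not use Striim or a Kafka client, and `EventBus`, `orders_domain`, `reporting_domain`, the `orders.changes` topic name, and the event fields are all hypothetical stand-ins for the components described above.

```python
from collections import defaultdict


class EventBus:
    """In-memory stand-in for a shared messaging layer such as Kafka."""

    def __init__(self):
        self._topics = defaultdict(list)

    def publish(self, topic, event):
        self._topics[topic].append(event)

    def read(self, topic):
        return list(self._topics[topic])


def orders_domain(change_events, bus, warehouse):
    """Left-hand domain: deliver each database change event to two targets."""
    for event in change_events:
        warehouse.append(event)                # copy kept for analytics
        bus.publish("orders.changes", event)   # copy shared with other domains


def reporting_domain(bus, file_rows):
    """Right-hand domain: combine bus events with rows read from a file."""
    regions = {row["customer_id"]: row["region"] for row in file_rows}
    totals = defaultdict(float)
    for event in bus.read("orders.changes"):
        region = regions.get(event["customer_id"], "unknown")
        totals[region] += event["amount"]
    return dict(totals)


# Example run with made-up change events and a one-row lookup file.
bus, warehouse = EventBus(), []
changes = [
    {"op": "insert", "customer_id": 1, "amount": 20.0},
    {"op": "insert", "customer_id": 2, "amount": 5.0},
    {"op": "insert", "customer_id": 1, "amount": 7.5},
]
orders_domain(changes, bus, warehouse)
dashboard = reporting_domain(bus, [{"customer_id": 1, "region": "EMEA"}])
```

The point of the sketch is the ownership boundary: the producing domain decides what it publishes, and the consuming domain builds its own view from the shared topic without asking a central team to change a pipeline.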

Who Should Use Data Mesh Architecture?

The data mesh concept is particularly useful for companies that want to scale quickly and work with large, diverse, and frequently changing data sets.

Companies whose data platforms and data teams are slowing down innovation efforts may also benefit from data mesh. There are several signs of this slowdown: lead times increase as teams need more and more time to deliver projects, ad-hoc solutions get built without input from the centralized data team, and temporary data integration solutions are deployed without using the central data platform.

Data mesh strategy can also benefit teams that are already decentralized or plan to be. The decentralized data architecture empowers individual teams to own and manage their data and provide it to other departments as a product.

Several companies, including Intuit, JPMorgan Chase, Zalando, and HSBC, have mentioned that they have either implemented or are experimenting with data mesh.

An example of data mesh architecture for a financial institution is shown below, where different business units independently manage their own data and share it with the finance and risk management arms of the institution.

An example of data mesh architecture for a financial institution using Striim

A New Way to Manage Data: Try Striim

Data mesh can help teams better organize their ever-growing volumes and types of data. This approach is about moving away from centralized data architecture and having a network of domain teams own the data and handle it as a product. And while data mesh might not be for everyone, more and more companies will consider decentralized data architecture as a way to get more value out of their data operations.

If you’d like to see how Striim can help you enable a decentralized data mesh architecture, request a demo here or try Striim for free.
