The daily volume of third-party and user-generated content (UGC) across industries is increasing exponentially. Startups and companies in social media, gaming, and other industries must protect their customers while keeping operational costs down. Businesses in the broadcasting and media industries often find it difficult to rate content pieces and formats efficiently so that they comply with guidelines for different markets and audiences. Organizations in financial and healthcare services find it challenging to protect personally identifiable information (PII) and protected health information (PHI) across internal and external environments and processes.

In this post, we discuss how you can automate content moderation and compliance with artificial intelligence (AI) and machine learning (ML) to protect online communities, their users, and brands.

The need for content moderation

Content moderation is fundamental to protecting online communities, their members, and members’ personal information. There are also strong business reasons to reconsider how your organization moderates content.

The UGC platform industry is growing at a 26% compound annual growth rate (CAGR) and is expected to reach $10 billion by 2028 (Grand View Research, 2021). According to the Stackla Customer Survey (2019), 79% of consumers say UGC influences their purchase decisions. In addition, 40% of consumers disengage from brands after a single exposure to toxic content, and 85% agree that brands are responsible for moderating the content users share online (BusinessWire, 2021).

Let’s explore other compelling reasons for content moderation across industries:

Social media – Prevent user exposure to inappropriate content on photo and video sharing platforms, such as gaming communities and dating applications. These protections support community growth, longer sessions, higher conversion, and other social media and network metrics.

Gaming – Prevent inappropriate content such as hate speech, profanity, or bullying in in-game chat. Moderating user-generated values such as nicknames and profiles also keeps gamers engaged and active, with no reason to leave the game’s ecosystem.

Brand safety – Reduce the risk of public backlash caused by an unwanted association between your brand, an ad, or the content within ads.

Ecommerce – Keep out illegal or controversial product listings that violate compliance policies and could incur liability as well as buyer and seller churn.

Financial services – Detect and redact PII to keep sensitive user data private. When customers trust your platform, they are more likely to increase their participation, investment, and referrals.

Healthcare – Detect and redact PHI and other sensitive information to keep that data private. Healthcare providers can remain compliant with HIPAA and other regulations and avoid fines.
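
Detecting and redacting PII, as in the financial services item above, can be sketched with the Amazon Comprehend `DetectPiiEntities` operation. This is a minimal sketch that makes several assumptions. First, `client` is a boto3 Comprehend client, such as `boto3.client("comprehend")`. Second, the text is English. Third, entity spans do not overlap. The helper names `redact_entities` and `detect_and_redact` and the 0.8 score threshold are illustrative and not part of the service. The sketch covers PII only and does not handle clinical PHI.

```python
from typing import Any, Dict, List


def redact_entities(text: str, entities: List[Dict[str, Any]], min_score: float = 0.8) -> str:
    """Replace each entity span scoring at least min_score with a [TYPE] tag.

    `entities` follows the shape returned by Comprehend DetectPiiEntities:
    dicts with Type, Score, BeginOffset, and EndOffset keys.
    Assumes the spans do not overlap.
    """
    # Replace from the end of the string so earlier offsets remain valid.
    for entity in sorted(entities, key=lambda e: e["BeginOffset"], reverse=True):
        if entity["Score"] >= min_score:
            begin, end = entity["BeginOffset"], entity["EndOffset"]
            text = text[:begin] + f"[{entity['Type']}]" + text[end:]
    return text


def detect_and_redact(client: Any, text: str, language_code: str = "en") -> str:
    """Call DetectPiiEntities through a boto3 Comprehend client, then redact."""
    response = client.detect_pii_entities(Text=text, LanguageCode=language_code)
    return redact_entities(text, response["Entities"])


# Usage with a hand-written response instead of a live API call:
sample = "Contact Jane Doe at jane@example.com"
entities = [
    {"Type": "NAME", "Score": 0.99, "BeginOffset": 8, "EndOffset": 16},
    {"Type": "EMAIL", "Score": 0.98, "BeginOffset": 20, "EndOffset": 36},
]
print(redact_entities(sample, entities))  # Contact [NAME] at [EMAIL]
```

Replacing each span with its entity type, rather than deleting it, keeps the redacted text readable for reviewers and downstream analytics.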

Some businesses employ large teams of human moderators. Others take a reactive approach and moderate content or sensitive information only after users have already viewed it. Both approaches lead to poor user experiences, high moderation costs, brand risk, and unnecessary liability. Organizations are turning to AI, ML, deep learning, and natural language processing (NLP) to gain the accuracy and efficiency they need to keep online environments, customers, and information safe while reducing content moderation costs.

AWS AI services and solutions cover your moderation needs. They scale with your business to improve content safety, streamline moderation workflows, and increase reliability while lowering operational costs.

Content moderation using AWS AI services

Addressing your content moderation needs requires a combination of computer vision (CV), text and language processing, and other AI and ML capabilities to efficiently moderate the growing influx of UGC and sensitive information. For example, content moderation teams can use ML to reclaim most of the time they spend moderating content and manually protecting information. They can also reduce moderation costs and safeguard the organization from risk, liability, and brand damage by integrating additional contextual analysis and human teams into the moderation workflow. You can also define granular moderation rules that meet business-specific safety and compliance guidelines. End users expect to collaborate across media types, so the tooling and capabilities must support that rich content. You can significantly reduce complexity by using AWS AI capabilities to automate tasks, update prediction models, and integrate human review stages.
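
The following sketch makes granular moderation rules more concrete. It turns per-category confidence thresholds into an approve, review, or block decision, and the review outcome is where a human review stage can plug in. The sketch is service-agnostic. It assumes detections arrive as (category name, confidence from 0 to 100) pairs, which you could build from an Amazon Rekognition `DetectModerationLabels` response with `[(l["Name"], l["Confidence"]) for l in response["ModerationLabels"]]`. The `Rule` class, the `decide` function, and the categories and thresholds in `POLICY` are hypothetical examples. Check the current moderation label taxonomy before you rely on specific names.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass(frozen=True)
class Rule:
    """Confidence thresholds (0-100) for one moderation category."""
    review_at: float  # at or above this, send to a human reviewer
    block_at: float   # at or above this, reject automatically


# Hypothetical business policy: category name -> thresholds.
POLICY: Dict[str, Rule] = {
    "Violence": Rule(review_at=50.0, block_at=90.0),
    "Hate Symbols": Rule(review_at=30.0, block_at=80.0),
    "Alcohol": Rule(review_at=80.0, block_at=float("inf")),  # never auto-block
}


def decide(detections: Iterable[Tuple[str, float]], policy: Dict[str, Rule] = POLICY) -> str:
    """Return "block", "review", or "approve" for (category, confidence) pairs."""
    decision = "approve"
    for category, confidence in detections:
        rule = policy.get(category)
        if rule is None:
            continue  # categories outside the policy do not affect the decision
        if confidence >= rule.block_at:
            return "block"  # most severe outcome wins, so stop early
        if confidence >= rule.review_at:
            decision = "review"
    return decision


print(decide([("Violence", 62.5)]))  # review
```

In this sketch, categories missing from the policy never block content. Whether that default suits you depends on your own compliance guidelines.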

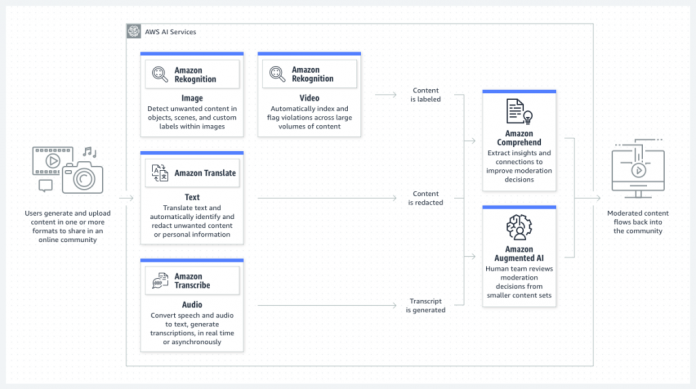

The following diagram illustrates the architecture of AWS AI services in a content moderation solution.

You can use the following AWS AI services for moderation, contextual insights, and human-in-the-loop moderation:

Amazon Augmented AI (Amazon A2I) makes it easy to build the workflows required for human review, whether moderation runs on AWS or not.

Amazon Comprehend uses NLP to extract insights about the content of documents. Amazon Comprehend processes text and image files and semi-structured documents, such as Adobe PDF and Microsoft Word documents.

Amazon Rekognition identifies objects, people, text, scenes, and activities in images and videos. It can detect inappropriate content as well.

Amazon Transcribe is an automatic speech recognition (ASR) service that uses ML models to convert audio to text.

Amazon Translate is a text translation service that uses advanced ML technologies to provide high-quality translation on demand.
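
The following sketch illustrates the Amazon A2I entry in the list above by sending one flagged item to human review with the A2I runtime `StartHumanLoop` operation. It assumes you have already created a flow definition (a human review workflow) and that its worker task template reads `contentUri` and `labels` fields. Those field names, the `moderation-` name prefix, the example ARN, and the helper functions are hypothetical. `client` is assumed to be `boto3.client("sagemaker-a2i-runtime")`.

```python
import json
import uuid
from typing import Any, Dict, List


def build_human_loop_request(
    flow_definition_arn: str, content_uri: str, labels: List[Dict[str, Any]]
) -> Dict[str, Any]:
    """Build keyword arguments for the A2I StartHumanLoop operation."""
    return {
        # Human loop names must be unique; a UUID suffix keeps them lowercase
        # alphanumeric with hyphens and under the 63-character limit.
        "HumanLoopName": f"moderation-{uuid.uuid4()}",
        "FlowDefinitionArn": flow_definition_arn,
        # InputContent is a JSON string; its keys must match what the
        # (hypothetical) worker task template expects.
        "HumanLoopInput": {
            "InputContent": json.dumps({"contentUri": content_uri, "labels": labels})
        },
    }


def send_to_human_review(
    client: Any, flow_definition_arn: str, content_uri: str, labels: List[Dict[str, Any]]
) -> str:
    """Start a human loop and return its ARN."""
    request = build_human_loop_request(flow_definition_arn, content_uri, labels)
    response = client.start_human_loop(**request)
    return response["HumanLoopArn"]
```

Reviewer answers are written to the Amazon S3 output location configured on the flow definition. You can check a loop's progress with `describe_human_loop`.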

By combining these services to review every piece of content, you can mitigate the impact of unwanted content and proactively keep users and brands safe. For example, with Amazon Rekognition you can assess images and videos against predefined categories or your own list of prohibited terms to moderate media at scale. You can also extend moderation to audio files by transcribing them with Amazon Transcribe and then using Amazon Comprehend to derive insights and sentiment from the transcripts.
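
The following is a minimal sketch of the Amazon Rekognition image check described above. It assumes `client` is `boto3.client("rekognition")` and that the image is stored in Amazon S3. The sketch calls `DetectModerationLabels` to match predefined categories and `DetectText` to match detected words against your own prohibited-terms list. The helper names, the 60 `MinConfidence` default, the lowercase prohibited-terms convention, and the simple exact-word matching are assumptions for illustration. Video goes through the asynchronous `StartContentModeration` and `GetContentModeration` operations instead.

```python
from typing import Any, Dict, List, Set


def flagged_categories(response: Dict[str, Any], prohibited: Set[str]) -> List[str]:
    """Moderation labels whose name or parent category is prohibited."""
    hits = []
    for label in response.get("ModerationLabels", []):
        # Top-level labels have an empty ParentName.
        if label["Name"] in prohibited or label.get("ParentName") in prohibited:
            hits.append(label["Name"])
    return hits


def flagged_terms(response: Dict[str, Any], prohibited_terms: Set[str]) -> List[str]:
    """Detected words that appear in a lowercase prohibited-terms set."""
    words = {
        detection["DetectedText"].lower()
        for detection in response.get("TextDetections", [])
        if detection["Type"] == "WORD"  # skip LINE entries to avoid double counting
    }
    return sorted(words & prohibited_terms)


def moderate_s3_image(
    client: Any,
    bucket: str,
    key: str,
    prohibited: Set[str],
    prohibited_terms: Set[str],
    min_confidence: float = 60.0,
) -> Dict[str, List[str]]:
    """Run both checks on one S3 image and report what matched."""
    image = {"S3Object": {"Bucket": bucket, "Name": key}}
    labels = client.detect_moderation_labels(Image=image, MinConfidence=min_confidence)
    text = client.detect_text(Image=image)
    return {
        "categories": flagged_categories(labels, prohibited),
        "terms": flagged_terms(text, prohibited_terms),
    }
```

For example, `moderate_s3_image(client, "example-bucket", "uploads/photo.jpg", {"Violence", "Hate Symbols"}, {"casino"})` returns the matched label names and terms. You could then feed those results into a threshold policy or a human review step.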

According to Zehong, Senior Architect at Mobisocial, “To ensure that our gaming community is a safe environment to socialize and share entertaining content, we used ML to identify content that does not comply with our community standards. We created a workflow leveraging Amazon Rekognition to flag uploaded image and video content that contains non-compliant content. Amazon Rekognition’s Content Moderation API helps us achieve the accuracy and scale to manage a community of millions of gaming creators worldwide. Since implementing Amazon Rekognition, we’ve reduced the amount of content manually reviewed by our operations team by 95% while freeing up engineering resources to focus on our core business.”

With AWS content moderation services and solutions, you can streamline and automate workflows and decide where human moderation brings the most value to your business. You can customize these services or use turnkey workflows to address specific business needs and industry use cases. Either way, you get reliable, scalable, and cost-effective cloud-based content moderation workflows without upfront commitments or expensive licenses.

Conclusion

Customers now expect you to moderate content. Failing to act affects not only your customers’ safety but also crucial business outcomes. Poor or inefficient moderation strategies lead to poor user experiences, high moderation costs, and unnecessary brand risk and liability.

Check out Content Moderation Design Patterns to learn more about how to combine AWS AI services into a multi-modal solution. To contact our sales and specialist teams, find an AWS Partner with content moderation expertise, or get started for free, visit our AWS content moderation page.

About the Authors

Lauren Mullennex is a Sr. AI/ML Specialist Solutions Architect based in Denver, CO. She works with customers to help them accelerate their machine learning workloads on AWS. Her principal areas of interest are MLOps, computer vision, and NLP. In her spare time, she enjoys hiking and cooking Hawaiian cuisine.

Marvin Fernandes is a Solutions Architect at AWS, based in the New York City area. He has over 20 years of experience building and running financial services applications. He is currently working with large enterprise customers to solve complex business problems by crafting scalable, flexible, and resilient cloud architectures.

Nate Bachmeier is an AWS Senior Solutions Architect who nomadically explores New York, one cloud integration at a time. He specializes in migrating and modernizing applications. Nate is also a full-time student and has two kids.

AWS Machine Learning Blog