Customers developing software as a service (SaaS) applications often need to send outgoing webhooks (HTTP callbacks in response to events) to other SaaS applications such as Salesforce, Marketo, or ServiceNow. Sending webhooks typically means implementing custom logic or services to enqueue and emit these events, which adds complexity and operational overhead.

This post explains how to use Amazon Aurora and Amazon EventBridge to send outgoing HTTP requests (webhooks) to external systems and SaaS applications. The solution requires less custom code to be written and reduces operational overhead by removing the need to run and scale the underlying infrastructure.

EventBridge is a serverless event bus that makes it easier to build event-driven applications at scale using events generated from applications. With API destinations, EventBridge can deliver events directly to HTTP endpoints, which makes it well suited to emitting these webhooks.

Aurora is a fully managed MySQL- and PostgreSQL-compatible relational database built for the cloud, which combines the performance and availability of traditional enterprise databases with the simplicity and cost-effectiveness of open-source databases. Amazon Aurora PostgreSQL-Compatible Edition can invoke AWS Lambda functions. As a result, a database operation can trigger an outgoing HTTP request, such as a webhook: Aurora invokes a Lambda function, which passes the event to EventBridge for delivery. This removes the need to write, build, and maintain complex processing and sending logic.

This post focuses on the example of a new widget entity being created; you can use the same pattern for any notification where the event is sourced from the database engine, such as the following:

Administrator notifications of scheduled jobs or maintenance status

Updates to CRM systems based on changing customer records

Notifying downstream systems and microservices that there is a unit of work for them to perform

Creating recommendations for a customer when they sign up

Overview of the solution

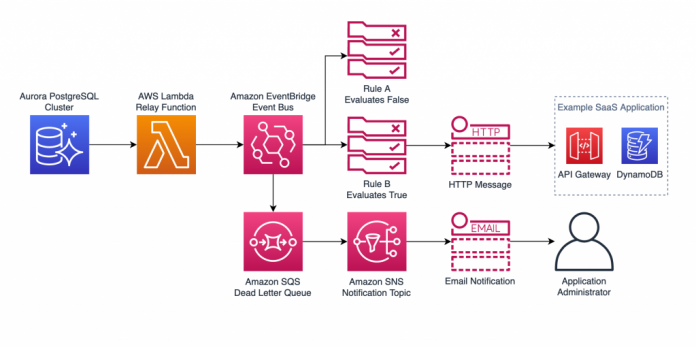

The following diagram illustrates the architecture of the solution.

Figure 1: Solution overview

This workflow performs the following steps:

A widget entity is created in Aurora.

The create_widget stored procedure invokes an AWS Lambda function.

Lambda adds the event to an EventBridge event bus.

EventBridge evaluates the event against rules to determine whether and where to send it, based on the tenantId property.

For any rules matching the event, EventBridge makes an HTTP request to the SaaS application destination configured in the rule.

If EventBridge can't deliver the request after its retry attempts, the event is sent to a dead-letter queue in Amazon Simple Queue Service (Amazon SQS).
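Step 4 is easier to follow with the event in view. The following minimal TypeScript model shows the event as a rule sees it. EventBridge wraps the payload in its standard envelope, and the data from Aurora sits under detail. The top-level fields follow the documented envelope. The source (widgets.aurora), detail-type (WidgetCreated), and detail field names (tenantId, widgetId) are assumptions, because the post does not list them.

```typescript
// Shape of the event as a rule sees it. Top-level field names follow the
// documented EventBridge event structure; the source, detail-type, and detail
// fields used below are assumptions for illustration only.
interface EventBridgeEvent<TDetail> {
  version: string;
  id: string;
  "detail-type": string;
  source: string;
  account: string;
  time: string; // ISO 8601 timestamp
  region: string;
  resources: string[];
  detail: TDetail;
}

// Hypothetical payload carried from the create_widget stored procedure.
interface WidgetCreatedDetail {
  tenantId: string;
  widgetId: number;
}

// Type guard a consumer could use to recognize widget-created events.
function isWidgetCreated(
  event: EventBridgeEvent<unknown>,
): event is EventBridgeEvent<WidgetCreatedDetail> {
  const d = event.detail;
  if (typeof d !== "object" || d === null) return false;
  const fields = d as Record<string, unknown>;
  return (
    event.source === "widgets.aurora" &&
    event["detail-type"] === "WidgetCreated" &&
    typeof fields["tenantId"] === "string" &&
    typeof fields["widgetId"] === "number"
  );
}

const sample: EventBridgeEvent<unknown> = {
  version: "0",
  id: "6a7e8feb-b491-4cf7-a9f1-bf3703467718",
  "detail-type": "WidgetCreated",
  source: "widgets.aurora",
  account: "123456789012",
  time: "2021-03-04T12:00:00Z",
  region: "eu-west-2",
  resources: [],
  detail: { tenantId: "1", widgetId: 42 },
};

console.log(isWidgetCreated(sample)); // true
```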

Prerequisites

Before you begin, complete the following prerequisites:

Create or have access to an AWS account

Install the AWS Cloud Development Kit (AWS CDK) CLI, which is used to deploy the resources for this example

Deploying the template provided in this post creates the resources in your account, which incur charges. For details on the cost of the services used in this post, see the Amazon Aurora, Amazon EventBridge, and AWS Lambda pricing pages.

Walkthrough overview

This post covers the steps required to deploy this architecture using the AWS CDK project available on GitHub. Instructions on how to deploy the solution are also available in the repository.

The high-level steps involved are:

Deploy the AWS CDK project. This creates the following key components:

A Lambda function to relay events from Aurora to EventBridge.

An AWS Identity and Access Management (IAM) role, associated with the Aurora cluster, that grants permission to invoke the Lambda function.

An EventBridge event bus, API destination, and rule for processing the event.

Deploy the source schema to the Aurora database and create the aws_lambda extension provided with Aurora PostgreSQL.

Verify everything works as expected by calling the stored procedure that invokes the Lambda function.

Deploy the AWS CDK project

Start by cloning the repository from GitHub:

To deploy the AWS CDK stack, open a terminal window in the cdk directory of the newly cloned repository and run npm i followed by cdk deploy.

After a few minutes, you should see the deployment succeed.

Figure 2: cdk deploy

For more details on deploying AWS CDK stacks, see Deploying the stack.

Create a Lambda function to relay events from Aurora to EventBridge

The toEventBridge Lambda function created by the stack acts as the glue between Aurora and EventBridge. It takes the event payload that Aurora passes to the function and sets it as the detail property of the widget-creation event that it sends to EventBridge. The name of the custom event bus is passed to the function as an environment variable. The function's source is in the GitHub repository.
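The following is a minimal TypeScript sketch of such a relay, not the code from the repository. It makes three assumptions: the Aurora payload arrives as the Lambda event, the bus name comes from a hypothetical EVENT_BUS_NAME variable, and the Source and DetailType values are hypothetical. The PutEvents call is passed in as a function so that the logic can run without AWS credentials.

```typescript
// Minimal sketch of a toEventBridge-style relay, NOT the repository's code.
// Assumptions: Aurora passes the widget payload as the Lambda event; the bus
// name comes from a hypothetical EVENT_BUS_NAME environment variable; the
// Source and DetailType values are hypothetical.

// Mirrors the PutEvents request-entry fields used here.
interface PutEventsEntry {
  Source: string;
  DetailType: string;
  Detail: string; // EventBridge expects detail as a JSON string
  EventBusName: string;
}

interface PutEventsResult {
  FailedEntryCount?: number;
  Entries?: { EventId?: string; ErrorCode?: string; ErrorMessage?: string }[];
}

// Injected so the logic runs without AWS credentials. In a deployed function
// this would wrap EventBridgeClient.send(new PutEventsCommand({ Entries }))
// from @aws-sdk/client-eventbridge.
type PutEvents = (entries: PutEventsEntry[]) => Promise<PutEventsResult>;

function makeHandler(putEvents: PutEvents, busName: string | undefined) {
  if (!busName) throw new Error("event bus name is not configured");
  const bus = busName;
  return async (event: Record<string, unknown>): Promise<string | undefined> => {
    const result = await putEvents([
      {
        Source: "widgets.aurora",
        DetailType: "WidgetCreated",
        Detail: JSON.stringify(event), // the Aurora payload becomes `detail`
        EventBusName: bus,
      },
    ]);
    // PutEvents can return successfully while rejecting individual entries,
    // so check FailedEntryCount instead of relying on the call not throwing.
    if ((result.FailedEntryCount ?? 0) > 0) {
      const failed = result.Entries?.[0];
      throw new Error(`PutEvents rejected the event: ${failed?.ErrorCode} ${failed?.ErrorMessage}`);
    }
    return result.Entries?.[0]?.EventId;
  };
}

// Usage with a fake PutEvents; in Lambda you would pass process.env.EVENT_BUS_NAME.
const sent: PutEventsEntry[] = [];
const handler = makeHandler(async (entries) => {
  sent.push(...entries);
  return { FailedEntryCount: 0, Entries: [{ EventId: "evt-1" }] };
}, "webhooks-bus");

handler({ tenantId: "1", widgetId: 42 }).then((id) => console.log(id)); // evt-1
```

Checking FailedEntryCount matters because PutEvents reports per-entry failures in its response instead of throwing. Throwing from the handler turns such a failure into a function error that Lambda can act on.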

Associate an IAM role with an Aurora cluster

Aurora needs permission to invoke the Lambda function. This is achieved by an IAM role attached to the Aurora cluster.

The role's permissions policy grants permission to invoke only this specific Lambda function.

The role's trust policy allows the RDS service (rds.amazonaws.com) to assume the role.
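As a sketch, the two policy documents look roughly like the following when written as TypeScript objects. The function ARN is a placeholder, and the helper names are hypothetical. The stack generates the actual ARN and role.

```typescript
// Sketch of the role's two policy documents as plain objects. The ARN below is
// a placeholder and the helper names are hypothetical.
interface PolicyStatement {
  Effect: "Allow" | "Deny";
  Action: string | string[];
  Resource?: string | string[];
  Principal?: { Service: string };
}

interface PolicyDocument {
  Version: "2012-10-17";
  Statement: PolicyStatement[];
}

// Permissions policy: allow invoking only the relay function.
function invokePolicy(functionArn: string): PolicyDocument {
  return {
    Version: "2012-10-17",
    Statement: [{ Effect: "Allow", Action: "lambda:InvokeFunction", Resource: functionArn }],
  };
}

// Trust policy: let the RDS service assume the role on the cluster's behalf.
function rdsTrustPolicy(): PolicyDocument {
  return {
    Version: "2012-10-17",
    Statement: [
      { Effect: "Allow", Principal: { Service: "rds.amazonaws.com" }, Action: "sts:AssumeRole" },
    ],
  };
}

const arn = "arn:aws:lambda:eu-west-2:123456789012:function:toEventBridge"; // placeholder
console.log(JSON.stringify(invokePolicy(arn), null, 2));
console.log(JSON.stringify(rdsTrustPolicy(), null, 2));
```

Scoping Resource to a single function ARN keeps the role least-privilege. If you associate the role outside the stack, the AWS CLI equivalent is aws rds add-role-to-db-cluster with --feature-name Lambda.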

Finally, the role is associated with the Aurora cluster, with Lambda selected as the feature. For more details on associating an IAM role with an Aurora PostgreSQL cluster, see Invoking an AWS Lambda function from an Aurora PostgreSQL DB cluster.

Figure 3: IAM Role

Configure EventBridge

With the Aurora database configured to send events to Lambda, and Lambda configured to put the events onto the event bus, the final component is EventBridge.

The first resource created in EventBridge is the API destination. This example uses an Amazon API Gateway API, created by the AWS CDK stack, as a stand-in for the target SaaS application. You can use any HTTP endpoint instead, including a free HTTP request testing site such as webhook.site.

Figure 4: API Destination

Next is the connection, which specifies the authorization mechanism to use. This example sends an API key in an x-api-key header, and EventBridge stores the secret value in AWS Secrets Manager. The connection is also where you can add any custom headers or query string parameters to send to the destination.
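The following simplified TypeScript model shows what the connection contributes to the request that an API destination sends with API key authorization. It is not EventBridge's implementation. It assumes that the whole matched event becomes the JSON body, which is the default when no input transformer is configured, and it assumes an application/json content type. The endpoint, key, and query parameter are hypothetical.

```typescript
// Simplified model of the request an API destination sends when its
// connection uses API key authorization. Not EventBridge's implementation:
// the whole event as the JSON body and the content type are assumptions, and
// the endpoint, key, and query parameter below are hypothetical.
interface ApiKeyConnection {
  apiKeyName: string; // header name, "x-api-key" in this post
  apiKeyValue: string; // kept in Secrets Manager by EventBridge in reality
  headers?: Record<string, string>; // optional custom headers
  queryString?: Record<string, string>; // optional query string parameters
}

interface OutgoingRequest {
  method: string;
  url: string;
  headers: Record<string, string>;
  body: string;
}

function buildRequest(
  endpoint: string,
  method: string,
  conn: ApiKeyConnection,
  event: unknown,
): OutgoingRequest {
  const url = new URL(endpoint);
  for (const [name, value] of Object.entries(conn.queryString ?? {})) {
    url.searchParams.set(name, value);
  }
  return {
    method,
    url: url.toString(),
    headers: {
      "content-type": "application/json", // assumed content type
      ...conn.headers,
      // Applied last so a custom header cannot overwrite the API key.
      [conn.apiKeyName]: conn.apiKeyValue,
    },
    body: JSON.stringify(event),
  };
}

const req = buildRequest(
  "https://example.com/webhook",
  "POST",
  { apiKeyName: "x-api-key", apiKeyValue: "example-key", queryString: { source: "aurora" } },
  { "detail-type": "WidgetCreated", detail: { tenantId: "1", widgetId: 42 } },
);
console.log(req.url); // https://example.com/webhook?source=aurora
```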

Figure 5: Connection

The rule is the EventBridge resource that matches incoming events and sends them to the target. In this example, the rule matches on the tenantId property of the event payload. If the value identifies tenant 1, the rule matches and the event is sent to the target.
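The following deliberately small TypeScript matcher illustrates rule matching. It handles only exact-value patterns, which is the kind of pattern used here. It is not EventBridge's matching engine. The pattern values (the source and a tenantId of "1") are assumptions, because the post does not say how tenant IDs are encoded.

```typescript
// Simplified matcher for exact-value event patterns: every key in the pattern
// must be present in the event, leaf arrays list the allowed values, and nested
// objects match nested fields. Other operators (prefix, numeric, exists,
// anything-but) and array-valued event fields are not modeled.
type Leaf = string | number | boolean | null;
type Pattern = { [key: string]: Pattern | Leaf[] };

function matches(pattern: Pattern, event: Record<string, unknown>): boolean {
  return Object.entries(pattern).every(([key, expected]) => {
    const actual = event[key];
    if (Array.isArray(expected)) {
      // Strict equality: in this sketch the string "1" and the number 1 differ.
      return expected.some((value) => value === actual);
    }
    return (
      typeof actual === "object" &&
      actual !== null &&
      !Array.isArray(actual) &&
      matches(expected, actual as Record<string, unknown>)
    );
  });
}

// Hypothetical rule pattern: route widget events for tenant "1" only.
const tenantOneRule: Pattern = {
  source: ["widgets.aurora"],
  detail: { tenantId: ["1"] },
};

console.log(matches(tenantOneRule, { source: "widgets.aurora", detail: { tenantId: "1" } })); // true
console.log(matches(tenantOneRule, { source: "widgets.aurora", detail: { tenantId: "2" } })); // false
```

Because the comparison in this sketch is strict, it is worth checking which JSON type the stored procedure emits for tenantId before writing the real pattern.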

Figure 6: Rule

It’s good practice to pair a retry policy with a dead-letter queue. With both configured, EventBridge automatically retries delivery up to the specified number of attempts, or until the maximum event age is reached, and then sends the failed event to the SQS queue.
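The following short TypeScript model illustrates the retry-then-dead-letter behavior. It is a simplification: delivery counts as successful when the injected deliver function resolves to true. The exponential backoff with jitter and the maximum-event-age cutoff that EventBridge applies are left out.

```typescript
// Simplified "retry, then dead-letter" model. Real EventBridge spaces retries
// with exponential backoff and jitter and stops once the maximum event age is
// exceeded; both are omitted here.
type Deliver<T> = (event: T) => Promise<boolean>; // resolves true on a 2xx response

interface RetryPolicy {
  maxRetries: number; // retries after the first attempt
}

async function deliverWithRetry<T>(
  event: T,
  deliver: Deliver<T>,
  policy: RetryPolicy,
  deadLetter: (event: T, attempts: number) => void,
): Promise<"delivered" | "dead-lettered"> {
  let attempts = 0;
  while (attempts <= policy.maxRetries) {
    attempts++;
    try {
      if (await deliver(event)) return "delivered";
    } catch {
      // A thrown error (for example, a network failure) counts as a failed attempt.
    }
  }
  // Every attempt failed: hand the event to the dead-letter queue.
  deadLetter(event, attempts);
  return "dead-lettered";
}

// Succeeds on the third attempt, which a budget of two retries allows.
let calls = 0;
deliverWithRetry("widget-42", async () => ++calls >= 3, { maxRetries: 2 }, (e) => console.log(`sent to DLQ: ${e}`))
  .then((outcome) => console.log(outcome, calls)); // delivered 3
```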

Figure 7: Retry policy

You can configure up to five targets per rule. You can use the same rule for multiple downstream targets, for example if a tenant, CRM system, and administrator must be notified of a particular event.
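The following TypeScript sketch illustrates fan-out from one rule. Each target is attempted independently, so a failing CRM endpoint does not prevent the tenant and administrator notifications. The target names are hypothetical, and the five-target check mirrors the limit described above.

```typescript
// Sketch of one rule fanning an event out to several targets. Each target is
// delivered independently, so one failing destination does not block the
// others. Target names are hypothetical.
interface RuleTarget {
  name: string;
  send: (event: unknown) => Promise<void>;
}

const MAX_TARGETS_PER_RULE = 5;

async function fanOut(event: unknown, targets: RuleTarget[]): Promise<Record<string, "ok" | "failed">> {
  if (targets.length > MAX_TARGETS_PER_RULE) {
    throw new Error(`a rule supports at most ${MAX_TARGETS_PER_RULE} targets`);
  }
  // allSettled waits for every target, whether it succeeds or fails.
  const results = await Promise.allSettled(targets.map((t) => t.send(event)));
  const summary: Record<string, "ok" | "failed"> = {};
  results.forEach((result, i) => {
    summary[targets[i].name] = result.status === "fulfilled" ? "ok" : "failed";
  });
  return summary;
}

fanOut({ detail: { tenantId: "1", widgetId: 42 } }, [
  { name: "tenant-webhook", send: async () => {} },
  { name: "crm", send: async () => { throw new Error("CRM unavailable"); } },
  { name: "admin-alerts", send: async () => {} },
]).then((summary) => console.log(summary)); // crm is reported as failed; the others succeed
```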

To learn more about setting up and configuring API Destinations, see Using API destinations with Amazon EventBridge.

Deploy the database schema

To test the webhook solution, connect to the Aurora cluster using psql, as shown in the following screenshot. You can use the AWS Cloud9 IDE created by the AWS CDK stack for this. For details on connecting to it, see Opening an environment in AWS Cloud9. If the psql client isn't available, install the PostgreSQL client tools with sudo yum install postgresql postgresql-contrib -y. The AWS CDK stack stores the admin password for the Aurora cluster in Secrets Manager.
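If you script the connection, a hypothetical TypeScript helper like the following turns the secret's JSON into psql arguments and a PGPASSWORD environment entry. It assumes that the secret uses the key names common in RDS-style database secrets (host, port, username, password, dbname). It also falls back to a database named postgres. Check the secret created by the stack before relying on either assumption.

```typescript
// Hypothetical helper: turn the JSON secret string from Secrets Manager into
// psql arguments plus a PGPASSWORD environment entry. The key names are an
// assumption; check the secret the stack created for its actual keys.
interface DbSecret {
  host?: string;
  port?: number;
  username?: string;
  password?: string;
  dbname?: string;
}

function psqlInvocation(secretString: string): { args: string[]; env: Record<string, string> } {
  const secret = JSON.parse(secretString) as DbSecret;
  if (!secret.host || !secret.username || !secret.password) {
    throw new Error("secret is missing host, username, or password");
  }
  return {
    // -h host, -p port, -U user, -d database: standard psql options.
    args: [
      "-h", secret.host,
      "-p", String(secret.port ?? 5432),
      "-U", secret.username,
      "-d", secret.dbname ?? "postgres", // assumed default database name
    ],
    // PGPASSWORD keeps the password out of the argument list.
    env: { PGPASSWORD: secret.password },
  };
}

const invocation = psqlInvocation(
  JSON.stringify({
    host: "my-cluster.cluster-abc.eu-west-2.rds.amazonaws.com",
    port: 5432,
    username: "postgres",
    password: "example",
  }),
);
console.log(["psql", ...invocation.args].join(" "));
```

You could pass the result to Node's child_process.spawn("psql", invocation.args, { env: { ...process.env, ...invocation.env }, stdio: "inherit" }).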

Figure 8: Connect to the cluster

After you’re connected, create the basic schema for the source widget application. This consists of some base tables that represent the source application, plus the PostgreSQL extensions required to invoke the Lambda function (aws_lambda and its dependency, aws_commons).

Now create and test the stored procedure that invokes the Lambda function. The procedure inserts a record into a widget table, which represents the entities that tenants create in the source application. It then invokes the Lambda function created earlier, passing the widget ID that PostgreSQL generated. Be sure to replace the function ARN in the procedure with the one created by the AWS CDK stack.
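The stored procedure itself is SQL, but you can exercise the same Aurora-to-Lambda call on its own, for example to check the IAM role and extension before you create the procedure. The following hypothetical TypeScript helper builds a parameterized aws_lambda.invoke query in the { text, values } form accepted by node-postgres. The ARN, Region, and payload are placeholders. The asynchronous 'Event' invocation type is this sketch's choice and is not necessarily what the post's procedure uses.

```typescript
// Hypothetical helper that builds a parameterized aws_lambda.invoke call in the
// { text, values } shape that node-postgres accepts for client.query().
// Prerequisite (run once in the database):
//   CREATE EXTENSION IF NOT EXISTS aws_lambda CASCADE;
interface QueryConfig {
  text: string;
  values: unknown[];
}

function invokeLambdaQuery(functionArn: string, region: string, payload: object): QueryConfig {
  return {
    // 'Event' requests an asynchronous invocation, so the query returns as soon
    // as Lambda accepts the request (status_code 202) instead of waiting for
    // the function to finish.
    text:
      "SELECT status_code FROM aws_lambda.invoke(" +
      "aws_commons.create_lambda_function_arn($1, $2), $3::json, 'Event')",
    values: [functionArn, region, JSON.stringify(payload)],
  };
}

const query = invokeLambdaQuery(
  "arn:aws:lambda:eu-west-2:123456789012:function:toEventBridge", // placeholder ARN
  "eu-west-2",
  { tenantId: "1", widgetId: 42 },
);
console.log(query.text);
```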

Verify the solution

Finally, view the webhook emitted by the solution in the destination SaaS application. In this example, the SaaS application is an API Gateway API that routes the request to a Lambda function, which stores the event in an Amazon DynamoDB table. Navigate to the DynamoDB console and view the Items page for the WebhooksStack table.
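The demo destination described here could look roughly like the following TypeScript sketch, which is a Lambda proxy handler that validates the request body and stores it. The DynamoDB write is injected, and the attribute names are hypothetical. Keying items on the EventBridge event id assumes two things: that id is the table's partition key and that the whole event is sent as the body.

```typescript
// Sketch of the demo destination: a Lambda function behind API Gateway (proxy
// integration) that stores each webhook it receives. The DynamoDB write is
// injected; deployed, it might wrap DynamoDBDocumentClient with PutCommand from
// @aws-sdk/lib-dynamodb. Attribute names are hypothetical.
interface ProxyEvent {
  body: string | null; // raw request body from API Gateway
}

interface ProxyResult {
  statusCode: number;
  body: string;
}

type PutItem = (item: Record<string, unknown>) => Promise<void>;

function makeReceiver(putItem: PutItem, now: () => Date = () => new Date()) {
  return async (request: ProxyEvent): Promise<ProxyResult> => {
    if (!request.body) return { statusCode: 400, body: "missing body" };
    let parsed: unknown;
    try {
      parsed = JSON.parse(request.body);
    } catch {
      return { statusCode: 400, body: "invalid JSON" };
    }
    // Use the EventBridge event id as the key when present (assumed to be the
    // table's partition key), so a redelivered event overwrites the same item.
    const eventId =
      typeof parsed === "object" && parsed !== null && typeof (parsed as { id?: unknown }).id === "string"
        ? (parsed as { id: string }).id
        : `unknown-${now().getTime()}`;
    await putItem({ id: eventId, receivedAt: now().toISOString(), body: request.body });
    return { statusCode: 200, body: "stored" };
  };
}

const stored: Record<string, unknown>[] = [];
const receiver = makeReceiver(async (item) => {
  stored.push(item);
});
receiver({ body: JSON.stringify({ id: "evt-1", detail: { tenantId: "1", widgetId: 42 } }) })
  .then((result) => console.log(result.statusCode, stored.length)); // 200 1
```

Keying on the event id helps because EventBridge delivers events at least once, so a retried request can arrive more than once.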

Figure 9: Items

Clean up

To avoid incurring future charges, clean up the resources you created as part of this post.

A quick way to remove the resources created by the AWS CDK stack is to run the cdk destroy command. For more information, see Destroying the app’s resources.

Conclusion

This solution provides a fully serverless way to manage the enqueuing and dispatch of webhooks in response to events, leading to less operational overhead and improved reliability. Being able to trigger these webhooks directly from a relational database removes the need to build and run complex webhook handling software.

This functionality can also ease migrations away from legacy databases, where applications may depend on OLE Automation in SQL Server or the UTL_HTTP package in Oracle to send outgoing HTTP requests directly from the database engine. When you invoke Lambda functions from Aurora, you keep this capability without expensive license fees and gain the benefits of cloud-native databases, such as improved performance and increased developer agility.

For more information about what you can configure as an event target, see Amazon EventBridge targets. See the API Destinations announcement for details on Region availability for this feature.

See Invoking an AWS Lambda function from an Aurora PostgreSQL DB cluster for more details on using Lambda with Aurora PostgreSQL. This functionality is also now available in Amazon RDS for PostgreSQL.

About the Author

Josh Hart is a Senior Solutions Architect at Amazon Web Services. He works with ISV customers in the UK to help them build and modernize their SaaS applications on AWS.
