Enterprise customers have multiple lines of business (LOBs), with groups and teams within them. These customers need to balance governance, security, and compliance against the need for machine learning (ML) teams to quickly access their data science environments in a secure manner. Enterprise customers that are starting to adopt AWS, expanding their footprint on AWS, or planning to enhance an established AWS environment need to ensure they have a strong foundation for their cloud environment. One important aspect of this foundation is organizing their AWS environment following a multi-account strategy.

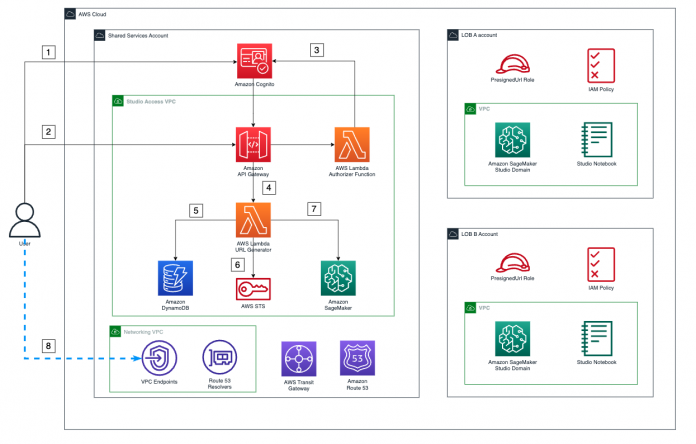

In the post Secure Amazon SageMaker Studio presigned URLs Part 2: Private API with JWT authentication, we demonstrated how to build a private API to generate Amazon SageMaker Studio presigned URLs that are only accessible by an authenticated end-user within the corporate network from a single account. In this post, we show how you can extend that architecture to multiple accounts to support multiple LOBs. We demonstrate how you can use Studio presigned URLs in a multi-account environment to secure and route access from different personas to their appropriate Studio domain. We explain the process and network flow, and how to easily scale this architecture to multiple accounts and Amazon SageMaker domains. The proposed solution also ensures that all network traffic stays within AWS’s private network and communication happens in a secure way.

Although we demonstrate using two different LOBs, each with a separate AWS account, this solution can scale to multiple LOBs. We also introduce a logical construct of a shared services account that plays a key role in governance, administration, and orchestration.

Solution overview

We can achieve communication between all LOBs’ SageMaker VPCs and the shared services account VPC using either VPC peering or AWS Transit Gateway. In this post, we use a transit gateway because it provides a simpler VPC-to-VPC communication mechanism over VPC peering when there are a large number of VPCs involved. We also use Amazon Route 53 forwarding rules in combination with inbound and outbound resolvers to resolve all DNS queries to the shared service account VPC endpoints. The networking architecture has been designed using the following patterns:

Centralizing VPC endpoints with Transit Gateway

Associating a transit gateway across accounts

Privately access a central AWS service endpoint from multiple VPCs

Let’s look at the two main architecture components, the information flow and network flow, in more detail.

Information flow

The following diagram illustrates the architecture of the information flow.

The workflow steps are as follows:

The user authenticates with the Amazon Cognito user pool and receives a token to consume the Studio access API.

The user calls the API to access Studio and includes the token in the request.

When this API is invoked, the custom AWS Lambda authorizer is triggered to validate the token with the identity provider (IdP), and returns the proper permissions for the user.

After the call is authorized, a Lambda function is triggered.

This Lambda function uses the user’s name to retrieve their LOB name and the LOB account from the following Amazon DynamoDB tables that store these relationships:

Users table – This table holds the relationship between users and their LOB.

LOBs table – This table holds the relationship between the LOBs and the AWS account where the SageMaker domain for that LOB exists.

With the account ID, the Lambda function assumes the PresignedUrlGenerator role in that account (each LOB account has a PresignedURLGenerator role that can only be assumed by the Lambda function in charge of generating the presigned URLs).

Finally, the function invokes the SageMaker create-presigned-domain-url API call for that user in their LOB's SageMaker domain.

The presigned URL is returned to the end-user, who consumes it via the Studio VPC endpoint.

Steps 1–4 are covered in more detail in Part 2 of this series, where we explain how the custom Lambda authorizer works and takes care of the authorization process in the access API Gateway.
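Steps 5 to 7 of the workflow above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the code from the repository: the table names, the `USER` key attribute, the session name, the 60-second expiry, and passing the domain ID in as a parameter are all hypothetical choices. The clients are passed in as arguments; in a Lambda function they would typically be boto3 clients for DynamoDB, AWS STS, and SageMaker.

```python
from typing import Any, Callable, Dict

# Hypothetical names: the post does not state table or key attribute names.
USERS_TABLE = "users"
LOBS_TABLE = "lobs"
USER_KEY = "USER"
ROLE_NAME = "PresignedUrlGenerator"  # role name as written in the post


def lookup_account_id(dynamodb: Any, user_name: str) -> str:
    """Resolve user -> LOB -> AWS account ID with two DynamoDB reads."""
    user = dynamodb.get_item(
        TableName=USERS_TABLE, Key={USER_KEY: {"S": user_name}}
    )
    if "Item" not in user:
        raise KeyError(f"unknown user: {user_name}")
    lob = user["Item"]["LOB"]["S"]

    lob_row = dynamodb.get_item(TableName=LOBS_TABLE, Key={"LOB": {"S": lob}})
    if "Item" not in lob_row:
        raise KeyError(f"unknown LOB: {lob}")
    return lob_row["Item"]["ACCOUNT_ID"]["S"]


def generate_presigned_url(
    dynamodb: Any,
    sts: Any,
    sagemaker_factory: Callable[[Dict[str, str]], Any],
    user_name: str,
    domain_id: str,
) -> str:
    """Assume the LOB account's role and request a Studio presigned URL."""
    account_id = lookup_account_id(dynamodb, user_name)

    # Temporary credentials for the role in the LOB account.
    credentials = sts.assume_role(
        RoleArn=f"arn:aws:iam::{account_id}:role/{ROLE_NAME}",
        RoleSessionName=f"studio-{user_name}",
    )["Credentials"]

    # The factory builds a SageMaker client from the temporary credentials.
    sagemaker = sagemaker_factory(credentials)
    response = sagemaker.create_presigned_domain_url(
        DomainId=domain_id,
        UserProfileName=user_name,
        ExpiresInSeconds=60,  # assumed value; short-lived by design
    )
    return response["AuthorizedUrl"]
```

With boto3, `sagemaker_factory` could be a small function that calls `boto3.client("sagemaker", aws_access_key_id=c["AccessKeyId"], aws_secret_access_key=c["SecretAccessKey"], aws_session_token=c["SessionToken"])`. Injecting the clients also makes the routing logic easy to exercise with stubs, without touching any AWS account.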

Network flow

All network traffic flows in a secure and private manner using AWS PrivateLink, as shown in the following diagram.

The steps are as follows:

When the user calls the access API, it happens via the VPC endpoint for Amazon API Gateway in the networking VPC in the shared services account. This API is set as private, and has a policy that allows its consumption only via this VPC endpoint, as described in Part 2 of this series.

All the authorization process happens privately between API Gateway, Lambda, and Amazon Cognito.

After authorization is granted, API Gateway triggers the Lambda function in charge of generating the presigned URLs using AWS’s private network.

Then, because the routing Lambda function lives in a VPC, all calls to different services happen through their respective VPC endpoints in the shared services account. The function performs the following actions:

Retrieve the credentials to assume the role via the AWS Security Token Service (AWS STS) VPC endpoint in the networking VPC in the shared services account.

Call DynamoDB to retrieve user and LOB information through the DynamoDB VPC endpoint.

Call the SageMaker API to create a presigned URL for the user in their SageMaker domain through the SageMaker API VPC endpoint.

The user finally consumes the presigned URL via the Studio VPC endpoint in the networking VPC in the shared services account, because this VPC endpoint has been specified during the creation of the presigned URL.

All further communications between Studio and AWS services happen via Studio’s ENI inside the LOB account’s SageMaker VPC. For example, to allow SageMaker to call Amazon Elastic Container Registry (Amazon ECR), the Amazon ECR interface VPC endpoint can be provisioned in the shared services account VPC, and a forwarding rule is shared with the SageMaker accounts that need to consume it. This allows SageMaker queries to Amazon ECR to be resolved to this endpoint, and the Transit Gateway routing will do the rest.
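The first step of the network flow depends on a resource policy that limits the private API to a single VPC endpoint. The sketch below builds such a policy following the general allow-then-deny pattern commonly used for private APIs in API Gateway. The function name, the API ARN, and the endpoint ID are placeholders, and the policy deployed by the repository's templates may differ.

```python
import json
from typing import Any, Dict


def private_api_policy(api_arn: str, vpce_id: str) -> Dict[str, Any]:
    """Build a resource policy that only permits calls through one VPC endpoint."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Allow invocation in general...
                "Effect": "Allow",
                "Principal": "*",
                "Action": "execute-api:Invoke",
                "Resource": api_arn,
            },
            {
                # ...but deny any request that did not arrive via the endpoint.
                "Effect": "Deny",
                "Principal": "*",
                "Action": "execute-api:Invoke",
                "Resource": api_arn,
                "Condition": {"StringNotEquals": {"aws:SourceVpce": vpce_id}},
            },
        ],
    }


if __name__ == "__main__":
    # Placeholder identifiers for illustration only.
    policy = private_api_policy(
        "arn:aws:execute-api:eu-west-1:111111111111:abc123/*", "vpce-0123456789abcdef0"
    )
    print(json.dumps(policy, indent=2))
```

Because an explicit deny takes precedence over an allow, a request that reaches the API through any other path is rejected even if the caller otherwise has permission to invoke it.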

Prerequisites

To represent a multi-account environment, we use one shared services account and two different LOBs:

Shared services account – Where the VPC endpoints and the Studio access Gateway API live

SageMaker account LOB A – The account for the SageMaker domain for LOB A

SageMaker account LOB B – The account for the SageMaker domain for LOB B

For more information on how to create an AWS account, refer to How do I create and activate a new AWS account.

LOB accounts are logical entities that are business, department, or domain specific. We assume one account per logical entity. However, there will be different accounts per environment (development, test, production). For each environment, you typically have a separate shared services account (based on compliance requirements) to restrict the blast radius.

You can use the templates and instructions in the GitHub repository to set up the needed infrastructure. This repository is structured into folders for the different accounts and different parts of the solution.

Infrastructure setup

For large companies with many Studio domains, it’s also advisable to have a centralized endpoint architecture. This can result in cost savings as the architecture scales and more domains and accounts are created. The networking.yml template in the shared services account deploys the VPC endpoints and needed Route 53 resources, and the Transit Gateway infrastructure to scale out the proposed solution.

Detailed instructions of the deployment can be found in the README.md file in the GitHub repository. The full deployment includes the following resources:

Two AWS CloudFormation templates in the shared services account: one for networking infrastructure and one for the AWS Serverless Application Model (AWS SAM) Studio access Gateway API

One CloudFormation template for the infrastructure in the SageMaker account LOB A

One CloudFormation template for the infrastructure of the SageMaker account LOB B

Optionally, an on-premises simulator can be deployed in the shared services account to test the end-to-end deployment

After everything is deployed, navigate to the Transit Gateway console for each SageMaker account (LOB accounts) and confirm that the transit gateway has been correctly shared and the VPCs are associated with it.

Optionally, if any forwarding rules have been shared with the accounts, they can be associated with the SageMaker accounts’ VPC. The basic rules to make the centralized VPC endpoints solution work are automatically shared with the LOB Account during deployment. For more information about this approach, refer to Centralized access to VPC private endpoints.

Populate the data

Run the following script to populate the DynamoDB tables and Amazon Cognito user pool with the required information:

The script performs the required API calls using the AWS Command Line Interface (AWS CLI) and the previously configured parameters and profiles.

Amazon Cognito users

This step works the same as Part 2 of this series, but has to be performed for users in all LOBs and should match their user profile in SageMaker, regardless of which LOB they belong to. For this post, we have one user in a Studio domain in LOB A (user-lob-a) and one user in a Studio domain in LOB B (user-lob-b). The following table lists the users populated in the Amazon Cognito user pool.

| User | Password |
| --- | --- |
| user-lob-a | UserLobA1! |
| user-lob-b | UserLobB1! |

Note that these passwords have been configured for demo purposes.

DynamoDB tables

The access application uses two DynamoDB tables to direct requests from the different users to their LOB’s Studio domain.

The users table holds the relationship between users and their LOB.

| Primary Key | LOB |
| --- | --- |
| user-lob-a | lob-a |
| user-lob-b | lob-b |

The LOB table holds the relationship between the LOB and the AWS account where the SageMaker domain for that LOB exists.

| LOB | ACCOUNT_ID |
| --- | --- |
| lob-a | <YOUR_LOB_A_ACCOUNT_ID> |
| lob-b | <YOUR_LOB_B_ACCOUNT_ID> |

Note that these user names must be consistent across the Studio user profiles and the names of the users we previously added to the Amazon Cognito user pool.
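As an illustration of the two mappings described above, the following sketch builds the DynamoDB `put_item` requests for both tables and checks that every user's LOB has an account mapping before anything is written. It is not the repository's population script: the table names and the `USER` key attribute are hypothetical, and the account IDs in the usage note are placeholders.

```python
from typing import Any, Dict, List

# Hypothetical names: the post does not state table or key attribute names.
USERS_TABLE = "users"
LOBS_TABLE = "lobs"
USER_KEY = "USER"


def build_put_requests(
    user_to_lob: Dict[str, str], lob_to_account: Dict[str, str]
) -> List[Dict[str, Any]]:
    """Return put_item keyword arguments for the LOBs and users tables."""
    # Every LOB referenced by a user must map to an account.
    missing = sorted(set(user_to_lob.values()) - set(lob_to_account))
    if missing:
        raise ValueError(f"LOBs without an account mapping: {missing}")

    requests: List[Dict[str, Any]] = []
    for lob, account_id in lob_to_account.items():
        # Account IDs are stored as strings so leading zeros are preserved.
        requests.append(
            {
                "TableName": LOBS_TABLE,
                "Item": {"LOB": {"S": lob}, "ACCOUNT_ID": {"S": account_id}},
            }
        )
    for user, lob in user_to_lob.items():
        requests.append(
            {
                "TableName": USERS_TABLE,
                "Item": {USER_KEY: {"S": user}, "LOB": {"S": lob}},
            }
        )
    return requests


def populate(dynamodb: Any, requests: List[Dict[str, Any]]) -> None:
    """Write each request with a DynamoDB client (for example, a boto3 client)."""
    for request in requests:
        dynamodb.put_item(**request)
```

For the two demo users, the input would be `{"user-lob-a": "lob-a", "user-lob-b": "lob-b"}` together with a mapping from `lob-a` and `lob-b` to your two account IDs.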

Test the deployment

At this point, we can test the deployment by going to API Gateway and checking how the API responds for any of the users. We get a presigned URL in the response; however, consuming that URL in the browser will give an auth token error.

For this demo, we have set up a simulated on-premises environment with a bastion host and a Windows application. We install Firefox in the Windows instance and use the dev tools to add authorization headers to our requests and test the solution. More detailed information on how to set up the on-premises simulated environment is available in the associated GitHub repository.

The following diagram shows our test architecture.

We have two users, one for LOB A (User A) and another one for LOB B (User B), and we show how the Studio domain changes just by changing the authorization key retrieved from Amazon Cognito when logging in as User A and User B.

Complete the following steps to test the deployment:

Retrieve the session token for User A, as shown in Part 2 of the series and also in the instructions in the GitHub repository.

We use the following example command to get the user credentials from Amazon Cognito:

For this demo, we use a simulated Windows on-premises application. To connect to the Windows instance, you can follow the same approach specified in Secure access to Amazon SageMaker Studio with AWS SSO and a SAML application.

Firefox should already be installed on the instance. If it isn't, install it after you connect.

Open Firefox and try to access the API of Studio with either user-lob-a or user-lob-b as the API path parameter.

You get a not authorized message.

Open the Firefox developer tools and, on the Network tab, right-click the previous API call and choose Edit and Resend.

Here we add the token as an authorization header in the Firefox developer tools and make the request to the Studio access Gateway API again.

This time, we see in the developer tools that the URL is returned along with a 302 redirect.

Although the redirect won't work when using the developer tools, you can still choose it to access the LOB SageMaker domain for that user.

Repeat for User B with its corresponding token and check that they get redirected to a different Studio domain.

If you perform these steps correctly, you can access both domains at the same time.

In our on-premises Windows application, we can have both domains consumed via the Studio VPC endpoint through our VPC peering connection.
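The token retrieval and the authorized request from the steps above can also be scripted. The sketch below is a hedged illustration only: it assumes the Amazon Cognito app client allows the `USER_PASSWORD_AUTH` flow, that the authorizer accepts the ID token (the `token_key` parameter lets you pick the access token instead), and that the token is sent as the raw `Authorization` header value. The API base URL is a placeholder, and the request only resolves from inside the corporate network.

```python
import urllib.request
from typing import Any
from urllib.parse import quote


def get_token(
    cognito: Any,
    client_id: str,
    username: str,
    password: str,
    token_key: str = "IdToken",  # assumption: could also be "AccessToken"
) -> str:
    """Authenticate against the user pool and return one of the issued tokens."""
    response = cognito.initiate_auth(
        AuthFlow="USER_PASSWORD_AUTH",
        ClientId=client_id,
        AuthParameters={"USERNAME": username, "PASSWORD": password},
    )
    return response["AuthenticationResult"][token_key]


def build_studio_request(
    api_base: str, user_name: str, token: str
) -> urllib.request.Request:
    """Build the access API request with the user name as the path parameter."""
    url = f"{api_base.rstrip('/')}/{quote(user_name, safe='')}"
    return urllib.request.Request(url, headers={"Authorization": token})
```

Here `cognito` would typically be a boto3 `cognito-idp` client. Sending the request with `urllib.request.urlopen` follows the 302 redirect automatically, so inspect the `Location` header with a custom opener if you only want the presigned URL itself.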

Let’s explore some other testing scenarios.

If you edit the API call again and change the path to the opposite LOB, resending the request returns a forbidden response from API Gateway.

Trying to take the returned URL for the correct user and consume it in your laptop's browser will also fail, because it won’t be consumed via the internal Studio VPC endpoint. This is the same error we saw when testing with API Gateway: an “Auth token containing insufficient permissions” error.

Taking too long to consume the presigned URL will result in an “Invalid or Expired Auth Token” error.

Scale domains

Whenever a new SageMaker domain is added, you must complete the following networking and access steps:

Share the transit gateway with the new account using AWS Resource Access Manager (AWS RAM).

Attach the VPC to the transit gateway in the LOB account (this is done in AWS CloudFormation).

In our scenario, the transit gateway was set with automatic association to the default route table and automatic propagation enabled. In a real-world use case, you may need to complete three additional steps:

In the shared services account, associate the attached Studio VPC to the respective Transit Gateway route table for SageMaker domains.

Propagate the associated VPC routes to Transit Gateway.

Lastly, add the account ID along with the LOB name to the LOBs’ DynamoDB table.
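The onboarding steps above could be automated along these lines. This is a sketch under assumptions rather than the repository's tooling: the share name, the `lobs` table name, and the function names are hypothetical, and the clients are passed in (with boto3 they would be the `ram`, `dynamodb`, and `ec2` clients). The VPC attachment itself is left to AWS CloudFormation, as in the post.

```python
from typing import Any

LOBS_TABLE = "lobs"  # hypothetical table name


def onboard_lob(
    ram: Any, dynamodb: Any, lob_name: str, account_id: str, transit_gateway_arn: str
) -> None:
    """Share the transit gateway with a new account and register the LOB."""
    # Share the transit gateway through AWS RAM with the new LOB account.
    ram.create_resource_share(
        name=f"tgw-share-{lob_name}",
        resourceArns=[transit_gateway_arn],
        principals=[account_id],
    )
    # Record the LOB -> account mapping used by the routing Lambda function.
    dynamodb.put_item(
        TableName=LOBS_TABLE,
        Item={"LOB": {"S": lob_name}, "ACCOUNT_ID": {"S": account_id}},
    )


def register_attachment(ec2: Any, route_table_id: str, attachment_id: str) -> None:
    """Associate and propagate an attachment when automatic handling is disabled."""
    ec2.associate_transit_gateway_route_table(
        TransitGatewayRouteTableId=route_table_id,
        TransitGatewayAttachmentId=attachment_id,
    )
    ec2.enable_transit_gateway_route_table_propagation(
        TransitGatewayRouteTableId=route_table_id,
        TransitGatewayAttachmentId=attachment_id,
    )
```

`register_attachment` is only needed when the transit gateway does not use automatic association and propagation, which corresponds to the additional real-world steps listed above.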

Clean up

Complete the following steps to clean up your resources:

Delete the VPC peering connection.

Remove the associated VPCs from the private hosted zones.

Delete the on-premises simulator template from the shared services account.

Delete the Studio CloudFormation templates from the SageMaker accounts.

Delete the access CloudFormation template from the shared services account.

Delete the networking CloudFormation template from the shared services account.

Conclusion

In this post, we walked through how you can set up multi-account private API access to Studio. We explained how the networking and application flows happen as well as how you can easily scale this architecture for multiple accounts and SageMaker domains. Head over to the GitHub repository to begin your journey. We’d love to hear your feedback!

About the Authors

Neelam Koshiya is an Enterprise Solutions Architect at AWS. Her current focus is helping enterprise customers with their cloud adoption journey for strategic business outcomes. In her spare time, she enjoys reading and being outdoors.

Alberto Menendez is an Associate DevOps Consultant in Professional Services at AWS. He helps accelerate customers' journeys to the cloud. In his free time, he enjoys playing sports, especially basketball and padel, spending time with family and friends, and learning about technology.

Rajesh Ramchander is a Senior Data & ML Engineer in Professional Services at AWS. He helps customers migrate big data and AI/ML workloads to AWS.

Ram Vittal is a machine learning solutions architect at AWS. He has over 20 years of experience architecting and building distributed, hybrid, and cloud applications. He is passionate about building secure and scalable AI/ML and big data solutions to help enterprise customers with their cloud adoption and optimization journey to improve their business outcomes. In his spare time, he enjoys tennis and photography.
