AWS recently added Google Cloud for MySQL as a source to AWS Data Migration Service (AWS DMS) for MySQL 8.0 and newer. In this post, we demonstrate how you can migrate from Google Cloud for MySQL to Amazon Aurora MySQL-Compatible Edition in an automated way and minimize downtime using AWS DMS.

As of this writing, there is a limitation on supporting databases that have Google SQL’s Allow only SSL connections setting because it requires both server-side and client-side certificate validation. AWS DMS only supports server-side certificate verification. In this post, we demonstrate an approach to migrate databases with and without SSL.

Solution overview

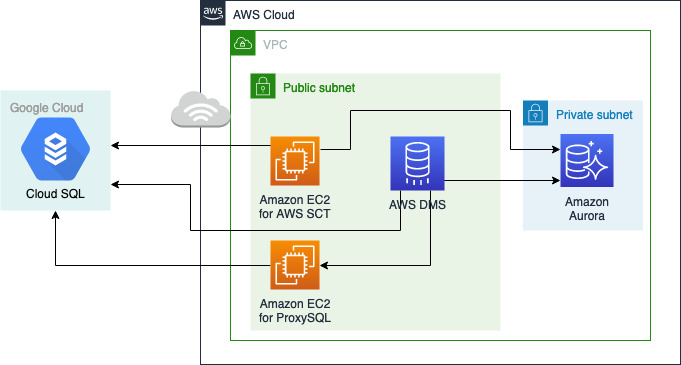

For Google Cloud for MySQL databases without SSL, we use AWS Schema Conversion Tool (AWS SCT) to convert the source schema to the target Aurora MySQL database. We use AWS DMS to migrate the data between Google Cloud for MySQL and Aurora MySQL with change data capture (CDC) enabled for incremental changes.

For Google Cloud for MySQL databases with SSL, we install a non-SSL MySQL proxy on a standalone Amazon Elastic Compute Cloud (Amazon EC2) instance. AWS DMS connects to that proxy MySQL instance to communicate with the source Google Cloud for MySQL database. This enables us to migrate the data from an SSL-enabled Google Cloud for MySQL database to Aurora MySQL. You have to reach the Google Cloud for MySQL database over the internet from your AWS environment, but that traffic is encrypted with SSL during transit.

The following diagram shows the architecture of the overall solution.

We use a simple customers-products-orders table structure and the following dataset for this migration. The following figure shows the entity relationship of this sample dataset. There are three tables: customers, products and orders. Orders has two foreign keys, one from the customer table and the other from the product table.

Prerequisites

For this walkthrough, you should have the following prerequisites:

An existing Google Cloud for MySQL database with public connectivity

An AWS account

An Aurora MySQL database, which acts as the destination

This solution incurs costs in your account while using the services described.

Section 1: Migrate Google Cloud for MySQL databases without SSL

For homogeneous migrations, particularly for those that don’t need to capture ongoing changes, we recommend MySQL native tools like mysqldump and the MySQL Workbench Data Export and Import wizard. If the source is a different database engine, such as Google Cloud for SQL Server, then AWS SCT is required to convert the schema. However, even for this homogeneous migration (Google Cloud for MySQL to Aurora MySQL), we use AWS SCT to apply schema changes to the destination before starting the migration. The following diagram shows the architecture.

You can follow a similar approach for heterogenous migrations (for example, from Google Cloud for MySQL to Amazon Aurora PostgreSQL-Compatible Edition).

Install AWS SCT

AWS SCT is a free application that you can install on an on-premises server, a local workstation, or an EC2 instance. For installation instructions, refer to Installing, verifying, and updating AWS SCT. For this post, we create a t3.medium EC2 instance and install the tool there.

Create the AWS DMS replication instance

You need a replication instance to migrate databases. This instance requires sufficient storage and processing power to perform the tasks that you assign and migrate data from your source database to the target database. For appropriate instance selection, see Working with an AWS DMS replication instance.

Let’s start by creating a basic instance. We set the parameters as follows:

For Name, enter a friendly name that uniquely identifies this instance.

For Instance class, choose an instance according to your workload.

For Engine version, we recommend choosing the latest version.

For Allocated storage, choose the storage according to your workload.

For VPC, choose the VPC where this instance will be placed.

We recommend choosing Multi-AZ for production workloads.

Select the Publicly accessible setting, because this instance needs to communicate with Google Cloud over the internet.

Create the target Aurora MySQL instance

If you already have an Aurora MySQL instance, feel free to use it. If not, create an Aurora MySQL cluster. Make sure the target has both AWS SCT and AWS DMS permissions. AWS SCT needs the following additional permissions to apply a schema to the target:

Configure networking changes on Google Cloud

To ensure the AWS DMS instance and AWS SCT running on Amazon EC2 can connect to the Google Cloud for MySQL instance, make sure the instance has a public IP. Add the public IP of the AWS DMS replication instance and the AWS SCT EC2 instance under Authorized networks. The following image shows the configuration.

Configure source MySQL changes

We create a database user and, using the principle of least privilege, assign only the permissions needed for the task:

For the AWS SCT to read the schema properly, you need to add the following privileges:

If you want to also capture ongoing changes in the AWS DMS task, you need to add the following privileges:

Perform schema conversion

Now it’s time to apply the source schema to the target.

Connect to the EC2 instance where AWS SCT is installed.

Open the AWS SCT application and on the File menu, chose New Project Wizard.

For Project name, enter an appropriate name.

For Location, choose an appropriate location on your local machine.

For Kind, choose SQL Database.

For Source engine, choose MySQL.

For Migration, select I want to keep the same engine but optimize for the cloud.

Choose Next.

For Connection name, enter an appropriate name.

For Server name, choose the source Google Cloud for MySQL public IP.

For Server port, use 3306 if using the default port.

Enter the user name and password you created as a prerequisite.

Choose Next.

After the source connection is established, it shows all the schemas that the MySQL user has access to.

Select the schemas you want to migrate and choose Next.

The database migration assessment report summarizes all the schema conversion tasks and details the action items for schemas that can’t be converted to the DB engine of your target DB instance. Because this a homogeneous migration, the migration assessment may not provide many action items, as shown in in the following screenshot.

Now you configure the target.

For Name, enter the Aurora MySQL DNS.

For Server port¸ use 3306 if using the default port.

Enter the user name and password for the user with appropriate write permissions.

Choose Finish to end the wizard.

Because there are no action items in terms of converting or adjusting the source schema, you can directly load the schema from source to target, as shown in the following screenshot.

Choose (right-click) the target schema and choose Apply to database.

A save icon appears on top of the objects, indicating that they’re applied to the target database.

Now that the source schema is applied to the target, it’s time to create AWS DMS endpoints and tasks.

Create the AWS DMS endpoints

An endpoint provides the connection, data store type, and location information about your sources and the targets. You need to create at least one source and one target endpoint.

Source endpoint

When creating the source endpoint, provide the following information:

For Endpoint identifier, enter a friendly name.

For Source engine, choose Google Cloud for MySQL.

For Access to endpoint, select Provide access information manually.

For Server name, enter the IP address of the Cloud SQL.

For Port, use 3306 if using the default port.

Enter the appropriate user name and password.

Test the endpoint, then complete endpoint creation.

Target endpoint

Repeat the previous process with the following parameters for the target endpoint:

For Select RDS instance, choose the Aurora MySQL instance.

For Access to endpoint, select Provide access information manually.

For Server name, use the pre-populated setting.

For Port, use 3306 if using the default port.

Enter the appropriate user name and password.

Test the endpoint, then complete endpoint creation.

Create the AWS DMS migration task

An AWS DMS task is where all the work happens. This is where you configure what database objects to migrate, logging requirements, error handling, and so on. Provide the following information when creating your task:

For Task identifier, enter a friendly and identifiable name.

For Replication instance, choose the one you created.

For Source database endpoint, choose the Google Cloud for MySQL endpoint you created.

For Target database endpoint, choose the Aurora MySQL endpoint you created.

For Migration type, choose Migrate existing data and replicate ongoing changes.

Enable Amazon CloudWatch Logs so you can debug issues.

For Table mappings, select Wizard.

Choose the schemas you want to migrate.

Leave everything else as defaults and choose Create task.

As soon as the task is created, it’s in Creating status. After a few seconds, it changes to Ready status. The migration (and CDC if enabled) starts automatically.

After the load is complete, navigate to the Table statistics tab as shown in the following screenshot.

If replicating ongoing changes, try inserting new records on the source to see the inserts column updated on the statistics, as shown in the last row in the following screenshot.

You may want to connect to the destination using something like MySQL Workbench. Also, you may want to perform data validation. When you’re satisfied with the counts between the source and destination, feel free to point your applications to the Aurora MySQL database and shut down the source Google Cloud for MySQL database.

Section 2: Migrate SSL-enabled Google Cloud for MySQL databases

As of this writing, AWS DMS does not support the Google Cloud for MySQL setting Allow only SSL connections. A possible way to work around this limitation is to create a MySQL proxy in a known, safe environment. The proxy accepts non-SSL connections but uses SSL to connect to the source Google Cloud for MySQL database. An EC2 instance with a MySQL proxy installed can handle that for you.

We use ProxySQL, an open-source, high performance, high availability, database protocol aware proxy for MySQL. The following diagram shows the architecture.

Install ProxySQL

The following instructions apply for an Amazon Linux AMI. You can follow a similar approach for other Linux distributions. If you don’t have an instance that is available, launch one. The size and instance type depend upon various factors, like how much data is there and how quickly you want the migration to complete.

When an instance is ready, make sure you allow SSH traffic from wherever you want to connect from. SSH into the instance and run the following commands. These commands get ProxySQL from the yum repository and install it. The command also installs MySQL. You may need to make slight adjustments for a different Linux distribution.

If everything worked, you should now be logged in to ProxySQL.

We recommend changing the default password for the ProxySQL admin from admin to something else:

Create the AWS DMS replication instance

As stated earlier, you need a replication instance to migrate databases. This instance requires sufficient storage and processing power to perform the tasks that you assign and migrate data from your source database to the target database. For appropriate instance selection, see Working with an AWS DMS replication instance.

Create your instance with the following parameters:

For Name, enter a friendly name that uniquely identifies this instance.

For Instance class, choose an instance according to your workload.

For Engine version, we recommend choosing the latest version.

For Allocated storage, choose the storage according to your workload.

For VPC, choose the VPC where this instance will be placed.

We recommend choosing Multi-AZ for production workloads.

De-select the Publicly accessible setting

Create the target Aurora MySQL instance

If you already have an Aurora MySQL instance, feel free to use it. If not, create an Aurora MySQL cluster. Make sure the target has AWS DMS permissions.

Configure networking, security, and user permissions on Google Cloud for MySQL

On the Google Cloud Platform (GCP) console, navigate to the networking section under the MySQL cluster that you want to migrate, and complete the following steps:

Add the public IP of the EC2 instance running ProxySQL so traffic can reach from that.

On the Security tab, select Allow only SSL connections.

Generate a client certificate if you don’t have one already.

Download both the server certificate and the client certificates.

On the source MySQL database, create two users: a dmsuser that is used by AWS DMS to migrate the data, and a monitor user that is used by ProxySQL to monitor the connection:

Configure ProxySQL

The following commands configure your ProxySQL instance to ensure it can connect to the source Google Cloud for MySQL database. You add the server information, user information, and SSL certificates, and also create a monitor user that pings the source to make sure it’s online. You should run these commands in the ProxySQLAdmin prompt. If you lost that on the ProxySQL EC2 instance, run the following code:

Insert the source MySQL information in the mysql_servers table:

Load the servers to runtime and persist it to disk:

Insert the dmsuser in the mysql_users table:

Load the users to runtime and persist it to disk:

Copy the SSL certificates you downloaded from GCP to the /var/lib/proxysql directory. These commands copy those files from a local machine (MacOS terminal). Follow the recommended directions for the operating system of your local machine if you’re not on MacOS.

They will be in the home directory in the EC2 instance for ProxySQL, so copy them to /var/lib/proxysql:

Run the following queries to set various variables for SSL and monitoring of the Google Cloud for MySQL database from ProxySQL:

Run SELECT on the MySQL connect log to make sure you can successfully connect to the source Google Cloud for MySQL database:

The following screenshot shows that ProxySQL is successfully interacting with the source database.

Create the AWS DMS endpoints

As stated earlier, an endpoint provides the connection, data store type, and location information about your sources and the targets. You need to create at least one source and one target endpoint.

Source endpoint

Because you already have a non-SSL proxy running for the Google Cloud for MySQL database, you can configure that as the source as follows:

For Endpoint identifier, enter a friendly name.

For Source engine, choose MySQL.

For Access to endpoint, select Provide access information manually.

For Server name, enter the IP address of the EC2 instance running ProxySQL.

For Port, use 3306 if using the default port.

Enter the appropriate user name and password.

Test the endpoint, then complete endpoint creation.

Target endpoint

Repeat the process with the following parameters for the target endpoint:

For Select RDS instance, choose the Aurora MySQL instance.

For Access to endpoint, select Provide access information manually.

For Server name, use the pre-populated setting.

For Port, use 3306 if using the default port.

Enter the appropriate user name and password.

Test the endpoint, then complete endpoint creation.

Create the AWS DMS task

As mentioned earlier, the AWS DMS task is where you can configure what database objects to migrate, logging requirements, error handling, and so on. Create your task with the following information:

For Task identifier, enter a friendly and identifiable name.

For Replication instance, choose the one you created.

For Source database endpoint, choose the ProxySQL MySQL endpoint you created.

For Target database endpoint, choose the Aurora MySQL endpoint you created.

For Migration type, choose Migrate existing data.

Enable CloudWatch Logs so you can debug issues.

For Table mappings¸ select Wizard.

Choose the schemas you want to migrate.

Leave everything else as defaults and choose Create task.

As soon as the task is created, it’s in Creating status. After a few seconds, it changes to Ready status. The migration starts automatically.

After the load is complete, navigate to the Table statistics tab as shown in the following screenshot.

You may want to connect to the destination using something like MySQL Workbench. Also, you may want to perform data validation. When you’re satisfied with the counts between the source and destination, feel free to point your applications to the Aurora MySQL database.

Clean up

Stop the EC2 instances that are running AWS SCT and ProxySQL. Additionally, either delete the associated security groups for those instances or remove rules that are no longer needed. If you don’t need the DMS instance anymore, delete it.

Conclusion

In this post, we demonstrated how to migrate your database and schema from Google Cloud for MySQL (with and without SSL) to Aurora MYSQL using the AWS SCT and AWS DMS. Try this solution out and let us know if you have any comments.

See a blog post from a customer following a similar approach: Gearing up OLX Autos’ platform by migrating from GCP to AWS using AWS DMS

About the author

Kasi Muthu is a global partner solutions architect focusing on databases, analytics and AI/ML at AWS based out of Houston, TX. He is passionate about helping partners and customers accelerate their cloud data journey. He is a trusted advisor in this field and has plenty of experience architecting and building scalable, resilient and performant workloads in the cloud. Outside of work, he enjoys spending time with his family and spending quite a bit of his free time on YouTube.

Read MoreAWS Database Blog