This is a guest post co-written by Mayank Lahiri (Ph.D), Software Architect at Twilio Inc.

Twilio, a trailblazer in customer engagement and communication services, sustains exponential growth of its billing platform on Amazon Aurora. Twilio enables software engineers to programmatically make and receive phone and video calls, send and receive text messages and emails, and perform other communication functions using its web service APIs. Twilio’s billing platform is one of its largest application platforms by traffic volume. It provides internal teams with an end-to-end microtransaction pricing engine that processes more than one billion billing transactions per day.

In this post, we discuss how Twilio modernized their billing platform with Amazon Aurora MySQL-Compatible Edition to support their growth. Amazon Aurora is a MySQL and PostgreSQL-compatible relational database built for the cloud. Aurora combines the performance and availability of traditional enterprise databases with the simplicity and cost-effectiveness of open-source databases.

Billing platform: Previous architecture

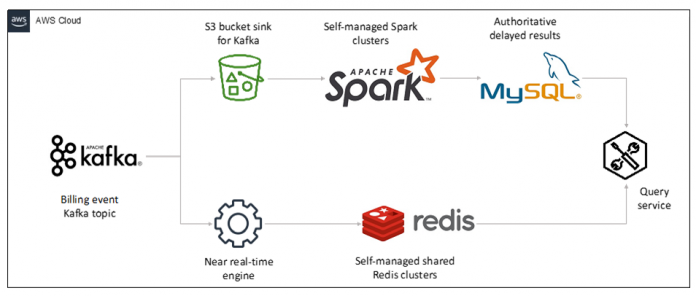

Twilio’s previous billing processing architecture consisted of an authoritative batch layer and a non-authoritative, but near real-time speed layer. The batch layer was made up of a set of Spark jobs running on a self-managed Spark cluster that wrote directly to Amazon Simple Storage Service (Amazon S3) buckets and self-managed MySQL databases. The near real-time speed layer consisted of a set of manually sharded Redis clusters. To collect and report on a customer account balance, for example, short-term counters from the Redis system were dynamically combined with longer-term outputs from the Spark jobs.
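The way the two layers were combined for a balance lookup can be sketched roughly as follows. This is a minimal illustration, not Twilio's implementation: the `BatchSnapshot` type, the `combined_balance` function, the time-bucketed counter layout, and the watermark convention are all assumptions introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BatchSnapshot:
    """Hypothetical authoritative total from the batch layer.

    The total is assumed to cover all events up to and including `watermark`
    (epoch seconds).
    """
    total_cents: int
    watermark: int


def combined_balance(snapshot: BatchSnapshot, speed_counters: dict[int, int]) -> int:
    """Add speed-layer counters newer than the batch watermark to the batch total.

    `speed_counters` maps a bucket start time (epoch seconds) to the amount
    accumulated in that bucket. Buckets at or before the watermark are skipped
    because the batch output is assumed to cover them already.
    """
    recent = sum(
        amount
        for bucket_start, amount in speed_counters.items()
        if bucket_start > snapshot.watermark
    )
    return snapshot.total_cents + recent


snapshot = BatchSnapshot(total_cents=125_000, watermark=1_000)
counters = {900: 400, 1_000: 250, 1_060: 300, 1_120: 150}
print(combined_balance(snapshot, counters))  # 125450
```

Even in this toy form, every read has to reconcile two data sources with different freshness guarantees, which hints at why keeping both layers in step was costly.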

The following diagram depicts the previous architecture that comprised Twilio’s billing processing pipeline. All services, databases, and data processing frameworks in the diagram were self-managed on Amazon Elastic Compute Cloud (Amazon EC2).

The previous architecture used 102 EC2 nodes for running Spark clusters, 144 EC2 nodes for the Redis clusters, and 38 EC2 instances for serving the output from the Spark jobs. Instance classes spanned the c5, r5, r4, and i3 families, with a total provisioned Amazon Elastic Block Store (Amazon EBS) capacity in excess of 300 TB. The system processed one billion billing events per day. It relied on Amazon S3 and on internal platform services that brokered events through Kafka into the various processing subsystems (the Kafka brokers aren’t included in the instance counts given).

Key drivers to modernize the billing platform

Twilio experienced significant transaction volume growth in 2020. Twilio’s recent acquisitions of companies like SendGrid and Segment also required greater scalability to support future integration and organic growth. The previous billing platform, designed and deployed more than 5 years ago, had reached the limits of its scalability and could no longer keep up with the platform’s growth. It was also expensive to run, and its complex infrastructure made it very difficult to upgrade and to add new features.

The following key factors drove the push for modernization:

The previous platform introduced an unacceptable amount of latency (1–2 hours) due to the authoritative batch phase, which sometimes caused delays in month-end financial closing.

The self-managed Spark and Redis clusters were consuming far too much engineering time, and most of the institutional knowledge of this legacy platform had been lost over the years. The on-call burden for the aggregation pipeline was among the highest for any system in the company, which didn’t align with Twilio’s philosophy of small DevOps engineering teams.

Innovation had stalled partly due to the difficulty of simultaneously upgrading both paths of the platform (batch layer and speed layer).

The modernization journey started with the selection of Aurora MySQL

A key requirement for the next-generation system was that the new architecture would reduce the operational burden on engineering teams. A managed service datastore was key to satisfying this requirement. Twilio evaluated multiple database solutions and finally selected Aurora for its new billing processing architecture. To accommodate Twilio’s write-intensive workload, the team distributed the total traffic over multiple Aurora clusters by implementing a custom sharding algorithm that is based on account_id and other attributes of the write-intensive workload.
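The post does not describe the sharding algorithm itself, so the following is only a sketch of one common approach: hashing a routing key to a shard index. The `shard_for` function, the use of SHA-256, and the example attribute are assumptions made for illustration.

```python
import hashlib


def shard_for(account_id: str, num_shards: int, *attributes: str) -> int:
    """Map an account (plus optional extra attributes) to a shard index.

    hashlib is used instead of the built-in hash() because hash() of a str is
    randomized per process, which would route the same account to a different
    shard after a restart.
    """
    if num_shards <= 0:
        raise ValueError("num_shards must be positive")
    # Join the key parts with a separator that is unlikely to appear in the values.
    key = "\x1f".join((account_id, *attributes))
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


# Hypothetical account ID and attribute; 30 matches the cluster count given later in the post.
shard = shard_for("AC123", 30, "sms")
print(f"route to cluster {shard}")
```

One trade-off of plain modulo hashing is that changing `num_shards` remaps most keys, so a production design that expects to add shards would likely need a lookup table or a consistent-hashing scheme instead.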

The first step in building confidence in the solution was to pursue a detailed proof of concept (POC). To maintain schema compatibility with the output of the old system (Spark and Redis clusters), the engineering team started with the final MySQL tables generated by the prior system. An online transaction processing (OLTP) workload was built on top of these tables, consisting of approximately 10–40 MySQL 5.7 UPSERT statements per transaction, which was representative of the production workload.
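In MySQL 5.7, an upsert is typically written as `INSERT ... ON DUPLICATE KEY UPDATE`. The sketch below builds such a statement for a counter-style table. The table and column names are hypothetical, and the assumption that each upsert adds to running counters is ours, not something the post states.

```python
def build_upsert(table: str, key_cols: list[str], counter_cols: list[str]) -> str:
    """Build a parameterized MySQL 5.7 upsert that adds to counters on key collision.

    Identifiers are interpolated directly, so they must be trusted constants.
    Row values are passed separately through the driver's %s placeholders.
    """
    cols = key_cols + counter_cols
    placeholders = ", ".join(["%s"] * len(cols))
    # VALUES(col) refers to the value that would have been inserted for col.
    updates = ", ".join(f"{c} = {c} + VALUES({c})" for c in counter_cols)
    return (
        f"INSERT INTO {table} ({', '.join(cols)}) "
        f"VALUES ({placeholders}) "
        f"ON DUPLICATE KEY UPDATE {updates}"
    )


# Hypothetical table and columns, for illustration only.
sql = build_upsert(
    "account_usage",
    ["account_id", "billing_period"],
    ["amount_cents", "event_count"],
)
print(sql)
```

A transaction in the POC workload would then run roughly 10–40 statements of this kind before committing.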

Because access to production billing data is tightly controlled for security, privacy, and compliance reasons, a realistic synthetic data generator was created for the proof of concept to quickly battle-test the architecture. The synthetic data generator created realistic billing events using three distinct traffic generation models: uniform, skewed, and hot shard. In all these models, the primary key is a combination of account_id and other attributes; a hot primary key occurs when a few accounts generate a majority of the traffic.

Uniform – This is a best-case model, where each billing event is generated by a unique account. In this model, there are no hot primary keys caused by certain accounts generating more traffic than others.

Skewed – In this model, most traffic is generated by a small set of accounts, which is more representative of real-world traffic. This leads to several hot primary keys created by these high-volume accounts.

Hot shard – This is a worst-case model, where all billing events are generated by a single customer account. This leads to very high contention in the database for a small set of rows.
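The three traffic models can be approximated with a few lines of code. This is a simplified sketch, not the generator Twilio built: it produces only account IDs rather than full billing events, and the Zipf-like weighting used for the skewed model is an assumption.

```python
import random
from collections import Counter


def generate_account_ids(model: str, n_events: int,
                         n_accounts: int = 1000, seed: int = 0) -> list[int]:
    """Return one account ID per synthetic billing event for the given model."""
    rng = random.Random(seed)
    if model == "uniform":
        # Best case: every event comes from a distinct account.
        return list(range(n_events))
    if model == "skewed":
        # Zipf-like weights: account k is chosen with weight 1 / (k + 1),
        # so a small set of accounts produces most of the traffic.
        weights = [1.0 / (k + 1) for k in range(n_accounts)]
        return rng.choices(range(n_accounts), weights=weights, k=n_events)
    if model == "hot_shard":
        # Worst case: a single account generates every event.
        return [0] * n_events
    raise ValueError(f"unknown traffic model: {model}")


for model in ("uniform", "skewed", "hot_shard"):
    ids = generate_account_ids(model, 10_000)
    top_account, top_count = Counter(ids).most_common(1)[0]
    print(f"{model}: {len(set(ids))} distinct accounts, busiest has {top_count} events")
```

Feeding each model's output through the write path makes it possible to compare how much row-level contention each pattern produces.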

The skewed and hot shard synthetic traffic modes were designed to stress test individual Aurora instances to find the Aurora cluster’s peak write throughput. In production, these write workloads are sharded over multiple Aurora clusters to mitigate the effects of hot primary keys.

Twilio experimented with different Aurora DB instance classes in production mode for each of the three traffic models to test and tune performance. Production mode entailed using Aurora features such as encryption, Aurora replicas across multiple Availability Zones, automatic backups, and Amazon RDS Performance Insights. This also allowed them to collect cost data for variables like database instances, database storage and I/O requests, and snapshot exports, and to determine the ideal sharding configuration to support current and future volume. They also saw additional savings from switching to AWS Graviton2 r6g DB instance classes. After Twilio had both performance and cost data, they felt confident that they could build a production-grade billing platform on Aurora. This became the first large-scale, production-facing Aurora and Graviton2 initiative at Twilio.

Modernized architecture and future direction

As an initial production setup, the new architecture based on Aurora was built and deployed as a parallel shadow system fed from Kafka. It consists of 30 Aurora clusters; each Aurora cluster has one write instance and one Aurora replica (all using a mix of r6g.4xlarge and r6g.8xlarge Graviton2 instances). This was done to let the OLTP write path of the platform “bake,” collect production cost and performance metrics, and generally build confidence in the new architecture. Adding the read query path then scaled each Aurora cluster to between 2 and 5 auto scaling Aurora replicas across multiple Availability Zones. This integrated the engine into the broader ecosystem of billing services, gradually allowing the older architecture to be deprecated.

The rebuilt billing processing engine that feeds Aurora is a collection of stateless microservices running on Amazon EC2. It consists of two broad components: complex, proprietary billing rules that determine which accounts to charge and how much, and a custom sharding layer that determines which Aurora cluster to send transactions to.

The following diagram shows the new, simplified architecture using Aurora MySQL, which has enabled innovation by having a single source of truth over the previous platform, which had two layers (batch layer and speed layer).

The following are some key metrics of the current production system using Aurora MySQL:

Ability to sustain 2.5 times the peak processing rate of the current system at less than 60% of the cost of the previous architecture

No observable Aurora downtime in over 5 months of experimentation and almost 2 months of running shadow production

Steady state metrics on over 40 accumulated days of live production data across all Aurora clusters:

Over 46 billion transaction records indexed and available, compared to less than one billion stored in the former online Redis system

4.8 TB of data across all tables

Over 11,000 active database connections to all clusters

Less than 10 milliseconds median end-to-end transaction run latency

Less than 60 milliseconds 99th percentile end-to-end transaction run latency

During the initial data load from Kafka into the new architecture, the overall system was able to achieve the following sustained throughput metrics over a period of 4 days across all shards:

60,000 MySQL write transactions per second sustained, with every transaction containing multiple inserts and updates

1.3 million queries per second sustained, all inserts and updates

Less than 6 millisecond maximum Aurora write latency on slowest shard
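As a rough sanity check, the throughput figures above can be related to each other and to the cluster count. The calculation below assumes that the load ran against the 30 Aurora clusters described earlier and that traffic was spread evenly across them; neither is stated for the initial load, so the per-cluster numbers are indicative only.

```python
WRITE_TPS = 60_000   # sustained write transactions per second (from the post)
QPS = 1_300_000      # sustained queries per second (from the post)
CLUSTERS = 30        # assumption: same cluster count as the shadow deployment

statements_per_txn = QPS / WRITE_TPS
tps_per_cluster = WRITE_TPS / CLUSTERS
qps_per_cluster = QPS / CLUSTERS

print(f"{statements_per_txn:.1f} statements per transaction")     # 21.7
print(f"{tps_per_cluster:,.0f} transactions/s per cluster")       # 2,000
print(f"{qps_per_cluster:,.0f} queries/s per cluster")            # 43,333
```

The average of about 21.7 statements per transaction falls inside the 10–40 range used for the proof-of-concept workload.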

Based on these metrics, Twilio achieved higher scalability, lower latency, and improved developer efficiency by replacing two aggregation systems (Spark and Redis clusters) with a single system. This also allows Twilio to increase the shard count to accommodate eight times the headroom for peak processing volumes, up from the previous value of 2.5 times. Over the next 6–8 months, Twilio will integrate the core engine based on Aurora into the broader billing ecosystem. To attain further cost optimizations, Twilio is exploring Amazon Aurora Serverless v2, which can scale to hundreds of thousands of transactions in a fraction of a second and deliver up to 90% cost savings compared to provisioning for peak capacity. Similarly, to achieve disaster recovery across regions, Twilio will use Amazon Aurora Global Database, which is designed for globally distributed applications and replicates data with no impact on database performance.

Benefits of the new architecture

By combining the two paths of the former platform into a single go-forward interface for aggregated billing data, Twilio is now able to realize several technical and business benefits:

Developers are able to use a familiar database technology (MySQL) without having to ramp up on other technologies and reconcile the differences between Redis queries and Spark jobs written in Scala.

Any innovation and changes to billing processing can occur in a single location and be built, tested, and deployed seamlessly. Previously, changes needed to be written in two very different data layers (Spark and Redis), in multiple languages.

The operational burden on engineers has decreased. The Twilio engineering team was able to take advantage of AWS enterprise support when encountering difficulties with Aurora, rather than debugging database and Spark issues themselves.

The scalability of the design has opened up a lot of headroom for growth—the Aurora-based system is able to sustain 2.5 times the previous peak volume without issues, with levers for increasing this even further as needed.

Conclusion

In this post, we saw how Twilio embarked on the journey to modernize its billing platform to support its growth. The key factors that drove the modernization decisions were the ability to scale the platform to support new acquisitions, improve latency for faster month-end financial closing, and facilitate future innovation. The modernized billing platform on Aurora also decreases the ongoing operational support burden for engineers, and the scalability of the design opens up a lot of headroom for growth.

We encourage you to look into modernizing your existing platforms with Aurora, a relational database service built for the cloud with high performance at global scale.

About the Authors

Purna Sanyal is a Senior Solutions Architect at AWS, helping strategic customers solve their business problems with successful cloud adoption and cloud migration. He provides technical thought leadership and architecture guidance, and conducts POCs to enable customers’ digital transformation. He is also passionate about building innovative solutions around digital native architecture, database, analytics, and machine learning.

Mayank Lahiri (Ph.D) is a Software Architect at Twilio Inc. His specialties are scalable distributed systems, cloud computing, data mining, and machine learning.
