Digital publishers are continuously looking for ways to streamline and automate their media workflows in order to generate and publish new content as rapidly as they can.

Many publishers have a large library of stock images that they use for their articles. These images can be reused many times for different stories, especially when they show celebrities. A journalist often needs to crop a particular celebrity out of an image for an upcoming story, for example when the image contains two people and only the primary celebrity is wanted. This is a manual, repetitive task that should be automated. Other times, celebrity images need to be reformatted for publishing to a variety of platforms such as mobile, social media, or digital news, or an author needs to change the image aspect ratio or put the celebrity in crisp focus.

In this post, we demonstrate how to use Amazon Rekognition to perform image analysis. Amazon Rekognition makes it easy to add this capability to your applications without any machine learning (ML) expertise and comes with various APIs to fulfil use cases such as object detection, content moderation, face detection and analysis, and text and celebrity recognition, which we use in this example.

The celebrity recognition feature in Amazon Rekognition automatically recognizes tens of thousands of well-known personalities in images and videos using ML. Celebrity recognition can detect not just the presence of a given celebrity but also their location within the image.

Overview of solution

In this post, we demonstrate how to pass in a photo, a celebrity name, and an aspect ratio for the output image, and generate a cropped image of that celebrity with their face in the center.

When working with the Amazon Rekognition celebrity detection API, many elements are returned in the response. The following are some key response elements:

MatchConfidence – A match confidence score that can be used to control API behavior. We recommend applying a suitable threshold to this score in your application to choose your preferred operating point. For example, by setting a threshold of 99%, you can reduce false positives but may miss some potential matches.

Name, Id, and Urls – The celebrity name, a unique Amazon Rekognition ID, and list of URLs such as the celebrity’s IMDb or Wikipedia link for further information.

BoundingBox – Coordinates of the rectangular bounding box location for each recognized celebrity face.

KnownGender – Known gender identity for each recognized celebrity.

Emotions – Emotion expressed on the celebrity’s face, for example, happy, sad, or angry.

Pose – Pose of the celebrity face, using three axes of roll, pitch, and yaw.

Smile – Whether the celebrity is smiling or not.
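As an illustration of applying a MatchConfidence threshold, the following is a minimal sketch rather than the post's original code. It assumes the response is the dictionary returned by recognize_celebrities, with recognized faces under the CelebrityFaces key and MatchConfidence expressed on a 0–100 scale. The function name confident_matches and the default threshold are hypothetical choices for this example.

```python
def confident_matches(response, threshold=99.0):
    """Return only the celebrity matches at or above the confidence threshold.

    `response` is assumed to be a recognize_celebrities response dictionary.
    `threshold` is on the same 0-100 scale as MatchConfidence.
    """
    return [
        celebrity
        for celebrity in response.get("CelebrityFaces", [])
        if celebrity.get("MatchConfidence", 0.0) >= threshold
    ]
```

Lowering the threshold returns more candidate matches at the cost of more false positives, so the value is best tuned against your own image library.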

Part of the API response from Amazon Rekognition includes the following code:

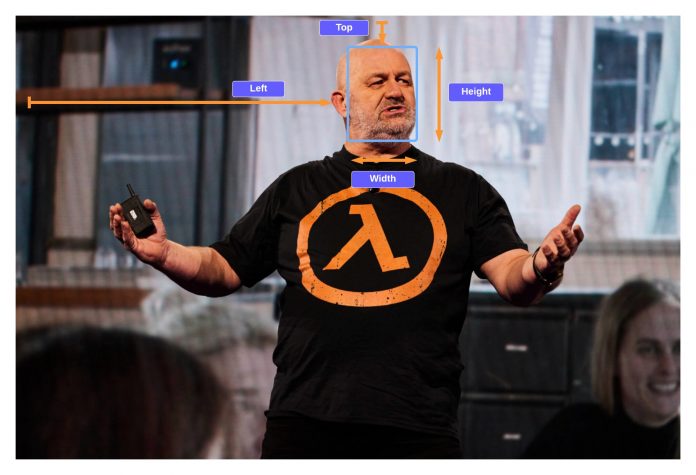

In this exercise, we demonstrate how to use the bounding box element to identify the location of the face, as shown in the following example image. All of the dimensions are represented as ratios of the overall image size, so the numbers in the response are between 0 and 1. For example, in the sample API response, the width of the bounding box is 0.1, which implies the face width is 10% of the total width of the image.
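To make the ratio convention concrete, here is a small sketch that converts a bounding box into pixel coordinates. The helper name box_to_pixels is hypothetical. It assumes the box is a dictionary with Left, Top, Width, and Height keys, and it returns the corners in (left, upper, right, lower) order, which is the order Pillow's Image.crop expects.

```python
def box_to_pixels(box, image_width, image_height):
    """Convert a ratio-based bounding box to integer pixel coordinates."""
    left = round(box["Left"] * image_width)
    top = round(box["Top"] * image_height)
    right = round((box["Left"] + box["Width"]) * image_width)
    bottom = round((box["Top"] + box["Height"]) * image_height)
    return left, top, right, bottom


# A face that is 10% of the width of an 800 x 400 pixel image is 80 pixels wide.
example_box = {"Left": 0.25, "Top": 0.5, "Width": 0.1, "Height": 0.25}
print(box_to_pixels(example_box, 800, 400))
```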

With this bounding box, we are now able to use logic to make sure that the face remains within the edges of the new image we create. We can apply some padding around this bounding box to keep the face in the center.

In the following sections, we show how to create the following cropped image output with Werner Vogels in crisp focus.

We launch an Amazon SageMaker notebook, which provides a Python environment where you can run the code to pass an image to Amazon Rekognition and then automatically modify the image with the celebrity in focus.

The code performs the following high-level steps:

Make a request to the recognize_celebrities API with the given image.

Filter the response for the bounding box information of the given celebrity.

Add some padding to the bounding box such that we capture some of the background.

Prerequisites

For this walkthrough, you should have the following prerequisites:

An AWS account

An S3 bucket

Upload the sample image

Upload your sample celebrity image to your S3 bucket.

Run the code

To run the code, we use a SageMaker notebook; however, any IDE also works after you install Python, Pillow, and Boto3. We create a SageMaker notebook as well as the AWS Identity and Access Management (IAM) role with the required permissions. Complete the following steps:

Create the notebook and name it automatic-cropping-celebrity.

The default execution role, which is created along with the SageMaker notebook, has a simple policy that gives the role permissions to interact with Amazon S3.

Update the Resource constraint with the S3 bucket name:

Create another policy to add to the SageMaker notebook IAM role to be able to call the RecognizeCelebrities API:
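The policy documents for the two preceding steps are not reproduced in this text. The following sketch shows what they might contain, built as Python dictionaries and printed as JSON so they can be pasted into the IAM console. The function name build_policies, the bucket name, and the choice of S3 actions are assumptions for illustration. The rekognition:RecognizeCelebrities action corresponds to the API named in the step above.

```python
import json


def build_policies(bucket_name):
    """Return (s3_policy, rekognition_policy) as IAM policy dictionaries."""
    s3_policy = {
        "Version": "2012-10-17",
        "Statement": [
            {   # Listing applies to the bucket itself
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket_name}"],
            },
            {   # Reading and writing apply to the objects in the bucket
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": [f"arn:aws:s3:::{bucket_name}/*"],
            },
        ],
    }
    rekognition_policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "rekognition:RecognizeCelebrities",
                "Resource": "*",
            }
        ],
    }
    return s3_policy, rekognition_policy


# "your-bucket-name" is a placeholder; substitute your own bucket.
for policy in build_policies("your-bucket-name"):
    print(json.dumps(policy, indent=2))
```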

On the SageMaker console, choose Notebook instances in the navigation pane.

Locate the automatic-cropping-celebrity notebook and choose Open Jupyter.

Choose New and conda_python3 as the kernel for your notebook.

For the following steps, copy the code blocks into your Jupyter notebook and run them by choosing Run.

First, we import helper functions and libraries:

Set variables:

Create a service client:
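The original code for the import, variable, and client steps is not included in this text. A minimal sketch of the setup might look like the following. Every value here is a placeholder assumption except the celebrity name, which comes from the example in this post. The helper create_clients is hypothetical. It uses the Boto3 Session.client call to create Amazon Rekognition and Amazon S3 clients.

```python
# Placeholder settings; replace them with your own values.
BUCKET_NAME = "your-bucket-name"         # S3 bucket holding the sample image
IMAGE_KEY = "sample-celebrity.jpg"       # object key of the uploaded image
CELEBRITY_NAME = "Werner Vogels"         # celebrity to keep in focus
ASPECT_RATIO = 1.0                       # output width divided by output height
OUTPUT_FILE = "cropped-celebrity.jpg"    # file name for the cropped image


def create_clients(region_name=None):
    """Create the Amazon Rekognition and Amazon S3 service clients."""
    # Imported inside the function so the settings above can be loaded
    # even where the AWS SDK for Python is not installed.
    import boto3

    session = boto3.Session(region_name=region_name)
    return session.client("rekognition"), session.client("s3")
```

In the notebook you would call `rekognition_client, s3_client = create_clients()` once and reuse both clients in the later steps.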

Function to recognize the celebrities:
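A sketch of this function, under the assumption that the image has already been uploaded to Amazon S3, could pass the object's location to the Boto3 recognize_celebrities call. The wrapper name and its parameters are hypothetical. The client is passed in as an argument so the function can be exercised with a stub.

```python
def recognize_celebrities(rekognition_client, bucket, key):
    """Ask Amazon Rekognition to recognize celebrities in an S3-hosted image.

    `rekognition_client` is expected to be a Boto3 Rekognition client,
    for example boto3.client("rekognition").
    """
    return rekognition_client.recognize_celebrities(
        Image={"S3Object": {"Bucket": bucket, "Name": key}}
    )
```

Note that the API takes only the image. The celebrity name is applied afterward, when filtering the response.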

Function to get the bounding box of the given celebrity:
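One way to write this lookup, sketched here with a hypothetical function name, is to scan the CelebrityFaces list in the response for a matching Name and return the BoundingBox nested under Face. The case-insensitive comparison is an assumption made for convenience.

```python
def get_bounding_box(response, celebrity_name):
    """Return the bounding box of the named celebrity, or None if not found."""
    for celebrity in response.get("CelebrityFaces", []):
        if celebrity["Name"].lower() == celebrity_name.lower():
            return celebrity["Face"]["BoundingBox"]
    return None
```

Returning None lets the caller decide what to do when the celebrity is not in the image, for example skipping the crop and logging the file name.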

Function to add some padding to the bounding box, so we capture some background around the face:
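The padding logic can be approached in several ways, and the following is one possible sketch, not the post's original implementation. It assumes the padding is expressed as a multiple of the face size, grows the padded box to the requested aspect ratio (width divided by height), shrinks it if it would exceed the image, and then shifts it so it stays within the image edges. The function name and the default padding value are hypothetical.

```python
def padded_crop_box(box, image_width, image_height, aspect_ratio, padding=2.0):
    """Return a (left, upper, right, lower) pixel box centered on the face."""
    # Face size and center in pixels
    face_w = box["Width"] * image_width
    face_h = box["Height"] * image_height
    center_x = box["Left"] * image_width + face_w / 2
    center_y = box["Top"] * image_height + face_h / 2

    # Start from the padded face, then grow one side to match the aspect ratio
    crop_w = face_w * padding
    crop_h = face_h * padding
    if crop_w / crop_h < aspect_ratio:
        crop_w = crop_h * aspect_ratio
    else:
        crop_h = crop_w / aspect_ratio

    # Shrink uniformly if the crop would be larger than the image
    scale = min(1.0, image_width / crop_w, image_height / crop_h)
    crop_w *= scale
    crop_h *= scale

    # Center on the face, then shift the box back inside the image edges
    left = min(max(center_x - crop_w / 2, 0), image_width - crop_w)
    top = min(max(center_y - crop_h / 2, 0), image_height - crop_h)
    return round(left), round(top), round(left + crop_w), round(top + crop_h)
```

When the face sits near an edge, the shift keeps the whole crop inside the image, so the face ends up off-center rather than the output containing empty space.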

Function to save the image to the notebook storage and to Amazon S3:
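A sketch of the save step follows, assuming the image is a Pillow Image object (which provides save) and the client is a Boto3 S3 client (which provides upload_file). The function name, its parameters, and the returned URI string are hypothetical conveniences.

```python
def save_image(image, local_path, s3_client, bucket, key):
    """Save the image to local notebook storage, then upload it to Amazon S3."""
    image.save(local_path)                          # write to notebook storage
    s3_client.upload_file(local_path, bucket, key)  # copy the file to the bucket
    return f"s3://{bucket}/{key}"
```

With a Pillow image and a pixel crop box in hand, a call such as `save_image(image.crop(crop_box), "cropped.jpg", s3_client, bucket, "output/cropped.jpg")` would crop and store the result in one line. The file and key names in that call are placeholders.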

Use the Python main() function to combine the preceding functions to complete the workflow of saving a new cropped image of our celebrity:

When you run this code block, you can see that we found Werner Vogels and created a new image with his face in the center.

The image will be saved to the notebook and also uploaded to the S3 bucket.

You could include this solution in a larger workflow; for example, a publishing company might want to publish this capability as an endpoint to reformat and resize images on the fly when publishing articles about celebrities to multiple platforms.

Cleaning up

To avoid incurring future charges, delete the resources:

On the SageMaker console, select your notebook and on the Actions menu, choose Stop.

After the notebook is stopped, on the Actions menu, choose Delete.

On the IAM console, delete the SageMaker execution role you created.

On the Amazon S3 console, delete the input image and any output files from your S3 bucket.

Conclusion

In this post, we showed how to use Amazon Rekognition to automate an otherwise manual task of modifying images to support media workflows. This is particularly important within the publishing industry, where speed matters in getting fresh content out to multiple platforms.

For more information about working with media assets, refer to Media intelligence just got smarter with Media2Cloud 3.0.

About the Author

Mark Watkins is a Solutions Architect within the Media and Entertainment team. He helps customers create AI/ML solutions that solve their business challenges using AWS. He has been working on several AI/ML projects related to computer vision, natural language processing, personalization, ML at the edge, and more. Away from professional life, he loves spending time with his family and watching his two little ones grow up.
